instantid

.gitignore

0 → 100644

Contributors.md

0 → 100644

LICENSE

0 → 100644

README.md

0 → 100644

assets/0.png

0 → 100644

8.31 MB

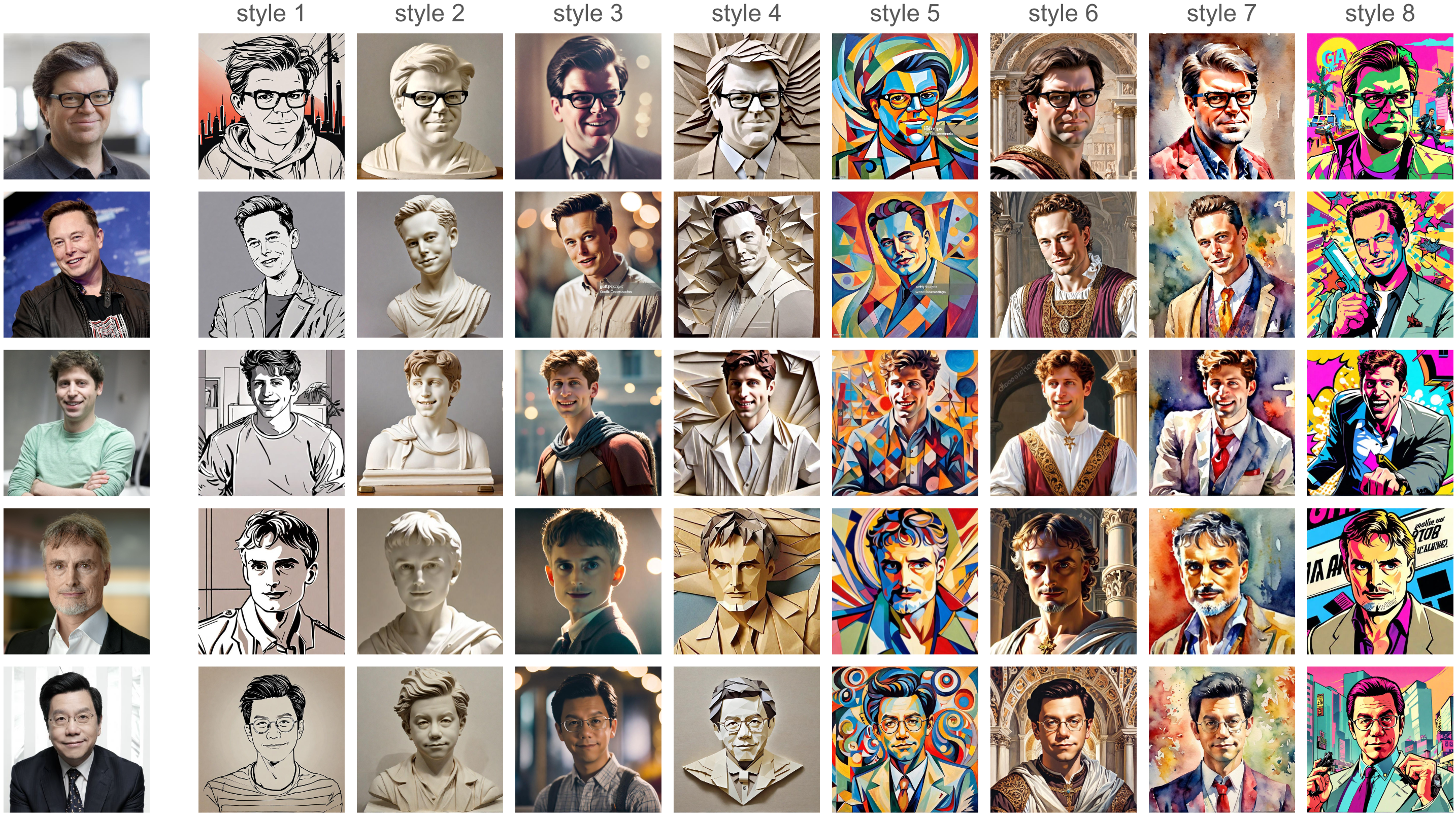

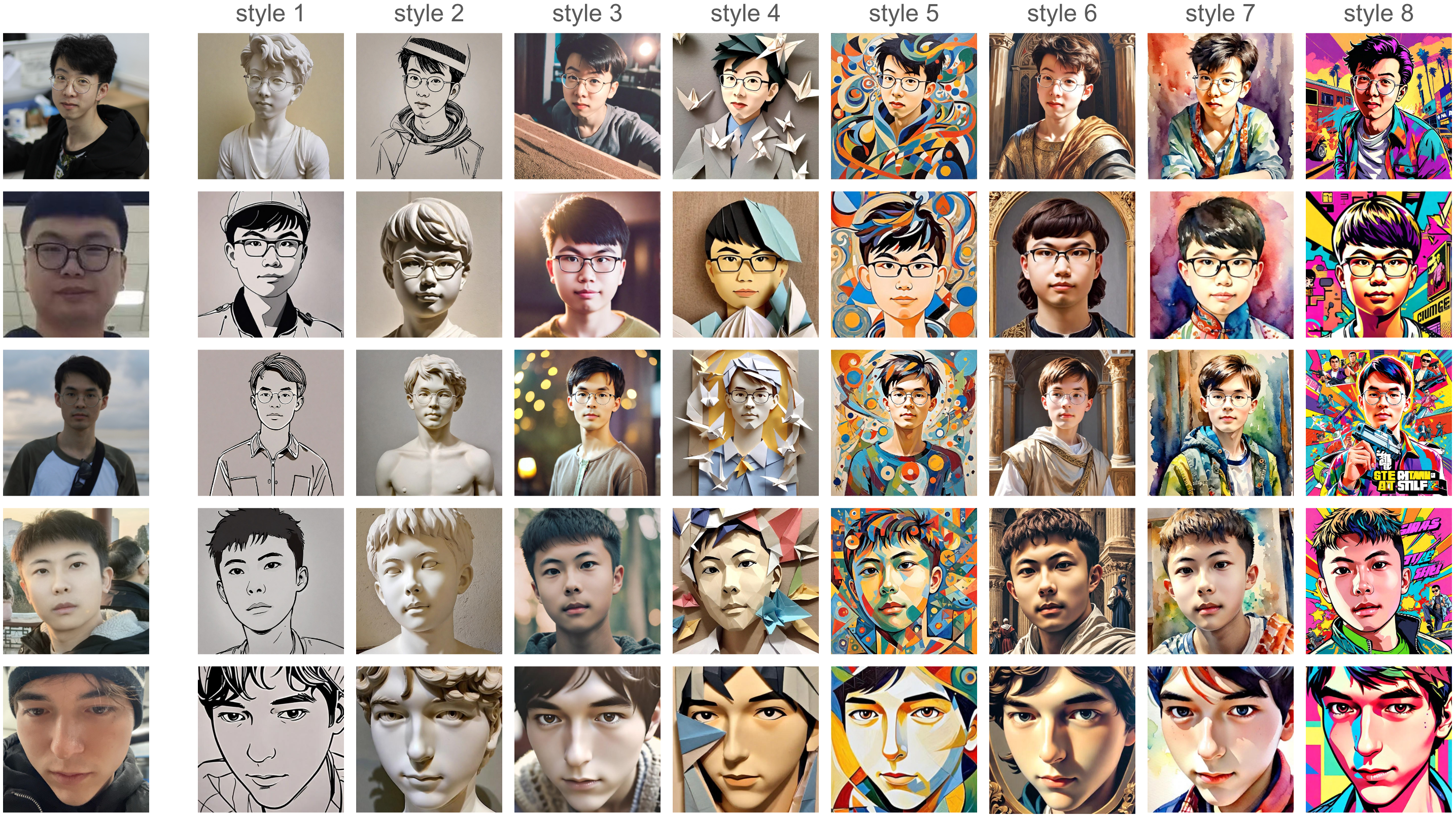

assets/1.png

0 → 100644

8.07 MB

assets/applications.png

0 → 100644

Image diff not displayed (file too large).

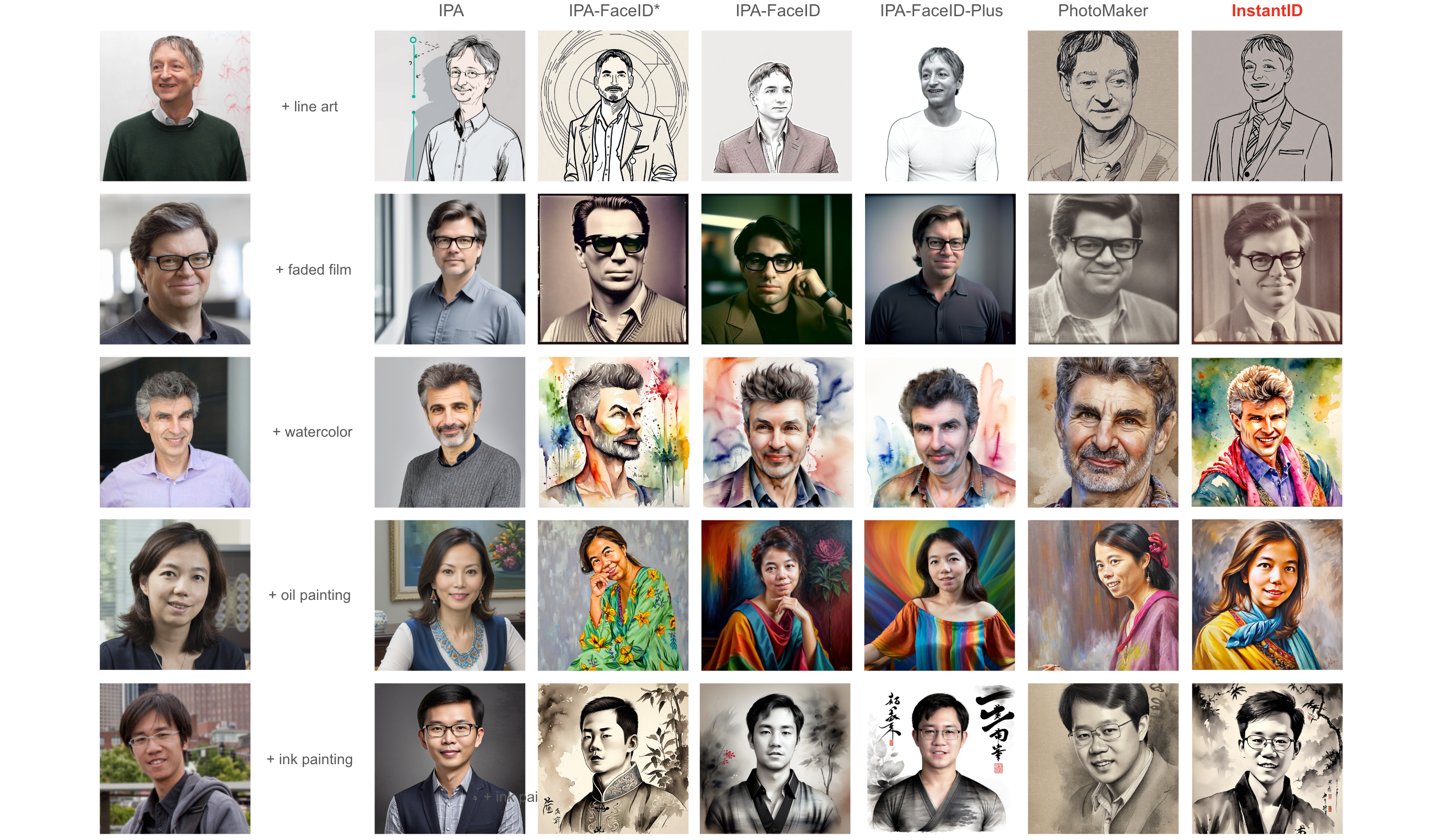

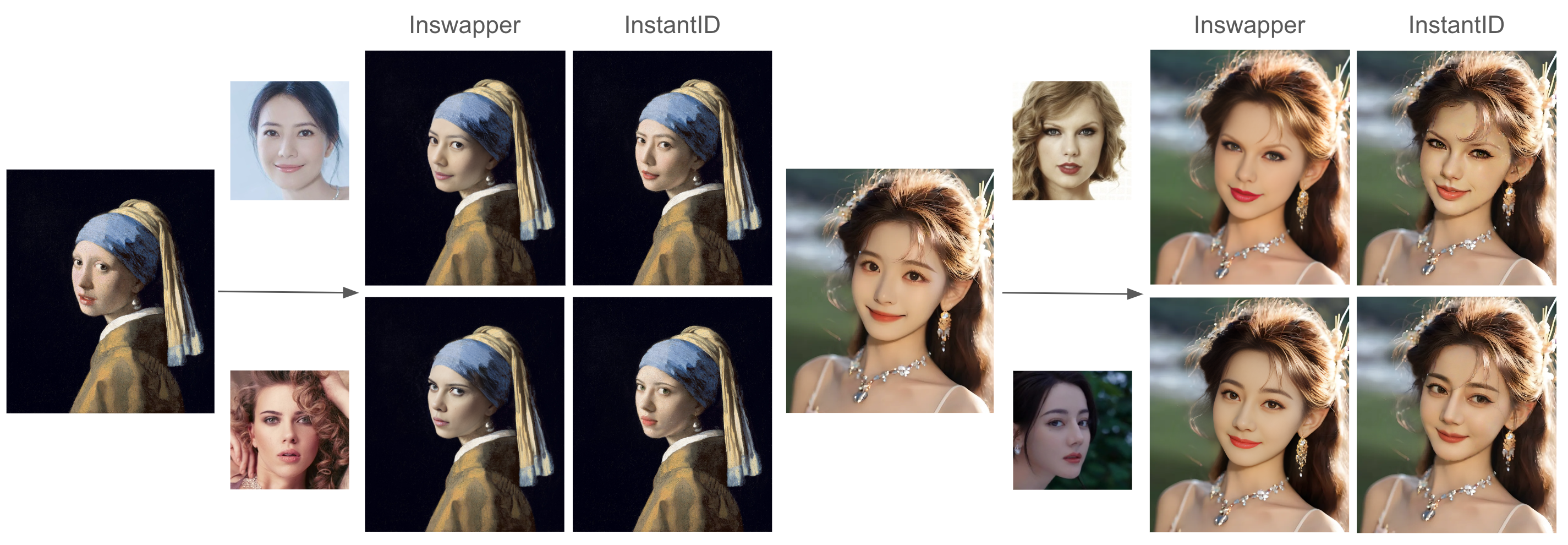

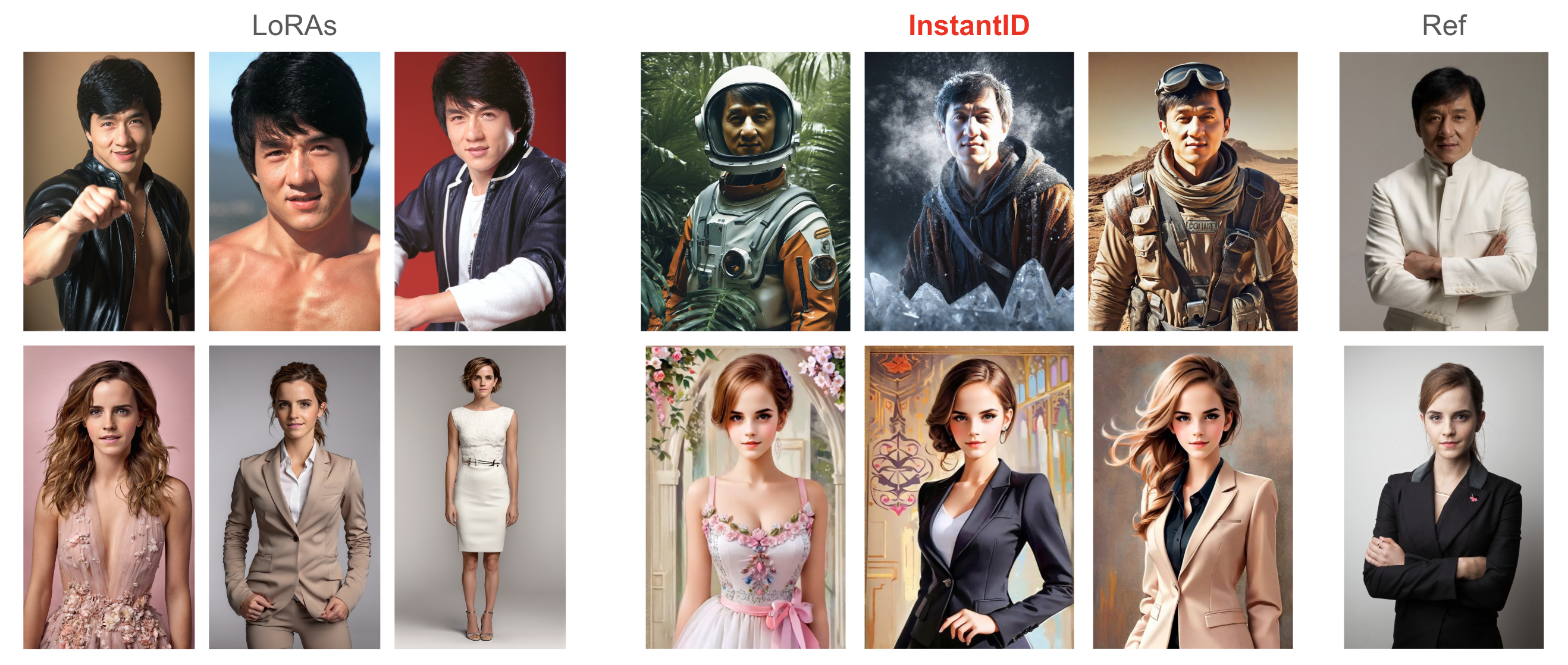

assets/compare-a.png

0 → 100644

5.84 MB

assets/compare-b.png

0 → 100644

3.46 MB

assets/compare-c.png

0 → 100644

4.32 MB

cog.yaml

0 → 100644

cog/README.md

0 → 100644

cog/predict.py

0 → 100644

docs/.DS_Store

0 → 100644

File added

docs/technical-report.pdf

0 → 100644

File added

examples/kaifu_resize.png

0 → 100644

1.01 MB

examples/musk_resize.jpeg

0 → 100644

352 KB

examples/poses/pose.jpg

0 → 100644

102 KB

examples/poses/pose2.jpg

0 → 100644

94.9 KB

examples/poses/pose3.jpg

0 → 100644

103 KB