Merge pull request #3083 from WenmuZhou/table1

[DO NOT MERGE]Table

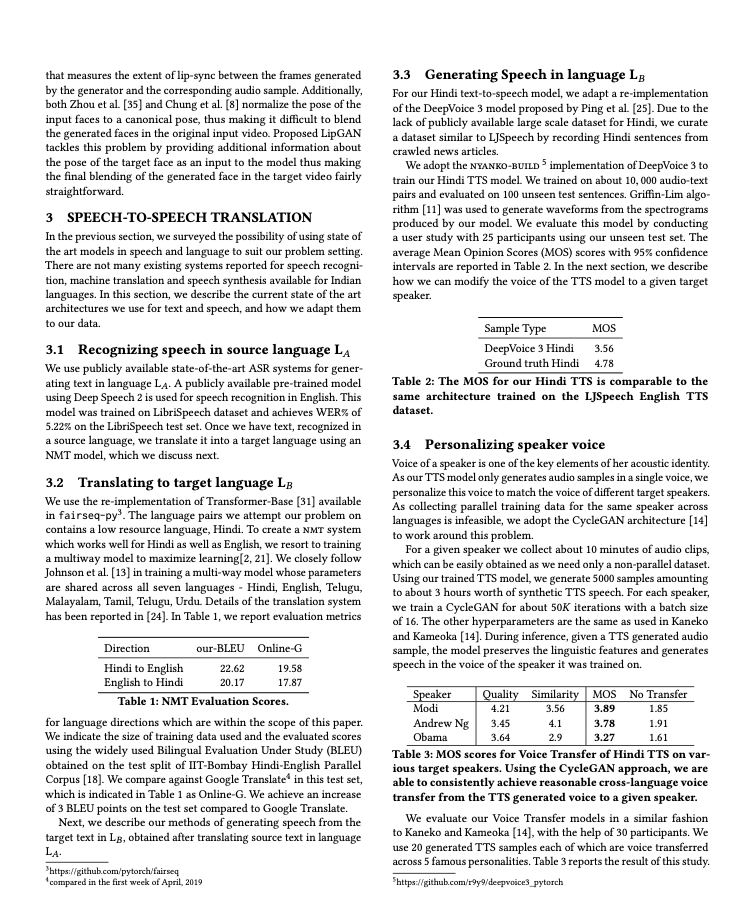

doc/table/1.png

0 → 100644

263 KB

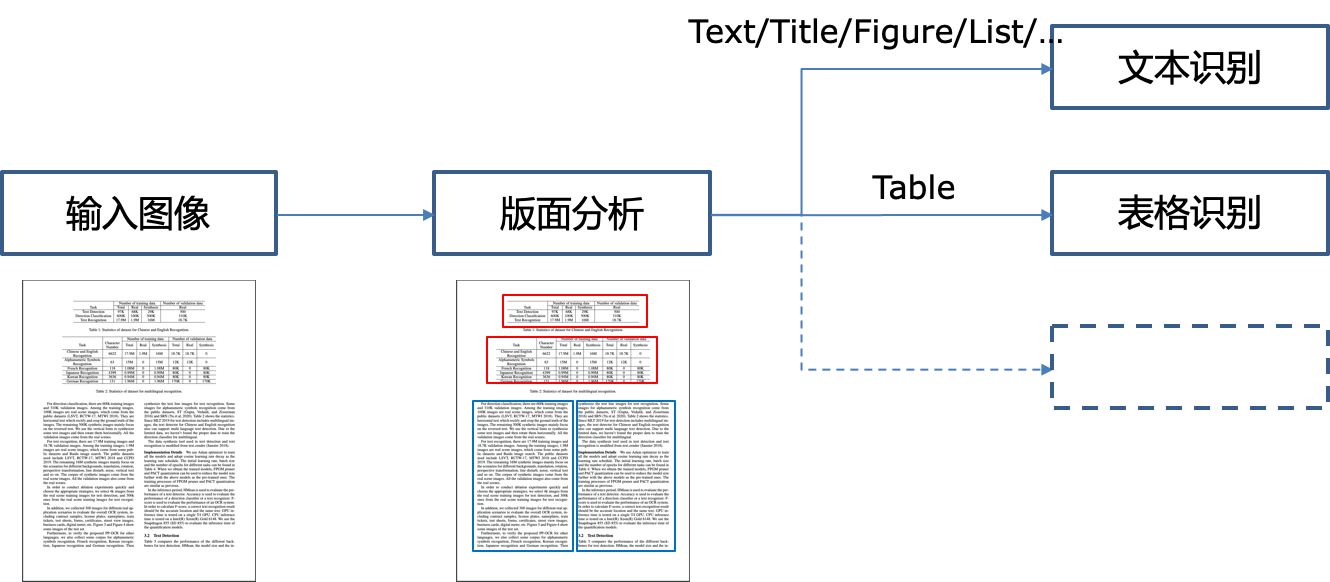

doc/table/pipeline.png

0 → 100644

116 KB

26.1 KB

ppocr/utils/network.py

0 → 100644