Feat/impl cli (#264)

* feat: refactor cli command

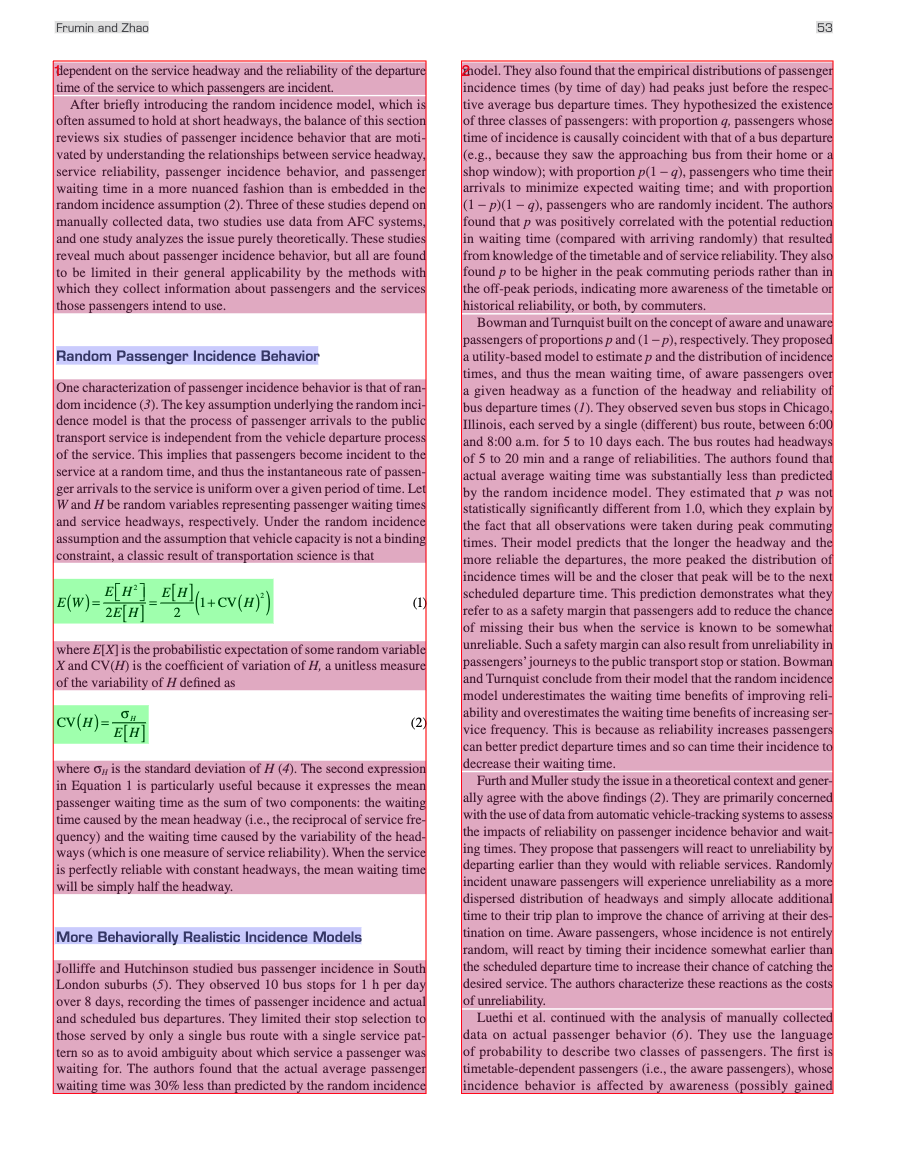

* feat: add docs to describe the output files of cli

* feat: resolve review comments

* feat: update docs about middle.json

---------

Co-authored-by: shenguanlin <shenguanlin@pjlab.org.cn>

shenguanlin <shenguanlin@pjlab.org.cn>

559 KB

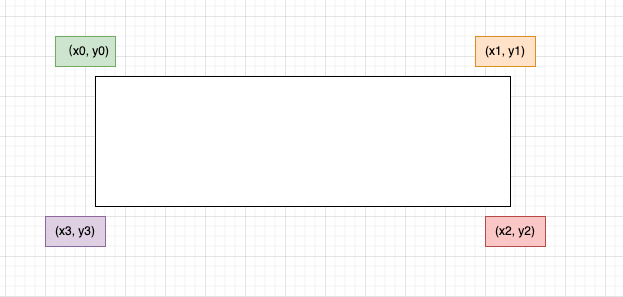

docs/images/poly.png

0 → 100644

12.9 KB

550 KB

docs/output_file_zh_cn.md

0 → 100644

magic_pdf/tools/cli.py

0 → 100644

magic_pdf/tools/cli_dev.py

0 → 100644

magic_pdf/tools/common.py

0 → 100644

tests/test_tools/__init__.py

0 → 100644

File added
