Docs - Update README and version for v0.2.0 release (#111)

Update README and version for v0.2 release.

108 KB

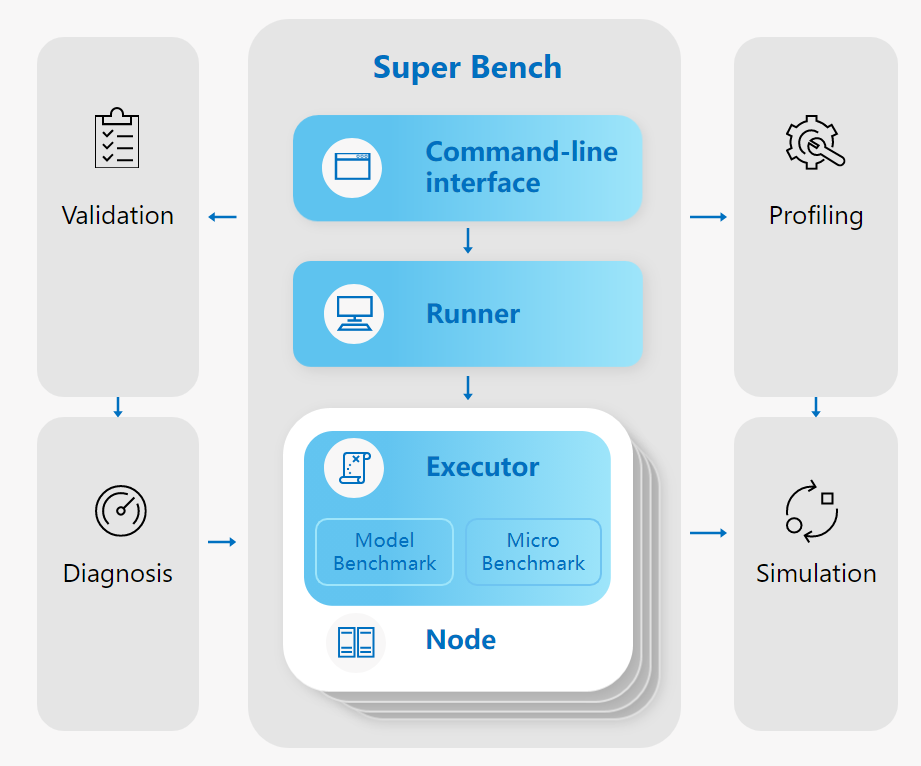

docs/assets/architecture.svg

0 → 100644
