Docs - Release Note and Introduction (#107)

* Add introduction and release documents.
* Fix some typos in documents.

Showing

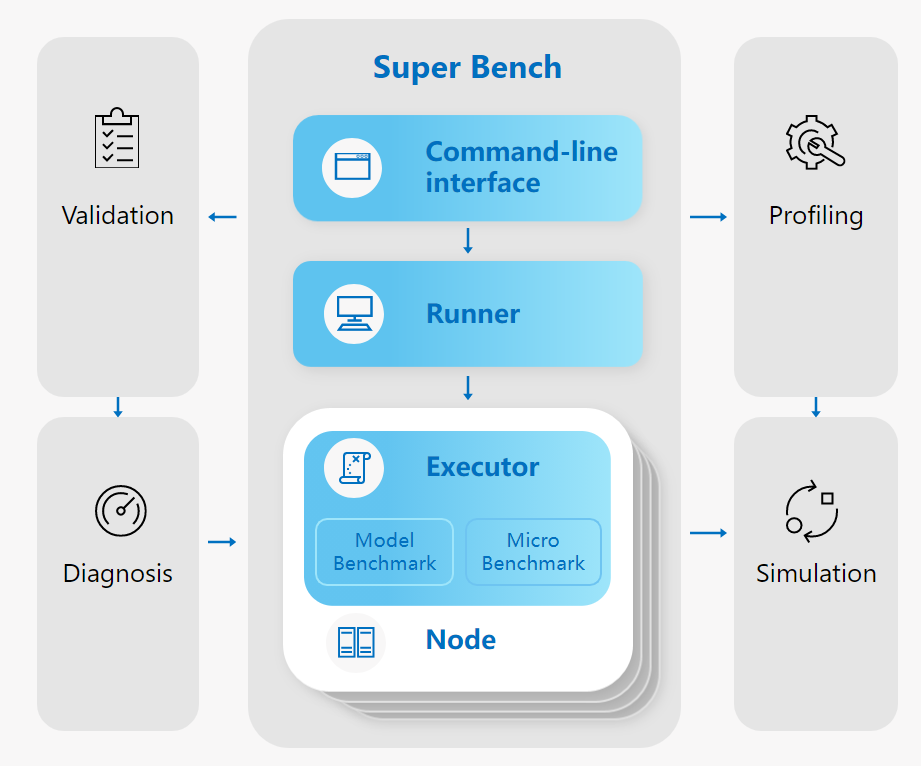

docs/assets/architecture.png

0 → 100644

108 KB

docs/assets/bar.png

deleted

100644 → 0

517 Bytes

83.9 KB

51.5 KB

39.6 KB