"superbench/common/vscode:/vscode.git/clone" did not exist on "91b44bc5a170ae6715dc02ed84a8b9bb8022f562"

Docs - Add design document (#125)

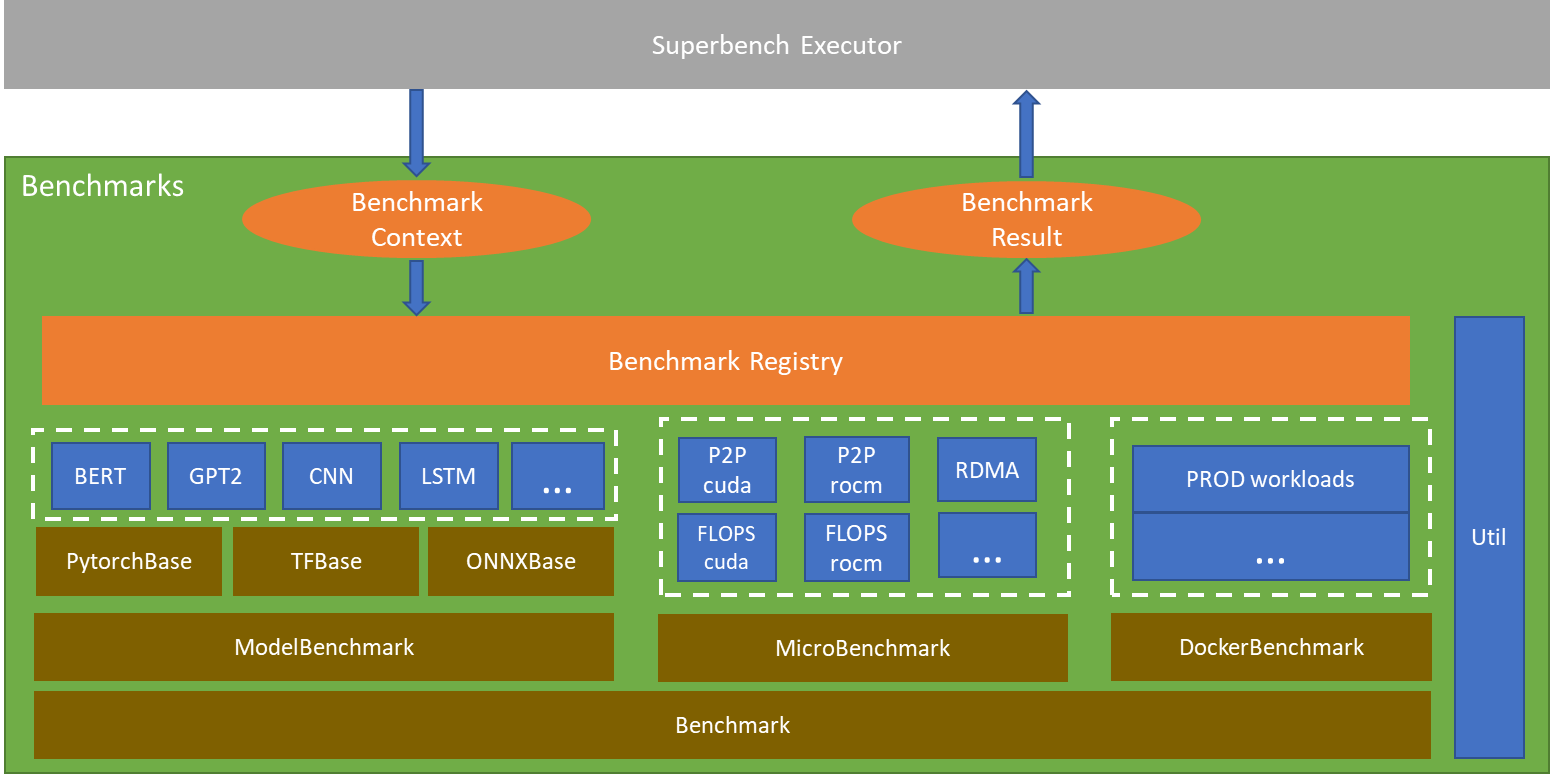

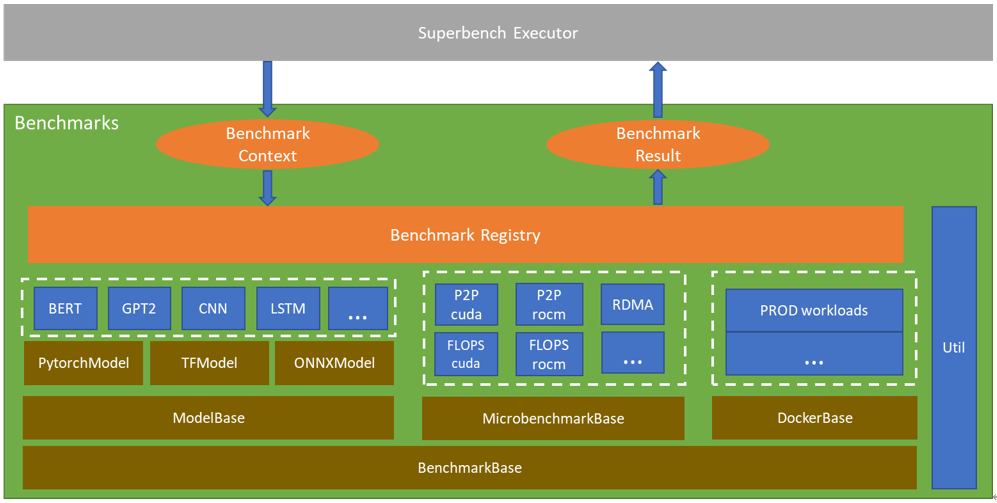

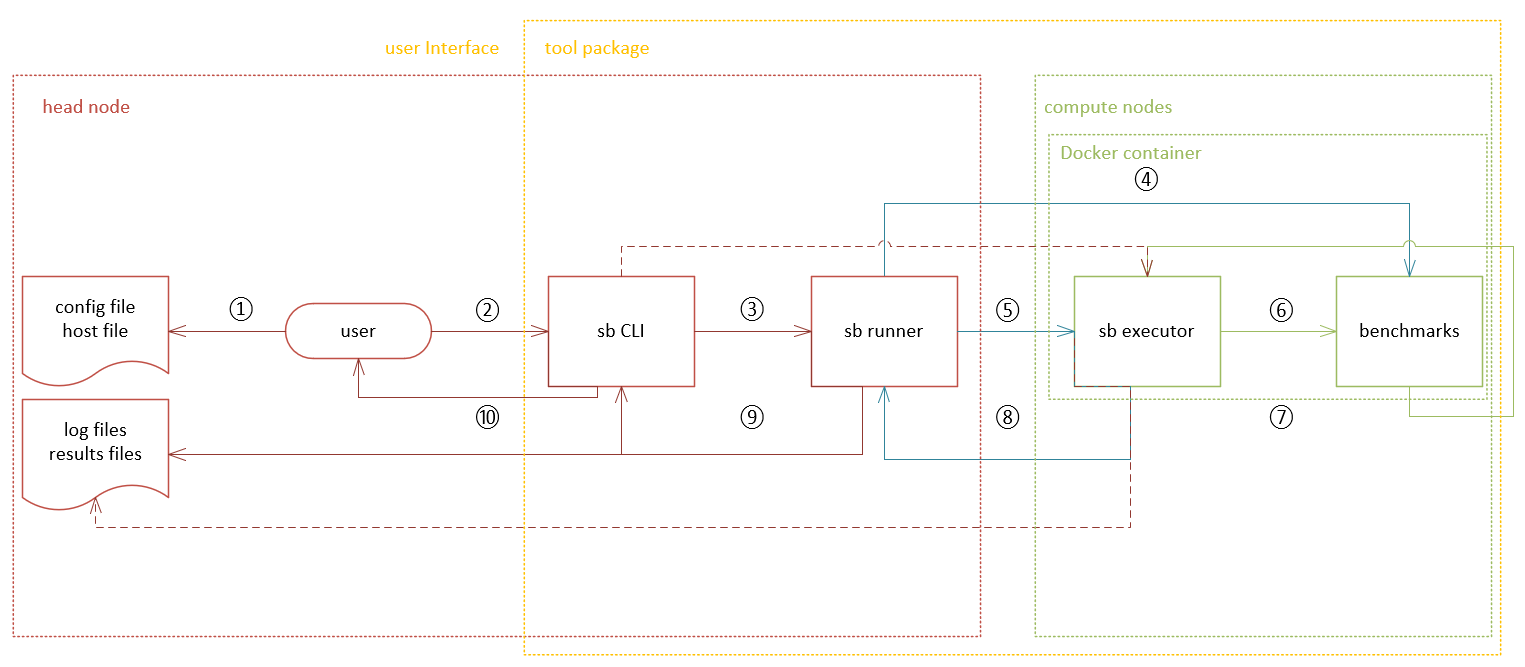

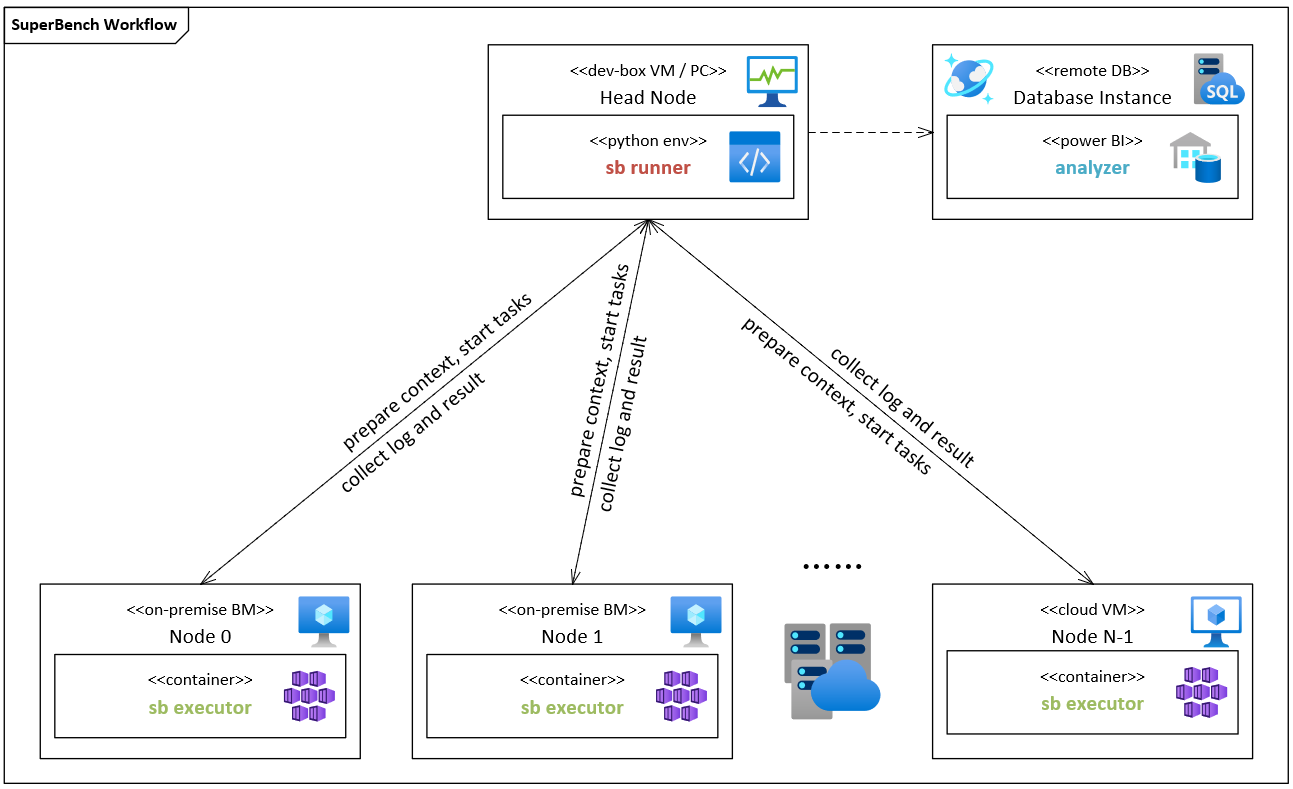

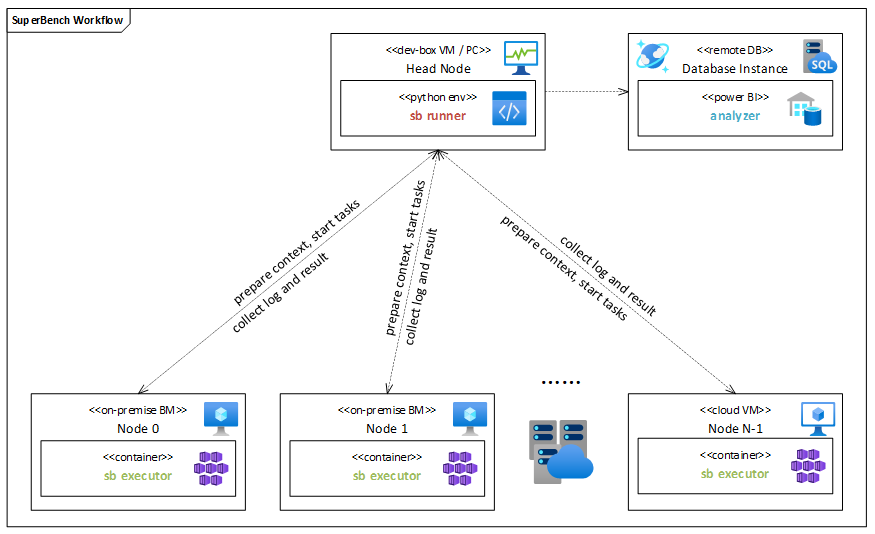

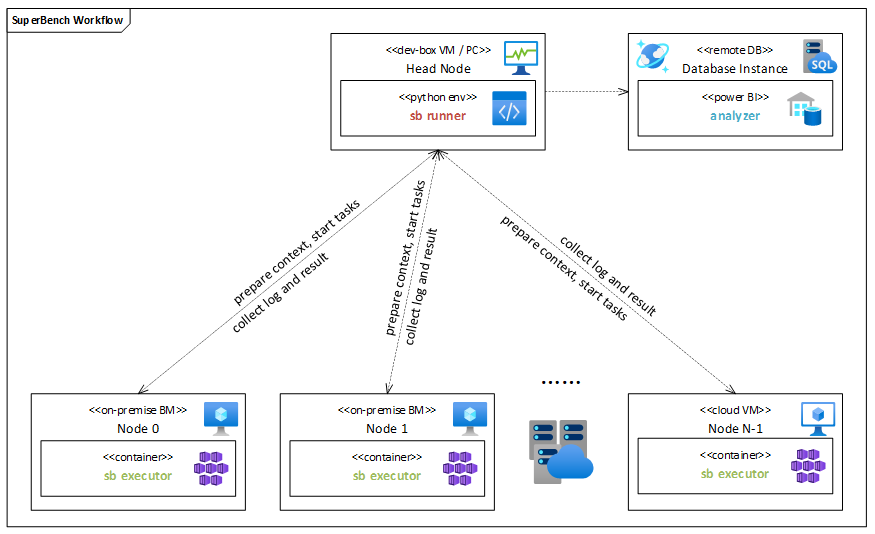

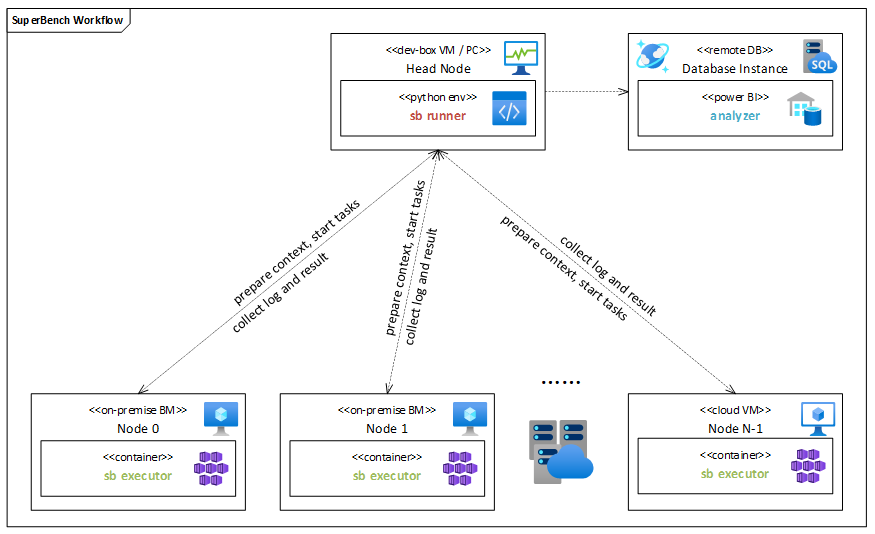

**Description**

Add Executor and Benchmarks design doc.

**Major Revision**

- Add Executor design doc
- Add Benchmarks design doc

docs/design-docs/overview.md

0 → 100644