init

Showing

.gitignore

0 → 100644

LICENSE

0 → 100644

README.md

0 → 100644

README_origin.md

0 → 100644

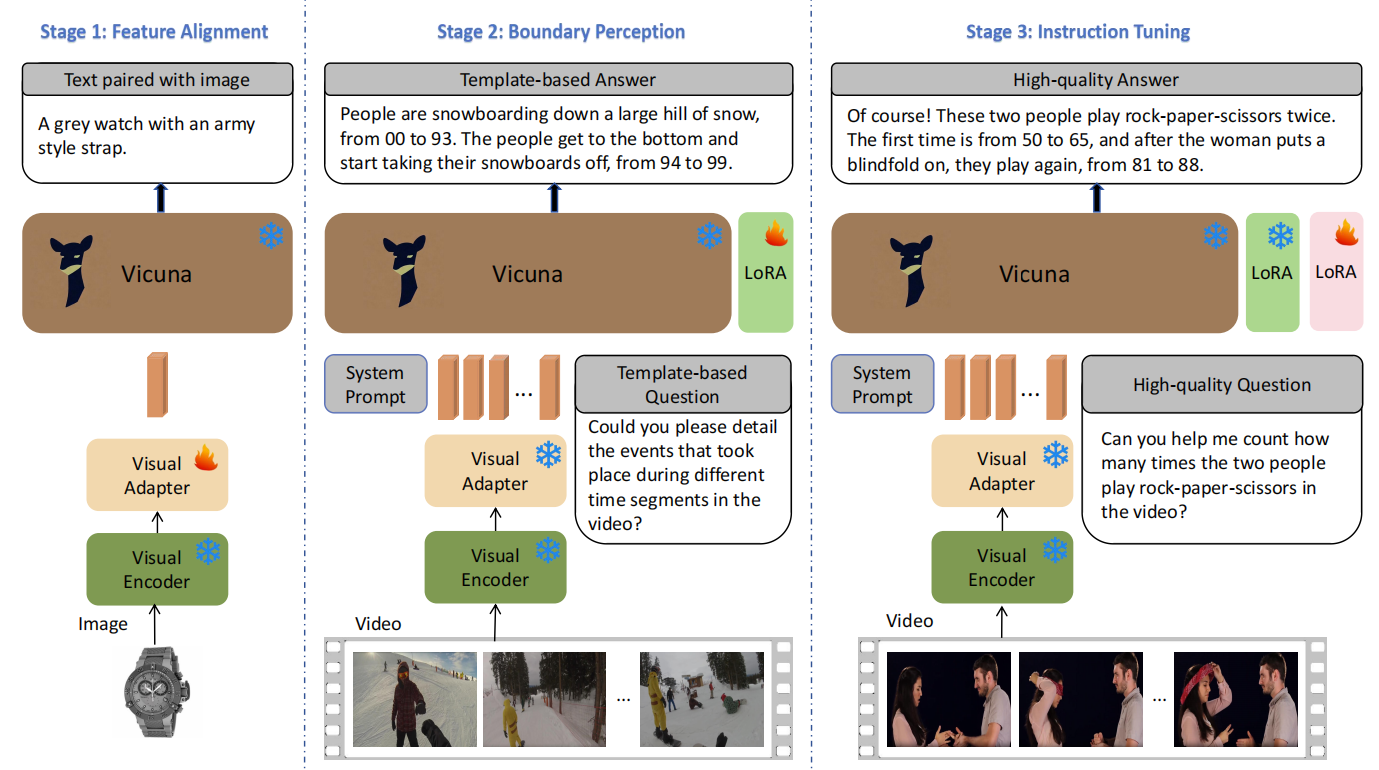

doc/VTimeLLM.PNG

0 → 100644

305 KB

docs/data.md

0 → 100644

docs/eval.md

0 → 100644

docs/inference.ipynb

0 → 100644

docs/inference_for_glm.ipynb

0 → 100644

docs/offline_demo.md

0 → 100644

docs/train.md

0 → 100644

images/demo.mp4

0 → 100644

File added

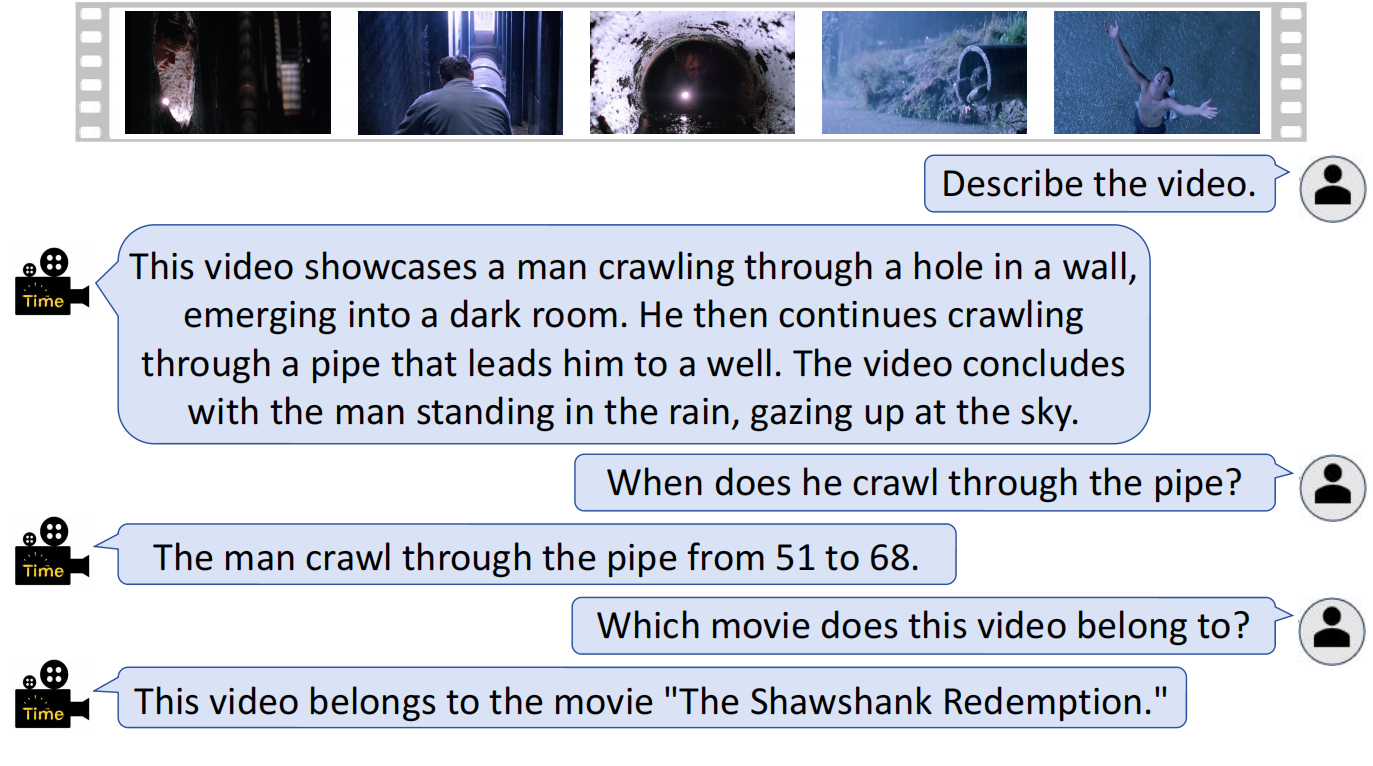

images/ex.png

0 → 100644

406 KB

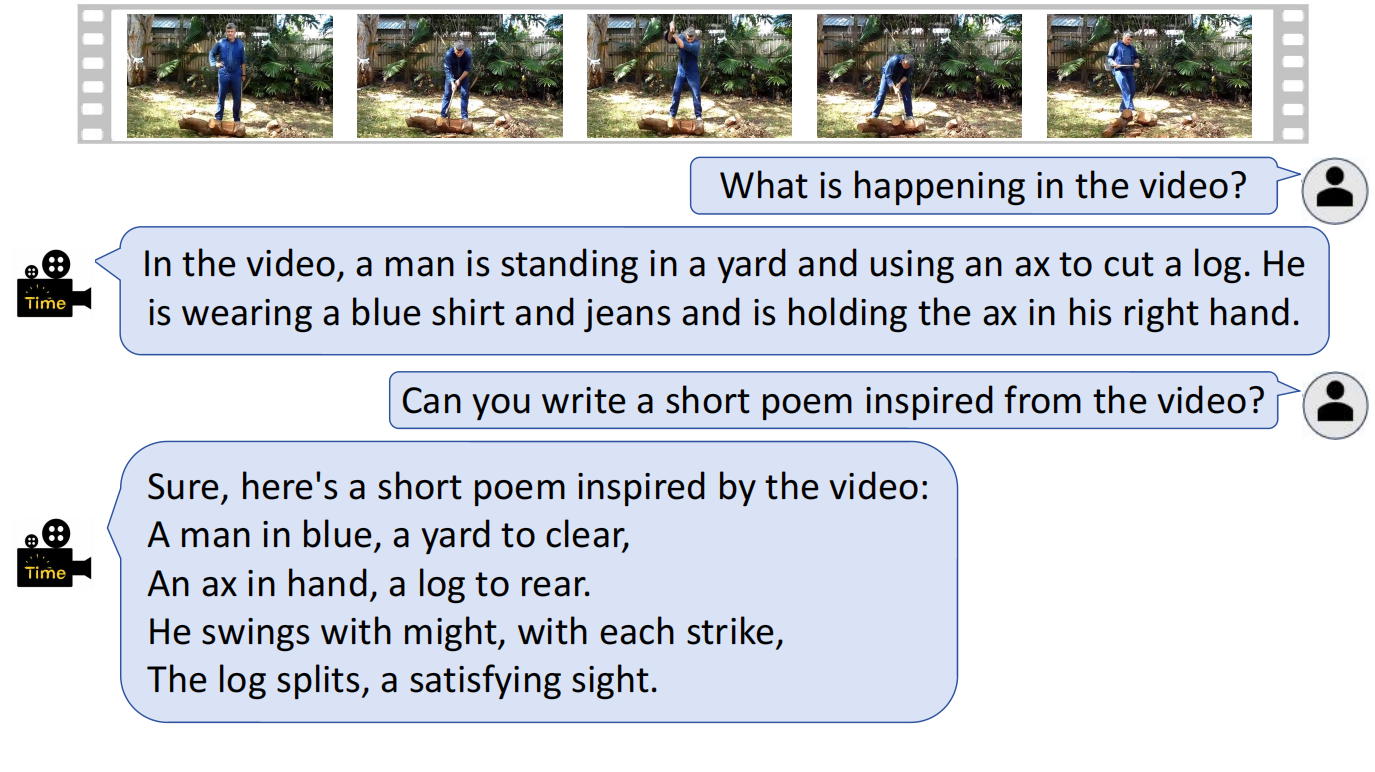

images/ex1.png

0 → 100644

487 KB

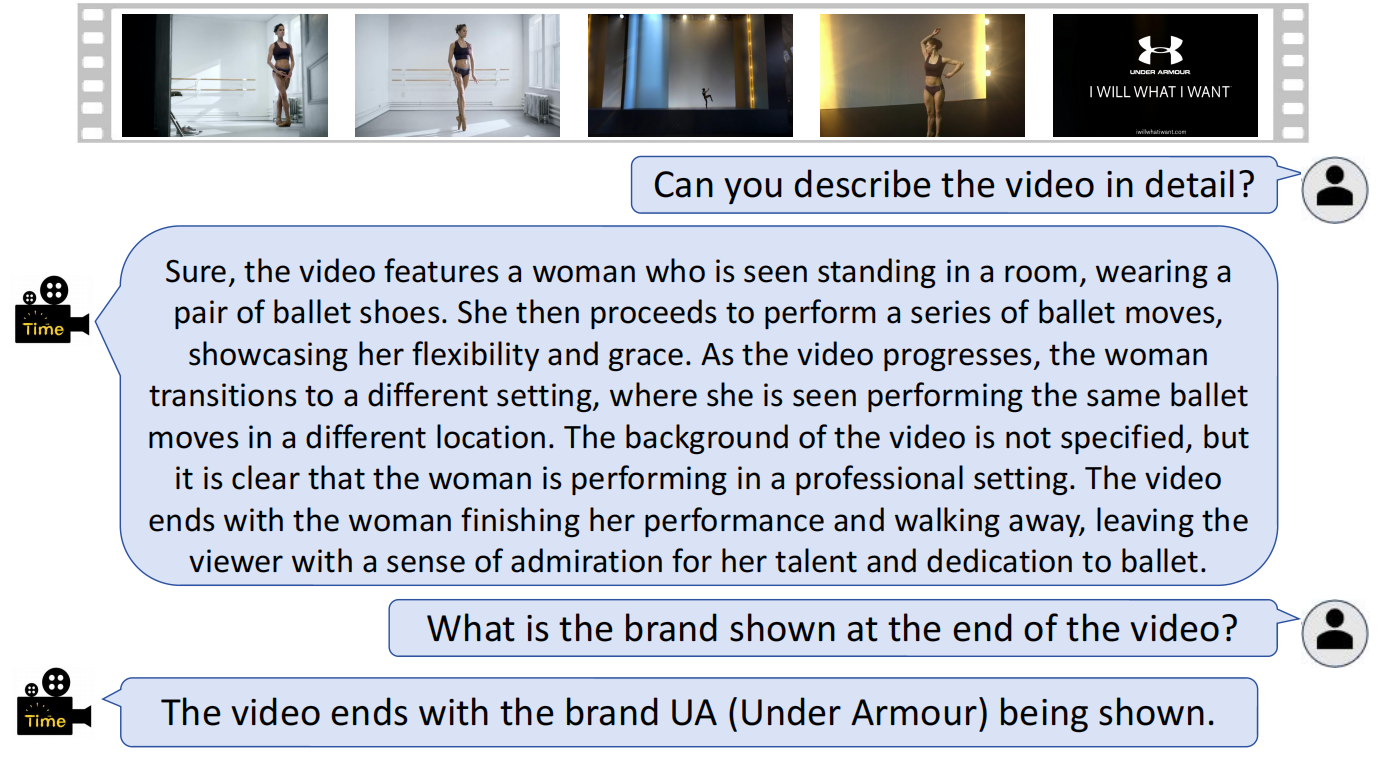

images/ex2.png

0 → 100644

306 KB

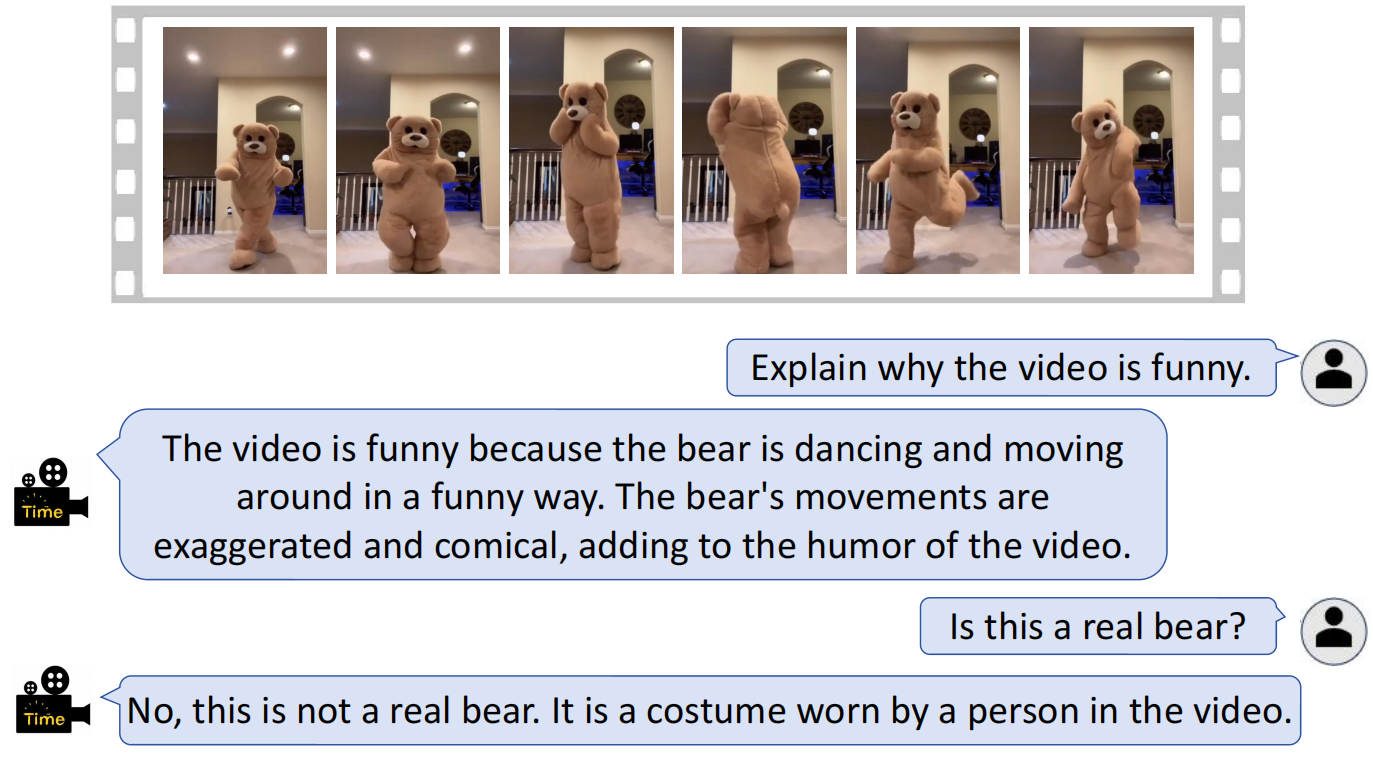

images/ex3.png

0 → 100644

426 KB

images/framework.png

0 → 100644

325 KB

requirements.txt

0 → 100644

| # torch | |||

| # flash-attn | |||

| # torchvision | |||

| # deepspeed | |||

| decord | |||

| easydict | |||

| einops | |||

| gradio | |||

| numpy | |||

| pandas>=2.0.3 | |||

| peft>=0.4.0 | |||

| Pillow | |||

| tqdm | |||

| transformers==4.31.0 | |||

| git+https://github.com/openai/CLIP.git | |||

| sentencepiece | |||

| protobuf | |||

| wandb | |||

| ninja | |||

| huggingface_hub |

scripts/stage1.sh

0 → 100644

scripts/stage1_glm.sh

0 → 100755