update

LICENSE

0 → 100644

README.md

0 → 100644

README_origin.md

0 → 100644

doc/example.png

0 → 100644

1.27 MB

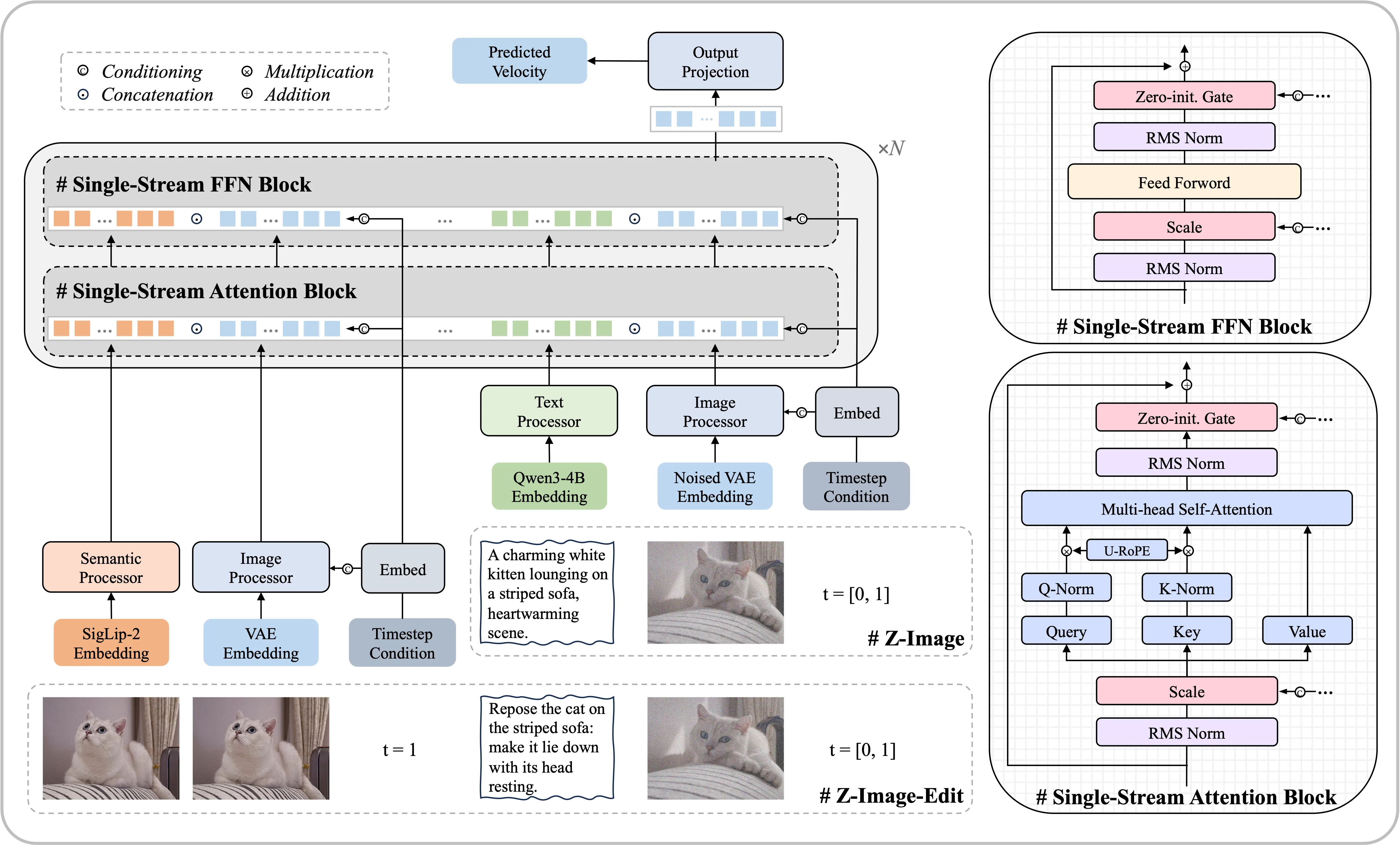

doc/model.png

0 → 100644

3.76 MB

icon.png

0 → 100644

64.5 KB

model.properties

0 → 100644

run.py

0 → 100644

run.sh

0 → 100644