taming-transformer

.gitignore

0 → 100644

Dockerfile

0 → 100644

License.txt

0 → 100644

README.md

0 → 100644

README_official.md

0 → 100644

assets/birddrawnbyachild.png

0 → 100644

1.53 MB

assets/drin.jpg

0 → 100644

279 KB

assets/faceshq.jpg

0 → 100644

300 KB

1.29 MB

1.36 MB

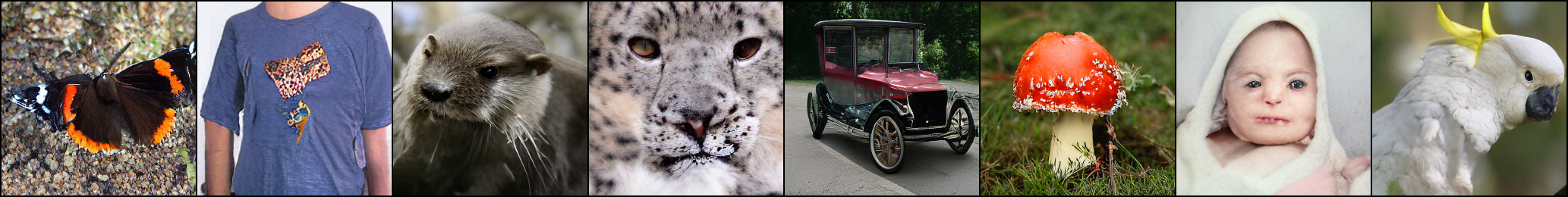

assets/imagenet.png

0 → 100644

1000 KB

552 KB

assets/mountain.jpeg

0 → 100644

426 KB

assets/stormy.jpeg

0 → 100644

701 KB

assets/sunset_and_ocean.jpg

0 → 100644

314 KB

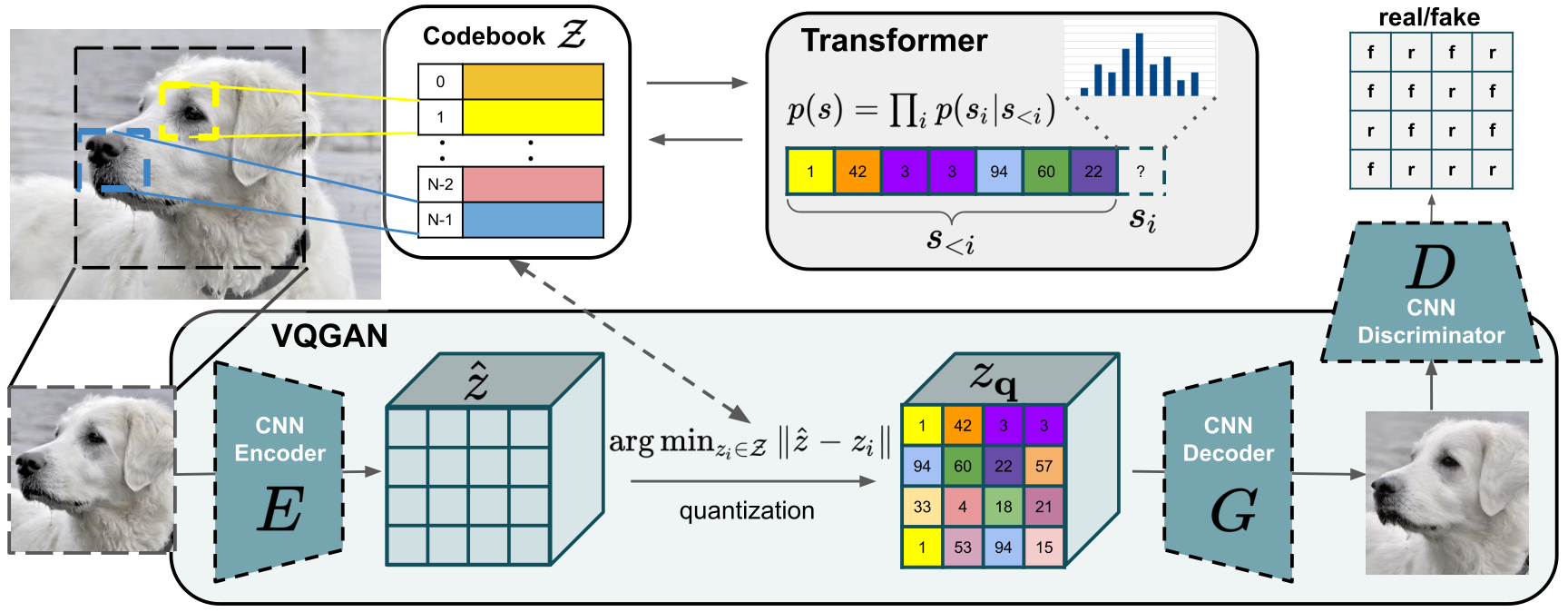

assets/teaser.png

0 → 100644

350 KB

configs/coco_cond_stage.yaml

0 → 100644