test

.gitignore

0 → 100644

LICENSE

0 → 100644

LICENSE-FID

0 → 100644

LICENSE-LPIPS

0 → 100644

LICENSE-NVIDIA

0 → 100644

README.md

0 → 100644

apply_factor.py

0 → 100644

assets/000000.png

0 → 100644

7.58 MB

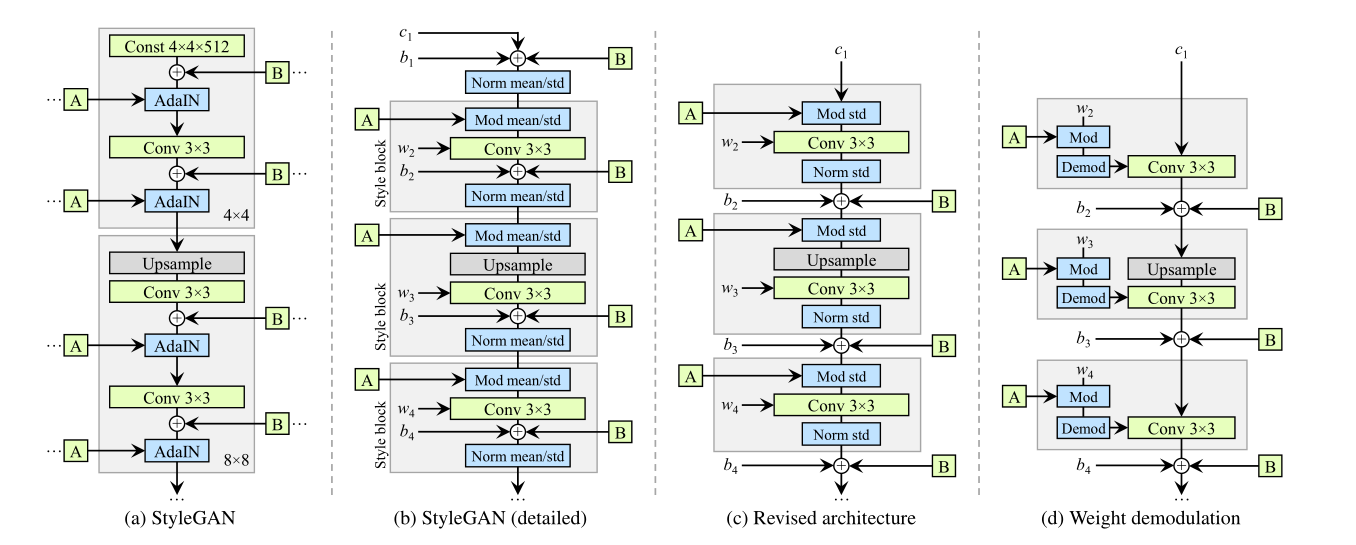

assets/AdaIN.png

0 → 100644

140 KB

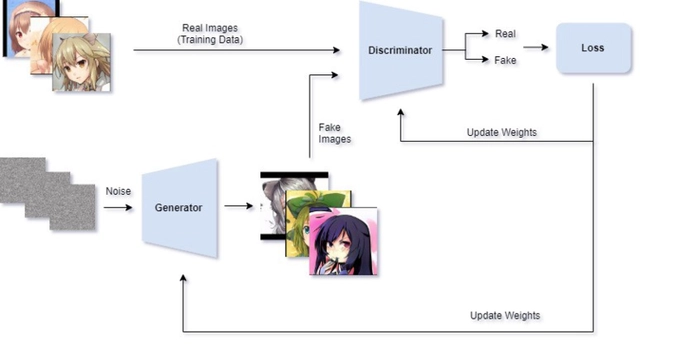

assets/GAN.png

0 → 100644

105 KB

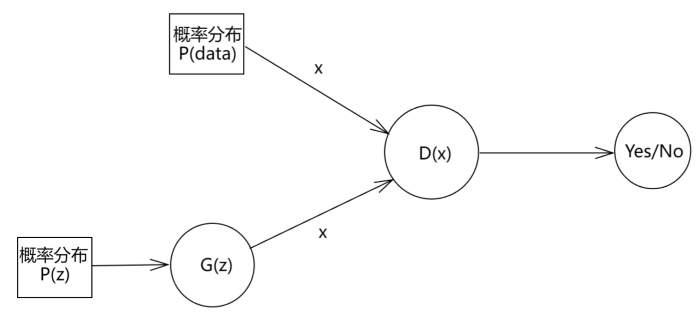

assets/GAN2.png

0 → 100644

21.7 KB

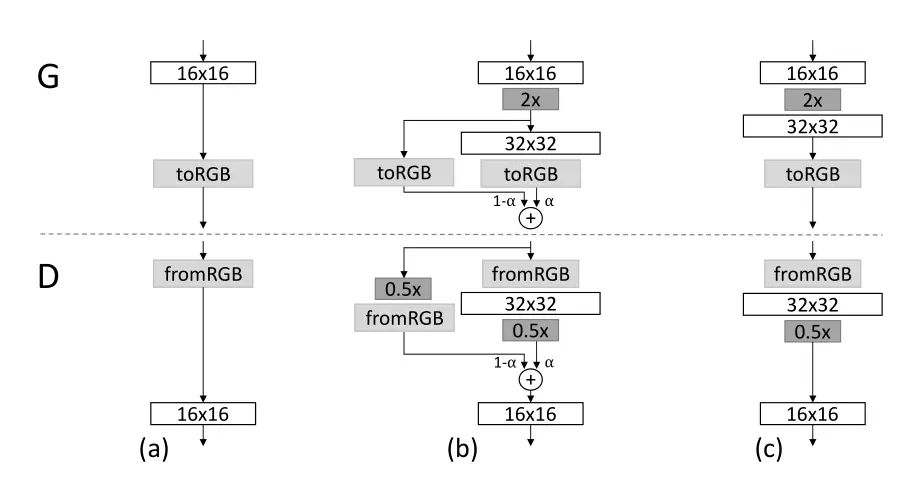

assets/PGGAN.png

0 → 100644

80.3 KB

assets/styleGAN.png

0 → 100644

68.7 KB

calc_inception.py

0 → 100644

checkpoint/.gitignore

0 → 100644

closed_form_factorization.py

0 → 100644

convert_weight.py

0 → 100644

dataset.py

0 → 100644

distributed.py

0 → 100644

doc/sample-metfaces.png

0 → 100644

5.19 MB