Update README.md

Signed-off-by: lijian <lijian6@sugon.com>

lijian <lijian6@sugon.com>


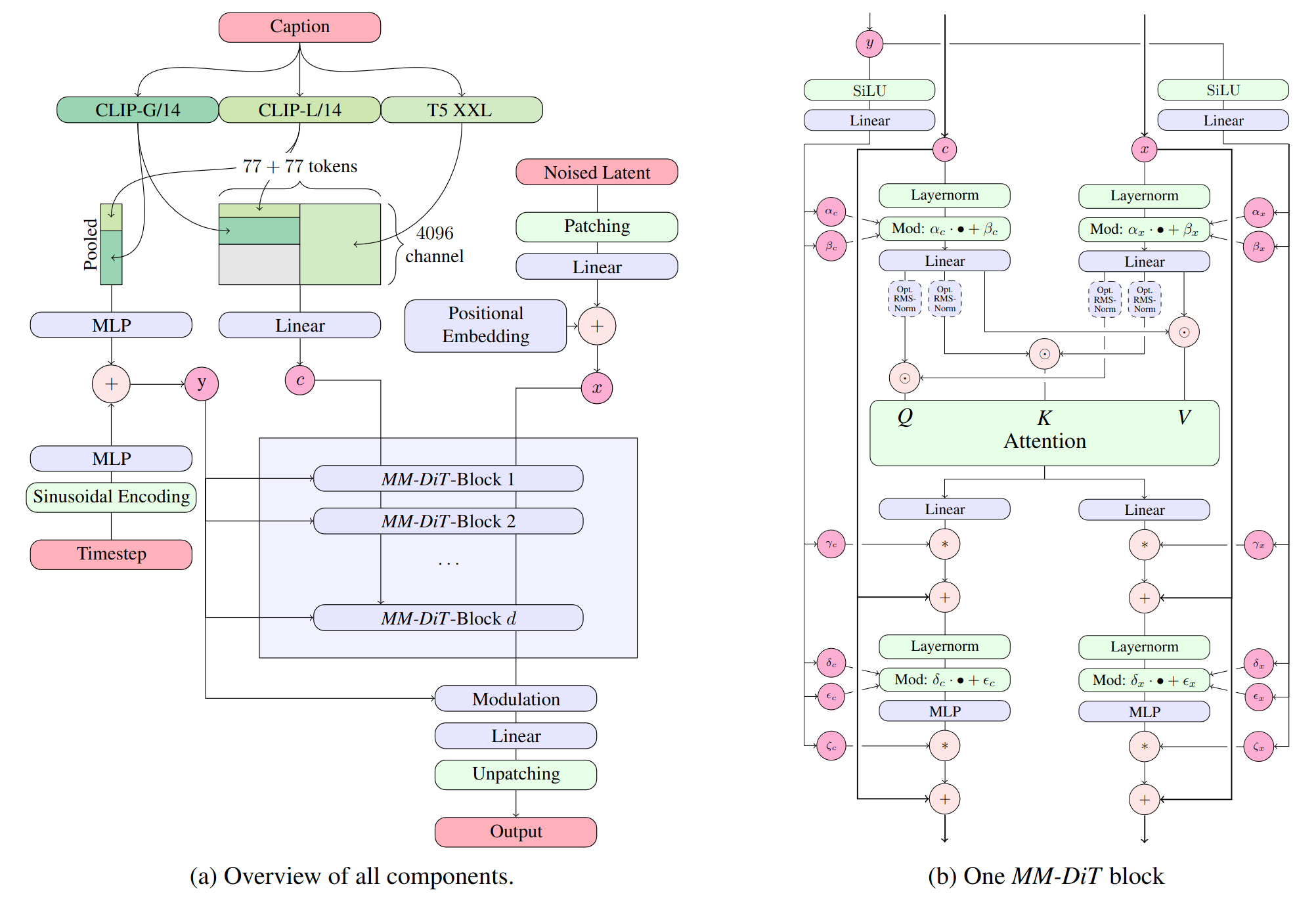

docs/mmdit.png: 0 → 100644, 260 KB

docs/result.png: 0 → 100644, 1.04 MB

model.properties: 0 → 100644
