init


LICENSE (new file, mode 100644)

README.md (new file, mode 100644)

README_origin.md (new file, mode 100644)

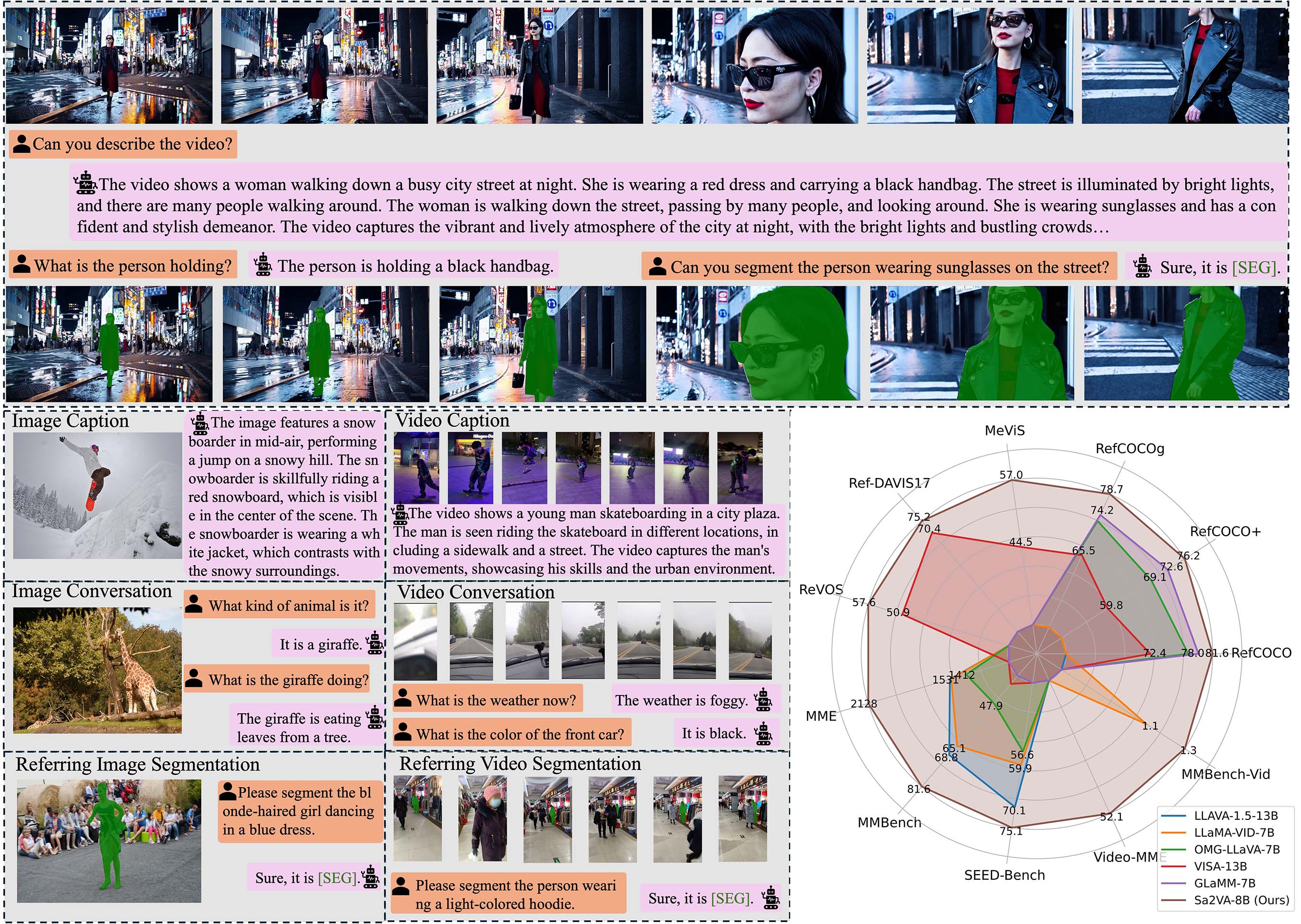

assets/images/teaser.jpg (new file, mode 100644)

assets/videos/exp_1.gif (new file, mode 100644, 4.06 MB)

assets/videos/exp_2.gif (new file, mode 100644, 3.66 MB)

assets/videos/gf_exp1.gif (new file, mode 100644, 4.6 MB)

assets/videos/gf_exp1.mp4 (new file, mode 100644)