v1.0

Showing

docker/requirements.txt

0 → 100644

docker_start.sh

0 → 100644

docker_startnv.sh

0 → 100644

engine.py

0 → 100644

eval.sh

0 → 100644

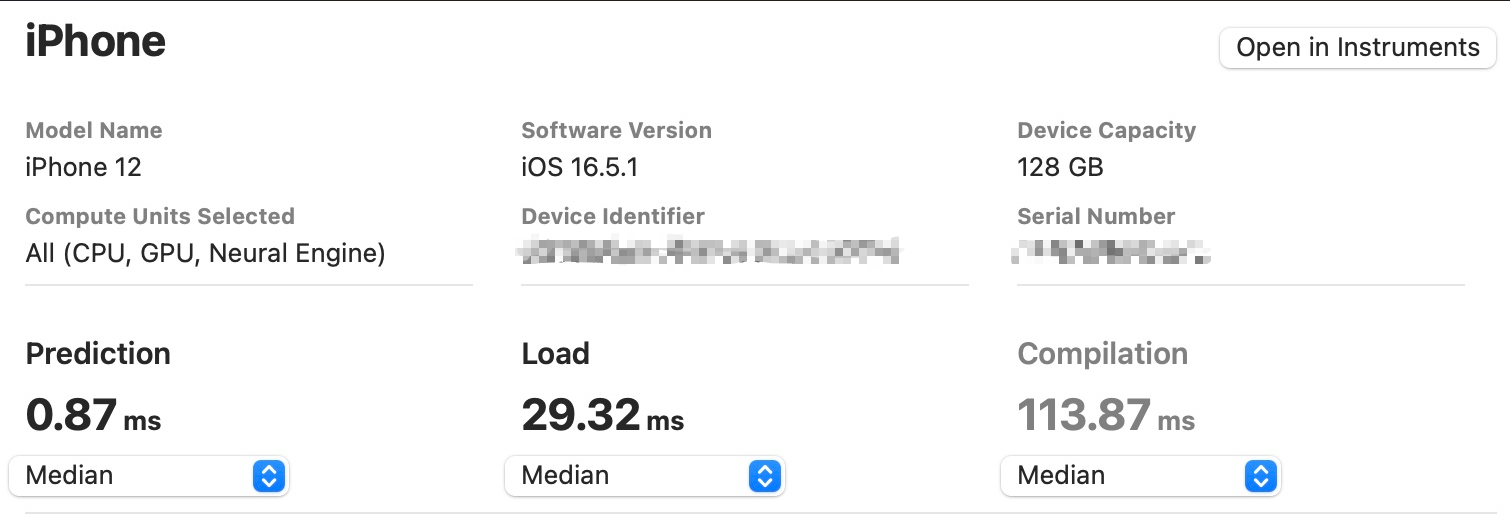

export_coreml.py

0 → 100644

exportonnx.sh

0 → 100644

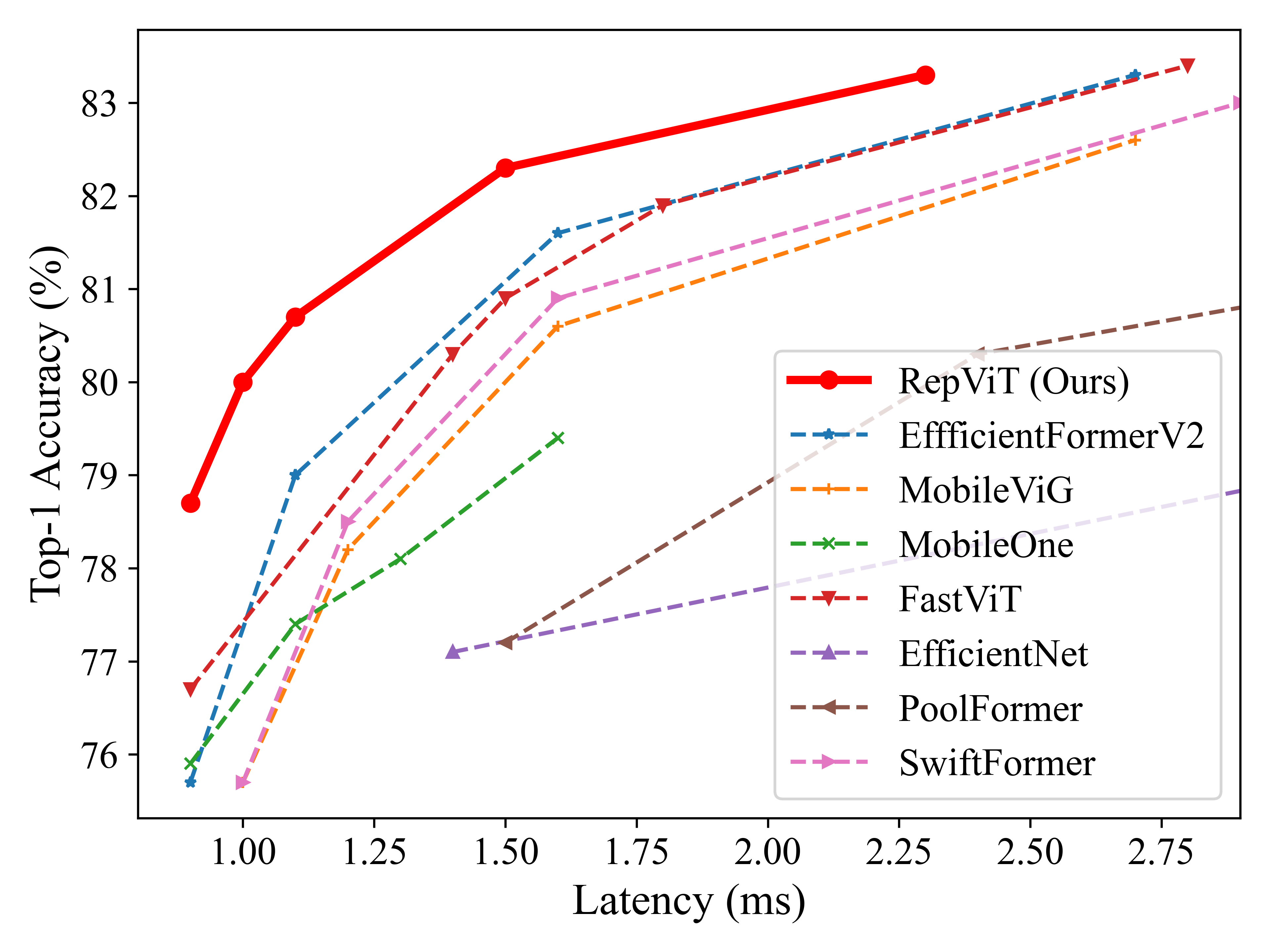

figures/latency.png

0 → 100644

615 KB

118 KB

flops.py

0 → 100644

This diff is collapsed.

This diff is collapsed.

This diff is collapsed.

This diff is collapsed.

This diff is collapsed.

This diff is collapsed.

This diff is collapsed.

This diff is collapsed.

This diff is collapsed.

This diff is collapsed.