Showing

Contributors.md

0 → 100755

LICENSE

0 → 100755

doc/MMDIT.png

0 → 100644

199 KB

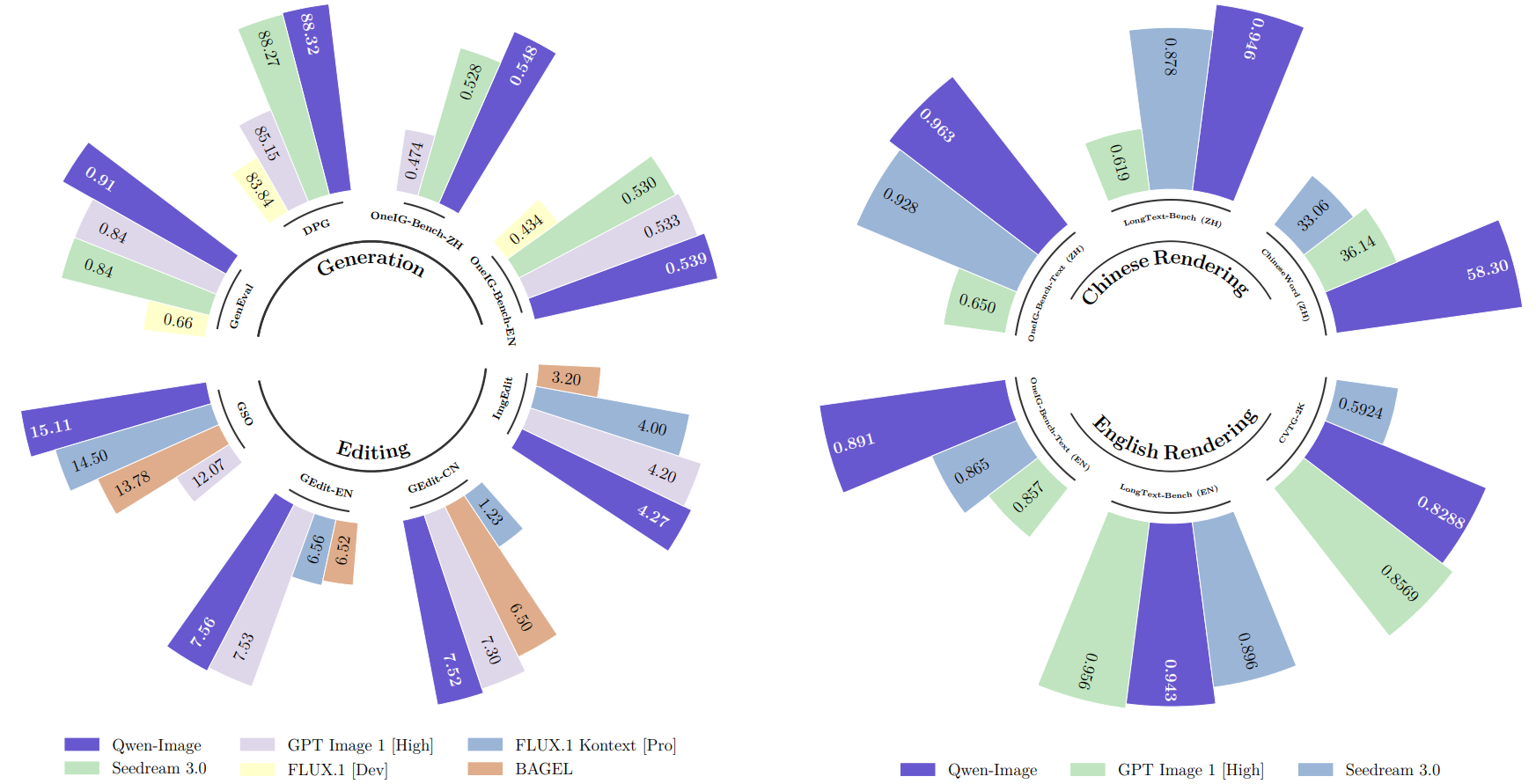

doc/qwen-image.png

0 → 100644

266 KB

docker/Dockerfile

0 → 100755

icon.png

0 → 100755

67.5 KB

infer/acc.py

0 → 100755

infer/comparison_report.csv

0 → 100644

infer/infer.json

0 → 100644

infer/infer_hf.py

0 → 100644

550 KB

197 KB

331 KB

273 KB

269 KB

1.16 MB

767 KB

922 KB