readme

data/scannet/intrinsics.npz

0 → 100644

File added

data/scannet/test/.gitignore

0 → 100644

data/scannet/train

0 → 120000

demo/demo_loftr.py

0 → 100644

demo/run_demo.sh

0 → 100755

docs/TRAINING.md

0 → 100644

environment.yaml

0 → 100644

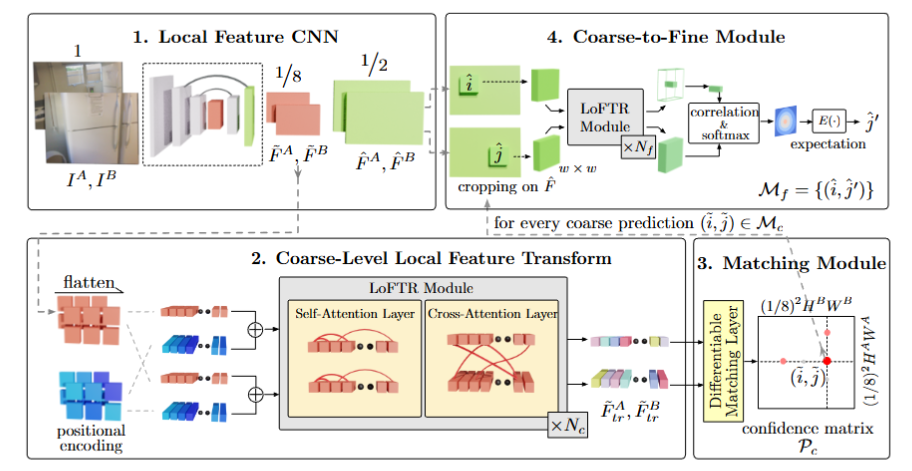

image-1.png

0 → 100644

245 KB
