first add

Showing

.gitignore

0 → 100644

CODE_OF_CONDUCT.md

0 → 100644

CONTRIBUTING.md

0 → 100644

LICENSE

0 → 100644

Llama3_Repo.jpeg

0 → 100644

446 KB

MODEL_CARD.md

0 → 100644

This diff is collapsed.

README.md

0 → 100644

README_ori.md

0 → 100644

USE_POLICY.md

0 → 100644

249 KB

doc/Meta-Llama-3-8B.png

0 → 100644

82.8 KB

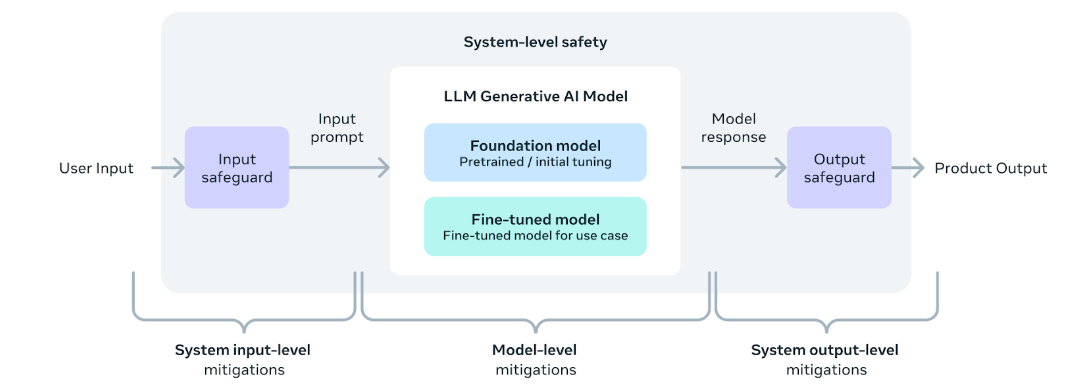

doc/method.png

0 → 100644

70 KB

docker/Dockerfile

0 → 100644

download.sh

0 → 100644

eval_details.md

0 → 100644

example_chat_completion.py

0 → 100644

example_text_completion.py

0 → 100644

llama/__init__.py

0 → 100644

llama/generation.py

0 → 100644

llama/model.py

0 → 100644