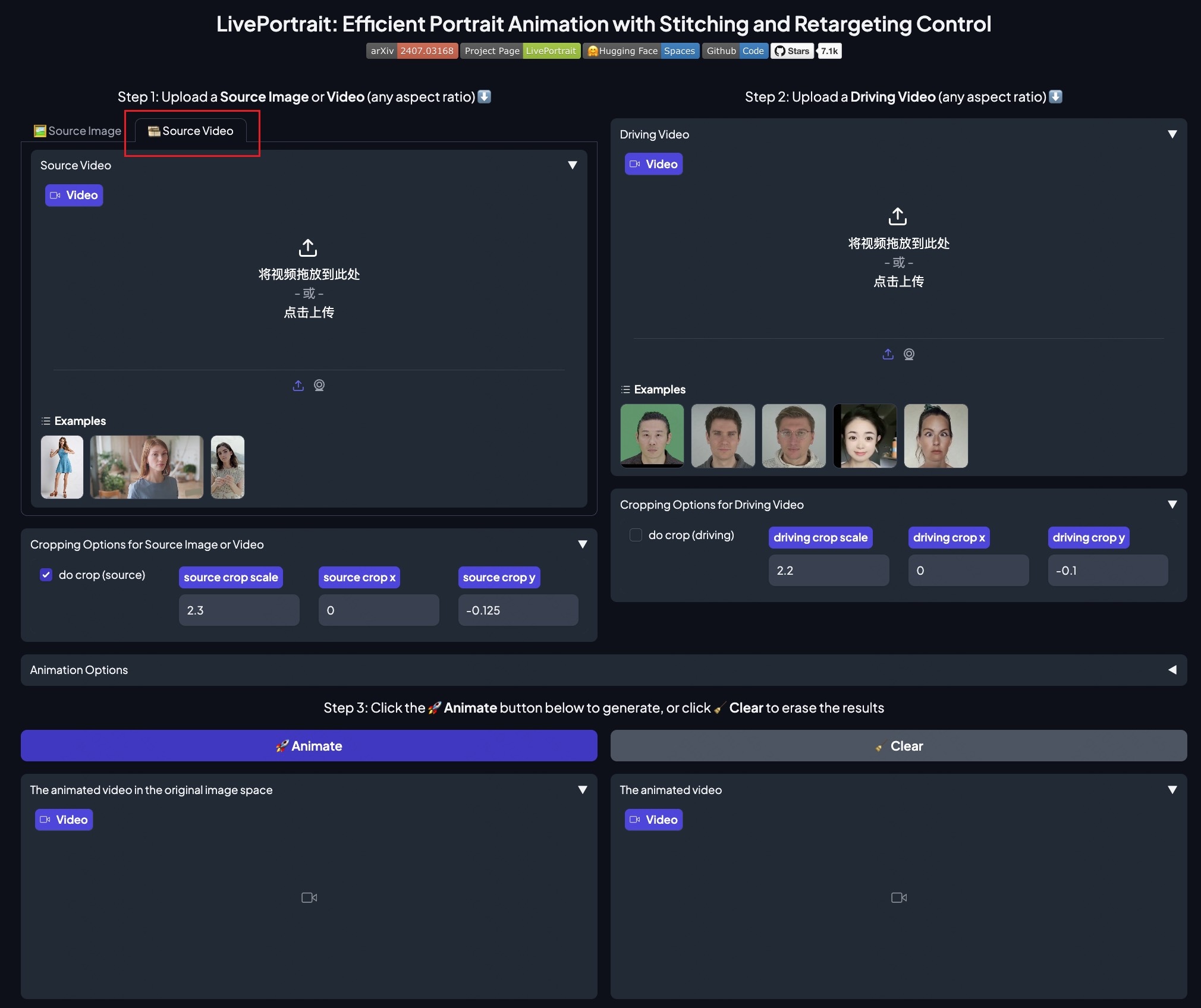

liveportrait

.gitignore

0 → 100644

.vscode/settings.json

0 → 100644

Dockerfile

0 → 100644

LICENSE

0 → 100644

README.md

0 → 100644

app.py

0 → 100644

assets/.gitignore

0 → 100644

364 KB

assets/docs/inference.gif

0 → 100644

801 KB

assets/docs/showcase.gif

0 → 100644

6.32 MB

assets/docs/showcase2.gif

0 → 100644

2.75 MB

File added
