init

Dockerfile

0 → 100644

LICENSE

0 → 100644

README.md

0 → 100644

README_ori.md

0 → 100644

assets/000000.png

0 → 100644

395 KB

assets/acc.png

0 → 100644

32 KB

assets/astronaut.png

0 → 100644

1.48 MB

assets/captions.txt

0 → 100644

This diff is collapsed.

1.57 MB

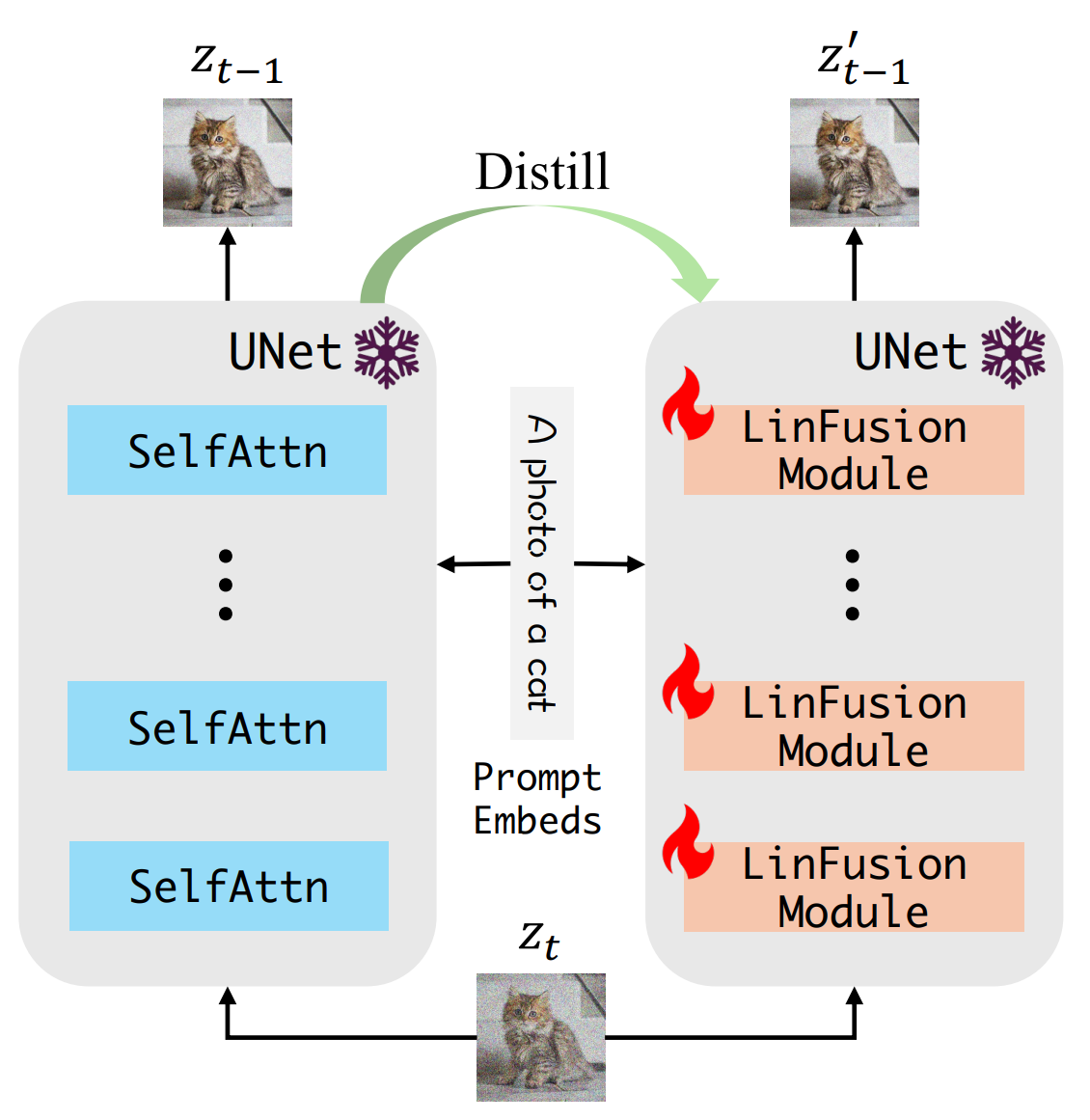

assets/linfusin_overview.png

0 → 100644

236 KB

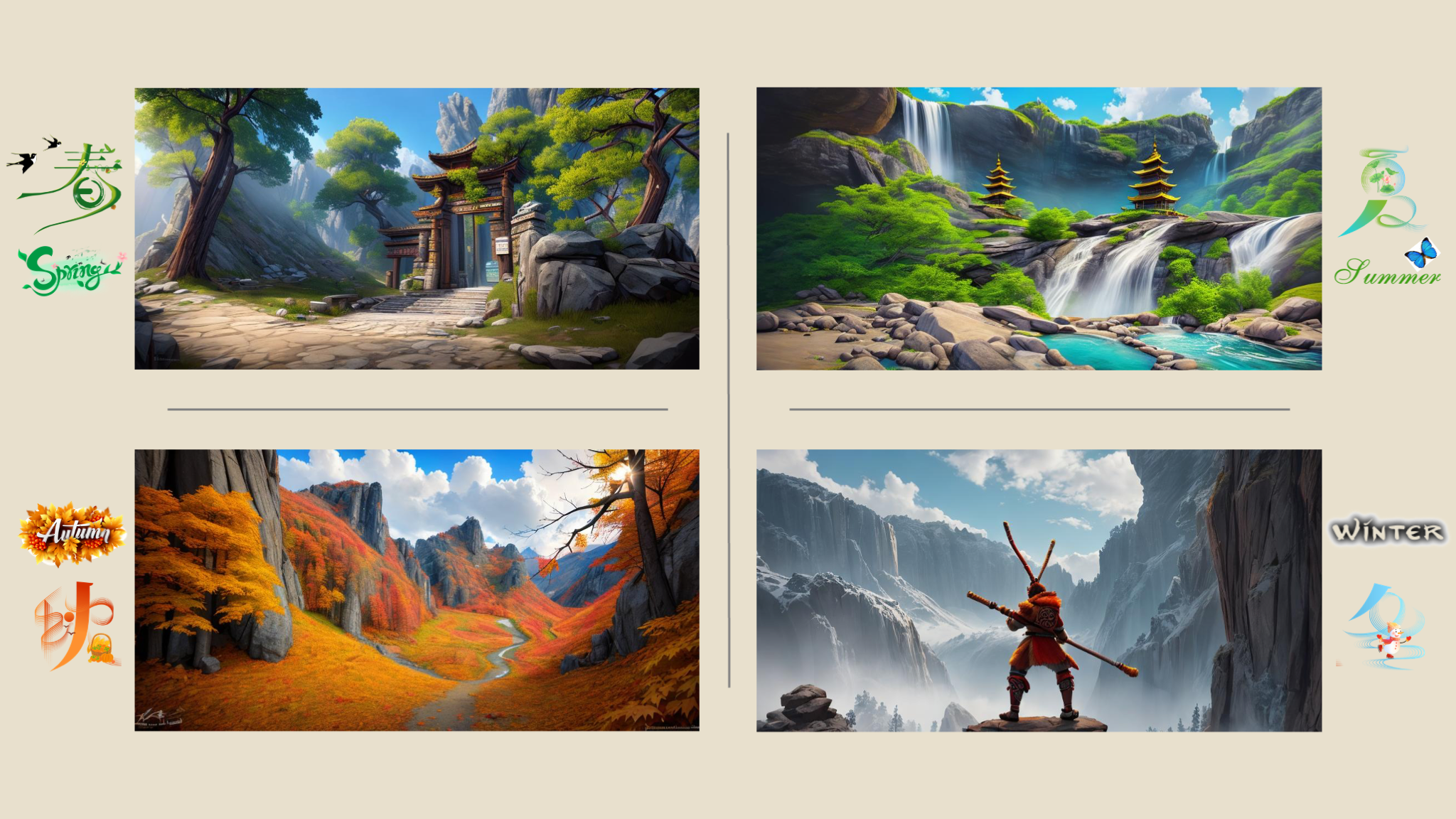

assets/picture.png

0 → 100644

2.35 MB

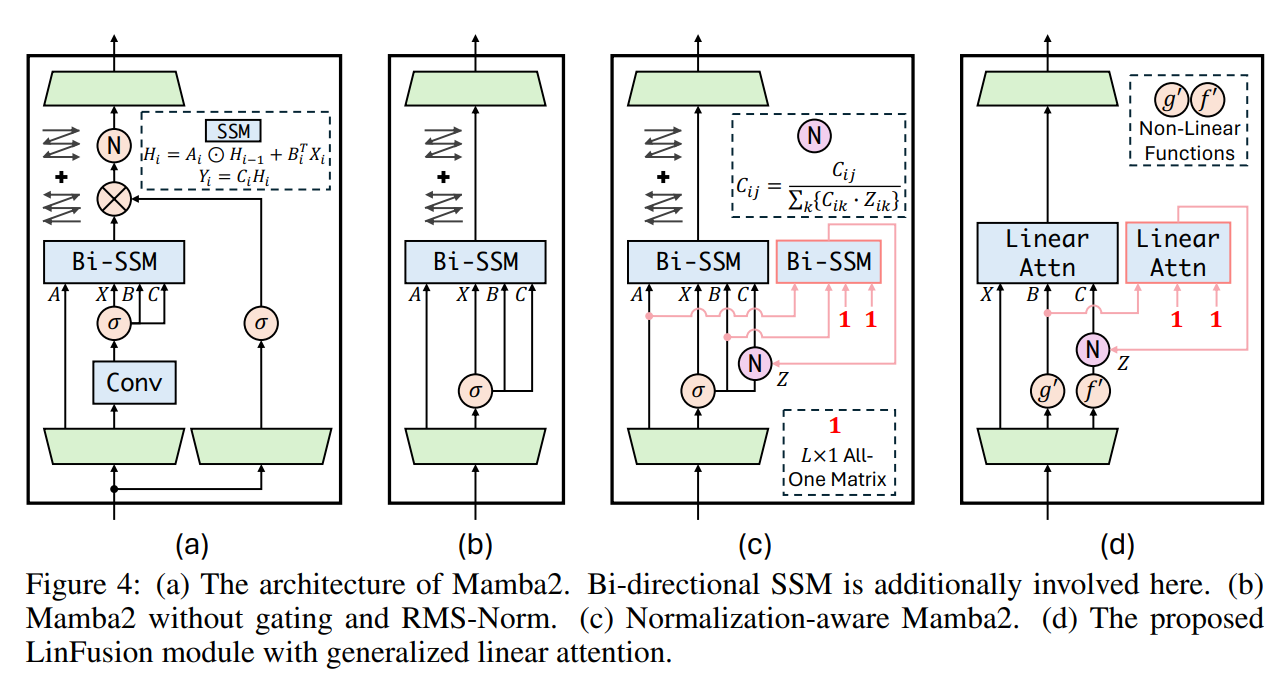

assets/principle.png

0 → 100644

148 KB

examples/eval/eval.sh

0 → 100644