Initial commit

LICENSE

0 → 100644

README.md

0 → 100644

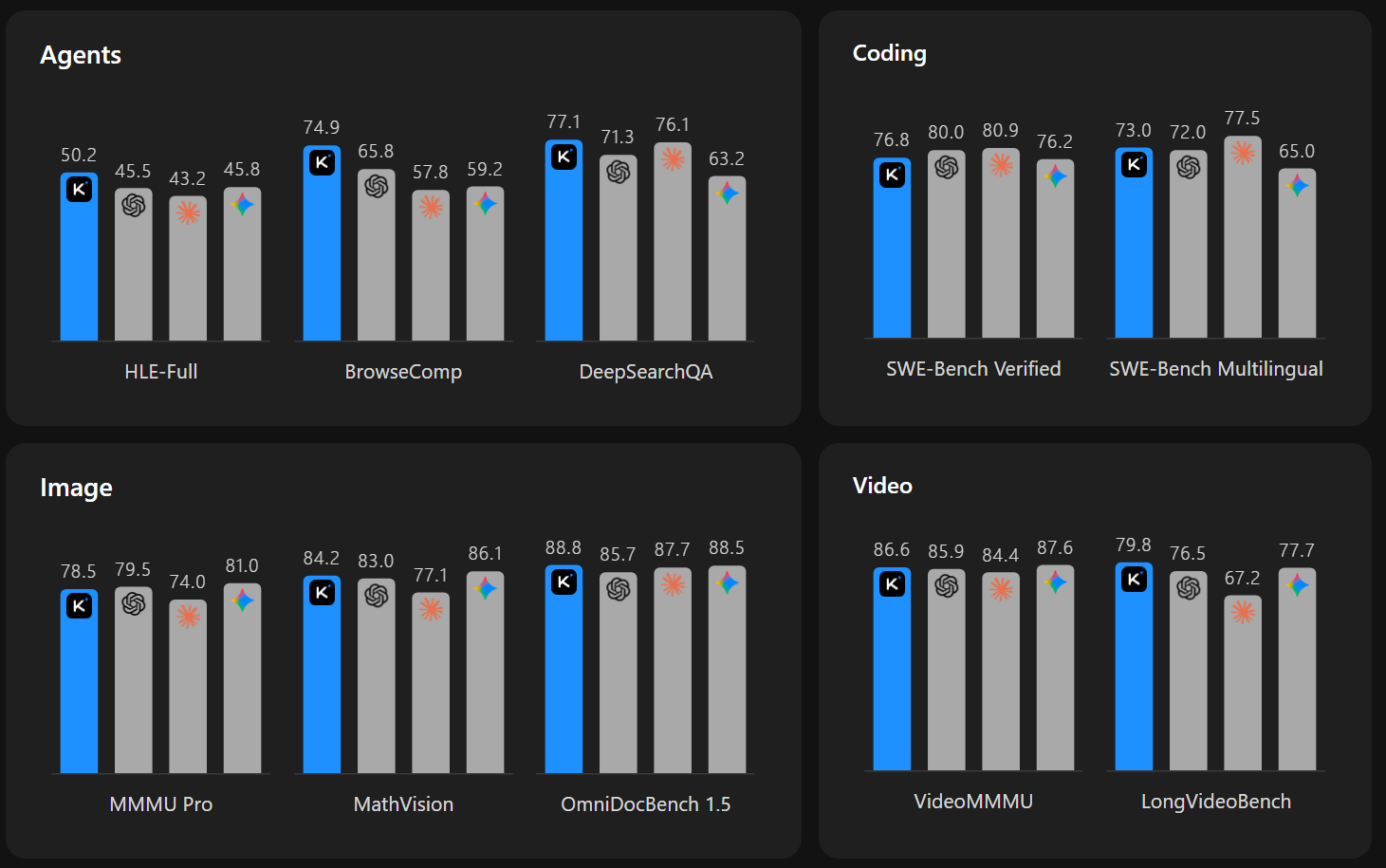

doc/kimi-bar-chart.png

0 → 100644

100 KB

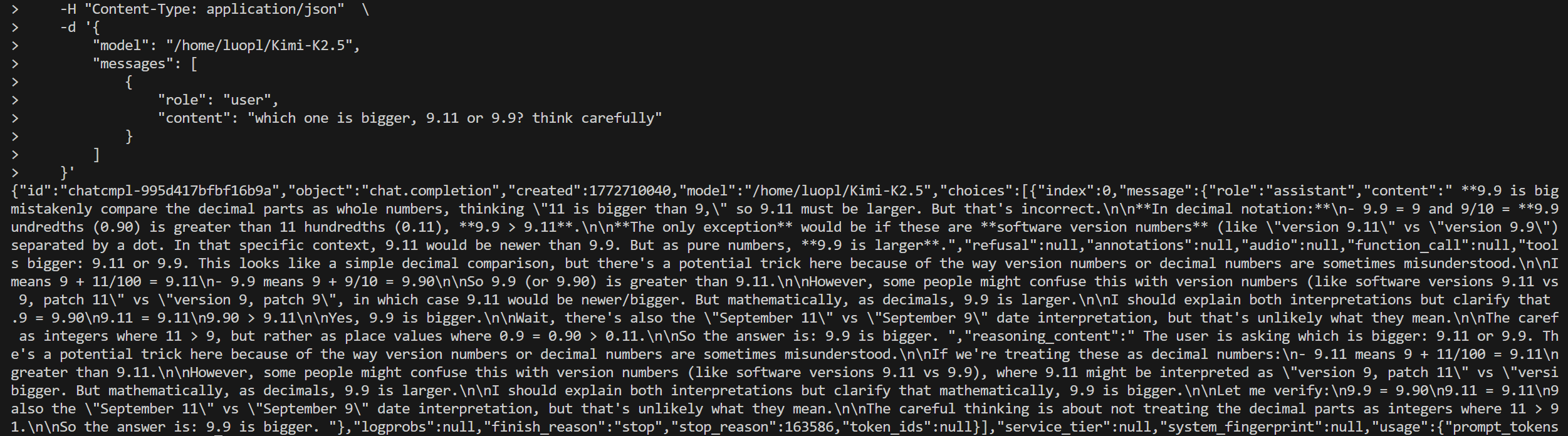

doc/result-dcu.png

0 → 100644

144 KB

icon.png

0 → 100644

53.8 KB

model.properties

0 → 100644