v1.0

CONTRIBUTING.md

0 → 100644

LICENSE

0 → 100644

README.md

0 → 100644

README_origin.md

0 → 100644

doc/gemma.png

0 → 100644

161 KB

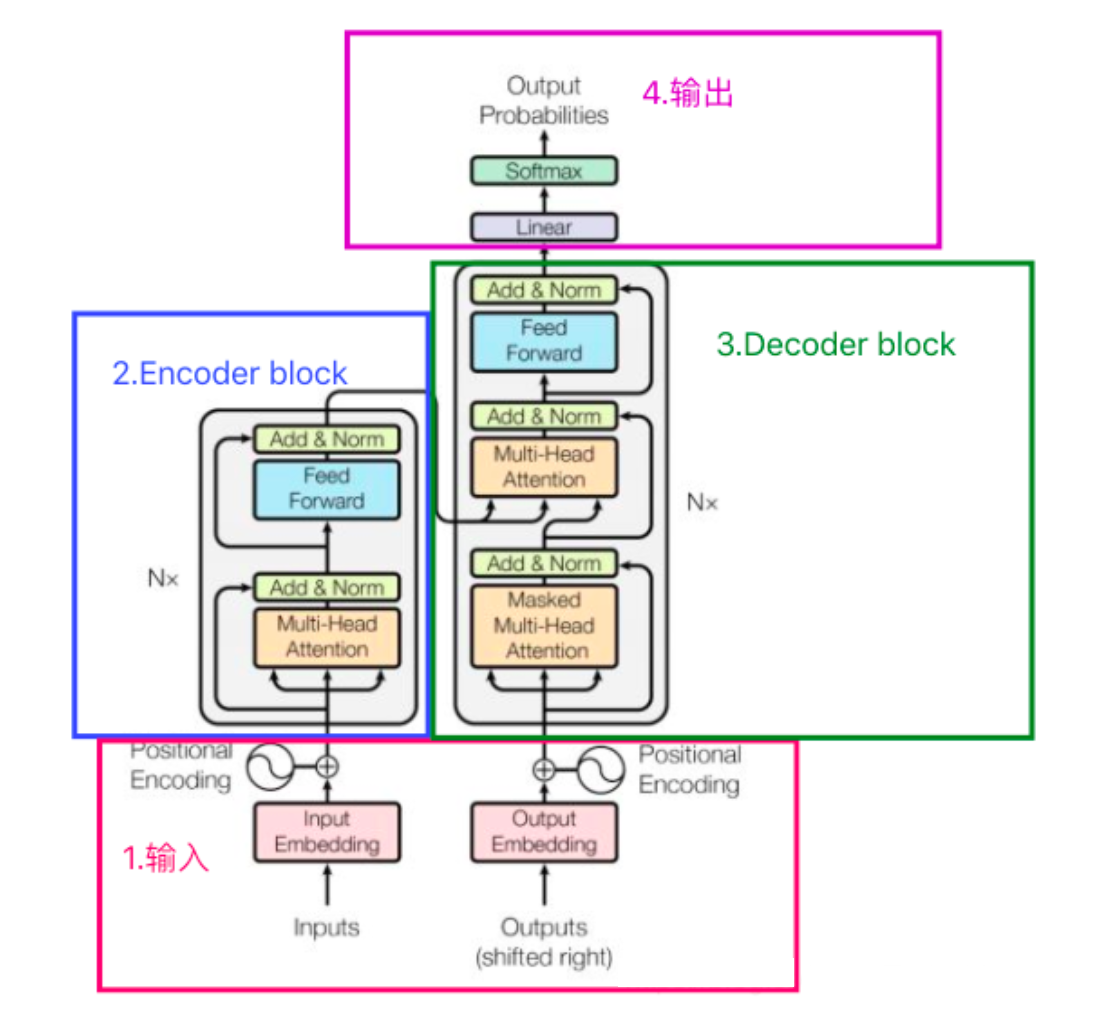

doc/transformer.png

0 → 100644

396 KB

docker/Dockerfile

0 → 100644

docker/requirements.txt

0 → 100644

docker_gpu/Dockerfile

0 → 100644

docker_gpu/xla.Dockerfile

0 → 100644

docker_start.sh

0 → 100644

gemma-2b-pytorch/README.md

0 → 100644

gemma-2b-pytorch/config.json

0 → 100644

File added

gemma/__init__.py

0 → 100644

File added

File added

File added