v1.0

Showing

.gitignore

0 → 100644

.gitmodules

0 → 100644

.pre-commit-config.yaml

0 → 100644

Dockerfile

0 → 100644

LICENSE

0 → 100644

README.md

0 → 100644

README_origin.md

0 → 100644

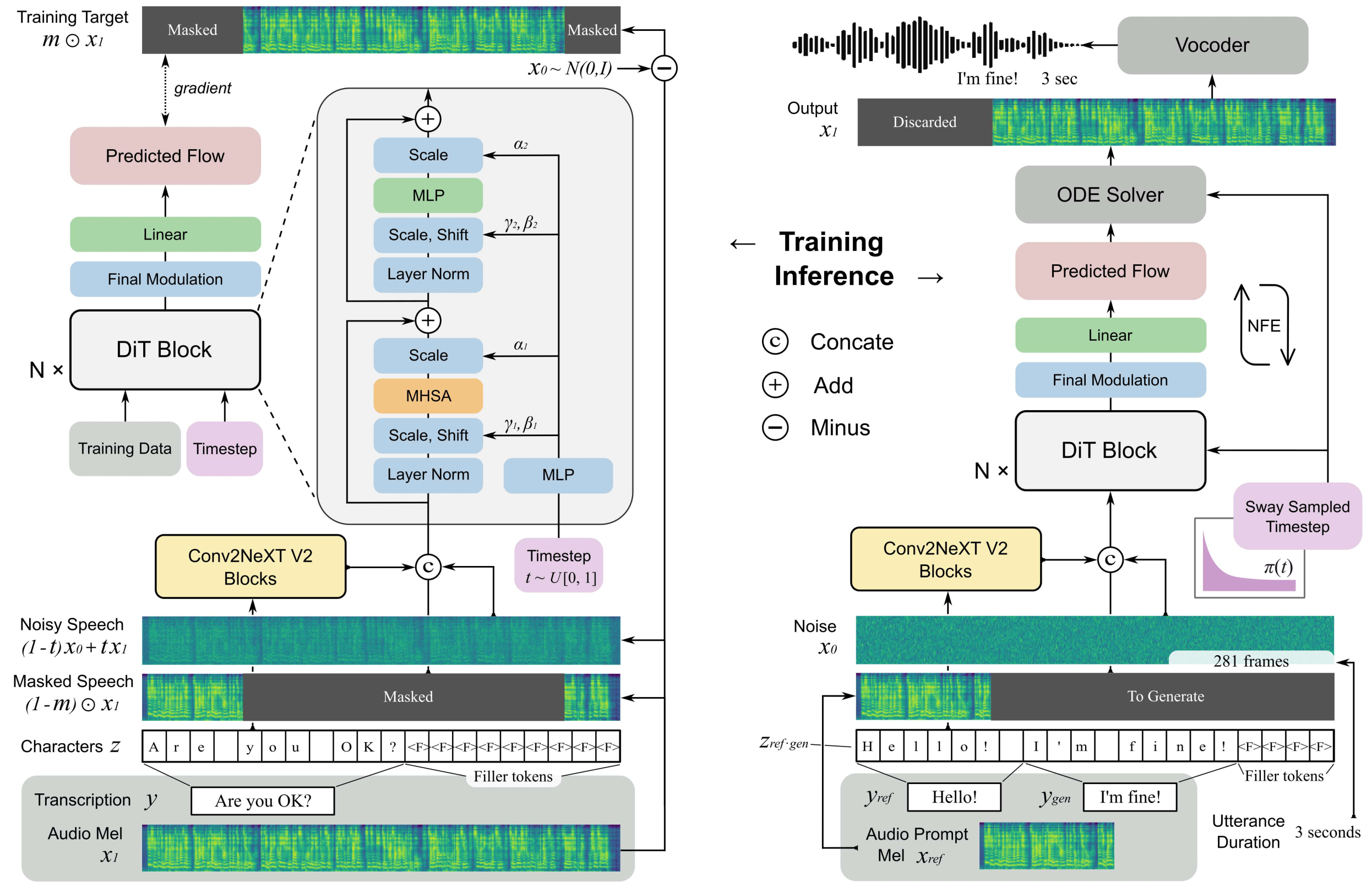

doc/algorithm.png

0 → 100644

83.2 KB

doc/f5tts.png

0 → 100644

1.37 MB

docker/Dockerfile

0 → 100644

docker/requirements.txt

0 → 100644

docker_start.sh

0 → 100644

frpc_linux_amd64

0 → 100644

File added

icon.png

0 → 100644

64.4 KB

infer.sh

0 → 100644

input.wav

0 → 100644

File added