update

Showing

Dockerfile

0 → 100644

DragGAN.gif

0 → 100644

This image diff could not be displayed because it is too large.

LICENSE.txt

0 → 100644

README.md

0 → 100644

README_origin.md

0 → 100644

File added

arial.ttf

0 → 100644

File added

dnnlib/__init__.py

0 → 100644

File added

File added

dnnlib/util.py

0 → 100644

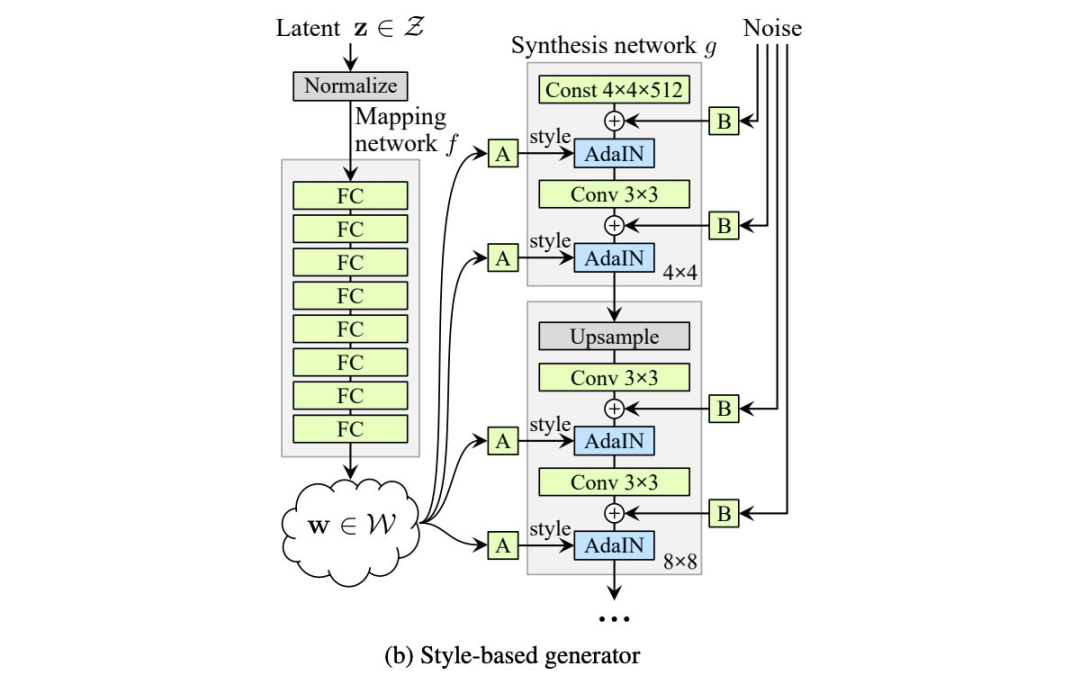

doc/StyleGan.png

0 → 100644

239 KB

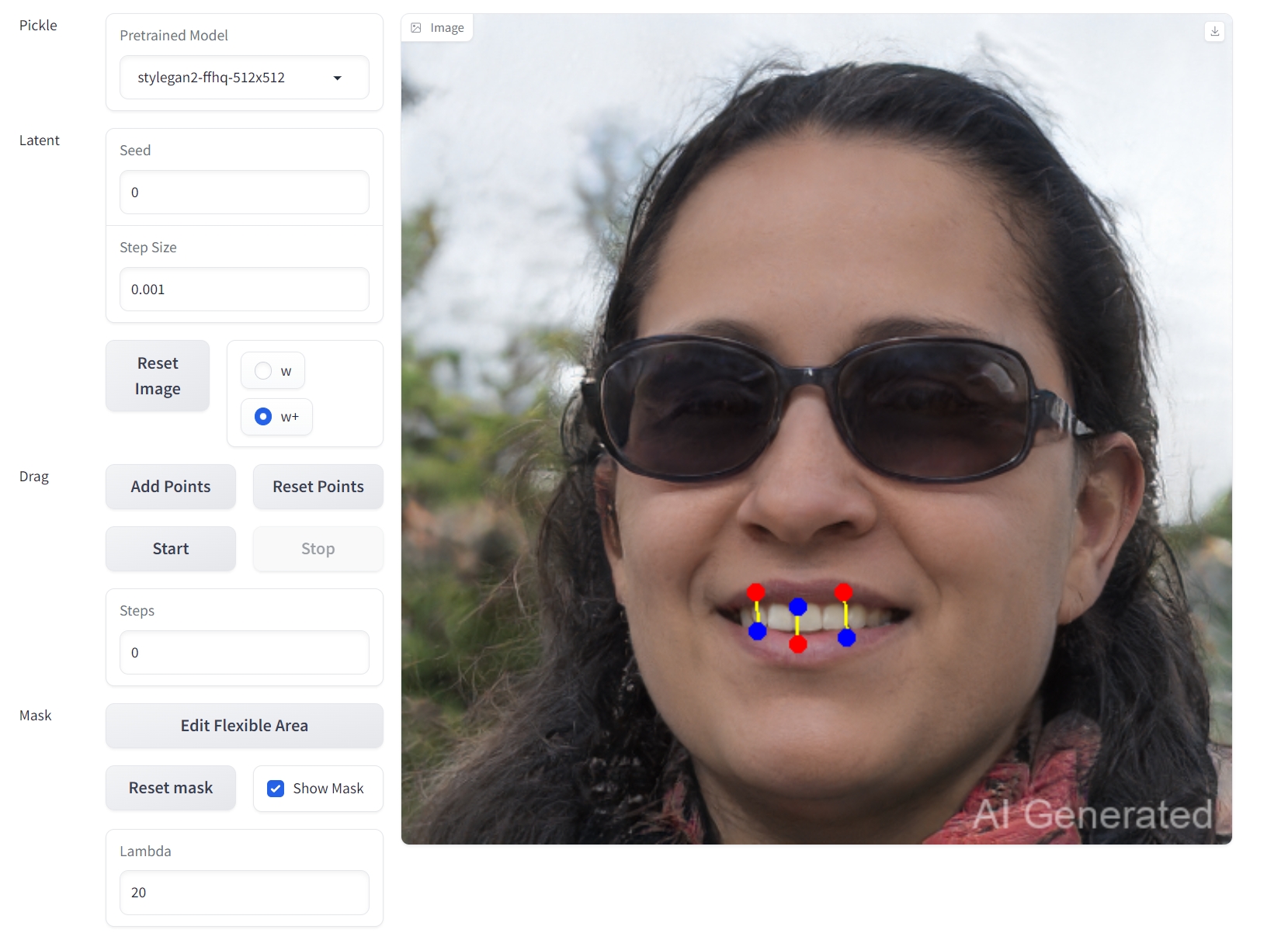

doc/gradio.png

0 → 100644

625 KB

doc/image (1).png

0 → 100644

441 KB

doc/image.png

0 → 100644

442 KB

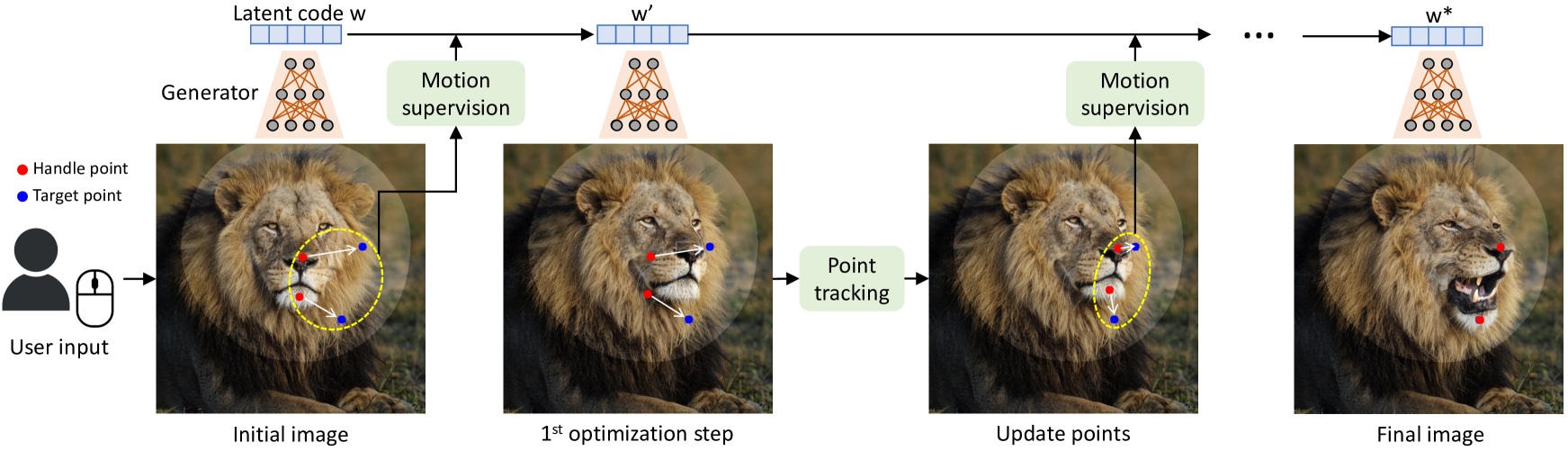

doc/pipeline.png

0 → 100644

856 KB

docker/Dockerfile

0 → 100644

environment.yml

0 → 100644

gen_images.py

0 → 100644

gradio_utils/__init__.py

0 → 100644
