init

parents

Showing

.gitignore

0 → 100644

GETTING_STARTED.md

0 → 100644

LICENSE

0 → 100644

README.md

0 → 100644

README_ori.md

0 → 100644

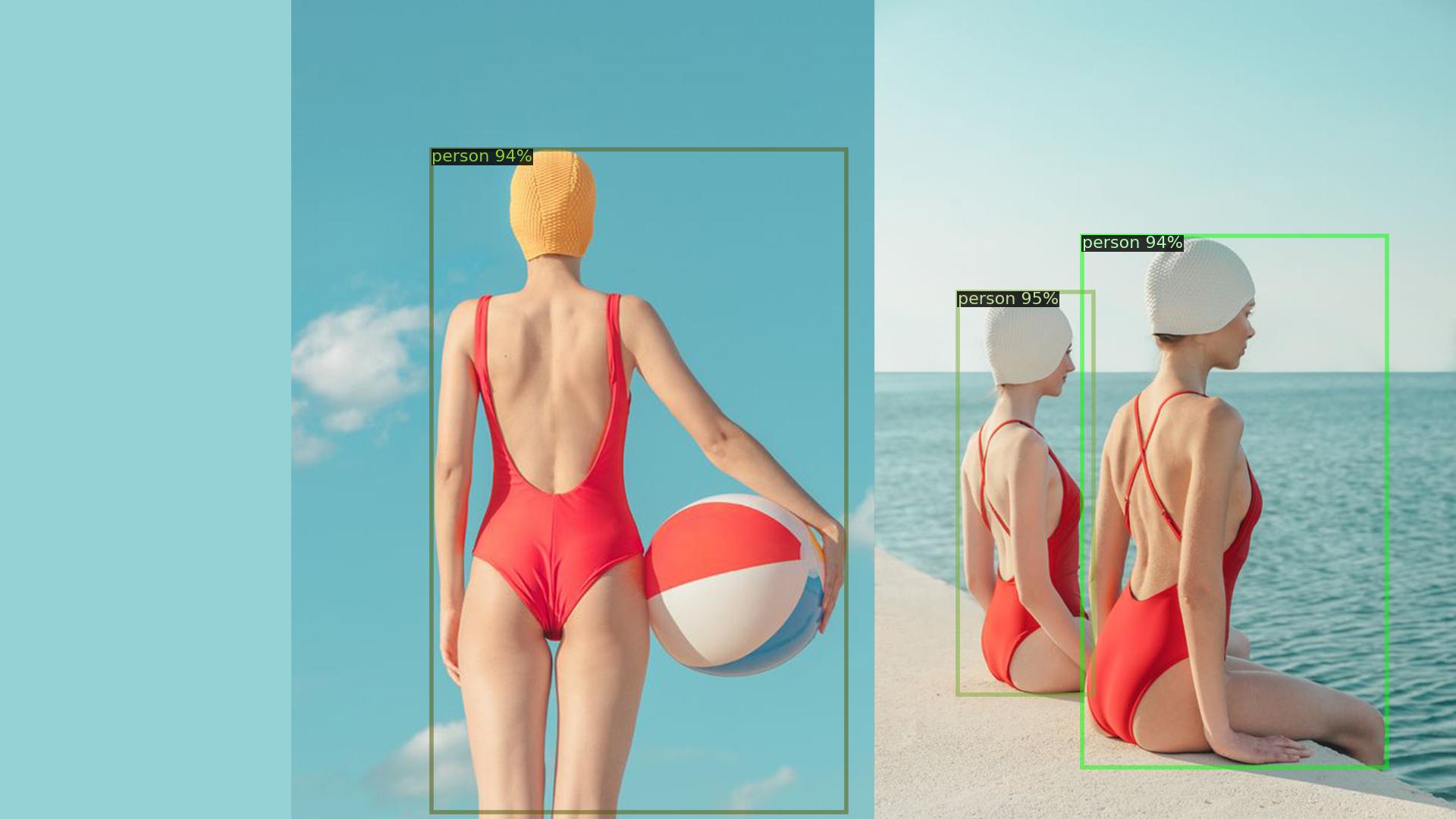

assets/demo.jpg

0 → 100644

120 KB

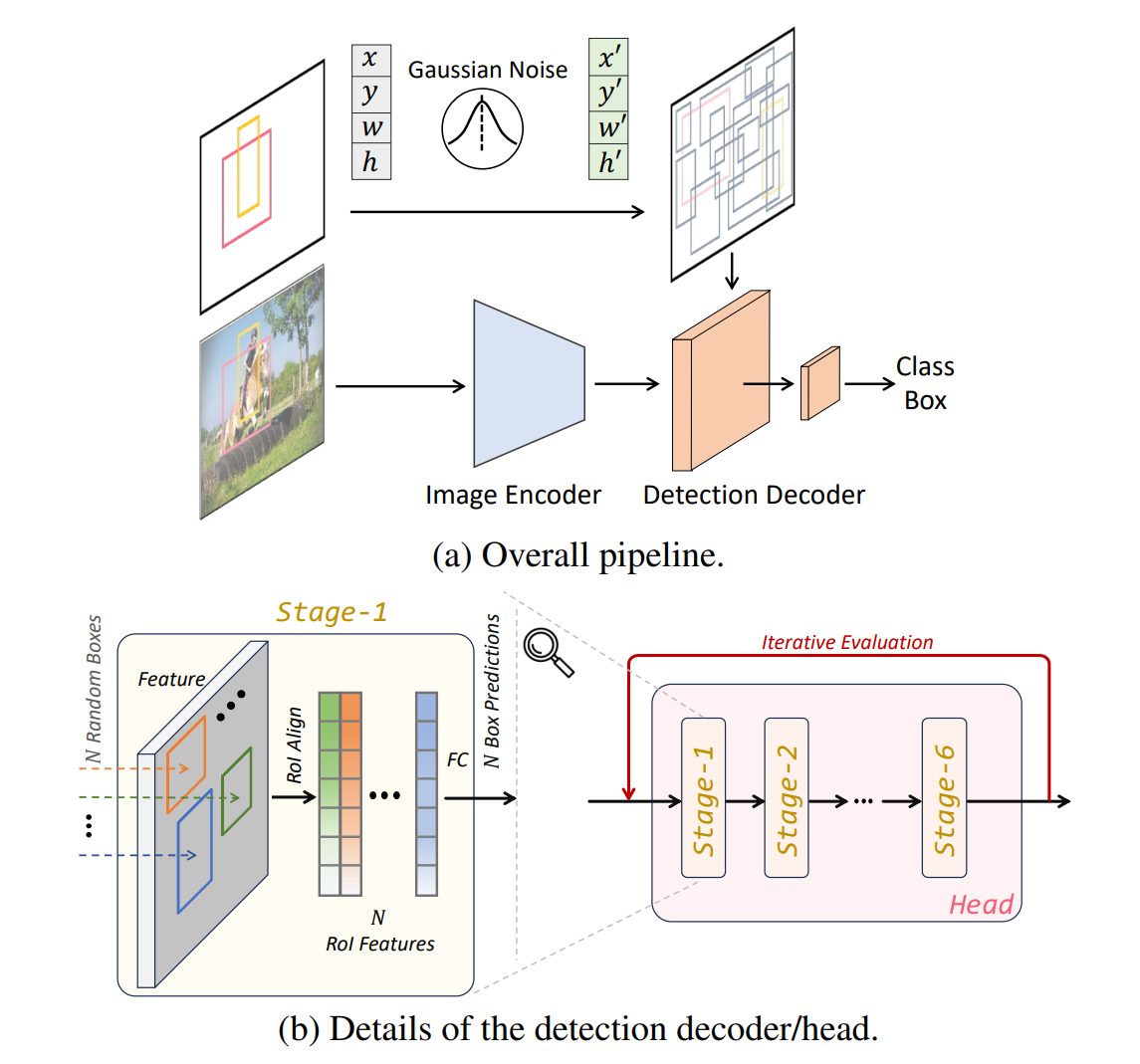

assets/framework.png

0 → 100644

232 KB

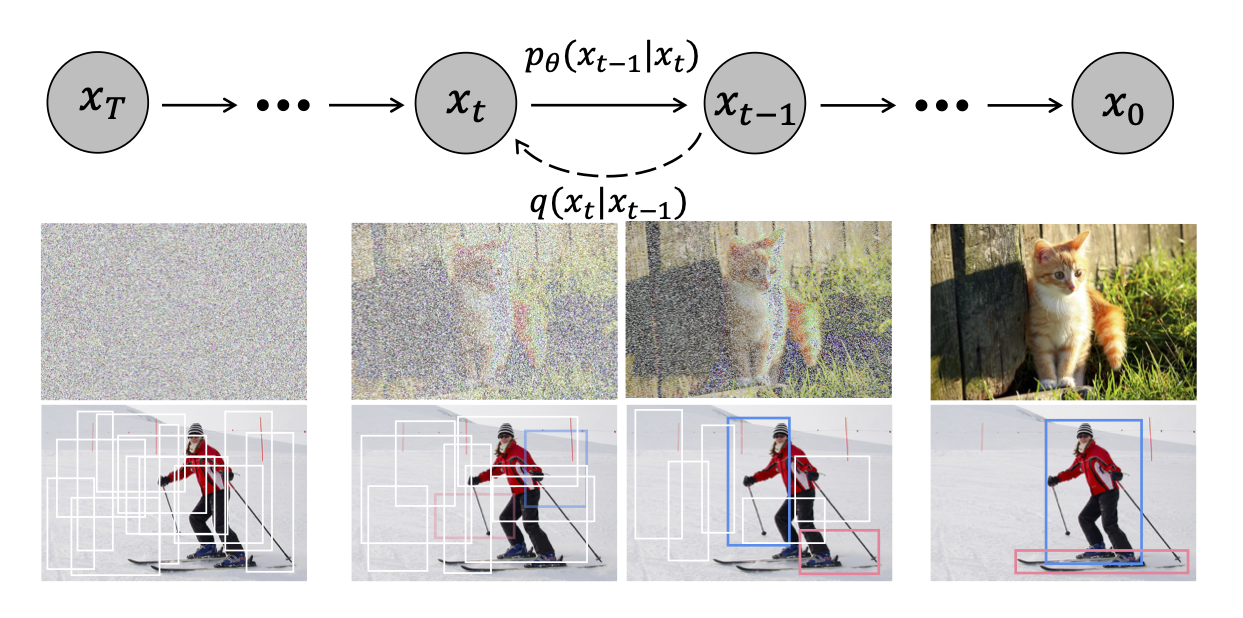

assets/teaser.png

0 → 100644

869 KB

demo.jpg

0 → 100644

478 KB

demo.py

0 → 100644

diffusiondet/__init__.py

0 → 100644

diffusiondet/config.py

0 → 100644