"vscode:/vscode.git/clone" did not exist on "1b7c791d60629453030de1600e756a8ba555455e"

update readme & add run_img.py

Showing

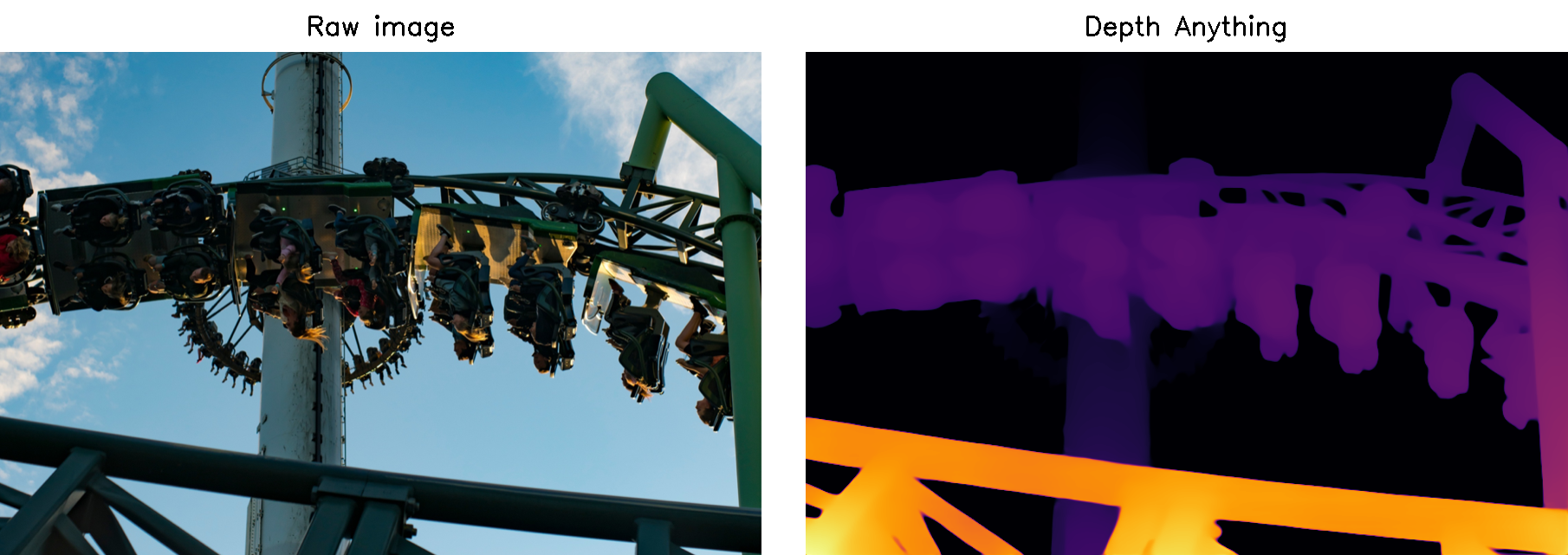

doc/demo1_img_depth.png

0 → 100644

888 KB

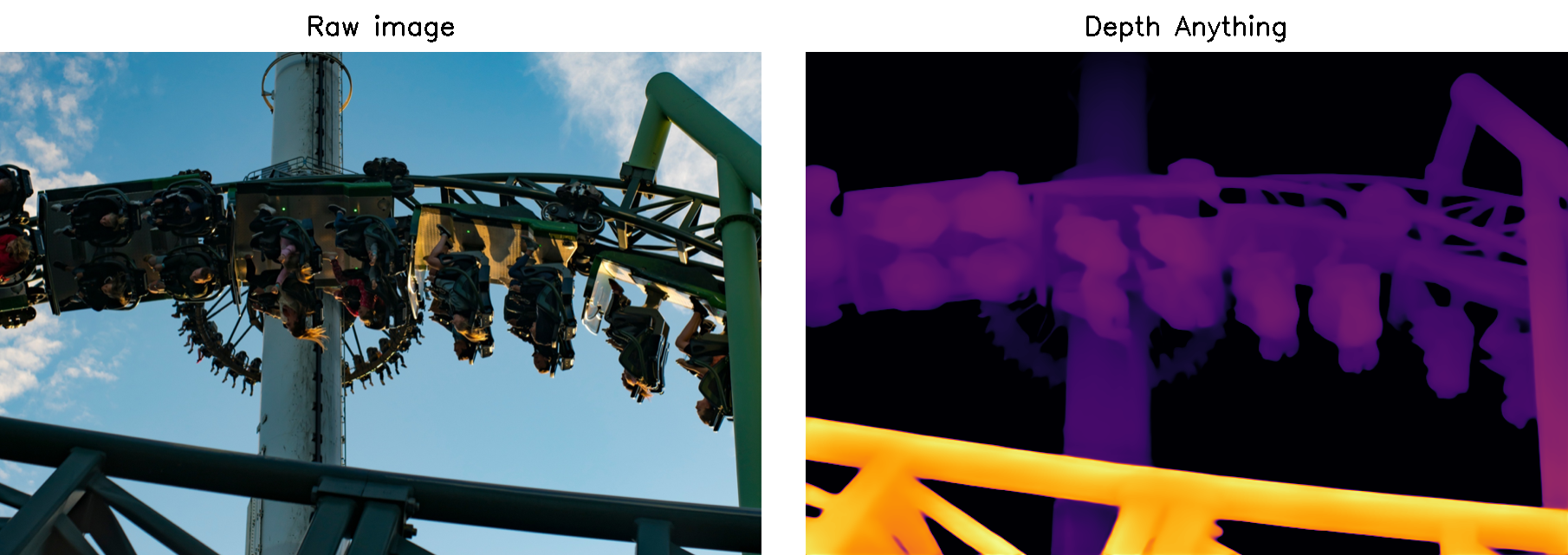

doc/demo1_img_depth_dcu.png

0 → 100644

955 KB

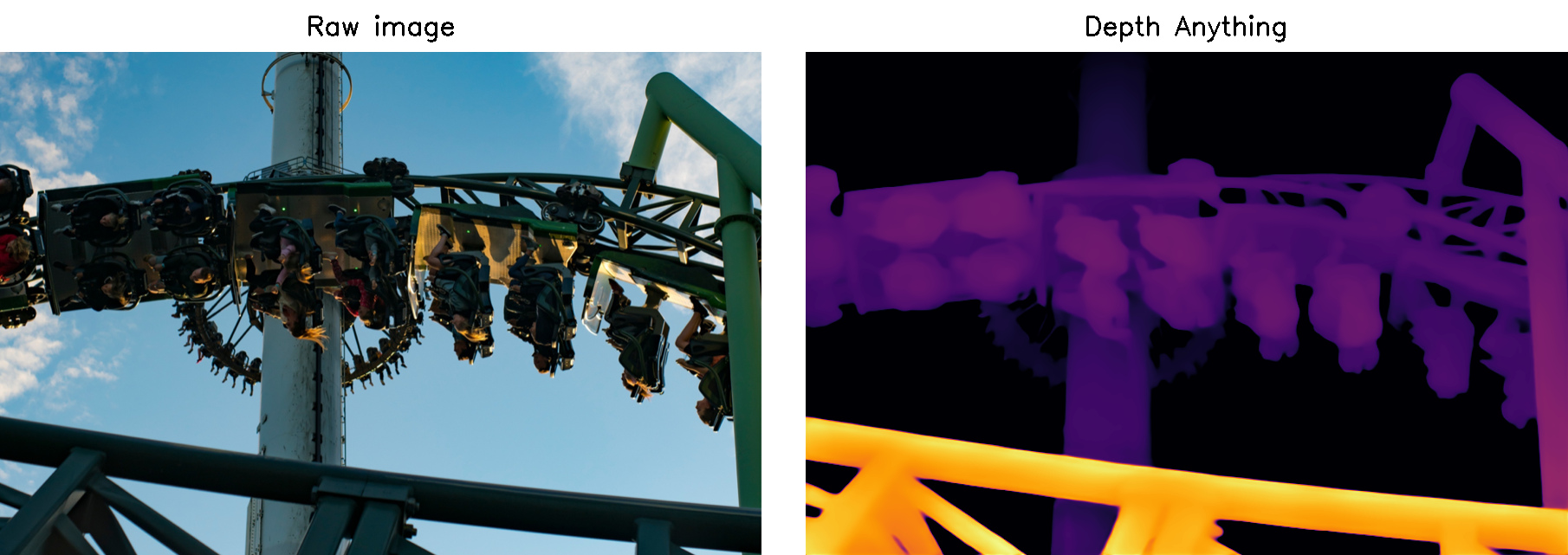

doc/demo1_img_depth_gpu.png

0 → 100644

953 KB

doc/test1.png

deleted

100644 → 0

547 KB

run_img.py

0 → 100644