add Baichuan2 model TGI inference tutorial

.gitmodules (new file, mode 0 → 100644)

README.md (new file, mode 0 → 100644)

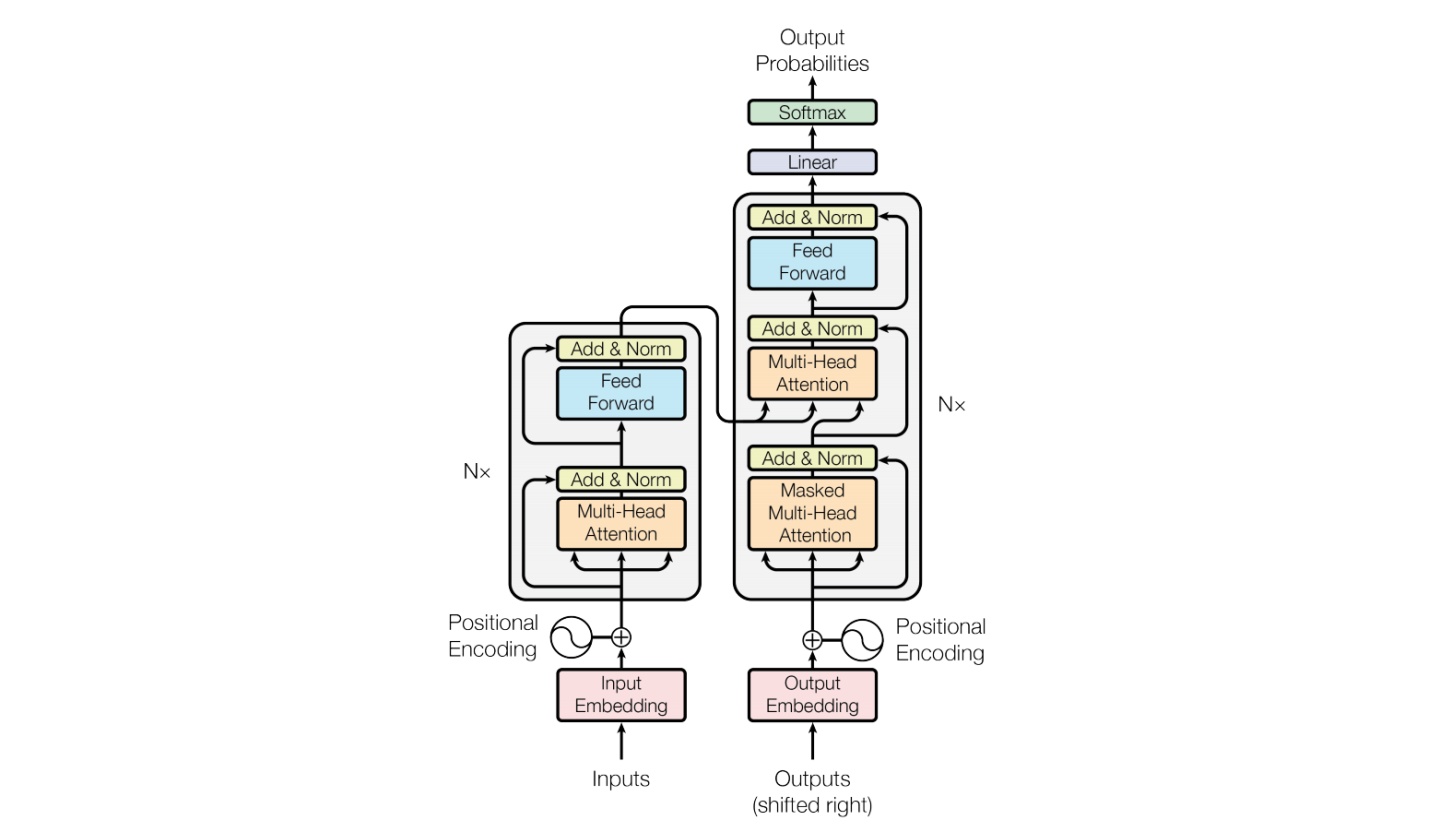

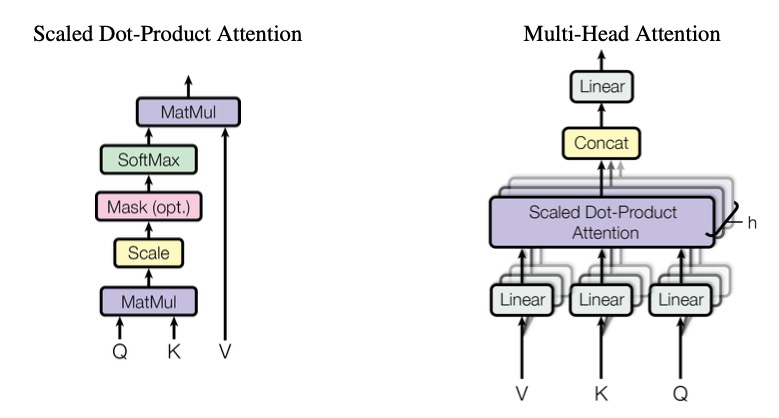

docs/transformer.jpg (new file, mode 0 → 100644, 87.9 KB)

docs/transformer.png (new file, mode 0 → 100644, 112 KB)

text-generation-inference (submodule) @ 6e6d3c1a