first commit

LICENSE

0 → 100644

README.md

0 → 100644

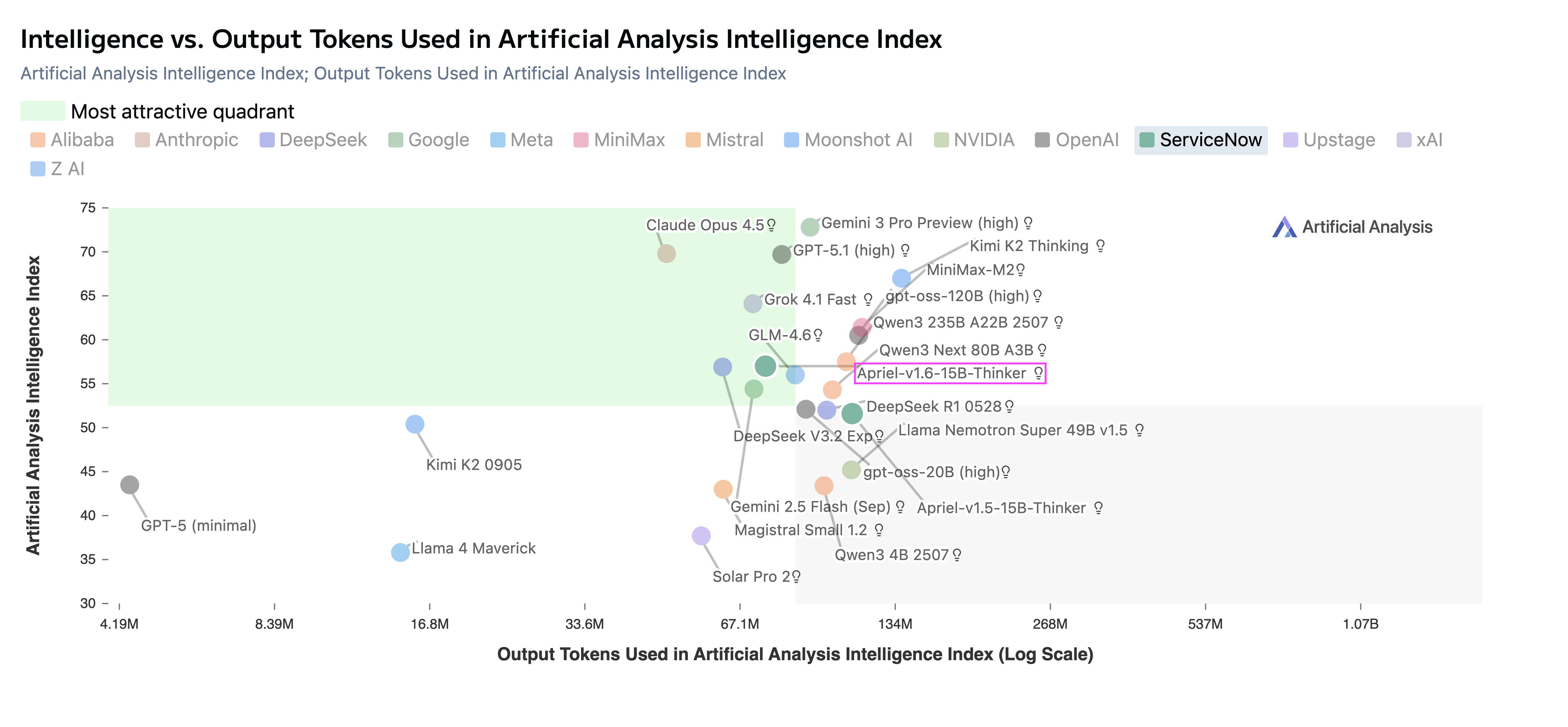

doc/perf.png

0 → 100644

778 KB

doc/result.png

0 → 100644

46.4 KB

icon.png

0 → 100644

50.3 KB

model.properties

0 → 100644

vllm_cilent.sh

0 → 100644

vllm_serve.sh

0 → 100644