v1.0

CODE_OF_CONDUCT.md

0 → 100644

Dockerfile_nv

0 → 100644

LICENSE

0 → 100644

README.md

0 → 100644

assets/Chinese_prompt.npy

0 → 100644

File added

assets/Chinese_prompt.wav

0 → 100644

File added

assets/English_prompt.npy

0 → 100644

File added

assets/English_prompt.wav

0 → 100644

File added

assets/fig/Hi.gif

0 → 100644

285 KB

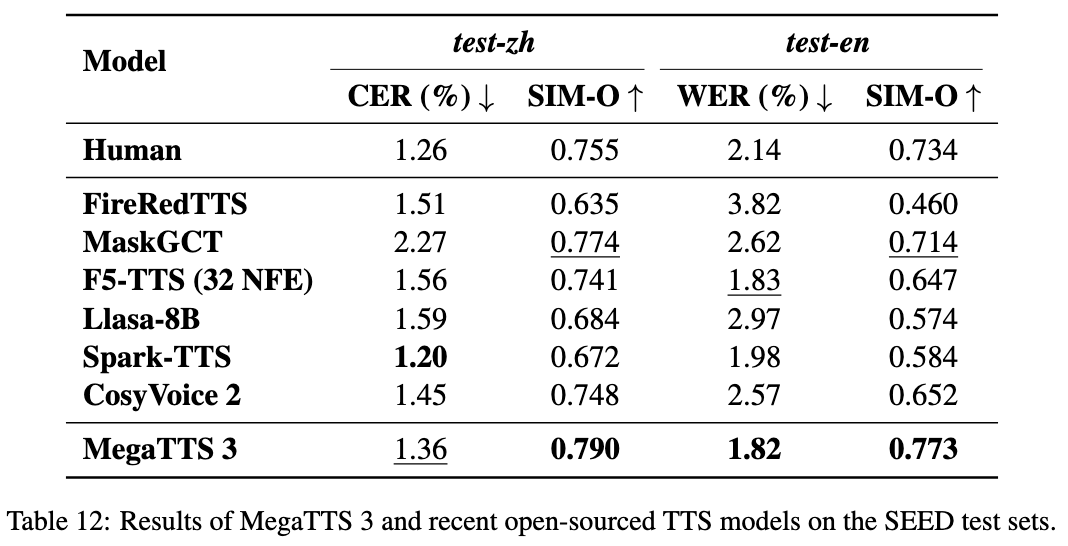

assets/fig/table_tts.png

0 → 100644

102 KB

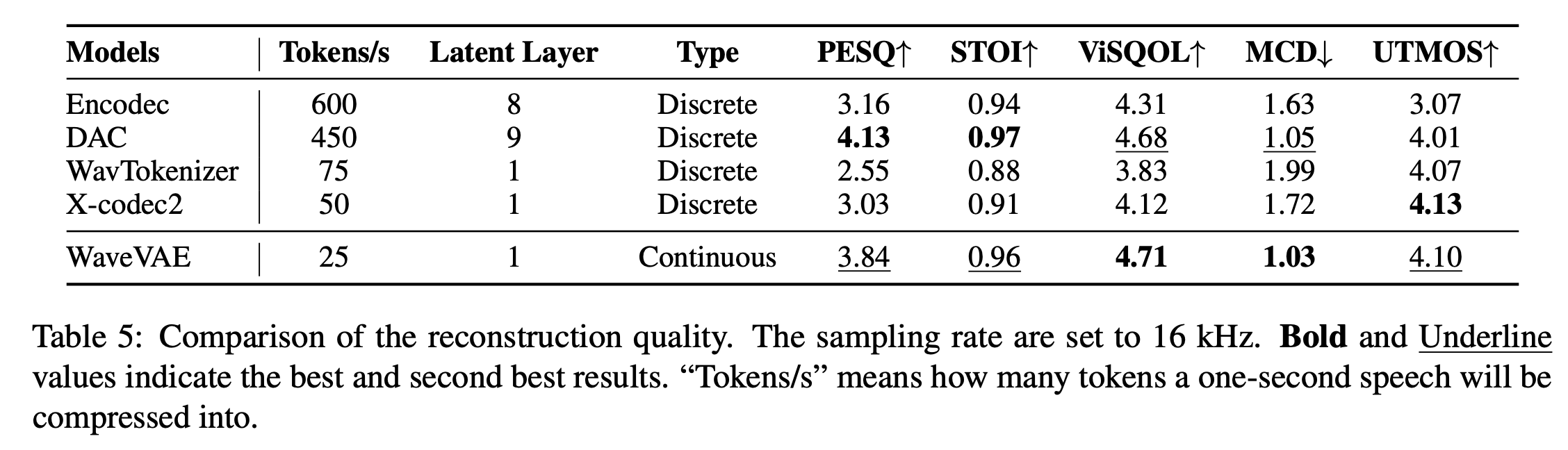

assets/fig/table_wavvae.png

0 → 100644

149 KB

checkpoints/README.md

0 → 100644

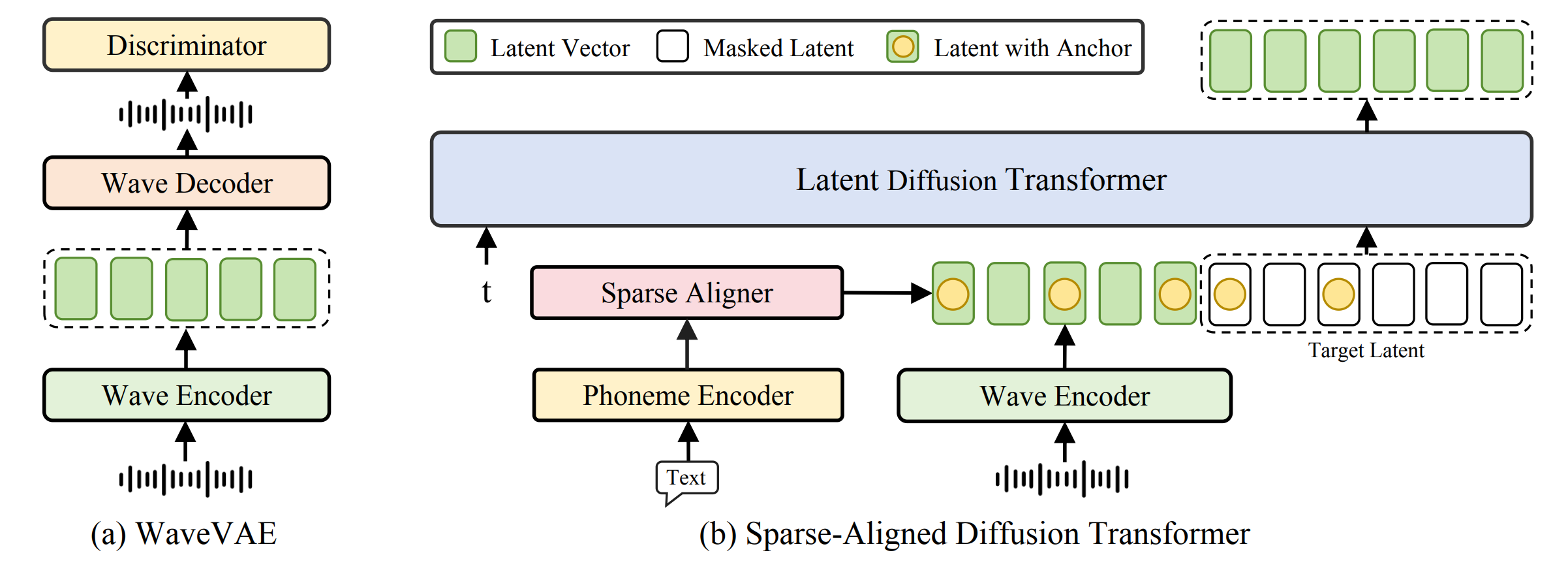

doc/MegaTTS3.png

0 → 100644

178 KB

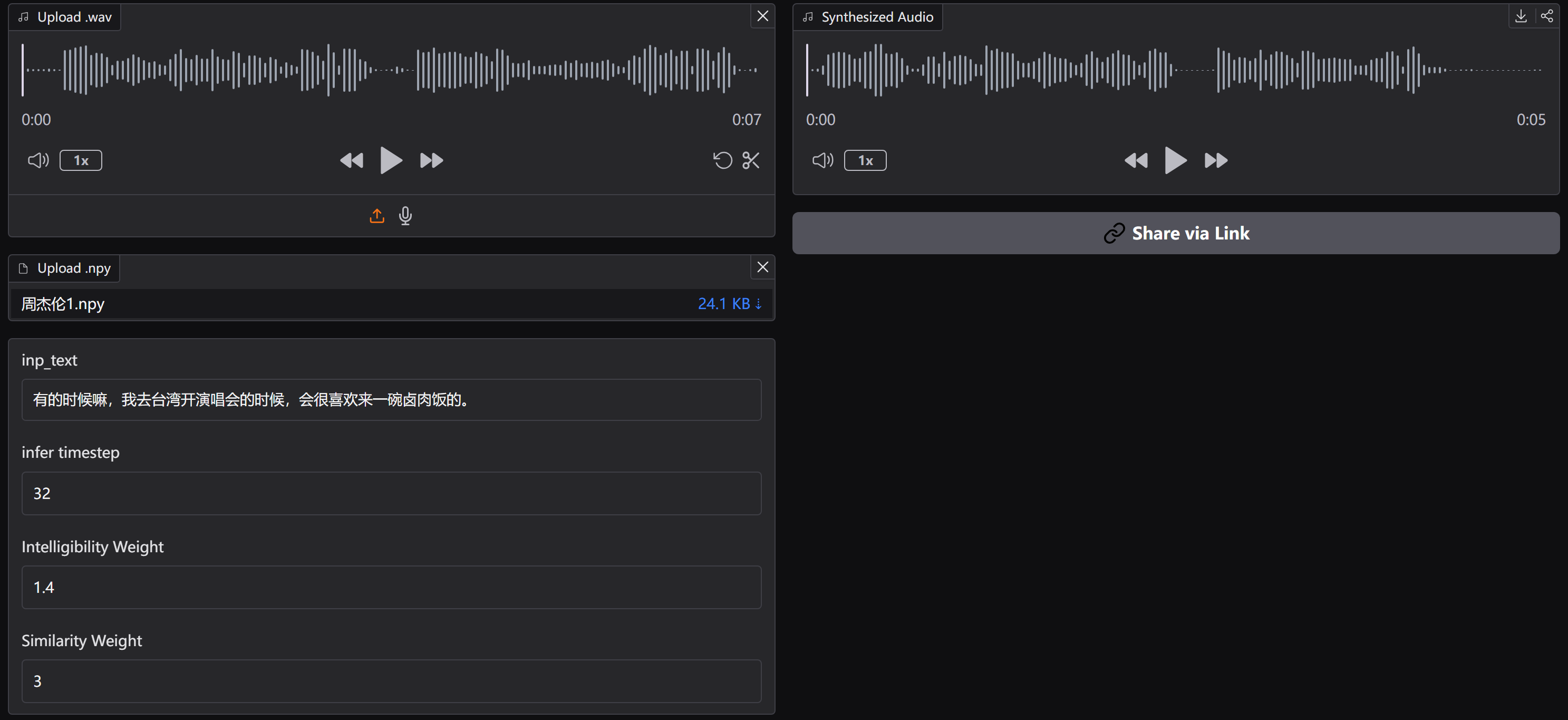

doc/result.png

0 → 100644

99 KB

docker/Dockerfile

0 → 100644

docker/requirements.txt

0 → 100644

ffmpeg-4.4.4.tar.gz

0 → 100644

File added

ffmpeg_apt.sh

0 → 100644

File added

icon.png

0 → 100644

64.4 KB