v1.0

LICENSE

0 → 100644

README.md

0 → 100644

README_origin.md

0 → 100644

assets/bear.png

0 → 100644

1.09 MB

assets/book.png

0 → 100644

650 KB

assets/car.png

0 → 100644

372 KB

assets/castle.png

0 → 100644

1.3 MB

assets/demo/video1.gif

0 → 100644

assets/demo/video2.gif

0 → 100644

assets/devil.png

0 → 100644

734 KB

assets/dragon.png

0 → 100644

599 KB

assets/earth.png

0 → 100644

1.03 MB

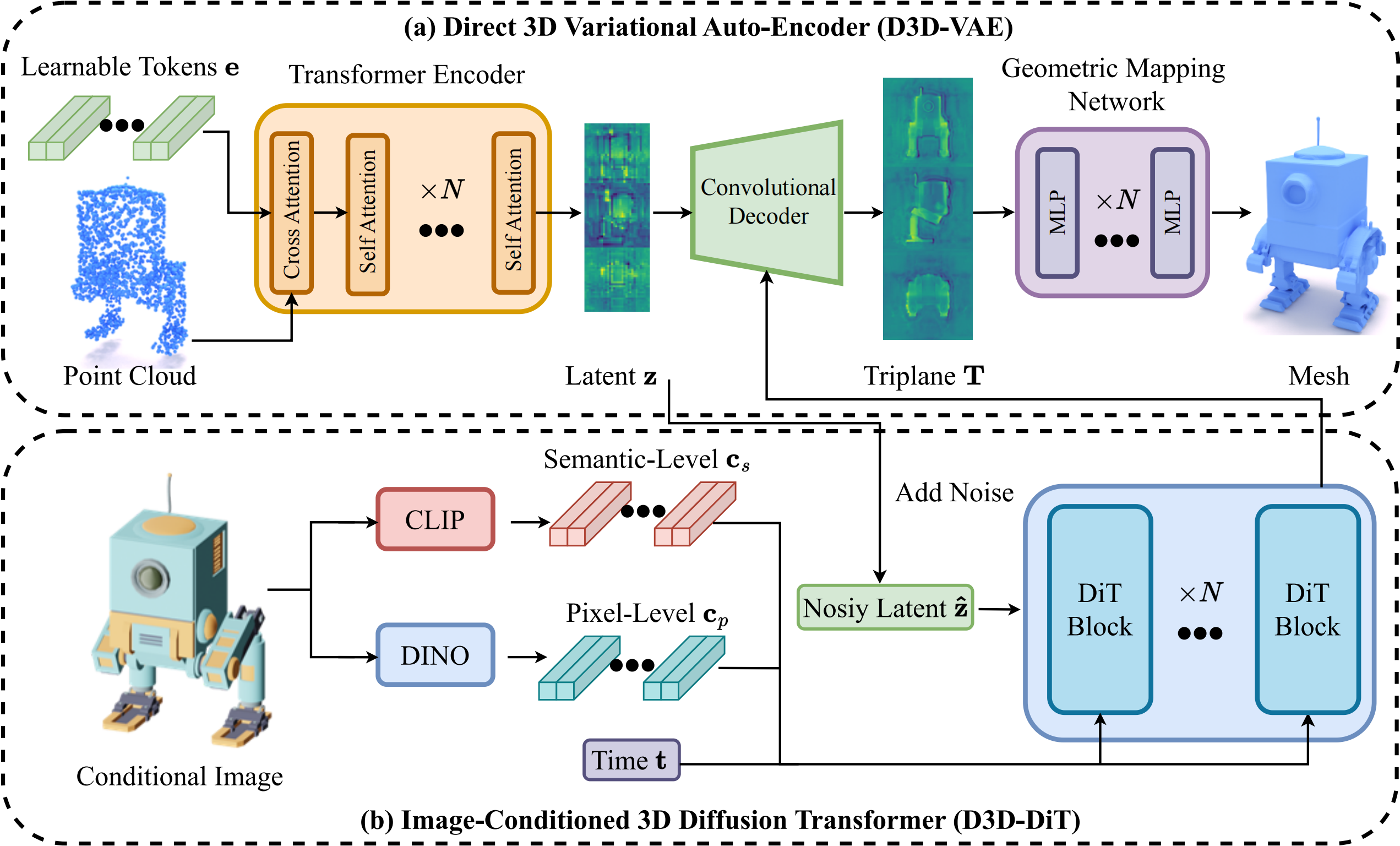

assets/figure/framework.png

0 → 100644

733 KB

assets/figure/teaser.gif

0 → 100644

assets/fish.png

0 → 100644

484 KB

assets/girl.png

0 → 100644

740 KB

assets/hamburger.png

0 → 100644

958 KB

assets/man.png

0 → 100644

490 KB

assets/panda.png

0 → 100644

625 KB