First commit

Showing

.gitignore

0 → 100644

LICENSE

0 → 100644

README.md

0 → 100644

d2/README.md

0 → 100644

d2/converter.py

0 → 100644

d2/detr/__init__.py

0 → 100644

d2/detr/config.py

0 → 100644

d2/detr/dataset_mapper.py

0 → 100644

d2/detr/detr.py

0 → 100644

d2/train_net.py

0 → 100644

datasets/__init__.py

0 → 100644

datasets/coco.py

0 → 100644

datasets/coco_eval.py

0 → 100644

datasets/coco_panoptic.py

0 → 100644

datasets/panoptic_eval.py

0 → 100644

datasets/transforms.py

0 → 100644

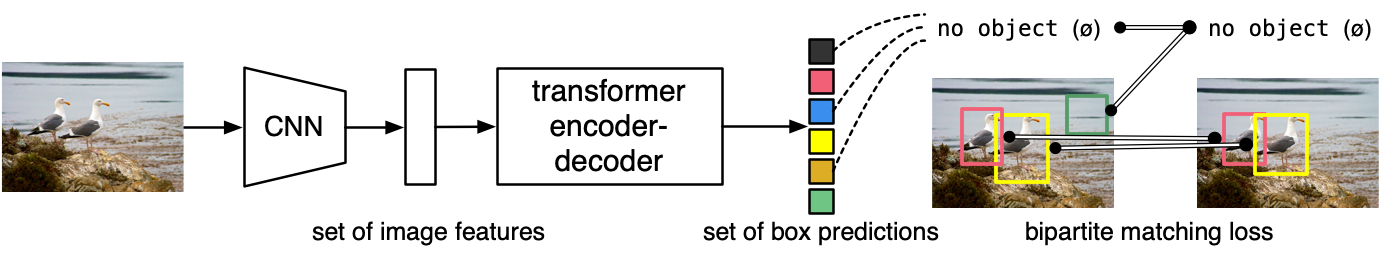

doc/DETR.png

0 → 100644

172 KB

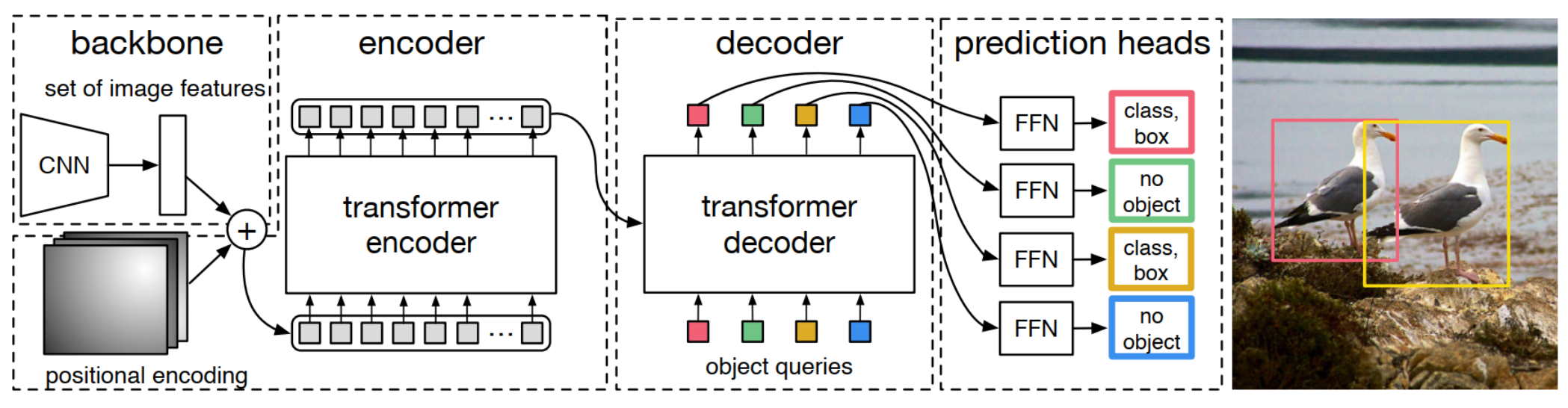

doc/models.png

0 → 100644

661 KB