feat: initial commit

.gitignore

0 → 100644

Dockerfile

0 → 100644

JanusFlow-1.3B/README.md

0 → 100644

JanusFlow-1.3B/app.py

0 → 100644

JanusFlow-1.3B/doge.png

0 → 100644

269 KB

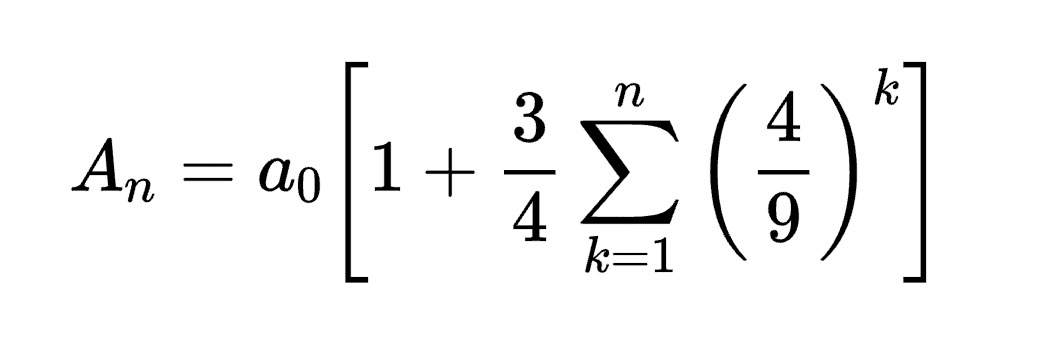

JanusFlow-1.3B/equation.png

0 → 100644

30.8 KB

hf_down.py

0 → 100644

start.sh

0 → 100644

启动器.ipynb

0 → 100644