Initial commit

fbshipit-source-id: afc575e8e7d8e2796a3f77d8b1c6c4fcb999558d

.flake8

0 → 100644

CODE_OF_CONDUCT.md

0 → 100644

CONTRIBUTING.md

0 → 100644

LICENSE

0 → 100644

README.md

0 → 100644

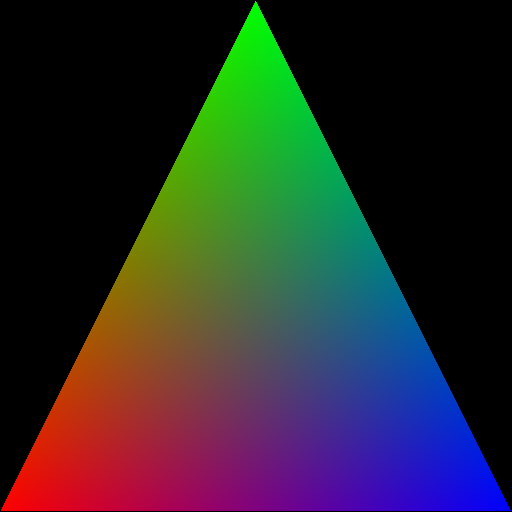

doc/hellow_triangle.png

0 → 100644

8.97 KB

drtk/__init__.py

0 → 100644

drtk/edge_grad_estimator.py

0 → 100644

drtk/edge_grad_ext.pyi

0 → 100644

drtk/interpolate.py

0 → 100644

drtk/interpolate_ext.pyi

0 → 100644

drtk/mipmap_grid_sample.py

0 → 100644

drtk/msi.py

0 → 100644

drtk/msi_ext.pyi

0 → 100644

drtk/rasterize.py

0 → 100644

drtk/rasterize_ext.pyi

0 → 100644

drtk/render.py

0 → 100644

drtk/render_ext.pyi

0 → 100644