OpenDAS / ColossalAI

Commit 155e2023 (unverified), authored Nov 11, 2022 by binmakeswell; committed by GitHub on Nov 11, 2022

[example] update auto_parallel img path (#1910)

Parent: d5c5bc21

Showing 4 changed files with 6 additions and 6 deletions:

examples/tutorial/README.md (+4 -4)
examples/tutorial/auto_parallel/README.md (+2 -2)
examples/tutorial/auto_parallel/imgs/gpt2_benchmark.png (+0 -0)
examples/tutorial/auto_parallel/imgs/resnet50_benchmark.png (+0 -0)

examples/tutorial/README.md (view file @ 155e2023)

@@ -29,14 +29,14 @@ quickly deploy large AI model training and inference, reducing large AI model tr
     - Try sequence parallelism with BERT
     - Combination of data/pipeline/sequence parallelism
     - Faster training and longer sequence length
-  - Large Batch Training Optimization
-    - Comparison of small/large batch size with SGD/LARS optimizer
-    - Acceleration from a larger batch size
   - Auto-Parallelism
     - Parallelism with normal non-distributed training code
     - Model tracing + solution solving + runtime communication inserting all in one auto-parallelism system
     - Try single program, multiple data (SPMD) parallel with auto-parallelism SPMD solver on ResNet50
-  - Fine-tuning and Serving for OPT
+  - Large Batch Training Optimization
+    - Comparison of small/large batch size with SGD/LARS optimizer
+    - Acceleration from a larger batch size
+  - Fine-tuning and Serving for OPT from Hugging Face
     - Try OPT model imported from Hugging Face with Colossal-AI
     - Fine-tuning OPT with limited hardware using ZeRO, Gemini and parallelism
     - Deploy the fine-tuned model to inference service

examples/tutorial/auto_parallel/README.md

View file @

155e2023

...

@@ -66,10 +66,10 @@ python demo_gpt2_medium.py

...

@@ -66,10 +66,10 @@ python demo_gpt2_medium.py

There are some results for your reference

There are some results for your reference

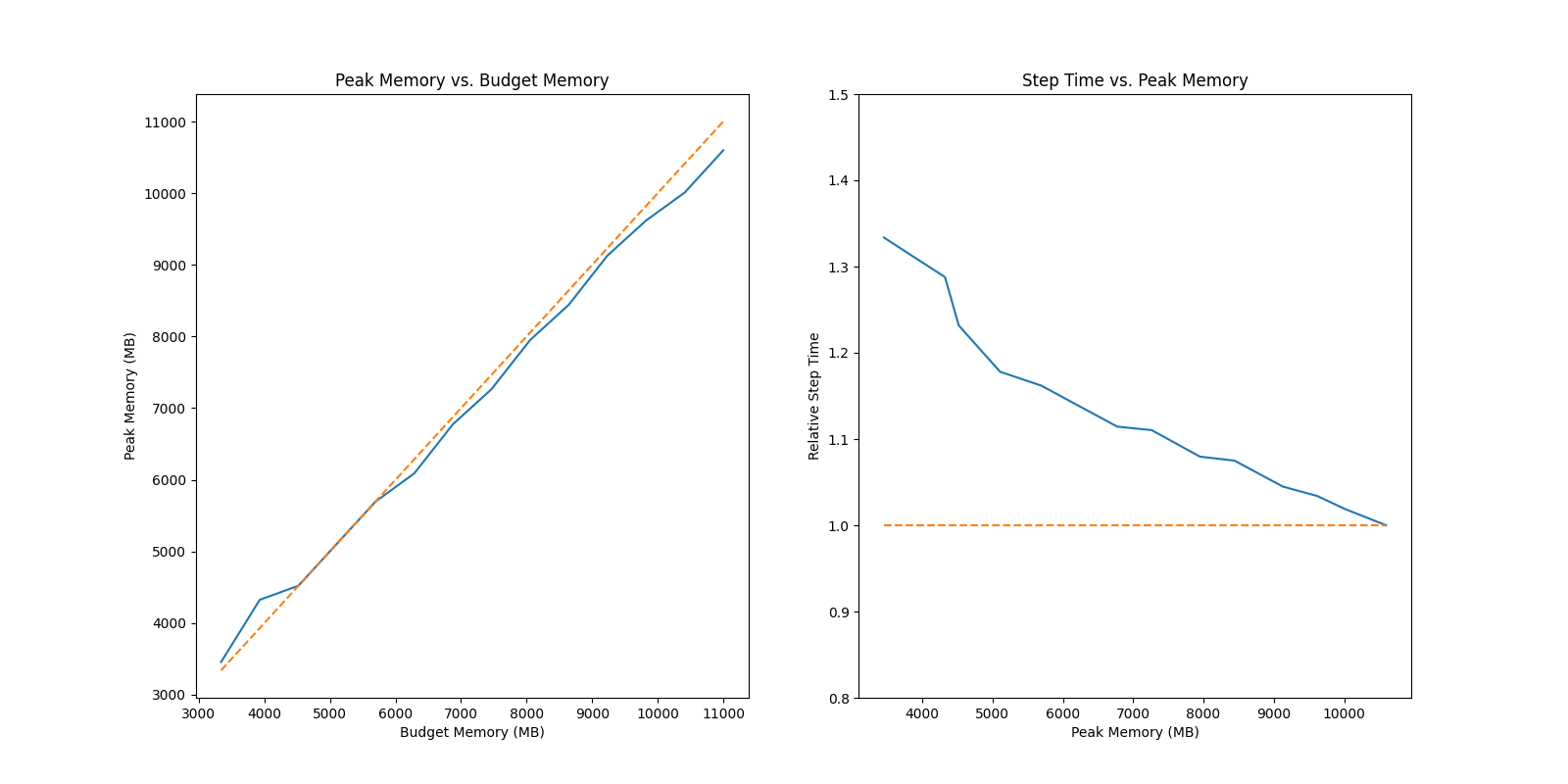

### ResNet 50

### ResNet 50

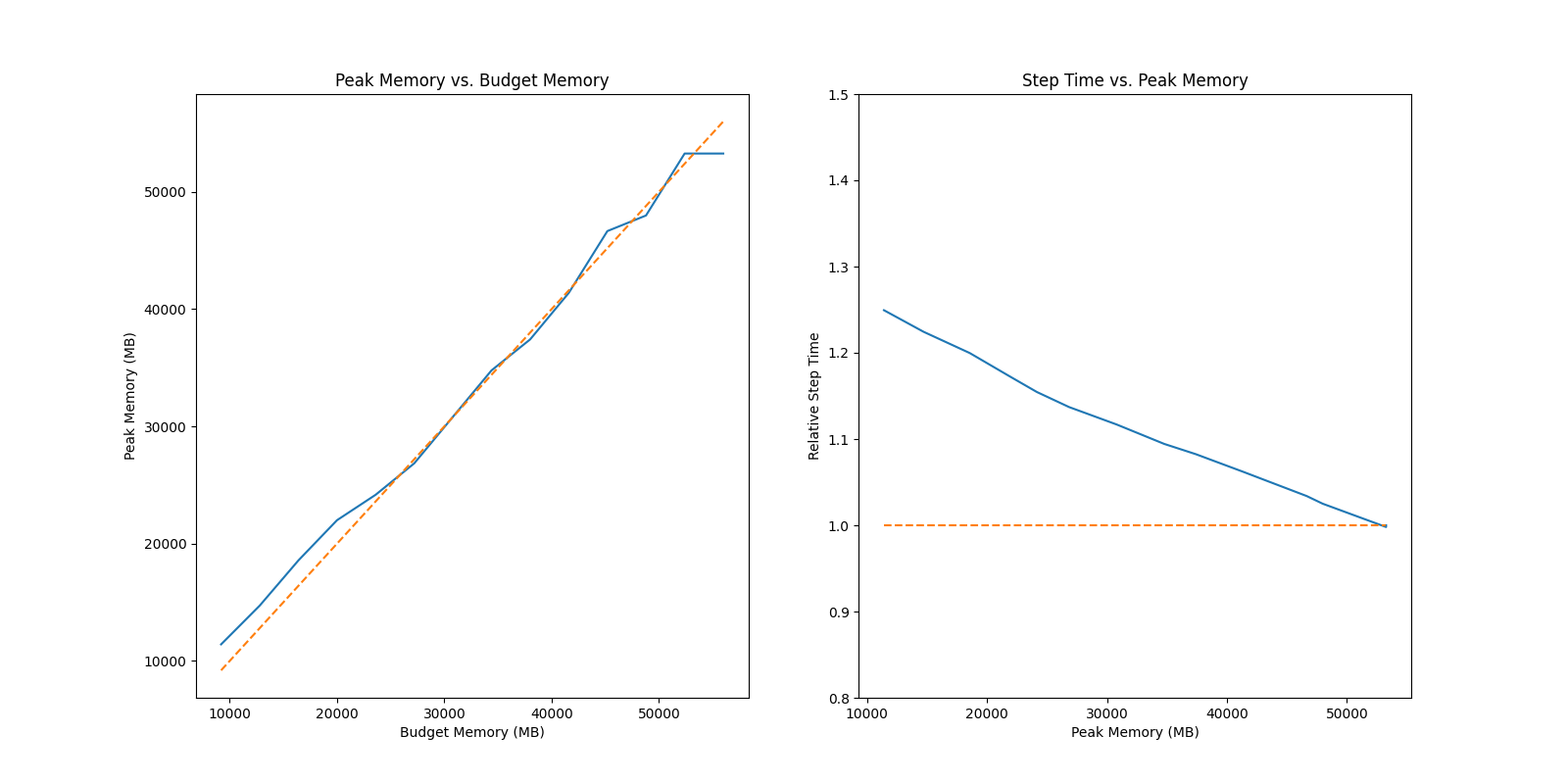

### GPT2 Medium

### GPT2 Medium

We also prepare the demo

`demo_resnet152.py`

to manifest the benefit of auto activation with large batch, the usage is listed as follows

We also prepare the demo

`demo_resnet152.py`

to manifest the benefit of auto activation with large batch, the usage is listed as follows

```

bash

```

bash

...

...

examples/tutorial/auto_parallel/imgs/gpt2_benchmark.png: deleted (100644 → 0), 65.3 KB (view file @ d5c5bc21)

examples/tutorial/auto_parallel/imgs/resnet50_benchmark.png: deleted (100644 → 0), 70.8 KB (view file @ d5c5bc21)
