Merge branch 'dygraph' into dygraph

doc/table/ppstructure.GIF

0 → 100644

2.49 MB

doc/table/result_all.jpg

0 → 100644

521 KB

doc/table/result_text.jpg

0 → 100644

146 KB

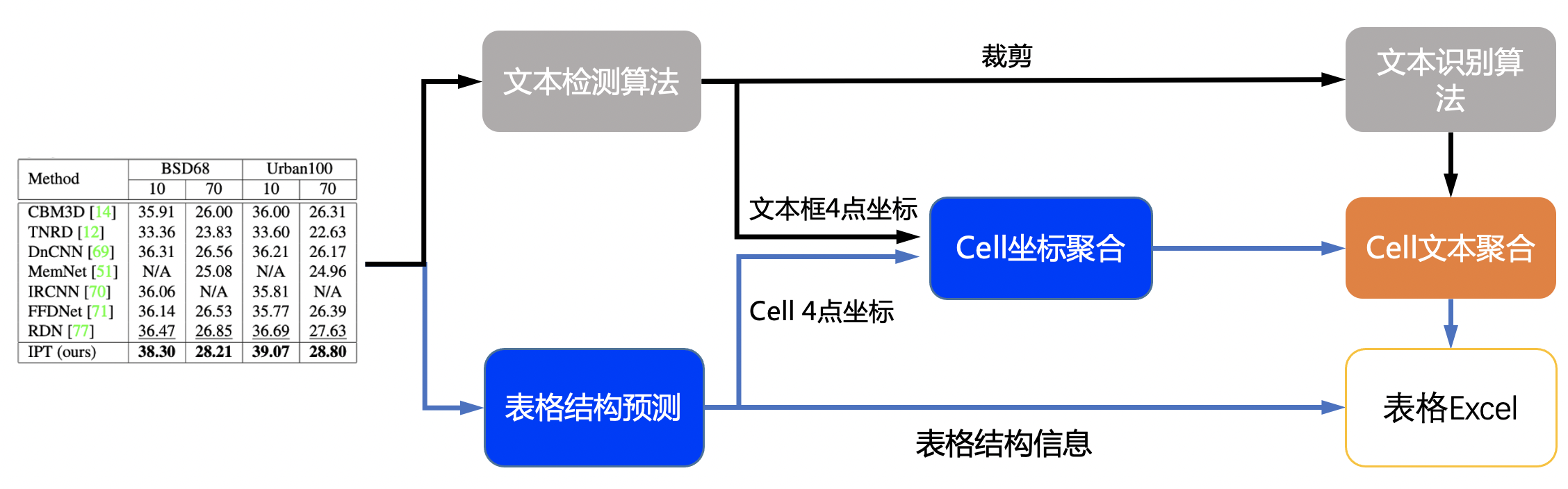

doc/table/table.jpg

0 → 100644

24.1 KB

552 KB

416 KB

ppocr/data/pubtab_dataset.py

0 → 100644