Merge remote-tracking branch 'origin/dygraph' into dy1

921 KB

doc/pgnet_framework.png

0 → 100644

242 KB

doc/table/1.png

0 → 100644

758 KB

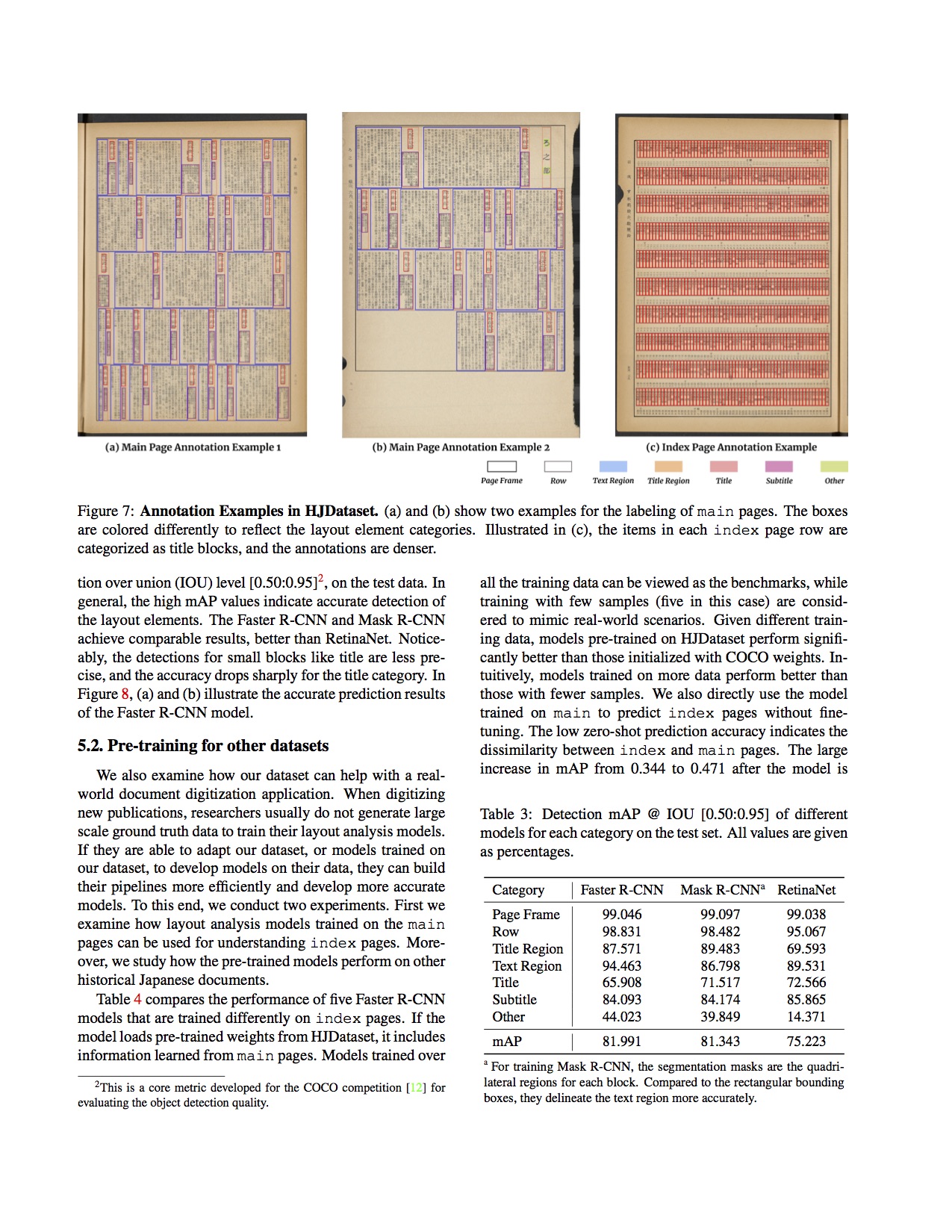

doc/table/layout.jpg

0 → 100644

671 KB

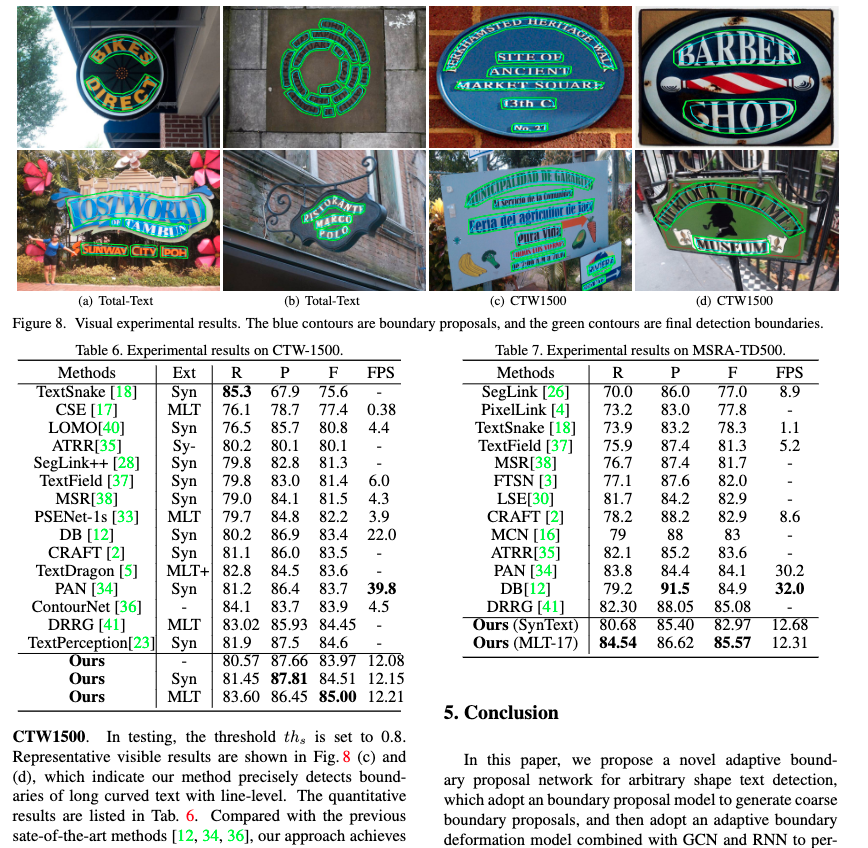

doc/table/paper-image.jpg

0 → 100644

671 KB

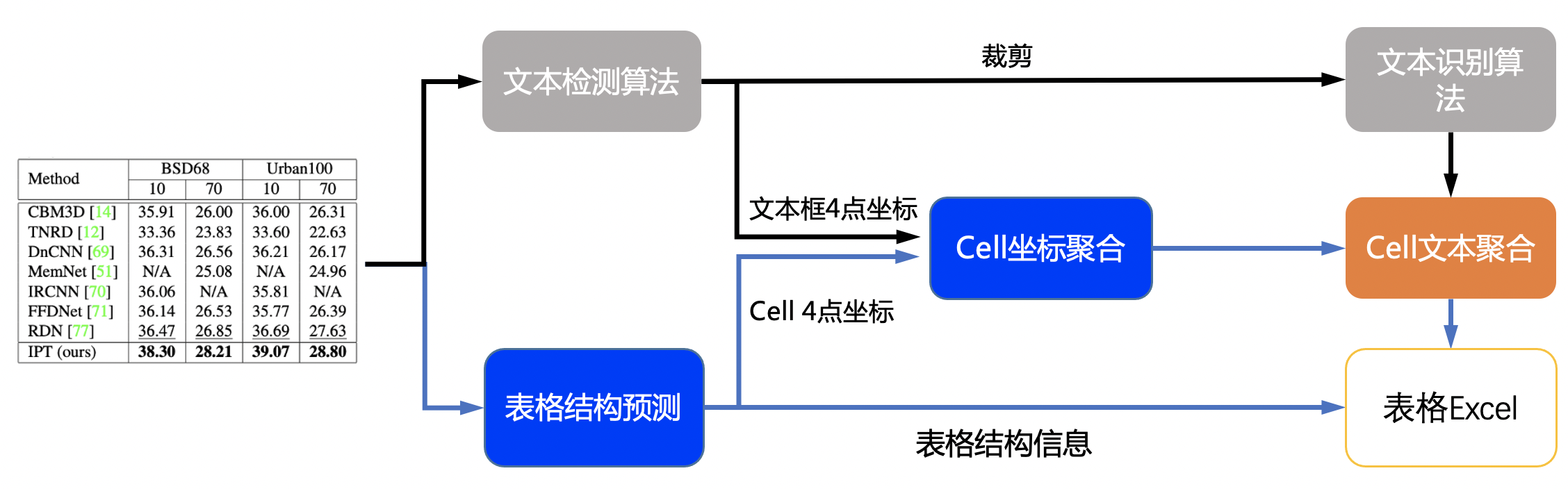

doc/table/pipeline.jpg

0 → 100644

1.46 MB

doc/table/pipeline_en.jpg

0 → 100644

1.45 MB

doc/table/ppstructure.GIF

0 → 100644

2.49 MB

doc/table/result_all.jpg

0 → 100644

521 KB

doc/table/result_text.jpg

0 → 100644

146 KB

doc/table/table.jpg

0 → 100644

58 KB

552 KB

416 KB