Fixed merge conflict in README.md

deploy/lite/readme_ch.md

0 → 100644

deploy/paddlejs/README.md

0 → 100644

deploy/paddlejs/README_ch.md

0 → 100644

554 KB

doc/PPOCR.pdf

deleted

100644 → 0

File deleted

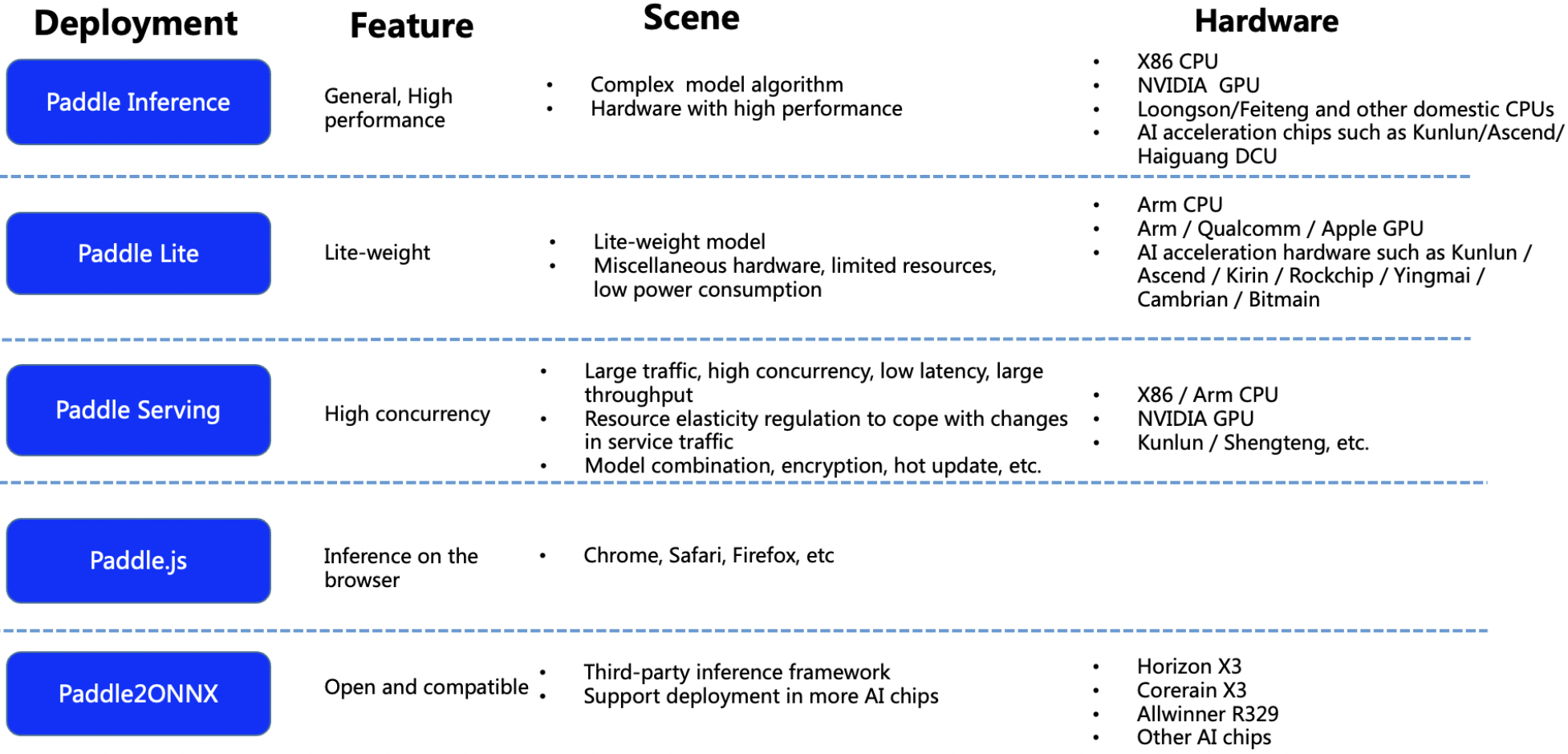

doc/deployment.png

0 → 100644

992 KB

doc/deployment_en.png

0 → 100644

650 KB

doc/doc_ch/algorithm.md

0 → 100644

doc/doc_ch/application.md

0 → 100644