Fixed merge conflict in README.md

Showing

doc/doc_ch/ocr_book.md

0 → 100644

doc/doc_ch/table_datasets.md

0 → 100644

doc/doc_en/algorithm_en.md

0 → 100644

doc/doc_en/ocr_book_en.md

0 → 100644

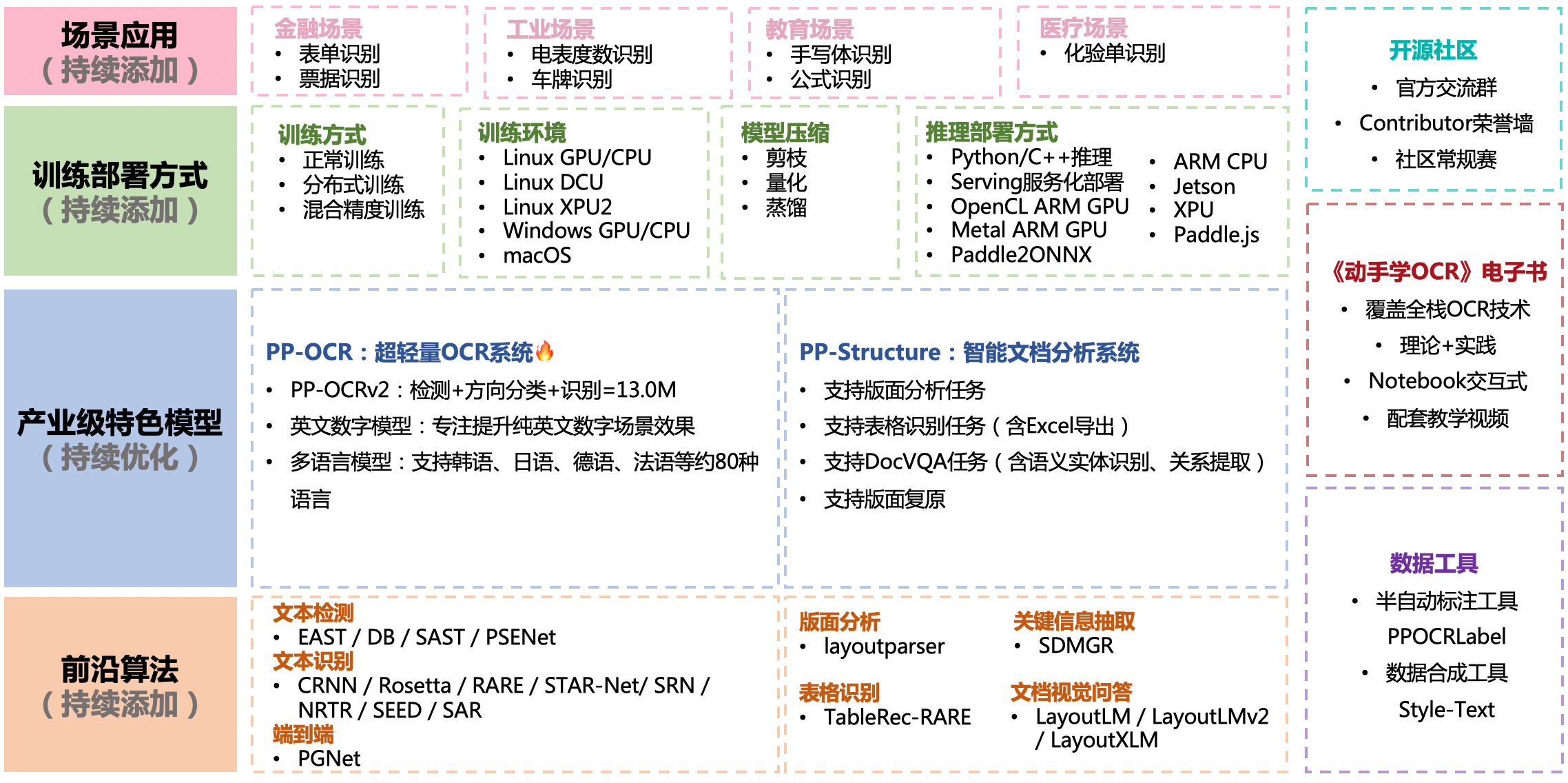

doc/features.png

0 → 100644

1.15 MB

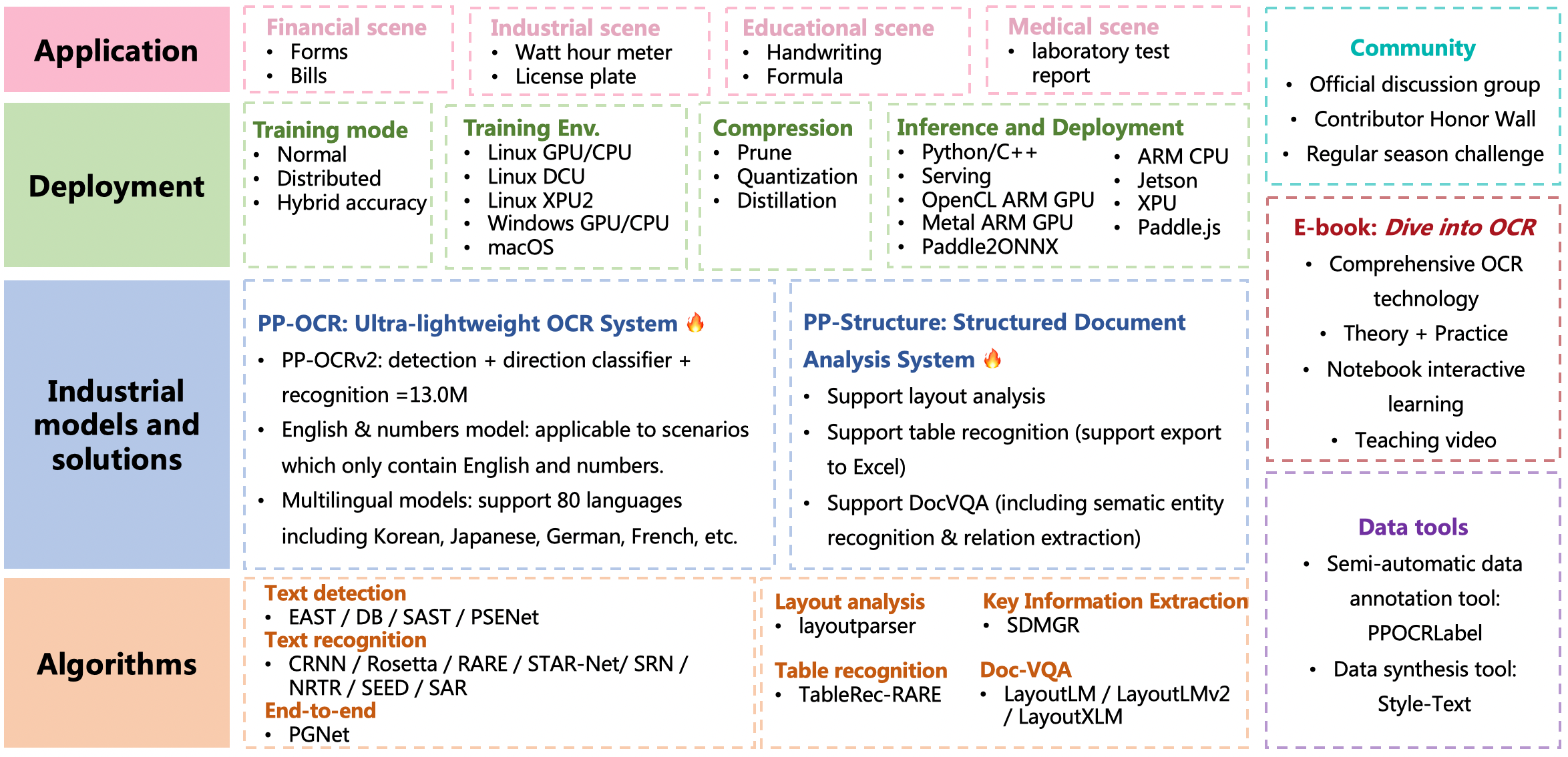

doc/features_en.png

0 → 100644

1.19 MB

| W: | H:

| W: | H: