Skip to content

GitLab

Menu

Projects

Groups

Snippets

Loading...

Help

Help

Support

Community forum

Keyboard shortcuts

?

Submit feedback

Contribute to GitLab

Sign in / Register

Toggle navigation

Menu

Open sidebar

ModelZoo

ResNet50_tensorflow

Commits

7adc6ec1

Commit

7adc6ec1

authored

May 11, 2021

by

Dan Kondratyuk

Committed by

A. Unique TensorFlower

May 11, 2021

Browse files

Internal change

PiperOrigin-RevId: 373155894

parent

6d6cd4ac

Changes

28

Hide whitespace changes

Inline

Side-by-side

Showing

20 changed files

with

3441 additions

and

0 deletions

+3441

-0

official/vision/beta/projects/movinet/README.google.md

official/vision/beta/projects/movinet/README.google.md

+13

-0

official/vision/beta/projects/movinet/README.md

official/vision/beta/projects/movinet/README.md

+163

-0

official/vision/beta/projects/movinet/configs/movinet.py

official/vision/beta/projects/movinet/configs/movinet.py

+138

-0

official/vision/beta/projects/movinet/configs/movinet_test.py

...cial/vision/beta/projects/movinet/configs/movinet_test.py

+42

-0

official/vision/beta/projects/movinet/configs/yaml/movinet_a0_k600_8x8.yaml

...ta/projects/movinet/configs/yaml/movinet_a0_k600_8x8.yaml

+73

-0

official/vision/beta/projects/movinet/configs/yaml/movinet_a0_k600_cpu_local.yaml

...jects/movinet/configs/yaml/movinet_a0_k600_cpu_local.yaml

+58

-0

official/vision/beta/projects/movinet/configs/yaml/movinet_a0_stream_k600_8x8.yaml

...ects/movinet/configs/yaml/movinet_a0_stream_k600_8x8.yaml

+74

-0

official/vision/beta/projects/movinet/configs/yaml/movinet_a1_k600_8x8.yaml

...ta/projects/movinet/configs/yaml/movinet_a1_k600_8x8.yaml

+73

-0

official/vision/beta/projects/movinet/configs/yaml/movinet_a1_stream_k600_8x8.yaml

...ects/movinet/configs/yaml/movinet_a1_stream_k600_8x8.yaml

+74

-0

official/vision/beta/projects/movinet/configs/yaml/movinet_a2_k600_8x8.yaml

...ta/projects/movinet/configs/yaml/movinet_a2_k600_8x8.yaml

+73

-0

official/vision/beta/projects/movinet/configs/yaml/movinet_a2_stream_k600_8x8.yaml

...ects/movinet/configs/yaml/movinet_a2_stream_k600_8x8.yaml

+74

-0

official/vision/beta/projects/movinet/configs/yaml/movinet_a3_k600_8x8.yaml

...ta/projects/movinet/configs/yaml/movinet_a3_k600_8x8.yaml

+73

-0

official/vision/beta/projects/movinet/configs/yaml/movinet_a3_stream_k600_8x8.yaml

...ects/movinet/configs/yaml/movinet_a3_stream_k600_8x8.yaml

+73

-0

official/vision/beta/projects/movinet/configs/yaml/movinet_a4_k600_8x8.yaml

...ta/projects/movinet/configs/yaml/movinet_a4_k600_8x8.yaml

+73

-0

official/vision/beta/projects/movinet/configs/yaml/movinet_a5_k600_8x8.yaml

...ta/projects/movinet/configs/yaml/movinet_a5_k600_8x8.yaml

+73

-0

official/vision/beta/projects/movinet/configs/yaml/movinet_t0_k600_8x8.yaml

...ta/projects/movinet/configs/yaml/movinet_t0_k600_8x8.yaml

+72

-0

official/vision/beta/projects/movinet/configs/yaml/movinet_t0_stream_k600_8x8.yaml

...ects/movinet/configs/yaml/movinet_t0_stream_k600_8x8.yaml

+72

-0

official/vision/beta/projects/movinet/export_saved_model.py

official/vision/beta/projects/movinet/export_saved_model.py

+151

-0

official/vision/beta/projects/movinet/modeling/movinet.py

official/vision/beta/projects/movinet/modeling/movinet.py

+529

-0

official/vision/beta/projects/movinet/modeling/movinet_layers.py

...l/vision/beta/projects/movinet/modeling/movinet_layers.py

+1470

-0

No files found.

official/vision/beta/projects/movinet/README.google.md

0 → 100644

View file @

7adc6ec1

# Mobile Video Networks (MoViNets)

Design doc: go/movinet

## Getting Started

```

shell

bash third_party/tensorflow_models/official/vision/beta/projects/movinet/google/run_train.sh

```

## Results

Results are tracked at go/movinet-experiments.

official/vision/beta/projects/movinet/README.md

0 → 100644

View file @

7adc6ec1

# Mobile Video Networks (MoViNets)

[

](https://colab.research.google.com/github/tensorflow/models/tree/master/official/vision/beta/projects/movinet/movinet_tutorial.ipynb)

[

](https://tfhub.dev/google/collections/movinet)

[

](https://arxiv.org/abs/2103.11511)

This repository is the official implementation of

[

MoViNets: Mobile Video Networks for Efficient Video

Recognition

](

https://arxiv.org/abs/2103.11511

)

.

## Description

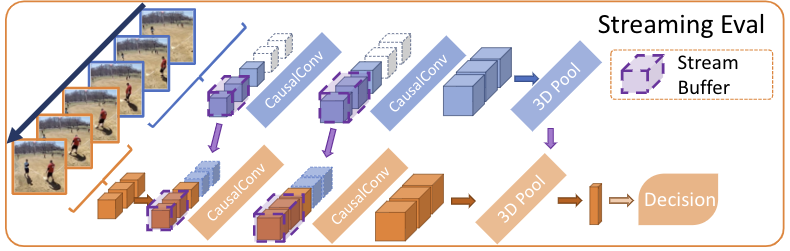

Mobile Video Networks (MoViNets) are efficient video classification models

runnable on mobile devices. MoViNets demonstrate state-of-the-art accuracy and

efficiency on several large-scale video action recognition datasets.

There is a large gap between video model performance of accurate models and

efficient models for video action recognition. On the one hand, 2D MobileNet

CNNs are fast and can operate on streaming video in real time, but are prone to

be noisy and are inaccurate. On the other hand, 3D CNNs are accurate, but are

memory and computation intensive and cannot operate on streaming video.

MoViNets bridge this gap, producing:

-

State-of-the art efficiency and accuracy across the model family (MoViNet-A0

to A6).

-

Streaming models with 3D causal convolutions substantially reducing memory

usage.

-

Temporal ensembles of models to boost efficiency even higher.

Small MoViNets demonstrate higher efficiency and accuracy than MobileNetV3 for

video action recognition (Kinetics 600).

MoViNets also improve efficiency by outputting high-quality predictions with a

single frame, as opposed to the traditional multi-clip evaluation approach.

[

](https://arxiv.org/pdf/2103.11511.pdf)

[

](https://arxiv.org/pdf/2103.11511.pdf)

## History

-

Initial Commit.

## Authors and Maintainers

*

Dan Kondratyuk (

[

@hyperparticle

](

https://github.com/hyperparticle

)

)

*

Liangzhe Yuan (

[

@yuanliangzhe

](

https://github.com/yuanliangzhe

)

)

*

Yeqing Li (

[

@yeqingli

](

https://github.com/yeqingli

)

)

## Table of Contents

-

[

Requirements

](

#requirements

)

-

[

Results and Pretrained Weights

](

#results-and-pretrained-weights

)

-

[

Kinetics 600

](

#kinetics-600

)

-

[

Training and Evaluation

](

#training-and-evaluation

)

-

[

References

](

#references

)

-

[

License

](

#license

)

-

[

Citation

](

#citation

)

## Requirements

[

](https://github.com/tensorflow/tensorflow/releases/tag/v2.1.0)

[

](https://www.python.org/downloads/release/python-360/)

To install requirements:

```

shell

pip

install

-r

requirements.txt

```

## Results and Pretrained Weights

[

](https://tfhub.dev/google/collections/movinet)

[

](https://tensorboard.dev/experiment/Q07RQUlVRWOY4yDw3SnSkA/)

### Kinetics 600

[

](https://arxiv.org/pdf/2103.11511.pdf)

[

tensorboard.dev summary

](

https://tensorboard.dev/experiment/Q07RQUlVRWOY4yDw3SnSkA/

)

of training runs across all models.

The table below summarizes the performance of each model and provides links to

download pretrained models. All models are evaluated on single clips with the

same resolution as training.

Streaming MoViNets will be added in the future.

| Model Name | Top-1 Accuracy | Top-5 Accuracy | GFLOPs

\*

| Checkpoint | TF Hub SavedModel |

|------------|----------------|----------------|----------|------------|-------------------|

| MoViNet-A0-Base | 71.41 | 90.91 | 2.7 |

[

checkpoint (12 MiB)

](

https://storage.googleapis.com/tf_model_garden/vision/movinet/movinet_a0_base.tar.gz

)

|

[

tfhub

](

https://tfhub.dev/tensorflow/movinet/a0/base/kinetics-600/classification/

)

|

| MoViNet-A1-Base | 76.01 | 93.28 | 6.0 |

[

checkpoint (18 MiB)

](

https://storage.googleapis.com/tf_model_garden/vision/movinet/movinet_a1_base.tar.gz

)

|

[

tfhub

](

https://tfhub.dev/tensorflow/movinet/a1/base/kinetics-600/classification/

)

|

| MoViNet-A2-Base | 78.03 | 93.99 | 10 |

[

checkpoint (20 MiB)

](

https://storage.googleapis.com/tf_model_garden/vision/movinet/movinet_a2_base.tar.gz

)

|

[

tfhub

](

https://tfhub.dev/tensorflow/movinet/a2/base/kinetics-600/classification/

)

|

| MoViNet-A3-Base | 81.22 | 95.35 | 57 |

[

checkpoint (29 MiB)

](

https://storage.googleapis.com/tf_model_garden/vision/movinet/movinet_a3_base.tar.gz

)

|

[

tfhub

](

https://tfhub.dev/tensorflow/movinet/a3/base/kinetics-600/classification/

)

|

| MoViNet-A4-Base | 82.96 | 95.98 | 110 |

[

checkpoint (44 MiB)

](

https://storage.googleapis.com/tf_model_garden/vision/movinet/movinet_a4_base.tar.gz

)

|

[

tfhub

](

https://tfhub.dev/tensorflow/movinet/a4/base/kinetics-600/classification/

)

|

| MoViNet-A5-Base | 84.22 | 96.36 | 280 |

[

checkpoint (72 MiB)

](

https://storage.googleapis.com/tf_model_garden/vision/movinet/movinet_a5_base.tar.gz

)

|

[

tfhub

](

https://tfhub.dev/tensorflow/movinet/a5/base/kinetics-600/classification/

)

|

\*

GFLOPs per video on Kinetics 600.

## Training and Evaluation

Please check out our

[

Colab Notebook

](

https://colab.research.google.com/github/tensorflow/models/tree/master/official/vision/beta/projects/movinet/movinet_tutorial.ipynb

)

to get started with MoViNets.

Run this command line for continuous training and evaluation.

```

shell

MODE

=

train_and_eval

# Can also be 'train'

CONFIG_FILE

=

official/vision/beta/projects/movinet/configs/yaml/movinet_a0_k600_8x8.yaml

python3 official/vision/beta/projects/movinet/train.py

\

--experiment

=

movinet_kinetics600

\

--mode

=

${

MODE

}

\

--model_dir

=

/tmp/movinet/

\

--config_file

=

${

CONFIG_FILE

}

\

--params_override

=

""

\

--gin_file

=

""

\

--gin_params

=

""

\

--tpu

=

""

\

--tf_data_service

=

""

```

Run this command line for evaluation.

```

shell

MODE

=

eval

# Can also be 'eval_continuous' for use during training

CONFIG_FILE

=

official/vision/beta/projects/movinet/configs/yaml/movinet_a0_k600_8x8.yaml

python3 official/vision/beta/projects/movinet/train.py

\

--experiment

=

movinet_kinetics600

\

--mode

=

${

MODE

}

\

--model_dir

=

/tmp/movinet/

\

--config_file

=

${

CONFIG_FILE

}

\

--params_override

=

""

\

--gin_file

=

""

\

--gin_params

=

""

\

--tpu

=

""

\

--tf_data_service

=

""

```

## References

-

[

Kinetics Datasets

](

https://deepmind.com/research/open-source/kinetics

)

-

[

MoViNets (Mobile Video Networks)

](

https://arxiv.org/abs/2103.11511

)

## License

[

](https://opensource.org/licenses/Apache-2.0)

This project is licensed under the terms of the

**Apache License 2.0**

.

## Citation

If you want to cite this code in your research paper, please use the following

information.

```

@article{kondratyuk2021movinets,

title={MoViNets: Mobile Video Networks for Efficient Video Recognition},

author={Dan Kondratyuk, Liangzhe Yuan, Yandong Li, Li Zhang, Matthew Brown, and Boqing Gong},

journal={arXiv preprint arXiv:2103.11511},

year={2021}

}

```

official/vision/beta/projects/movinet/configs/movinet.py

0 → 100644

View file @

7adc6ec1

# Copyright 2021 The TensorFlow Authors. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

"""Definitions for MoViNet structures.

Reference: "MoViNets: Mobile Video Networks for Efficient Video Recognition"

https://arxiv.org/pdf/2103.11511.pdf

MoViNets are efficient video classification networks that are part of a model

family, ranging from the smallest model, MoViNet-A0, to the largest model,

MoViNet-A6. Each model has various width, depth, input resolution, and input

frame-rate associated with them. See the main paper for more details.

"""

import

dataclasses

from

official.core

import

config_definitions

as

cfg

from

official.core

import

exp_factory

from

official.modeling

import

hyperparams

from

official.vision.beta.configs

import

backbones_3d

from

official.vision.beta.configs

import

common

from

official.vision.beta.configs.google

import

video_classification

@

dataclasses

.

dataclass

class

Movinet

(

hyperparams

.

Config

):

"""Backbone config for Base MoViNet."""

model_id

:

str

=

'a0'

causal

:

bool

=

False

use_positional_encoding

:

bool

=

False

# Choose from ['3d', '2plus1d', '3d_2plus1d']

# 3d: default 3D convolution

# 2plus1d: (2+1)D convolution with Conv2D (2D reshaping)

# 3d_2plus1d: (2+1)D convolution with Conv3D (no 2D reshaping)

conv_type

:

str

=

'3d'

stochastic_depth_drop_rate

:

float

=

0.2

@

dataclasses

.

dataclass

class

MovinetA0

(

Movinet

):

"""Backbone config for MoViNet-A0.

Represents the smallest base MoViNet searched by NAS.

Reference: https://arxiv.org/pdf/2103.11511.pdf

"""

model_id

:

str

=

'a0'

@

dataclasses

.

dataclass

class

MovinetA1

(

Movinet

):

"""Backbone config for MoViNet-A1."""

model_id

:

str

=

'a1'

@

dataclasses

.

dataclass

class

MovinetA2

(

Movinet

):

"""Backbone config for MoViNet-A2."""

model_id

:

str

=

'a2'

@

dataclasses

.

dataclass

class

MovinetA3

(

Movinet

):

"""Backbone config for MoViNet-A3."""

model_id

:

str

=

'a3'

@

dataclasses

.

dataclass

class

MovinetA4

(

Movinet

):

"""Backbone config for MoViNet-A4."""

model_id

:

str

=

'a4'

@

dataclasses

.

dataclass

class

MovinetA5

(

Movinet

):

"""Backbone config for MoViNet-A5.

Represents the largest base MoViNet searched by NAS.

"""

model_id

:

str

=

'a5'

@

dataclasses

.

dataclass

class

MovinetT0

(

Movinet

):

"""Backbone config for MoViNet-T0.

MoViNet-T0 is a smaller version of MoViNet-A0 for even faster processing.

"""

model_id

:

str

=

't0'

@

dataclasses

.

dataclass

class

Backbone3D

(

backbones_3d

.

Backbone3D

):

"""Configuration for backbones.

Attributes:

type: 'str', type of backbone be used, on the of fields below.

movinet: movinet backbone config.

"""

type

:

str

=

'movinet'

movinet

:

Movinet

=

Movinet

()

@

dataclasses

.

dataclass

class

MovinetModel

(

video_classification

.

VideoClassificationModel

):

"""The MoViNet model config."""

model_type

:

str

=

'movinet'

backbone

:

Backbone3D

=

Backbone3D

()

norm_activation

:

common

.

NormActivation

=

common

.

NormActivation

(

activation

=

'swish'

,

norm_momentum

=

0.99

,

norm_epsilon

=

1e-3

,

use_sync_bn

=

True

)

output_states

:

bool

=

False

@

exp_factory

.

register_config_factory

(

'movinet_kinetics600'

)

def

movinet_kinetics600

()

->

cfg

.

ExperimentConfig

:

"""Video classification on Videonet with MoViNet backbone."""

exp

=

video_classification

.

video_classification_kinetics600

()

exp

.

task

.

train_data

.

dtype

=

'bfloat16'

exp

.

task

.

validation_data

.

dtype

=

'bfloat16'

model

=

MovinetModel

()

exp

.

task

.

model

=

model

return

exp

official/vision/beta/projects/movinet/configs/movinet_test.py

0 → 100644

View file @

7adc6ec1

# Copyright 2021 The TensorFlow Authors. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

"""Tests for movinet video classification."""

from

absl.testing

import

parameterized

import

tensorflow

as

tf

from

official.core

import

config_definitions

as

cfg

from

official.core

import

exp_factory

from

official.vision.beta.configs

import

video_classification

as

exp_cfg

from

official.vision.beta.projects.movinet.configs

import

movinet

class

MovinetConfigTest

(

tf

.

test

.

TestCase

,

parameterized

.

TestCase

):

@

parameterized

.

parameters

(

(

'movinet_kinetics600'

,),)

def

test_video_classification_configs

(

self

,

config_name

):

config

=

exp_factory

.

get_exp_config

(

config_name

)

self

.

assertIsInstance

(

config

,

cfg

.

ExperimentConfig

)

self

.

assertIsInstance

(

config

.

task

,

exp_cfg

.

VideoClassificationTask

)

self

.

assertIsInstance

(

config

.

task

.

model

,

movinet

.

MovinetModel

)

self

.

assertIsInstance

(

config

.

task

.

train_data

,

exp_cfg

.

DataConfig

)

config

.

task

.

train_data

.

is_training

=

None

with

self

.

assertRaises

(

KeyError

):

config

.

validate

()

if

__name__

==

'__main__'

:

tf

.

test

.

main

()

official/vision/beta/projects/movinet/configs/yaml/movinet_a0_k600_8x8.yaml

0 → 100644

View file @

7adc6ec1

# Video classification on Kinetics-600 using MoViNet-A0 backbone.

# --experiment_type=movinet_kinetics600

# Achieves 71.65% Top-1 accuracy.

# http://mldash/experiments/4591693621833944103

runtime

:

distribution_strategy

:

'

tpu'

mixed_precision_dtype

:

'

bfloat16'

task

:

losses

:

l2_weight_decay

:

0.00003

label_smoothing

:

0.1

model

:

backbone

:

movinet

:

model_id

:

'

a0'

stochastic_depth_drop_rate

:

0.2

norm_activation

:

use_sync_bn

:

true

dropout_rate

:

0.5

train_data

:

name

:

kinetics600

variant_name

:

rgb

feature_shape

:

!!python/tuple

-

50

-

172

-

172

-

3

temporal_stride

:

5

random_stride_range

:

1

global_batch_size

:

1024

dtype

:

'

bfloat16'

shuffle_buffer_size

:

1024

min_image_size

:

192

aug_max_area_ratio

:

1.0

aug_max_aspect_ratio

:

2.0

aug_min_area_ratio

:

0.08

aug_min_aspect_ratio

:

0.5

aug_type

:

'

autoaug'

validation_data

:

name

:

kinetics600

feature_shape

:

!!python/tuple

-

50

-

172

-

172

-

3

temporal_stride

:

5

num_test_clips

:

1

num_test_crops

:

1

global_batch_size

:

64

min_image_size

:

192

dtype

:

'

bfloat16'

drop_remainder

:

false

trainer

:

optimizer_config

:

learning_rate

:

cosine

:

initial_learning_rate

:

1.8

decay_steps

:

85785

warmup

:

linear

:

warmup_steps

:

2145

optimizer

:

type

:

'

rmsprop'

rmsprop

:

rho

:

0.9

momentum

:

0.9

epsilon

:

1.0

clipnorm

:

1.0

train_steps

:

85785

steps_per_loop

:

500

summary_interval

:

500

validation_interval

:

500

official/vision/beta/projects/movinet/configs/yaml/movinet_a0_k600_cpu_local.yaml

0 → 100644

View file @

7adc6ec1

# Video classification on Kinetics-600 using MoViNet-A0 backbone.

# --experiment_type=movinet_kinetics600

runtime

:

distribution_strategy

:

'

mirrored'

mixed_precision_dtype

:

'

float32'

task

:

model

:

backbone

:

movinet

:

model_id

:

'

a0'

norm_activation

:

use_sync_bn

:

false

dropout_rate

:

0.5

train_data

:

name

:

kinetics600

variant_name

:

rgb

feature_shape

:

!!python/tuple

-

4

-

172

-

172

-

3

temporal_stride

:

5

random_stride_range

:

0

global_batch_size

:

2

dtype

:

'

float32'

shuffle_buffer_size

:

32

aug_max_area_ratio

:

1.0

aug_max_aspect_ratio

:

2.0

aug_min_area_ratio

:

0.08

aug_min_aspect_ratio

:

0.5

validation_data

:

name

:

kinetics600

feature_shape

:

!!python/tuple

-

4

-

172

-

172

-

3

temporal_stride

:

5

num_test_clips

:

1

num_test_crops

:

1

global_batch_size

:

2

dtype

:

'

float32'

drop_remainder

:

true

trainer

:

optimizer_config

:

learning_rate

:

cosine

:

initial_learning_rate

:

0.8

decay_steps

:

42104

warmup

:

linear

:

warmup_steps

:

1053

train_steps

:

10

validation_steps

:

10

steps_per_loop

:

500

summary_interval

:

500

validation_interval

:

500

official/vision/beta/projects/movinet/configs/yaml/movinet_a0_stream_k600_8x8.yaml

0 → 100644

View file @

7adc6ec1

# Video classification on Kinetics-600 using MoViNet-A0-Stream backbone.

# --experiment_type=movinet_kinetics600

# Achieves 69.56% Top-1 accuracy.

# http://mldash/experiments/6696393165423234453

runtime

:

distribution_strategy

:

'

tpu'

mixed_precision_dtype

:

'

bfloat16'

task

:

losses

:

l2_weight_decay

:

0.00003

label_smoothing

:

0.1

model

:

backbone

:

movinet

:

model_id

:

'

a0'

causal

:

true

stochastic_depth_drop_rate

:

0.2

norm_activation

:

use_sync_bn

:

true

dropout_rate

:

0.5

train_data

:

name

:

kinetics600

variant_name

:

rgb

feature_shape

:

!!python/tuple

-

50

-

172

-

172

-

3

temporal_stride

:

5

random_stride_range

:

0

global_batch_size

:

1024

dtype

:

'

bfloat16'

shuffle_buffer_size

:

1024

min_image_size

:

192

aug_max_area_ratio

:

1.0

aug_max_aspect_ratio

:

2.0

aug_min_area_ratio

:

0.08

aug_min_aspect_ratio

:

0.5

aug_type

:

'

autoaug'

validation_data

:

name

:

kinetics600

feature_shape

:

!!python/tuple

-

50

-

172

-

172

-

3

temporal_stride

:

5

num_test_clips

:

1

num_test_crops

:

1

global_batch_size

:

64

min_image_size

:

192

dtype

:

'

bfloat16'

drop_remainder

:

false

trainer

:

optimizer_config

:

learning_rate

:

cosine

:

initial_learning_rate

:

1.8

decay_steps

:

85785

warmup

:

linear

:

warmup_steps

:

2145

optimizer

:

type

:

'

rmsprop'

rmsprop

:

rho

:

0.9

momentum

:

0.9

epsilon

:

1.0

clipnorm

:

1.0

train_steps

:

85785

steps_per_loop

:

500

summary_interval

:

500

validation_interval

:

500

official/vision/beta/projects/movinet/configs/yaml/movinet_a1_k600_8x8.yaml

0 → 100644

View file @

7adc6ec1

# Video classification on Kinetics-600 using MoViNet-A1 backbone.

# --experiment_type=movinet_kinetics600

# Achieves 76.63% Top-1 accuracy.

# http://mldash/experiments/6004897086445740406

runtime

:

distribution_strategy

:

'

tpu'

mixed_precision_dtype

:

'

bfloat16'

task

:

losses

:

l2_weight_decay

:

0.00003

label_smoothing

:

0.1

model

:

backbone

:

movinet

:

model_id

:

'

a1'

stochastic_depth_drop_rate

:

0.2

norm_activation

:

use_sync_bn

:

true

dropout_rate

:

0.5

train_data

:

name

:

kinetics600

variant_name

:

rgb

feature_shape

:

!!python/tuple

-

50

-

172

-

172

-

3

temporal_stride

:

5

random_stride_range

:

1

global_batch_size

:

1024

dtype

:

'

bfloat16'

shuffle_buffer_size

:

1024

min_image_size

:

192

aug_max_area_ratio

:

1.0

aug_max_aspect_ratio

:

2.0

aug_min_area_ratio

:

0.08

aug_min_aspect_ratio

:

0.5

aug_type

:

'

autoaug'

validation_data

:

name

:

kinetics600

feature_shape

:

!!python/tuple

-

50

-

172

-

172

-

3

temporal_stride

:

5

num_test_clips

:

1

num_test_crops

:

1

global_batch_size

:

64

min_image_size

:

192

dtype

:

'

bfloat16'

drop_remainder

:

false

trainer

:

optimizer_config

:

learning_rate

:

cosine

:

initial_learning_rate

:

1.8

decay_steps

:

85785

warmup

:

linear

:

warmup_steps

:

2145

optimizer

:

type

:

'

rmsprop'

rmsprop

:

rho

:

0.9

momentum

:

0.9

epsilon

:

1.0

clipnorm

:

1.0

train_steps

:

85785

steps_per_loop

:

500

summary_interval

:

500

validation_interval

:

500

official/vision/beta/projects/movinet/configs/yaml/movinet_a1_stream_k600_8x8.yaml

0 → 100644

View file @

7adc6ec1

# Video classification on Kinetics-600 using MoViNet-A1-Stream backbone.

# --experiment_type=movinet_kinetics600

# Achieves x% Top-1 accuracy.

# http://mldash/experiments/

runtime

:

distribution_strategy

:

'

tpu'

mixed_precision_dtype

:

'

bfloat16'

task

:

losses

:

l2_weight_decay

:

0.00003

label_smoothing

:

0.1

model

:

backbone

:

movinet

:

model_id

:

'

a1'

causal

:

true

norm_activation

:

use_sync_bn

:

true

dropout_rate

:

0.5

stochastic_depth_rate

:

0.2

train_data

:

name

:

kinetics600

variant_name

:

rgb

feature_shape

:

!!python/tuple

-

50

-

172

-

172

-

3

temporal_stride

:

5

random_stride_range

:

0

global_batch_size

:

1024

dtype

:

'

bfloat16'

shuffle_buffer_size

:

1024

min_image_size

:

192

aug_max_area_ratio

:

1.0

aug_max_aspect_ratio

:

2.0

aug_min_area_ratio

:

0.08

aug_min_aspect_ratio

:

0.5

aug_type

:

'

autoaug'

validation_data

:

name

:

kinetics600

feature_shape

:

!!python/tuple

-

50

-

172

-

172

-

3

temporal_stride

:

5

num_test_clips

:

1

num_test_crops

:

1

global_batch_size

:

64

min_image_size

:

192

dtype

:

'

bfloat16'

drop_remainder

:

false

trainer

:

optimizer_config

:

learning_rate

:

cosine

:

initial_learning_rate

:

1.8

decay_steps

:

85785

warmup

:

linear

:

warmup_steps

:

2145

optimizer

:

type

:

'

rmsprop'

rmsprop

:

rho

:

0.9

momentum

:

0.9

epsilon

:

1.0

clipnorm

:

1.0

train_steps

:

85785

steps_per_loop

:

500

summary_interval

:

500

validation_interval

:

500

official/vision/beta/projects/movinet/configs/yaml/movinet_a2_k600_8x8.yaml

0 → 100644

View file @

7adc6ec1

# Video classification on Kinetics-600 using MoViNet-A2 backbone.

# --experiment_type=movinet_kinetics600

# Achieves 78.62% Top-1 accuracy.

# http://mldash/experiments/7122292520723231204

runtime

:

distribution_strategy

:

'

tpu'

mixed_precision_dtype

:

'

bfloat16'

task

:

losses

:

l2_weight_decay

:

0.00003

label_smoothing

:

0.1

model

:

backbone

:

movinet

:

model_id

:

'

a2'

stochastic_depth_drop_rate

:

0.2

norm_activation

:

use_sync_bn

:

true

dropout_rate

:

0.5

train_data

:

name

:

kinetics600

variant_name

:

rgb

feature_shape

:

!!python/tuple

-

50

-

224

-

224

-

3

temporal_stride

:

5

random_stride_range

:

1

global_batch_size

:

1024

dtype

:

'

bfloat16'

shuffle_buffer_size

:

1024

min_image_size

:

256

aug_max_area_ratio

:

1.0

aug_max_aspect_ratio

:

2.0

aug_min_area_ratio

:

0.08

aug_min_aspect_ratio

:

0.5

aug_type

:

'

autoaug'

validation_data

:

name

:

kinetics600

feature_shape

:

!!python/tuple

-

50

-

224

-

224

-

3

temporal_stride

:

5

num_test_clips

:

1

num_test_crops

:

1

global_batch_size

:

64

min_image_size

:

256

dtype

:

'

bfloat16'

drop_remainder

:

false

trainer

:

optimizer_config

:

learning_rate

:

cosine

:

initial_learning_rate

:

1.8

decay_steps

:

85785

warmup

:

linear

:

warmup_steps

:

2145

optimizer

:

type

:

'

rmsprop'

rmsprop

:

rho

:

0.9

momentum

:

0.9

epsilon

:

1.0

clipnorm

:

1.0

train_steps

:

85785

steps_per_loop

:

500

summary_interval

:

500

validation_interval

:

500

official/vision/beta/projects/movinet/configs/yaml/movinet_a2_stream_k600_8x8.yaml

0 → 100644

View file @

7adc6ec1

# Video classification on Kinetics-600 using MoViNet-A2-Stream backbone.

# --experiment_type=movinet_kinetics600

# Achieves 78.40% Top-1 accuracy.

# http://mldash/experiments/3089118812758230318

runtime

:

distribution_strategy

:

'

tpu'

mixed_precision_dtype

:

'

bfloat16'

task

:

losses

:

l2_weight_decay

:

0.00003

label_smoothing

:

0.1

model

:

backbone

:

movinet

:

model_id

:

'

a2'

causal

:

true

norm_activation

:

use_sync_bn

:

true

dropout_rate

:

0.5

stochastic_depth_rate

:

0.2

train_data

:

name

:

kinetics600

variant_name

:

rgb

feature_shape

:

!!python/tuple

-

50

-

224

-

224

-

3

temporal_stride

:

5

random_stride_range

:

0

global_batch_size

:

1024

dtype

:

'

bfloat16'

shuffle_buffer_size

:

1024

min_image_size

:

256

aug_max_area_ratio

:

1.0

aug_max_aspect_ratio

:

2.0

aug_min_area_ratio

:

0.08

aug_min_aspect_ratio

:

0.5

aug_type

:

'

autoaug'

validation_data

:

name

:

kinetics600

feature_shape

:

!!python/tuple

-

50

-

224

-

224

-

3

temporal_stride

:

5

num_test_clips

:

1

num_test_crops

:

1

global_batch_size

:

64

min_image_size

:

256

dtype

:

'

bfloat16'

drop_remainder

:

false

trainer

:

optimizer_config

:

learning_rate

:

cosine

:

initial_learning_rate

:

1.8

decay_steps

:

85785

warmup

:

linear

:

warmup_steps

:

2145

optimizer

:

type

:

'

rmsprop'

rmsprop

:

rho

:

0.9

momentum

:

0.9

epsilon

:

1.0

clipnorm

:

1.0

train_steps

:

85785

steps_per_loop

:

500

summary_interval

:

500

validation_interval

:

500

official/vision/beta/projects/movinet/configs/yaml/movinet_a3_k600_8x8.yaml

0 → 100644

View file @

7adc6ec1

# Video classification on Kinetics-600 using MoViNet-A3 backbone.

# --experiment_type=movinet_kinetics600

# Achieves 81.79% Top-1 accuracy.

# http://mldash/experiments/1893120685388985498

runtime

:

distribution_strategy

:

'

tpu'

mixed_precision_dtype

:

'

bfloat16'

task

:

losses

:

l2_weight_decay

:

0.00003

label_smoothing

:

0.1

model

:

backbone

:

movinet

:

model_id

:

'

a3'

stochastic_depth_drop_rate

:

0.2

norm_activation

:

use_sync_bn

:

true

dropout_rate

:

0.5

train_data

:

name

:

kinetics600

variant_name

:

rgb

feature_shape

:

!!python/tuple

-

64

-

256

-

256

-

3

temporal_stride

:

2

random_stride_range

:

1

global_batch_size

:

1024

dtype

:

'

bfloat16'

shuffle_buffer_size

:

1024

min_image_size

:

288

aug_max_area_ratio

:

1.0

aug_max_aspect_ratio

:

2.0

aug_min_area_ratio

:

0.08

aug_min_aspect_ratio

:

0.5

aug_type

:

'

autoaug'

validation_data

:

name

:

kinetics600

feature_shape

:

!!python/tuple

-

120

-

256

-

256

-

3

temporal_stride

:

2

num_test_clips

:

1

num_test_crops

:

1

global_batch_size

:

64

min_image_size

:

288

dtype

:

'

bfloat16'

drop_remainder

:

false

trainer

:

optimizer_config

:

learning_rate

:

cosine

:

initial_learning_rate

:

1.8

decay_steps

:

85785

warmup

:

linear

:

warmup_steps

:

2145

optimizer

:

type

:

'

rmsprop'

rmsprop

:

rho

:

0.9

momentum

:

0.9

epsilon

:

1.0

clipnorm

:

1.0

train_steps

:

85785

steps_per_loop

:

500

summary_interval

:

500

validation_interval

:

500

official/vision/beta/projects/movinet/configs/yaml/movinet_a3_stream_k600_8x8.yaml

0 → 100644

View file @

7adc6ec1

# Video classification on Kinetics-600 using MoViNet-A3-Stream backbone.

# --experiment_type=movinet_kinetics600

# Achieves x% Top-1 accuracy.

# http://mldash/experiments/

runtime

:

distribution_strategy

:

'

tpu'

mixed_precision_dtype

:

'

bfloat16'

task

:

losses

:

l2_weight_decay

:

0.00003

label_smoothing

:

0.1

model

:

backbone

:

movinet

:

model_id

:

'

a3'

norm_activation

:

use_sync_bn

:

true

dropout_rate

:

0.5

stochastic_depth_rate

:

0.2

train_data

:

name

:

kinetics600

variant_name

:

rgb

feature_shape

:

!!python/tuple

-

64

-

256

-

256

-

3

temporal_stride

:

2

random_stride_range

:

0

global_batch_size

:

1024

dtype

:

'

bfloat16'

shuffle_buffer_size

:

1024

min_image_size

:

288

aug_max_area_ratio

:

1.0

aug_max_aspect_ratio

:

2.0

aug_min_area_ratio

:

0.08

aug_min_aspect_ratio

:

0.5

aug_type

:

'

autoaug'

validation_data

:

name

:

kinetics600

feature_shape

:

!!python/tuple

-

120

-

256

-

256

-

3

temporal_stride

:

2

num_test_clips

:

1

num_test_crops

:

1

global_batch_size

:

64

min_image_size

:

288

dtype

:

'

bfloat16'

drop_remainder

:

false

trainer

:

optimizer_config

:

learning_rate

:

cosine

:

initial_learning_rate

:

1.8

decay_steps

:

85785

warmup

:

linear

:

warmup_steps

:

2145

optimizer

:

type

:

'

rmsprop'

rmsprop

:

rho

:

0.9

momentum

:

0.9

epsilon

:

1.0

clipnorm

:

1.0

train_steps

:

85785

steps_per_loop

:

500

summary_interval

:

500

validation_interval

:

500

official/vision/beta/projects/movinet/configs/yaml/movinet_a4_k600_8x8.yaml

0 → 100644

View file @

7adc6ec1

# Video classification on Kinetics-600 using MoViNet-A4 backbone.

# --experiment_type=movinet_kinetics600

# Achieves 83.48% Top-1 accuracy.

# http://mldash/experiments/8781090241570014456

runtime

:

distribution_strategy

:

'

tpu'

mixed_precision_dtype

:

'

bfloat16'

task

:

losses

:

l2_weight_decay

:

0.00003

label_smoothing

:

0.1

model

:

backbone

:

movinet

:

model_id

:

'

a4'

stochastic_depth_drop_rate

:

0.2

norm_activation

:

use_sync_bn

:

true

dropout_rate

:

0.5

train_data

:

name

:

kinetics600

variant_name

:

rgb

feature_shape

:

!!python/tuple

-

32

-

290

-

290

-

3

temporal_stride

:

3

random_stride_range

:

1

global_batch_size

:

1024

dtype

:

'

bfloat16'

shuffle_buffer_size

:

1024

min_image_size

:

320

aug_max_area_ratio

:

1.0

aug_max_aspect_ratio

:

2.0

aug_min_area_ratio

:

0.08

aug_min_aspect_ratio

:

0.5

aug_type

:

'

autoaug'

validation_data

:

name

:

kinetics600

feature_shape

:

!!python/tuple

-

80

-

290

-

290

-

3

temporal_stride

:

3

num_test_clips

:

1

num_test_crops

:

1

global_batch_size

:

64

min_image_size

:

320

dtype

:

'

bfloat16'

drop_remainder

:

false

trainer

:

optimizer_config

:

learning_rate

:

cosine

:

initial_learning_rate

:

1.8

decay_steps

:

85785

warmup

:

linear

:

warmup_steps

:

2145

optimizer

:

type

:

'

rmsprop'

rmsprop

:

rho

:

0.9

momentum

:

0.9

epsilon

:

1.0

clipnorm

:

1.0

train_steps

:

85785

steps_per_loop

:

500

summary_interval

:

500

validation_interval

:

500

official/vision/beta/projects/movinet/configs/yaml/movinet_a5_k600_8x8.yaml

0 → 100644

View file @

7adc6ec1

# Video classification on Kinetics-600 using MoViNet-A5 backbone.

# --experiment_type=movinet_kinetics600

# Achieves 84.00% Top-1 accuracy.

# http://mldash/experiments/2864919645986275853

runtime

:

distribution_strategy

:

'

tpu'

mixed_precision_dtype

:

'

bfloat16'

task

:

losses

:

l2_weight_decay

:

0.00003

label_smoothing

:

0.1

model

:

backbone

:

movinet

:

model_id

:

'

a5'

stochastic_depth_drop_rate

:

0.2

norm_activation

:

use_sync_bn

:

true

dropout_rate

:

0.5

train_data

:

name

:

kinetics600

variant_name

:

rgb

feature_shape

:

!!python/tuple

-

32

-

320

-

320

-

3

temporal_stride

:

2

random_stride_range

:

1

global_batch_size

:

1024

dtype

:

'

bfloat16'

shuffle_buffer_size

:

1024

min_image_size

:

368

aug_max_area_ratio

:

1.0

aug_max_aspect_ratio

:

2.0

aug_min_area_ratio

:

0.08

aug_min_aspect_ratio

:

0.5

aug_type

:

'

randaug'

validation_data

:

name

:

kinetics600

feature_shape

:

!!python/tuple

-

120

-

320

-

320

-

3

temporal_stride

:

2

num_test_clips

:

1

num_test_crops

:

1

global_batch_size

:

32

min_image_size

:

368

dtype

:

'

bfloat16'

drop_remainder

:

false

trainer

:

optimizer_config

:

learning_rate

:

cosine

:

initial_learning_rate

:

1.8

decay_steps

:

85785

warmup

:

linear

:

warmup_steps

:

2145

optimizer

:

type

:

'

rmsprop'

rmsprop

:

rho

:

0.9

momentum

:

0.9

epsilon

:

1.0

clipnorm

:

1.0

train_steps

:

85785

steps_per_loop

:

500

summary_interval

:

500

validation_interval

:

500

official/vision/beta/projects/movinet/configs/yaml/movinet_t0_k600_8x8.yaml

0 → 100644

View file @

7adc6ec1

# Video classification on Kinetics-600 using MoViNet-T0 backbone.

# --experiment_type=movinet_kinetics600

# Achieves 68.40% Top-1 accuracy.

# http://mldash/experiments/3958407113491615048

runtime

:

distribution_strategy

:

'

tpu'

mixed_precision_dtype

:

'

bfloat16'

task

:

losses

:

l2_weight_decay

:

0.00003

label_smoothing

:

0.1

model

:

backbone

:

movinet

:

model_id

:

'

t0'

stochastic_depth_drop_rate

:

0.2

norm_activation

:

use_sync_bn

:

true

dropout_rate

:

0.5

train_data

:

name

:

kinetics600

variant_name

:

rgb

feature_shape

:

!!python/tuple

-

25

-

160

-

160

-

3

temporal_stride

:

10

random_stride_range

:

0

global_batch_size

:

1024

dtype

:

'

bfloat16'

shuffle_buffer_size

:

1024

min_image_size

:

176

aug_max_area_ratio

:

1.0

aug_max_aspect_ratio

:

2.0

aug_min_area_ratio

:

0.08

aug_min_aspect_ratio

:

0.5

validation_data

:

name

:

kinetics600

feature_shape

:

!!python/tuple

-

25

-

160

-

160

-

3

temporal_stride

:

10

num_test_clips

:

1

num_test_crops

:

1

global_batch_size

:

64

min_image_size

:

176

dtype

:

'

bfloat16'

drop_remainder

:

false

trainer

:

optimizer_config

:

learning_rate

:

cosine

:

initial_learning_rate

:

1.8

decay_steps

:

85785

warmup

:

linear

:

warmup_steps

:

2145

optimizer

:

type

:

'

rmsprop'

rmsprop

:

rho

:

0.9

momentum

:

0.9

epsilon

:

1.0

clipnorm

:

1.0

train_steps

:

85785

steps_per_loop

:

500

summary_interval

:

500

validation_interval

:

500

official/vision/beta/projects/movinet/configs/yaml/movinet_t0_stream_k600_8x8.yaml

0 → 100644

View file @

7adc6ec1

# Video classification on Kinetics-600 using MoViNet-T0-Stream backbone.

# --experiment_type=movinet_kinetics600

# Achieves x% Top-1 accuracy.

# http://mldash/experiments/

runtime

:

distribution_strategy

:

'

tpu'

mixed_precision_dtype

:

'

bfloat16'

task

:

losses

:

l2_weight_decay

:

0.00003

label_smoothing

:

0.1

model

:

backbone

:

movinet

:

model_id

:

'

t0'

norm_activation

:

use_sync_bn

:

true

dropout_rate

:

0.5

stochastic_depth_rate

:

0.2

train_data

:

name

:

kinetics600

variant_name

:

rgb

feature_shape

:

!!python/tuple

-

25

-

160

-

160

-

3

temporal_stride

:

10

random_stride_range

:

0

global_batch_size

:

1024

dtype

:

'

bfloat16'

shuffle_buffer_size

:

1024

min_image_size

:

176

aug_max_area_ratio

:

1.0

aug_max_aspect_ratio

:

2.0

aug_min_area_ratio

:

0.08

aug_min_aspect_ratio

:

0.5

validation_data

:

name

:

kinetics600

feature_shape

:

!!python/tuple

-

25

-

160

-

160

-

3

temporal_stride

:

10

num_test_clips

:

1

num_test_crops

:

1

global_batch_size

:

64

min_image_size

:

176

dtype

:

'

bfloat16'

drop_remainder

:

false

trainer

:

optimizer_config

:

learning_rate

:

cosine

:

initial_learning_rate

:

1.8

decay_steps

:

85785

warmup

:

linear

:

warmup_steps

:

2145

optimizer

:

type

:

'

rmsprop'

rmsprop

:

rho

:

0.9

momentum

:

0.9

epsilon

:

1.0

clipnorm

:

1.0

train_steps

:

85785

steps_per_loop

:

500

summary_interval

:

500

validation_interval

:

500

official/vision/beta/projects/movinet/export_saved_model.py

0 → 100644

View file @

7adc6ec1

# Copyright 2021 The TensorFlow Authors. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# Lint as: python3

r

"""Exports models to tf.saved_model.

Export example:

```shell

python3 export_saved_model.py \

--output_path=/tmp/movinet/ \

--model_id=a0 \

--causal=True \

--use_2plus1d=False \

--num_classes=600 \

--checkpoint_path=""

```

To use an exported saved_model in various applications:

```python

import tensorflow as tf

import tensorflow_hub as hub

saved_model_path = ...

inputs = tf.keras.layers.Input(

shape=[None, None, None, 3],

dtype=tf.float32)

encoder = hub.KerasLayer(saved_model_path, trainable=True)

outputs = encoder(inputs)

model = tf.keras.Model(inputs, outputs)

example_input = tf.ones([1, 8, 172, 172, 3])

outputs = model(example_input, states)

```

"""

from

typing

import

Sequence

from

absl

import

app

from

absl

import

flags

import

tensorflow

as

tf

from

official.vision.beta.projects.movinet.modeling

import

movinet

from

official.vision.beta.projects.movinet.modeling

import

movinet_model

flags

.

DEFINE_string

(

'output_path'

,

'/tmp/movinet/'

,

'Path to saved exported saved_model file.'

)

flags

.

DEFINE_string

(

'model_id'

,

'a0'

,

'MoViNet model name.'

)

flags

.

DEFINE_bool

(

'causal'

,

False

,

'Run the model in causal mode.'

)

flags

.

DEFINE_bool

(

'use_2plus1d'

,

False

,

'Use (2+1)D features instead of 3D.'

)

flags

.

DEFINE_integer

(

'num_classes'

,

600

,

'The number of classes for prediction.'

)

flags

.

DEFINE_string

(

'checkpoint_path'

,

''

,

'Checkpoint path to load. Leave blank for default initialization.'

)

FLAGS

=

flags

.

FLAGS

def

main

(

argv

:

Sequence

[

str

])

->

None

:

if

len

(

argv

)

>

1

:

raise

app

.

UsageError

(

'Too many command-line arguments.'

)

# Use dimensions of 1 except the channels to export faster,

# since we only really need the last dimension to build and get the output

# states. These dimensions will be set to `None` once the model is built.

input_shape

=

[

1

,

1

,

1

,

1

,

3

]

backbone

=

movinet

.

Movinet

(

FLAGS

.

model_id

,

causal

=

FLAGS

.

causal

,

use_2plus1d

=

FLAGS

.

use_2plus1d

)

model

=

movinet_model

.

MovinetClassifier

(

backbone

,

num_classes

=

FLAGS

.

num_classes

,

output_states

=

FLAGS

.

causal

)

model

.

build

(

input_shape

)

if

FLAGS

.

checkpoint_path

:

model

.

load_weights

(

FLAGS

.

checkpoint_path

)

if

FLAGS

.

causal

:

# Call the model once to get the output states. Call again with `states`

# input to ensure that the inputs with the `states` argument is built

_

,

states

=

model

(

dict

(

image

=

tf

.

ones

(

input_shape

),

states

=

{}))

_

,

states

=

model

(

dict

(

image

=

tf

.

ones

(

input_shape

),

states

=

states

))

input_spec

=

tf

.

TensorSpec

(

shape

=

[

None

,

None

,

None

,

None

,

3

],

dtype

=

tf

.

float32

,

name

=

'inputs'

)

state_specs

=

{}

for

name

,

state

in

states

.

items

():

shape

=

state

.

shape

if

len

(

state

.

shape

)

==

5

:

shape

=

[

None

,

state

.

shape

[

1

],

None

,

None

,

state

.

shape

[

-

1

]]

new_spec

=

tf

.

TensorSpec

(

shape

=

shape

,

dtype

=

state

.

dtype

,

name

=

name

)

state_specs

[

name

]

=

new_spec

specs

=

(

input_spec

,

state_specs

)

# Define a tf.keras.Model with custom signatures to allow it to accept

# a state dict as an argument. We define it inline here because

# we first need to determine the shape of the state tensors before

# applying the `input_signature` argument to `tf.function`.

class

ExportStateModule

(

tf

.

Module

):

"""Module with state for exporting to saved_model."""

def

__init__

(

self

,

model

):

self

.

model

=

model

@

tf

.

function

(

input_signature

=

[

input_spec

])

def

__call__

(

self

,

inputs

):

return

self

.

model

(

dict

(

image

=

inputs

,

states

=

{}))

@

tf

.

function

(

input_signature

=

[

input_spec

])

def

base

(

self

,

inputs

):

return

self

.

model

(

dict

(

image

=

inputs

,

states

=

{}))

@

tf

.

function

(

input_signature

=

specs

)

def

stream

(

self

,

inputs

,

states

):

return

self

.

model

(

dict

(

image

=

inputs

,

states

=

states

))

module

=

ExportStateModule

(

model

)

tf

.

saved_model

.

save

(

module

,

FLAGS

.

output_path

)

else

:

_

=

model

(

tf

.

ones

(

input_shape

))

tf

.

keras

.

models

.

save_model

(

model

,

FLAGS

.

output_path

)

print

(

' ----- Done. Saved Model is saved at {}'

.

format

(

FLAGS

.

output_path

))

if

__name__

==

'__main__'

:

app

.

run

(

main

)

official/vision/beta/projects/movinet/modeling/movinet.py

0 → 100644

View file @

7adc6ec1

# Copyright 2021 The TensorFlow Authors. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# Lint as: python3

"""Contains definitions of Mobile Video Networks.

Reference: https://arxiv.org/pdf/2103.11511.pdf

"""

from

typing

import

Optional

,

Sequence

,

Tuple

import

dataclasses

import

tensorflow

as

tf

from

official.modeling

import

hyperparams

from

official.vision.beta.modeling.backbones

import

factory

from

official.vision.beta.projects.movinet.modeling

import

movinet_layers

# Defines a set of kernel sizes and stride sizes to simplify and shorten

# architecture definitions for configs below.

KernelSize

=

Tuple

[

int

,

int

,

int

]

# K(ab) represents a 3D kernel of size (a, b, b)

K13

:

KernelSize

=

(

1

,

3

,

3

)

K15

:

KernelSize

=

(

1

,

5

,

5

)

K33

:

KernelSize

=

(

3

,

3

,

3

)

K53

:

KernelSize

=

(

5

,

3

,

3

)

# S(ab) represents a 3D stride of size (a, b, b)

S11

:

KernelSize

=

(

1

,

1

,

1

)

S12

:

KernelSize

=

(

1

,

2

,

2

)

S22

:

KernelSize

=

(

2

,

2

,

2

)

S21

:

KernelSize

=

(

2

,

1

,

1

)

@

dataclasses

.

dataclass

class

BlockSpec

:

"""Configuration of a block."""

pass

@

dataclasses

.

dataclass

class

StemSpec

(

BlockSpec

):

"""Configuration of a Movinet block."""

filters

:

int

=

0

kernel_size

:

KernelSize

=

(

0

,

0

,

0

)

strides

:

KernelSize

=

(

0

,

0

,

0

)

@

dataclasses

.

dataclass

class

MovinetBlockSpec

(

BlockSpec

):

"""Configuration of a Movinet block."""

base_filters

:

int

=

0

expand_filters

:

Sequence

[

int

]

=

()

kernel_sizes

:

Sequence

[

KernelSize

]

=

()

strides

:

Sequence

[

KernelSize

]

=

()

@

dataclasses

.

dataclass

class

HeadSpec

(

BlockSpec

):

"""Configuration of a Movinet block."""

project_filters

:

int

=

0

head_filters

:

int

=

0

output_per_frame

:

bool

=

False

max_pool_predictions

:

bool

=

False

# Block specs specify the architecture of each model

BLOCK_SPECS

=

{

'a0'

:

(

StemSpec

(

filters

=

8

,

kernel_size

=

K13

,

strides

=

S12

),

MovinetBlockSpec

(

base_filters

=

8

,

expand_filters

=

(

24

,),

kernel_sizes

=

(

K15

,),

strides

=

(

S12

,)),

MovinetBlockSpec

(

base_filters

=

32

,

expand_filters

=

(

80

,

80

,

80

),

kernel_sizes

=

(

K33

,

K33

,

K33

),

strides

=

(

S12

,

S11

,

S11

)),

MovinetBlockSpec

(

base_filters

=

56

,

expand_filters

=

(

184

,

112

,

184

),

kernel_sizes

=

(

K53

,

K33

,

K33

),

strides

=

(

S12

,

S11

,

S11

)),

MovinetBlockSpec

(

base_filters

=

56

,

expand_filters

=

(

184

,

184

,

184

,

184

),

kernel_sizes

=

(

K53

,

K33

,

K33

,

K33

),

strides

=

(

S11

,

S11

,

S11

,

S11

)),

MovinetBlockSpec

(

base_filters

=

104

,

expand_filters

=

(

384

,

280

,

280

,

344

),

kernel_sizes

=

(

K53

,

K15

,

K15

,

K15

),

strides

=

(

S12

,

S11

,

S11

,

S11

)),

HeadSpec

(

project_filters

=

480

,

head_filters

=

2048

),

),

'a1'

:

(

StemSpec

(

filters

=

16

,

kernel_size

=

K13

,

strides

=

S12

),

MovinetBlockSpec

(

base_filters

=

16

,

expand_filters

=

(

40

,

40

),

kernel_sizes

=

(

K15

,

K33

),

strides

=

(

S12

,

S11

)),

MovinetBlockSpec

(

base_filters

=

40

,

expand_filters

=

(

96

,

120

,

96

,

96

),

kernel_sizes

=

(

K33

,

K33

,

K33

,

K33

),

strides

=

(

S12

,

S11

,

S11

,

S11

)),

MovinetBlockSpec

(

base_filters

=

64

,

expand_filters

=

(

216

,

128

,

216

,

168

,

216

),

kernel_sizes

=

(

K53

,

K33

,

K33

,

K33

,

K33

),

strides

=

(

S12

,

S11

,

S11

,

S11

,

S11

)),

MovinetBlockSpec

(

base_filters

=

64

,

expand_filters

=

(

216

,

216

,

216

,

128

,

128

,

216

),

kernel_sizes

=

(

K53

,

K33

,

K33

,

K33

,

K15

,

K33

),

strides

=

(

S11

,

S11

,

S11

,

S11

,

S11

,

S11

)),

MovinetBlockSpec

(

base_filters

=

136

,

expand_filters

=

(

456

,

360

,

360

,

360

,

456

,

456

,

544

),

kernel_sizes

=

(

K53

,

K15

,

K15

,

K15

,

K15

,

K33

,

K13

),

strides

=

(

S12

,

S11

,

S11

,

S11

,

S11

,

S11

,

S11

)),

HeadSpec

(

project_filters

=

600

,

head_filters

=

2048

),

),

'a2'

:

(

StemSpec

(

filters

=

16

,

kernel_size

=

K13

,

strides

=

S12

),

MovinetBlockSpec

(

base_filters

=

16

,

expand_filters

=

(

40

,

40

,

64

),

kernel_sizes

=

(

K15

,

K33

,

K33

),

strides

=

(

S12

,

S11

,

S11

)),

MovinetBlockSpec

(

base_filters

=

40

,

expand_filters

=

(

96

,

120

,

96

,

96

,

120

),

kernel_sizes

=

(

K33

,

K33

,

K33

,

K33

,

K33

),

strides

=

(

S12

,

S11

,

S11

,

S11

,

S11

)),

MovinetBlockSpec

(

base_filters

=

72

,

expand_filters

=

(

240

,

160

,

240

,

192

,

240

),

kernel_sizes

=

(

K53

,

K33

,

K33

,

K33

,

K33

),

strides

=

(

S12

,

S11

,

S11

,

S11

,

S11

)),

MovinetBlockSpec

(

base_filters

=

72

,

expand_filters

=

(

240

,

240

,

240

,

240

,

144

,

240

),

kernel_sizes

=

(

K53

,

K33

,

K33

,

K33

,

K15

,

K33

),

strides

=

(

S11

,

S11

,

S11

,

S11

,

S11

,

S11

)),

MovinetBlockSpec

(

base_filters

=

144

,

expand_filters

=

(

480

,

384

,

384

,

480

,

480

,

480

,

576

),

kernel_sizes

=

(

K53

,

K15

,

K15

,

K15

,

K15

,

K33

,

K13

),

strides

=

(

S12

,

S11

,

S11

,

S11

,

S11

,

S11

,

S11

)),

HeadSpec

(

project_filters

=

640

,

head_filters

=

2048

),

),

'a3'

:

(

StemSpec

(

filters

=

16

,

kernel_size

=

K13

,

strides

=

S12

),

MovinetBlockSpec

(

base_filters

=

16

,

expand_filters

=

(

40

,

40

,