Initial commit

LICENSE

0 → 100644

README.md

0 → 100644

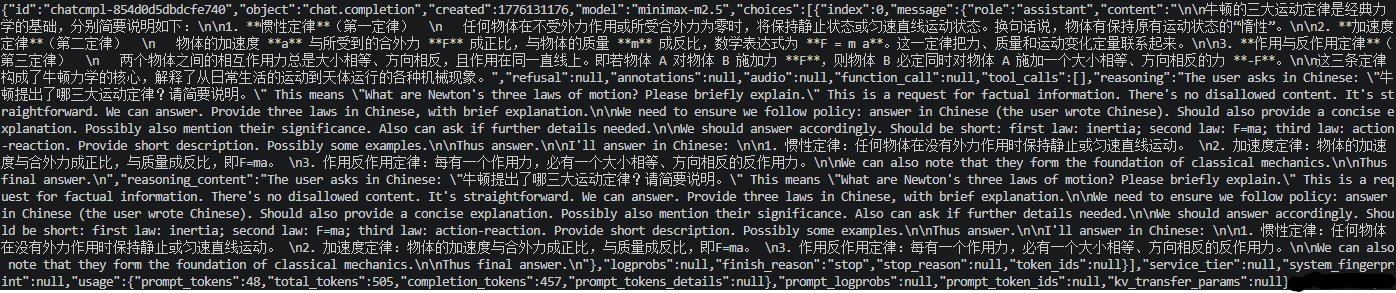

doc/01.png

0 → 100644

76.5 KB

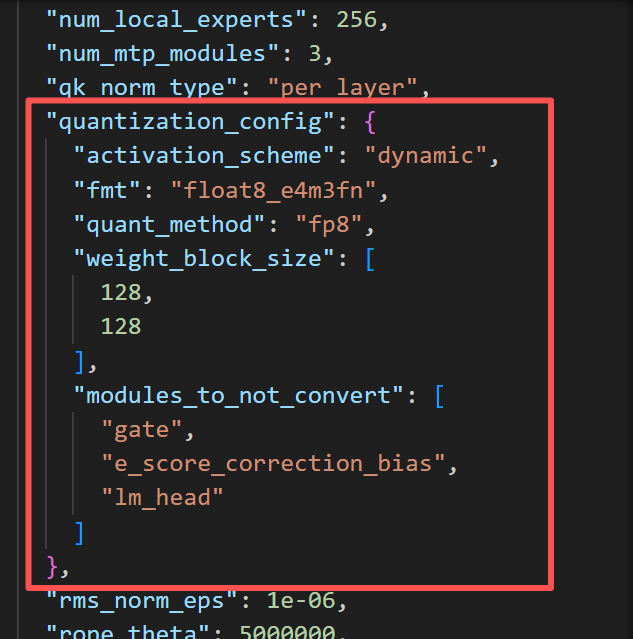

doc/quant.png

0 → 100644

57.2 KB

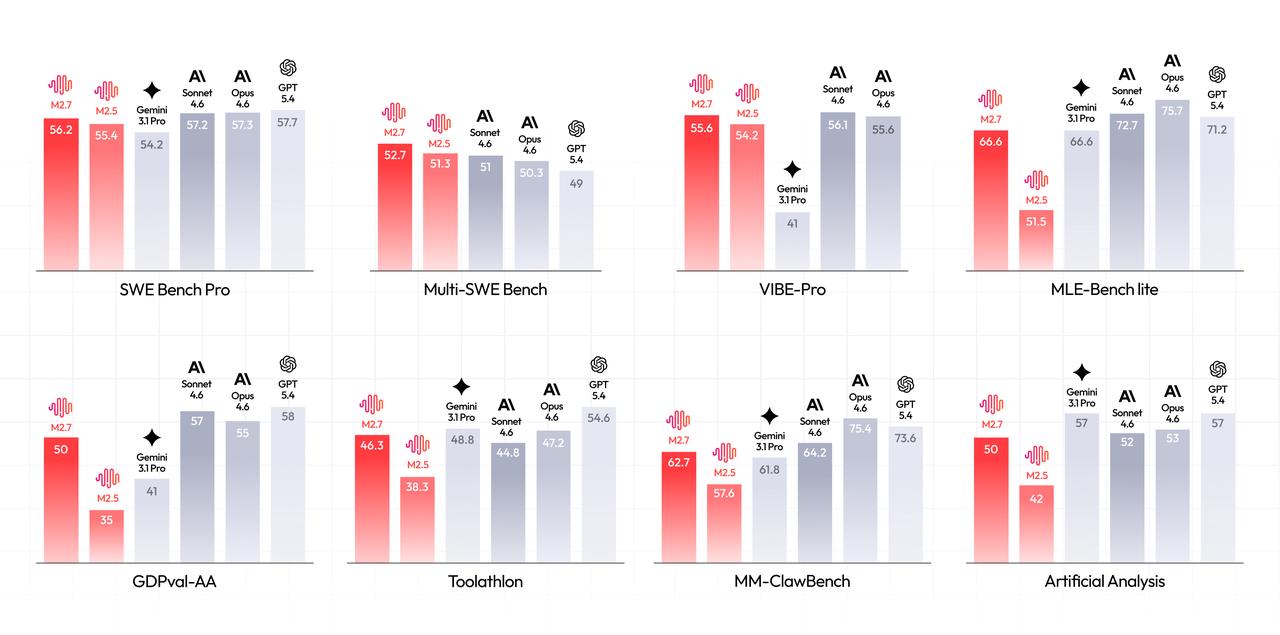

doc/result.png

0 → 100644

76.7 KB

icon.png

0 → 100644

62.1 KB

model.properties

0 → 100644