Update model.properties, Dockerfile, requirements.txt, LICENSE.txt, README.md,...

Update model.properties, Dockerfile, requirements.txt, LICENSE.txt, README.md, whl.zip, doc/training_loss.png, doc/result.png, doc/internlm2_math.pdf, finetune/single_node.sh, finetune/multi_node.sh, finetune/data/README.md, finetune/data/identity.json, finetune/data/mllm_demo.json, finetune/data/dataset_info.json, finetune/data/alpaca_zh_demo.json, finetune/data/alpaca_en_demo.json, finetune/data/glaive_toolcall_zh_demo.json, finetune/data/dpo_zh_demo.json, finetune/data/c4_demo.json, finetune/data/glaive_toolcall_en_demo.json, finetune/data/kto_en_demo.json, finetune/data/dpo_en_demo.json, finetune/data/README_zh.md, finetune/data/wiki_demo.txt, finetune/scripts/cal_flops.py, finetune/scripts/length_cdf.py, finetune/scripts/cal_ppl.py, finetune/scripts/llamafy_qwen.py, finetune/scripts/cal_lr.py, finetune/scripts/llamafy_baichuan2.py, finetune/scripts/llama_pro.py, finetune/scripts/loftq_init.py, finetune/src/api.py, finetune/src/train.py, finetune/src/webui.py, finetune/src/llmfactory/__init__.py, finetune/src/llmfactory/cli.py, finetune/src/llmfactory/api/__init__.py, finetune/src/llmfactory/api/common.py, finetune/src/llmfactory/api/chat.py, finetune/src/llmfactory/api/app.py, finetune/src/llmfactory/api/protocol.py, finetune/src/llmfactory/chat/__init__.py, finetune/src/llmfactory/chat/base_engine.py, finetune/src/llmfactory/chat/vllm_engine.py, finetune/src/llmfactory/chat/chat_model.py, finetune/src/llmfactory/chat/hf_engine.py, finetune/src/llmfactory/data/__init__.py, finetune/src/llmfactory/data/loader.py, finetune/src/llmfactory/data/utils.py, finetune/src/llmfactory/data/collator.py, finetune/src/llmfactory/data/formatter.py, finetune/src/llmfactory/data/aligner.py, finetune/src/llmfactory/data/template.py, finetune/src/llmfactory/data/parser.py, finetune/src/llmfactory/data/preprocess.py, finetune/src/llmfactory/eval/__init__.py, finetune/src/llmfactory/eval/template.py, finetune/src/llmfactory/eval/evaluator.py, 
finetune/src/llmfactory/extras/__init__.py, finetune/src/llmfactory/extras/logging.py, finetune/src/llmfactory/extras/constants.py, finetune/src/llmfactory/extras/misc.py, finetune/src/llmfactory/extras/packages.py, finetune/src/llmfactory/extras/ploting.py, finetune/src/llmfactory/extras/callbacks.py, finetune/src/llmfactory/hparams/__init__.py, finetune/src/llmfactory/hparams/data_args.py, finetune/src/llmfactory/hparams/finetuning_args.py, finetune/src/llmfactory/hparams/generating_args.py, finetune/src/llmfactory/hparams/evaluation_args.py, finetune/src/llmfactory/hparams/model_args.py, finetune/src/llmfactory/hparams/parser.py, finetune/src/llmfactory/model/__init__.py, finetune/src/llmfactory/model/patcher.py, finetune/src/llmfactory/model/adapter.py, finetune/src/llmfactory/model/loader.py, finetune/src/llmfactory/model/utils/__init__.py, finetune/src/llmfactory/model/utils/misc.py, finetune/src/llmfactory/model/utils/checkpointing.py, finetune/src/llmfactory/model/utils/embedding.py, finetune/src/llmfactory/model/utils/attention.py, finetune/src/llmfactory/model/utils/longlora.py, finetune/src/llmfactory/model/utils/visual.py, finetune/src/llmfactory/model/utils/moe.py, finetune/src/llmfactory/model/utils/valuehead.py, finetune/src/llmfactory/model/utils/rope.py, finetune/src/llmfactory/model/utils/quantization.py, finetune/src/llmfactory/model/utils/mod.py, finetune/src/llmfactory/model/utils/unsloth.py, finetune/src/llmfactory/train/__init__.py, finetune/src/llmfactory/train/utils.py, finetune/src/llmfactory/train/tuner.py, finetune/src/llmfactory/train/dpo/__init__.py, finetune/src/llmfactory/train/dpo/trainer.py, finetune/src/llmfactory/train/dpo/workflow.py, finetune/src/llmfactory/train/kto/__init__.py, finetune/src/llmfactory/train/kto/trainer.py, finetune/src/llmfactory/train/kto/workflow.py, finetune/src/llmfactory/train/orpo/trainer.py, finetune/src/llmfactory/train/orpo/__init__.py, finetune/src/llmfactory/train/orpo/workflow.py, 
finetune/src/llmfactory/train/ppo/__init__.py, finetune/src/llmfactory/train/ppo/workflow.py, finetune/src/llmfactory/train/ppo/utils.py, finetune/src/llmfactory/train/ppo/trainer.py, finetune/src/llmfactory/train/pt/__init__.py, finetune/src/llmfactory/train/pt/workflow.py, finetune/src/llmfactory/train/pt/trainer.py, finetune/src/llmfactory/train/rm/__init__.py, finetune/src/llmfactory/train/rm/metric.py, finetune/src/llmfactory/train/rm/workflow.py, finetune/src/llmfactory/train/rm/trainer.py, finetune/src/llmfactory/train/sft/__init__.py, finetune/src/llmfactory/train/sft/metric.py, finetune/src/llmfactory/train/sft/trainer.py, finetune/src/llmfactory/train/sft/workflow.py, finetune/src/llmfactory/webui/__init__.py, finetune/src/llmfactory/webui/chatter.py, finetune/src/llmfactory/webui/common.py, finetune/src/llmfactory/webui/css.py, finetune/src/llmfactory/webui/manager.py, finetune/src/llmfactory/webui/engine.py, finetune/src/llmfactory/webui/runner.py, finetune/src/llmfactory/webui/interface.py, finetune/src/llmfactory/webui/utils.py, finetune/src/llmfactory/webui/locales.py, finetune/src/llmfactory/webui/components/__init__.py, finetune/src/llmfactory/webui/components/chatbot.py, finetune/src/llmfactory/webui/components/data.py, finetune/src/llmfactory/webui/components/eval.py, finetune/src/llmfactory/webui/components/export.py, finetune/src/llmfactory/webui/components/infer.py, finetune/src/llmfactory/webui/components/top.py, finetune/src/llmfactory/webui/components/train.py, inference/single_dcu.py files

Dockerfile

0 → 100644

LICENSE.txt

0 → 100644

README.md

0 → 100644

doc/internlm2_math.pdf

0 → 100644

File added

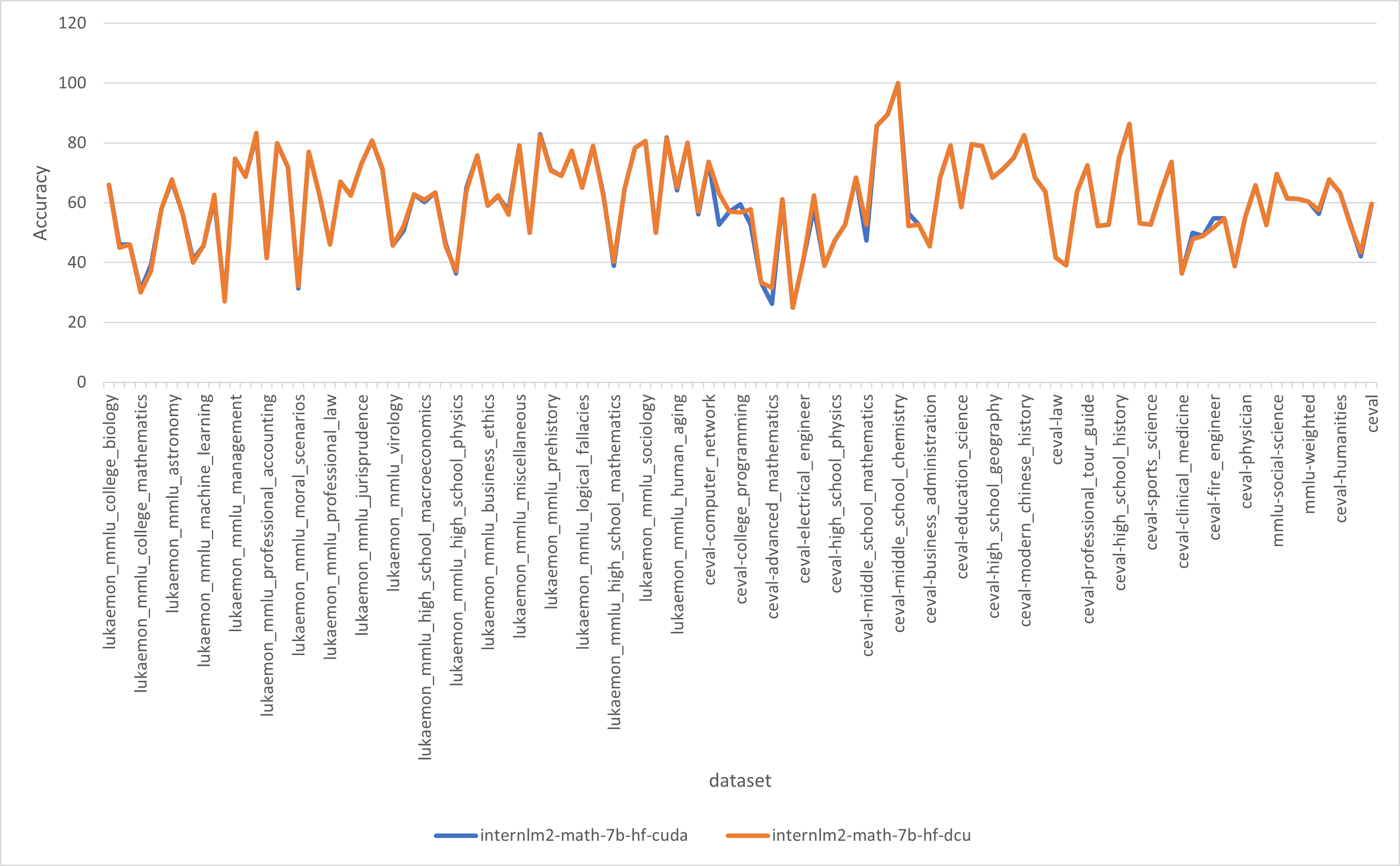

doc/result.png

0 → 100644

359 KB

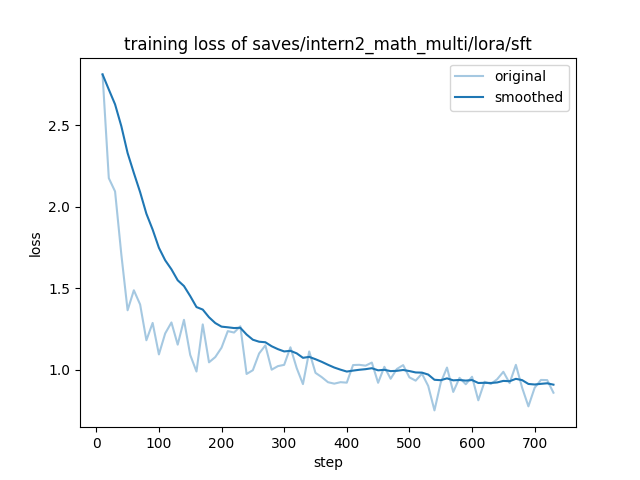

doc/training_loss.png

0 → 100644

37.2 KB

finetune/data/README.md

0 → 100644

finetune/data/README_zh.md

0 → 100644

This source diff could not be displayed because it is too large. You can view the blob instead.

finetune/data/c4_demo.json

0 → 100644

This source diff could not be displayed because it is too large. You can view the blob instead.

finetune/data/identity.json

0 → 100644

This source diff could not be displayed because it is too large. You can view the blob instead.

finetune/data/mllm_demo.json

0 → 100644

finetune/data/wiki_demo.txt

0 → 100644

This source diff could not be displayed because it is too large. You can view the blob instead.