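# Internlm-Xcomposer2 Best Practice

## Table of Contents

- [Environment Setup](#environment-setup)
- [Inference](#inference)
- [Fine-tuning](#fine-tuning)
- [Inference After Fine-tuning](#inference-after-fine-tuning)

## Environment Setup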

```shell
pip install 'ms-swift[llm]' -U
```

## Inference

Run inference with [internlm-xcomposer2-7b-chat](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm-xcomposer2-7b/summary):

```shell
# Experimental environment: A10, 3090, V100, ...
# 21GB GPU memory
CUDA_VISIBLE_DEVICES=0 swift infer --model_type internlm-xcomposer2-7b-chat
```

输出: (支持传入本地路径或URL)

```python

"""

<<< 你是谁?

我是你的助手,一个基于语言的人工智能模型,可以回答你的问题。

--------------------------------------------------

<<< ![]() http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/animal.png

http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/animal.png![]() http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/cat.png这两张图片有什么区别

这两张图片是不同的, 第一张是羊的图片, 第二张是猫的图片

--------------------------------------------------

<<<

http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/cat.png这两张图片有什么区别

这两张图片是不同的, 第一张是羊的图片, 第二张是猫的图片

--------------------------------------------------

<<< ![]() http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/animal.png图中有几只羊

图中有4只羊

--------------------------------------------------

<<<

http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/animal.png图中有几只羊

图中有4只羊

--------------------------------------------------

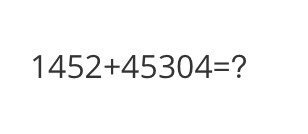

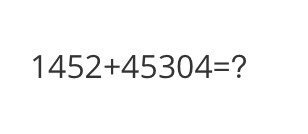

<<< ![]() http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/math.png计算结果是多少

计算结果是1452+45304=46756

--------------------------------------------------

<<<

http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/math.png计算结果是多少

计算结果是1452+45304=46756

--------------------------------------------------

<<< ![]() http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/poem.png根据图片中的内容写首诗

湖面波光粼粼,小舟独自飘荡。

船上点灯,照亮夜色,

星星点点,倒映水中。

远处山峦,云雾缭绕,

天空繁星,闪烁不停。

湖面如镜,倒影清晰,

小舟穿行,如诗如画。

"""

```

Sample images:

cat:

![cat](http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/cat.png)

animal:

![animal](http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/animal.png)

math:

![math](http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/math.png)

poem:

![poem](http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/poem.png)
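The queries in the sample session place their image references inline, ahead of the question text. The sketch below shows one way to compose such a query string. `build_query` is a hypothetical helper, not part of ms-swift, and it assumes that each local path or URL is wrapped in an `<img>...</img>` tag.

```python
from typing import Sequence


def build_query(text: str, images: Sequence[str] = ()) -> str:
    """Prefix `text` with one <img>...</img> tag per image path or URL.

    Hypothetical helper for illustration; the tag format is an assumption.
    """
    tags = ''.join(f'<img>{image}</img>' for image in images)
    return f'{tags}{text}'


base = 'http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images'
query = build_query('What is the difference between these two pictures?',
                    [f'{base}/animal.png', f'{base}/cat.png'])
print(query)
```

A query without images is returned unchanged, so the same helper covers text-only turns.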

**Single-sample inference**

```python
import os
os.environ['CUDA_VISIBLE_DEVICES'] = '0'

from swift.llm import (
    get_model_tokenizer, get_template, inference, ModelType,
    get_default_template_type, inference_stream
)
from swift.utils import seed_everything
import torch

model_type = ModelType.internlm_xcomposer2_7b_chat
template_type = get_default_template_type(model_type)
print(f'template_type: {template_type}')

# Load the model in fp16 and let device_map place it on the visible GPU.
model, tokenizer = get_model_tokenizer(model_type, torch.float16,
                                       model_kwargs={'device_map': 'auto'})
model.generation_config.max_new_tokens = 256
template = get_template(template_type, tokenizer)
seed_everything(42)

query = """<img>http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/road.png</img>How far is it to each city?"""
response, history = inference(model, template, query)
print(f'query: {query}')
print(f'response: {response}')

# Streaming: each step yields the full response so far, so print only the new part.
query = 'Which city is the farthest?'
gen = inference_stream(model, template, query, history)
print_idx = 0
print(f'query: {query}\nresponse: ', end='')
for response, history in gen:
    delta = response[print_idx:]
    print(delta, end='', flush=True)
    print_idx = len(response)
print()
print(f'history: {history}')
"""
query: <img>http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/road.png</img>How far is it to each city?
response: Ma'anshan is 62 km from Yangjiang, and Guangzhou is 293 km from Guangzhou.
query: Which city is the farthest?
response: The farthest city is Guangzhou, 293 km from Guangzhou.
history: [['<img>http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/road.png</img>How far is it to each city?', " Ma'anshan is 62 km from Yangjiang, and Guangzhou is 293 km from Guangzhou."], ['Which city is the farthest?', ' The farthest city is Guangzhou, 293 km from Guangzhou.']]
"""
```

Sample image:

road:

![road](http://modelscope-open.oss-cn-hangzhou.aliyuncs.com/images/road.png)
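The streaming loop in the single-sample example prints only the unseen suffix of each partial response, which implies that every yielded value is the full text generated so far. The sketch below reproduces that bookkeeping without a model. `fake_stream` is a hypothetical stand-in for `inference_stream`, and `collect_deltas` is likewise illustrative rather than part of ms-swift.

```python
from typing import Iterator, List, Tuple

History = List[List[str]]


def fake_stream(chunks: List[str], query: str) -> Iterator[Tuple[str, History]]:
    """Stand-in for a streaming call: yields the cumulative response so far."""
    response = ''
    for chunk in chunks:
        response += chunk
        yield response, [[query, response]]


def collect_deltas(gen: Iterator[Tuple[str, History]]) -> Tuple[List[str], History]:
    """Return the newly generated piece of each step and the final history."""
    deltas: List[str] = []
    history: History = []
    print_idx = 0
    for response, history in gen:
        deltas.append(response[print_idx:])  # keep only the unseen suffix
        print_idx = len(response)
    return deltas, history


deltas, history = collect_deltas(
    fake_stream(['The ', 'farthest ', 'city'], 'Which city?'))
print(''.join(deltas))
```

Joining the deltas gives back the final response, which is why the original loop can print them one after another without repeating text.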

## Fine-tuning

Multimodal LLMs are usually fine-tuned on a **custom dataset**. Here is a demo that can be run directly:

(By default, only the qkv of the LLM part is fine-tuned with LoRA. `--lora_target_modules ALL` is not supported. Full-parameter fine-tuning is supported.)

```shell
# Experimental environment: A10, 3090, V100, ...
# 21GB GPU memory
CUDA_VISIBLE_DEVICES=0 swift sft \
    --model_type internlm-xcomposer2-7b-chat \
    --dataset coco-en-mini
```

[Custom datasets](../LLM/自定义与拓展.md#-推荐命令行参数的形式) support the json and jsonl formats. Below is an example of a custom dataset:

(Multi-turn conversations are supported, each turn may contain several images or none, and images may be given as local paths or URLs. This model does not support merge-lora.)

```json
[
    {"conversations": [
        {"from": "user", "value": "<img>img_path</img>11111"},
        {"from": "assistant", "value": "22222"}
    ]},
    {"conversations": [
        {"from": "user", "value": "<img>img_path</img><img>img_path2</img><img>img_path3</img>aaaaa"},
        {"from": "assistant", "value": "bbbbb"},
        {"from": "user", "value": "<img>img_path</img>ccccc"},
        {"from": "assistant", "value": "ddddd"}
    ]},
    {"conversations": [
        {"from": "user", "value": "AAAAA"},
        {"from": "assistant", "value": "BBBBB"},
        {"from": "user", "value": "CCCCC"},
        {"from": "assistant", "value": "DDDDD"}
    ]}
]
```
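Before training on a custom dataset, it can help to check that every sample follows the layout shown above. The sketch below validates samples and serializes them as JSON Lines. `validate_sample` and `to_jsonl` are hypothetical helpers, not ms-swift functions. The rule that turns alternate between `user` and `assistant` is inferred from the example and may be stricter than what the library requires.

```python
import json
from typing import Any, Dict, List

Sample = Dict[str, Any]


def validate_sample(sample: Sample) -> None:
    """Raise ValueError unless the sample matches the example layout.

    The alternation rule (user, assistant, user, ...) is an assumption
    drawn from the example, not a documented requirement.
    """
    turns = sample.get('conversations')
    if not isinstance(turns, list) or not turns or len(turns) % 2 != 0:
        raise ValueError('conversations must be a non-empty list of user/assistant pairs')
    for i, turn in enumerate(turns):
        if not isinstance(turn, dict):
            raise ValueError(f'turn {i}: must be an object')
        expected = 'user' if i % 2 == 0 else 'assistant'
        if turn.get('from') != expected:
            raise ValueError(f'turn {i}: expected role {expected!r}')
        if not isinstance(turn.get('value'), str):
            raise ValueError(f'turn {i}: value must be a string')


def to_jsonl(samples: List[Sample]) -> str:
    """Serialize validated samples as JSON Lines, one sample per line."""
    for sample in samples:
        validate_sample(sample)
    return '\n'.join(json.dumps(s, ensure_ascii=False) for s in samples) + '\n'


samples = [
    {'conversations': [
        {'from': 'user', 'value': 'AAAAA'},
        {'from': 'assistant', 'value': 'BBBBB'},
    ]},
]
print(to_jsonl(samples), end='')
```

Passing `ensure_ascii=False` keeps non-ASCII text readable in the output file instead of escaping it.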

## Inference After Fine-tuning

```shell
CUDA_VISIBLE_DEVICES=0 swift infer \
    --ckpt_dir output/internlm-xcomposer2-7b-chat/vx-xxx/checkpoint-xxx \
    --load_dataset_config true
```
