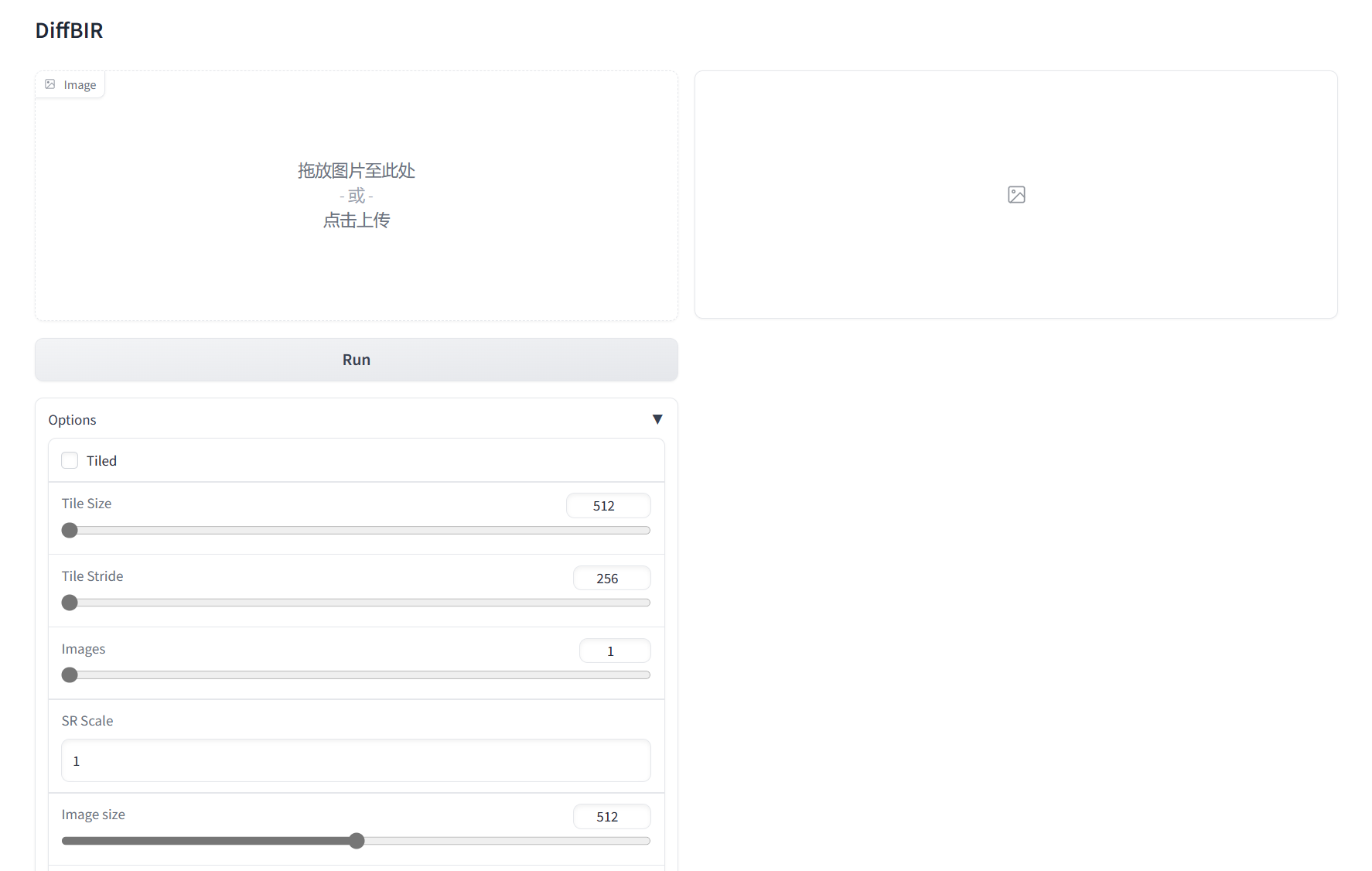

integrate a patch-based sampling strategy

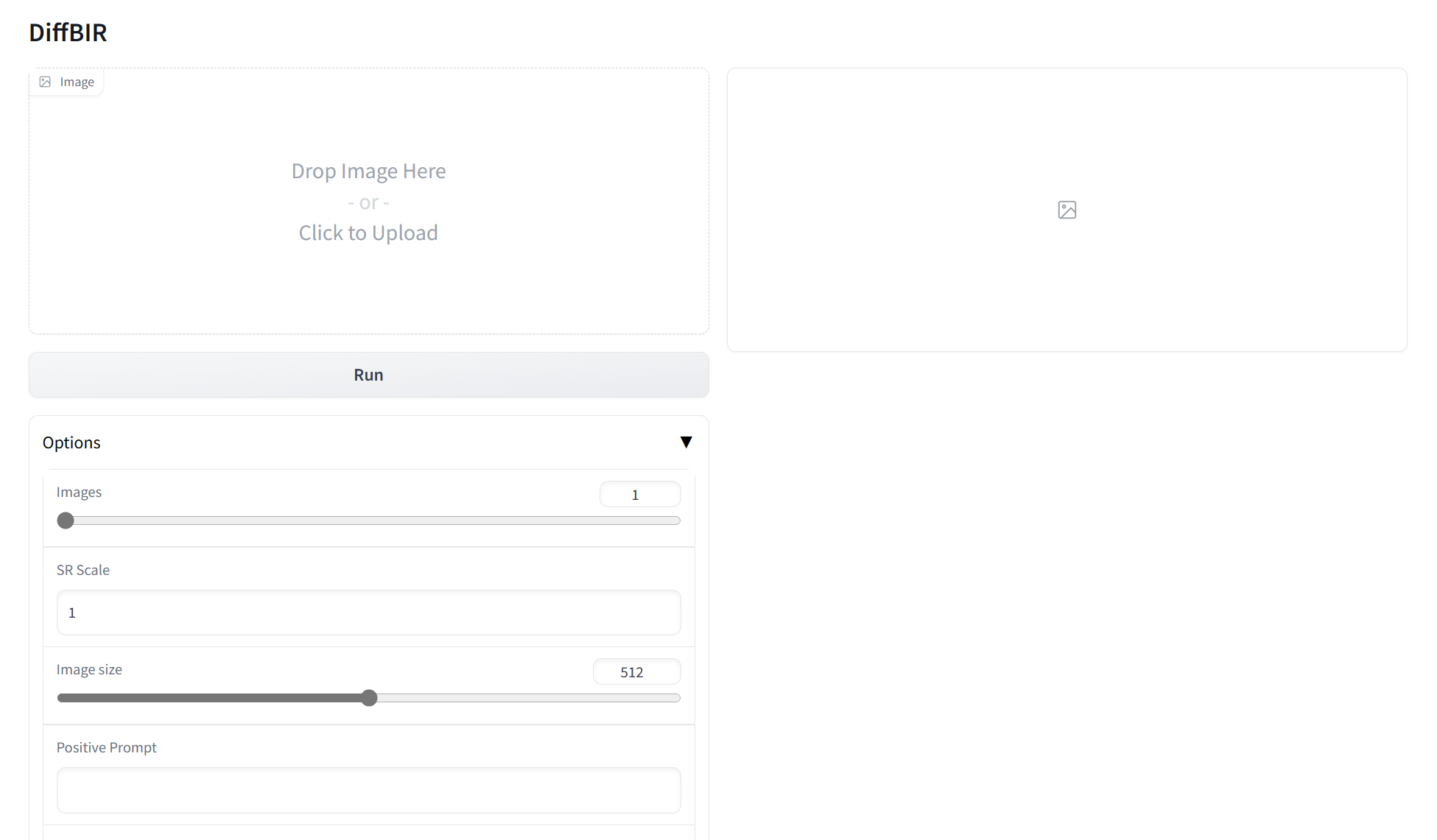


| --extra-index-url https://download.pytorch.org/whl/cu116 |
| torch==1.13.1+cu116 |
| torchvision==0.14.1+cu116 |
| torchaudio==0.13.1 |
| xformers==0.0.16 |
| pytorch_lightning==1.4.2 |
| einops |
| open-clip-torch |
| omegaconf |
| torchmetrics==0.6.0 |
| triton |
| triton==2.0.0 |
| opencv-python-headless |
| scipy |
| matplotlib |
| ... |

utils/realesrgan/rrdbnet.py (new file, mode 0 → 100644)