Initial commit

parents

Showing

LICENSE

0 → 100644

README.md

0 → 100644

convert_weight/convert.py

0 → 100644

doc/result_dcu.png

0 → 100644

405 KB

icon.png

0 → 100644

53.8 KB

inference/README.md

0 → 100644

inference/config.json

0 → 100644

inference/encoding_dsv4.py

0 → 100644

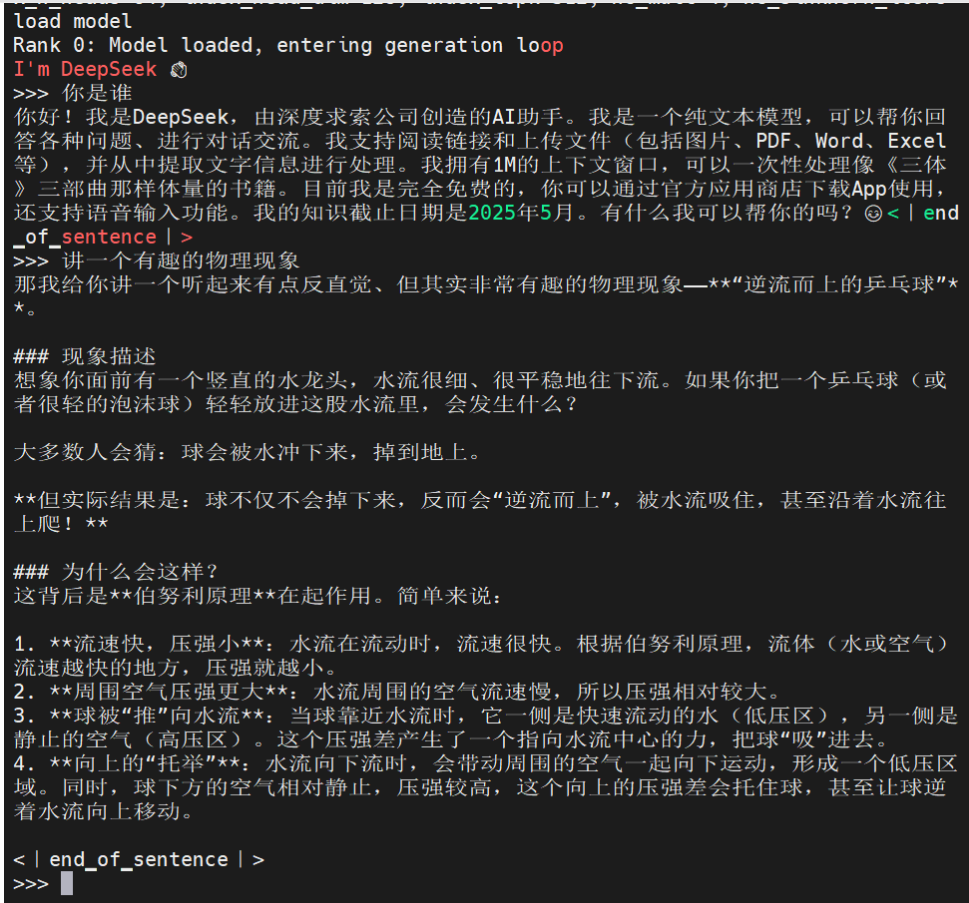

inference/generate.py

0 → 100644

inference/kernel.py

0 → 100644

inference/model.py

0 → 100644

inference/start_torch.sh

0 → 100644

model.properties

0 → 100644