arcface_pytorch

LICENSE.md

0 → 100644

README2.md

deleted

100644 → 0

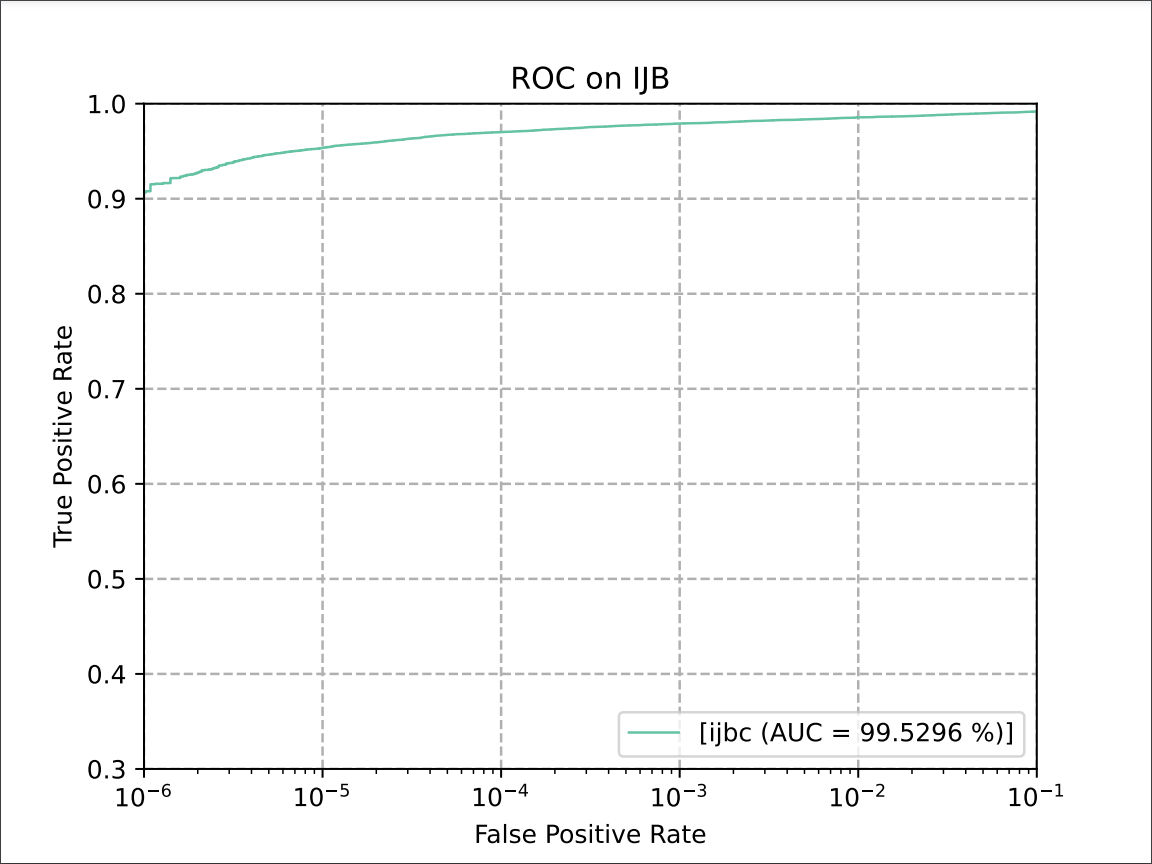

docs/auc_base.png

0 → 100644

59.1 KB

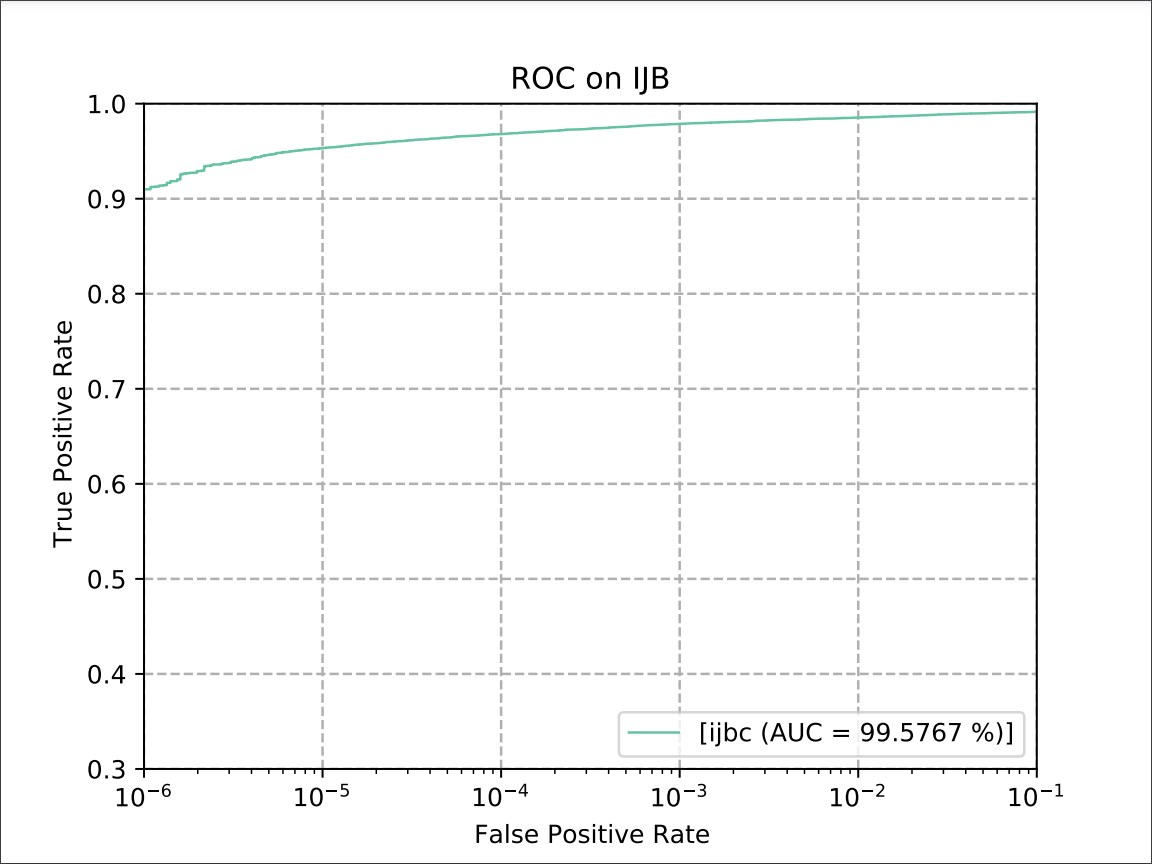

docs/auc_test.png

0 → 100644

59.7 KB

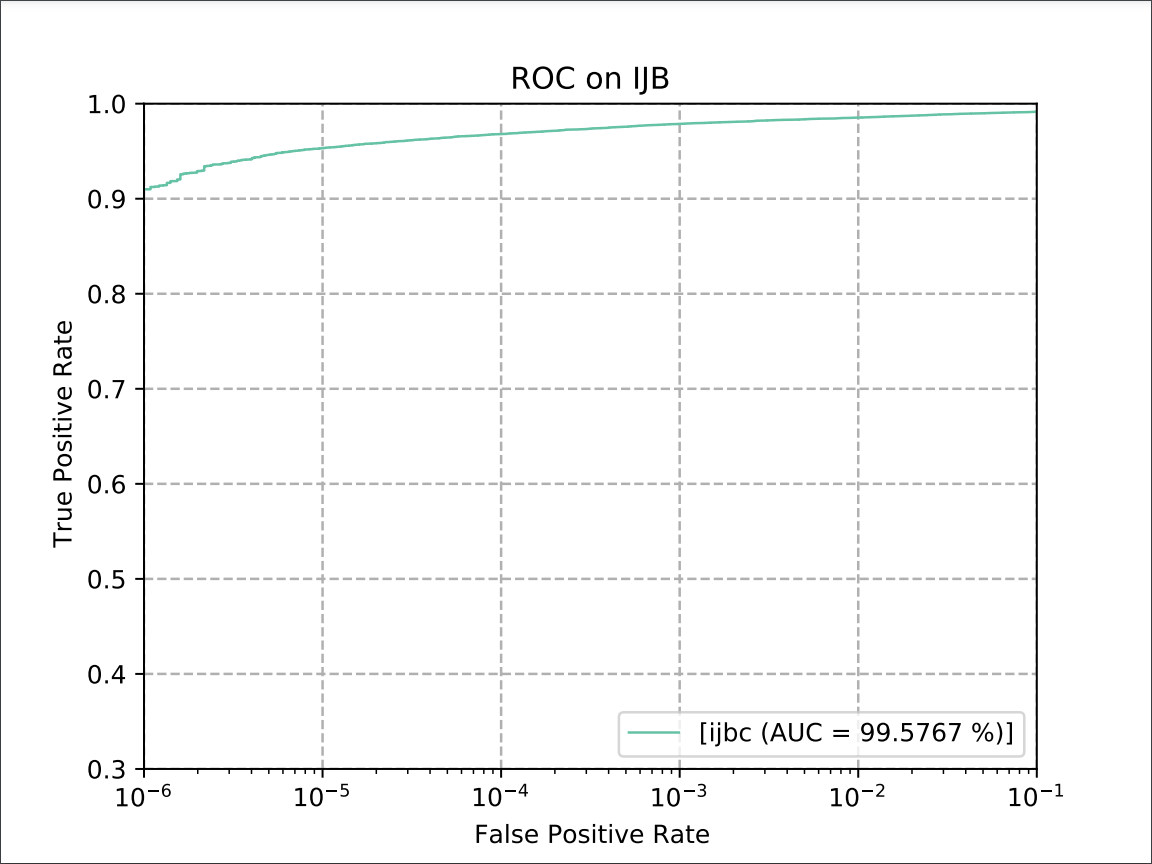

image.png

0 → 100644

65.8 KB

model.properties

0 → 100644