first

Showing

Too many changes to show.

To preserve performance only 674 of 674+ files are displayed.

File added

File added

docs/parameters.md

0 → 100644

docs/tutorial.md

0 → 100644

evaluation/readme.md

0 → 100644

evaluation/score_CEVAL.py

0 → 100644

evaluation/score_MMLU.py

0 → 100644

This source diff could not be displayed because it is too large. You can view the blob instead.

This source diff could not be displayed because it is too large. You can view the blob instead.

example_datas/test.jsonl

0 → 100644

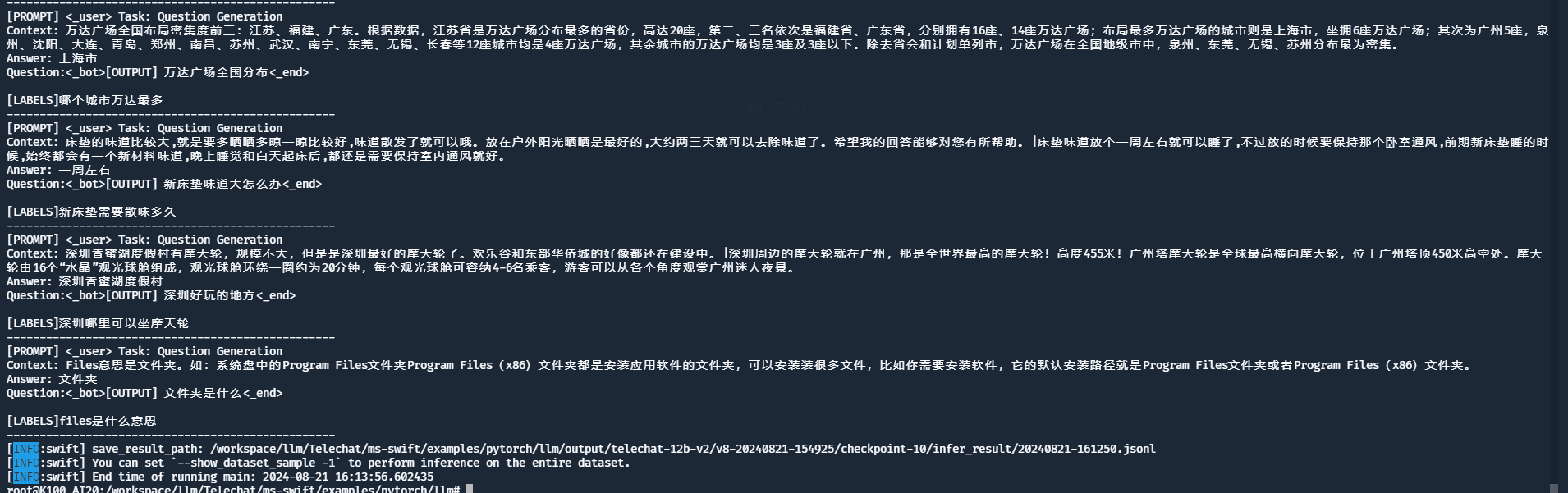

image-20240821162322664.png

0 → 100644

162 KB

88.9 KB

images/api页面.png

0 → 100644

65.2 KB

images/lora微调正常结果.png

0 → 100644

111 KB

images/web页面.png

0 → 100644

34.9 KB

images/wechat.jpg

0 → 100644

72 KB

images/单节点全参数微调正常结果.png

0 → 100644

117 KB