Update DEPLOYMENT_en.md, MODEL_LICENSE, PROMPT.md, PROMPT_en.md, README.md,...

Update DEPLOYMENT_en.md, MODEL_LICENSE, PROMPT.md, PROMPT_en.md, README.md, README_en.md, requirements.txt, .gitignore, LICENSE, update_requirements.sh files.

Deleted lvzhen.log, basic_demo/cli_demo.py, basic_demo/cli_demo_bad_word_ids.py, basic_demo/infer_test.py, basic_demo/utils.py, basic_demo/vocab.txt, basic_demo/web_demo.py, basic_demo/web_demo2.py, composite_demo/.streamlit/config.toml, composite_demo/assets/demo.png, composite_demo/assets/emojis.png, composite_demo/assets/heart.png, composite_demo/assets/tool.png, composite_demo/README.md, composite_demo/README_en.md, composite_demo/client.py, composite_demo/conversation.py, composite_demo/demo_chat.py, composite_demo/demo_ci.py, composite_demo/demo_tool.py, composite_demo/main.py, composite_demo/requirements.txt, composite_demo/tool_registry.py, cookbook/data/toutiao_cat_data_example.txt, cookbook/accurate_prompt.ipynb, cookbook/finetune_muti_classfication.ipynb, finetune_basemodel_demo/scripts/finetune_lora.sh, finetune_basemodel_demo/scripts/formate_alpaca2jsonl.py, finetune_basemodel_demo/README.md, finetune_basemodel_demo/arguments.py, finetune_basemodel_demo/finetune.py, finetune_basemodel_demo/inference.py, finetune_basemodel_demo/preprocess_utils.py, finetune_basemodel_demo/requirements.txt, finetune_basemodel_demo/trainer.py, finetune_chatmodel_demo/AdvertiseGen/dev.json, finetune_chatmodel_demo/AdvertiseGen/train.json, finetune_chatmodel_demo/configs/deepspeed.json, finetune_chatmodel_demo/formatted_data/advertise_gen.jsonl, finetune_chatmodel_demo/formatted_data/tool_alpaca.jsonl, finetune_chatmodel_demo/scripts/finetune_ds.sh, finetune_chatmodel_demo/scripts/finetune_ds_multiturn.sh, finetune_chatmodel_demo/scripts/finetune_pt.sh, finetune_chatmodel_demo/scripts/finetune_pt_multiturn.sh, finetune_chatmodel_demo/scripts/format_advertise_gen.py, finetune_chatmodel_demo/scripts/format_tool_alpaca.py, finetune_chatmodel_demo/README.md, finetune_chatmodel_demo/arguments.py, finetune_chatmodel_demo/finetune.py, finetune_chatmodel_demo/inference.py, finetune_chatmodel_demo/preprocess_utils.py, finetune_chatmodel_demo/requirements.txt, finetune_chatmodel_demo/train_data.json, finetune_chatmodel_demo/trainer.py, langchain_demo/Tool/Calculator.py, langchain_demo/Tool/Calculator.yaml, langchain_demo/Tool/Weather.py, langchain_demo/Tool/arxiv_example.yaml, langchain_demo/Tool/weather.yaml, langchain_demo/ChatGLM3.py, langchain_demo/README.md, langchain_demo/main.py, langchain_demo/requirements.txt, langchain_demo/utils.py, media/GLM.png, media/cli.png, media/transformers.jpg, openai_api_demo/openai_api.py, openai_api_demo/openai_api_request.py, openai_api_demo/requirements.txt, openai_api_demo/utils.py, resources/WECHAT.md, resources/cli-demo.png, resources/code_en.gif, resources/heart.png, resources/tool.png, resources/tool_en.png, resources/web-demo.gif, resources/web-demo2.gif, resources/web-demo2.png, resources/wechat.jpg, tool_using/README.md, tool_using/README_en.md, tool_using/cli_demo_tool.py, tool_using/openai_api_demo.py, tool_using/requirements.txt, tool_using/test.py, tool_using/tool_register.py, Dockerfile, README_old.md, model.properties files.


lvzhen.log deleted (100644 → 0)

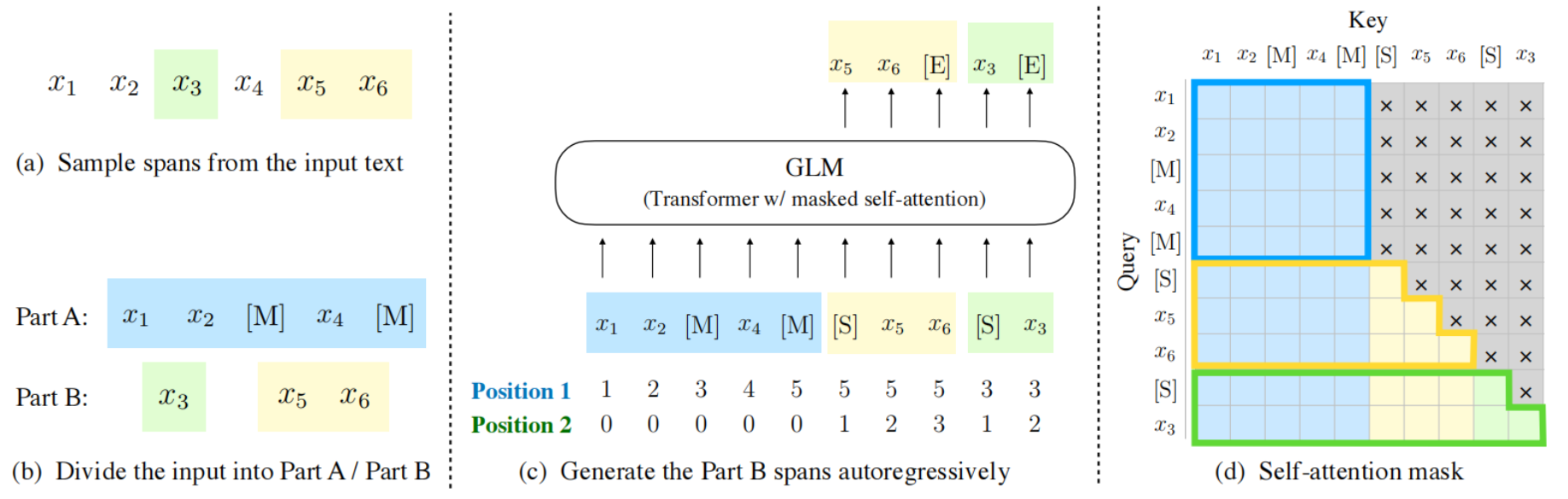

media/GLM.png deleted (100644 → 0), 261 KB

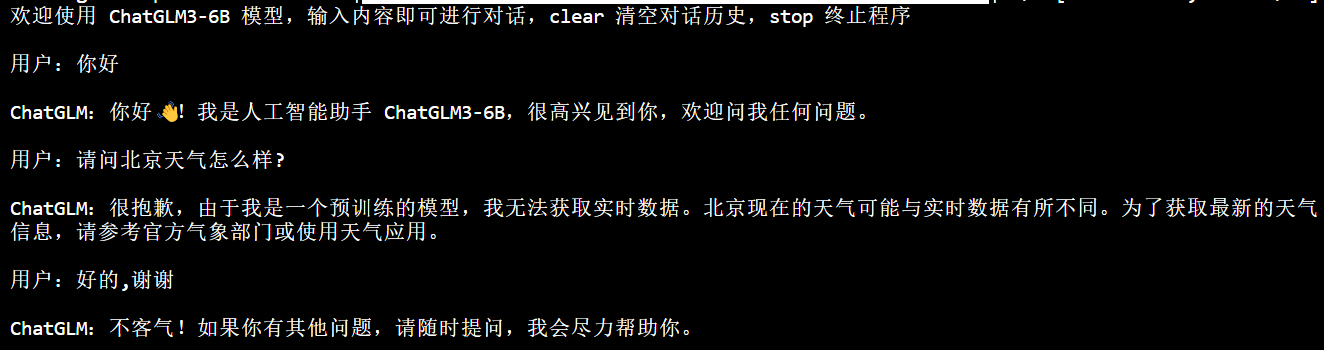

media/cli.png deleted (100644 → 0), 55.7 KB

32.7 KB

model.properties deleted (100644 → 0)
