*This model was released on 2022-02-28 and added to Hugging Face Transformers on 2022-10-12.*

# LiLT

## Overview

The LiLT model was proposed in [LiLT: A Simple yet Effective Language-Independent Layout Transformer for Structured Document Understanding](https://huggingface.co/papers/2202.13669) by Jiapeng Wang, Lianwen Jin, and Kai Ding.

LiLT makes it possible to combine any pre-trained RoBERTa text encoder with a lightweight Layout Transformer, enabling [LayoutLM](layoutlm)-like document understanding for many languages.

The abstract from the paper is the following:

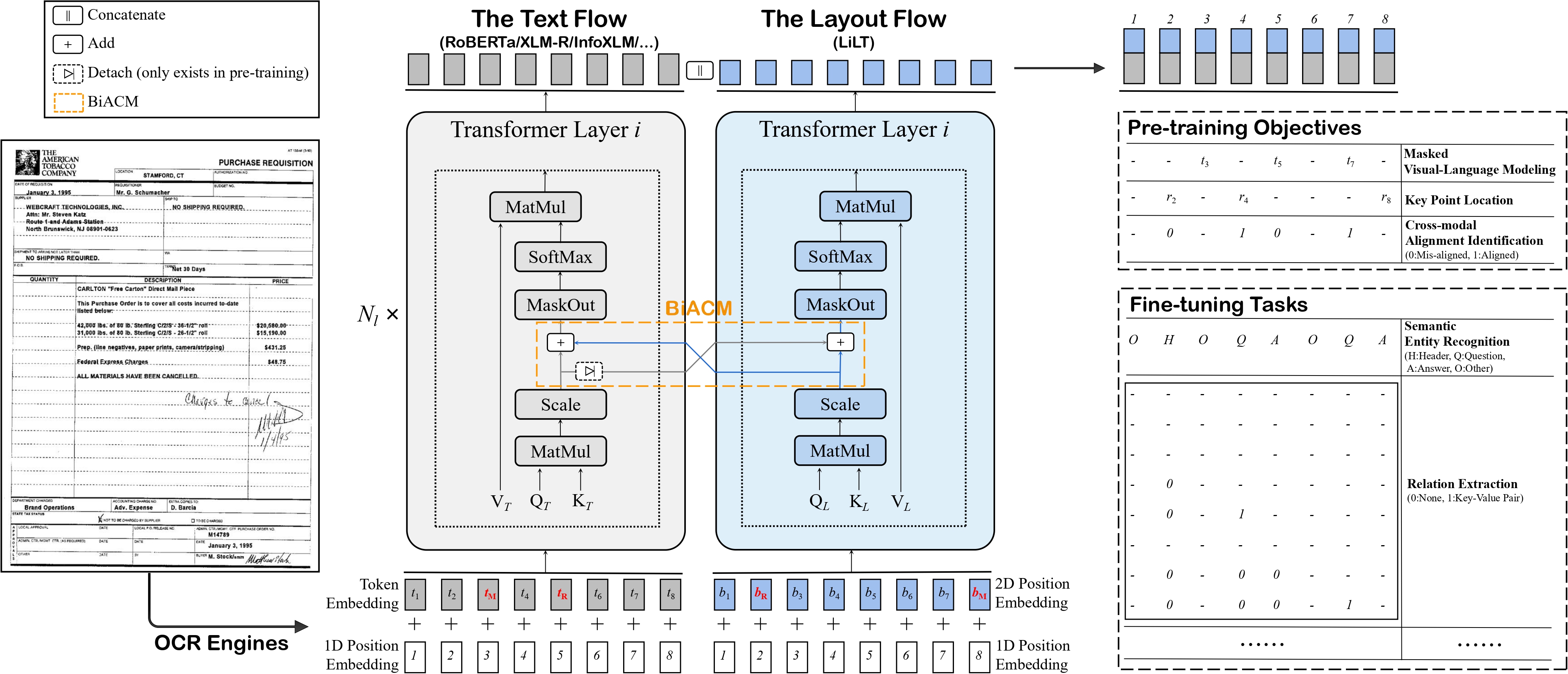

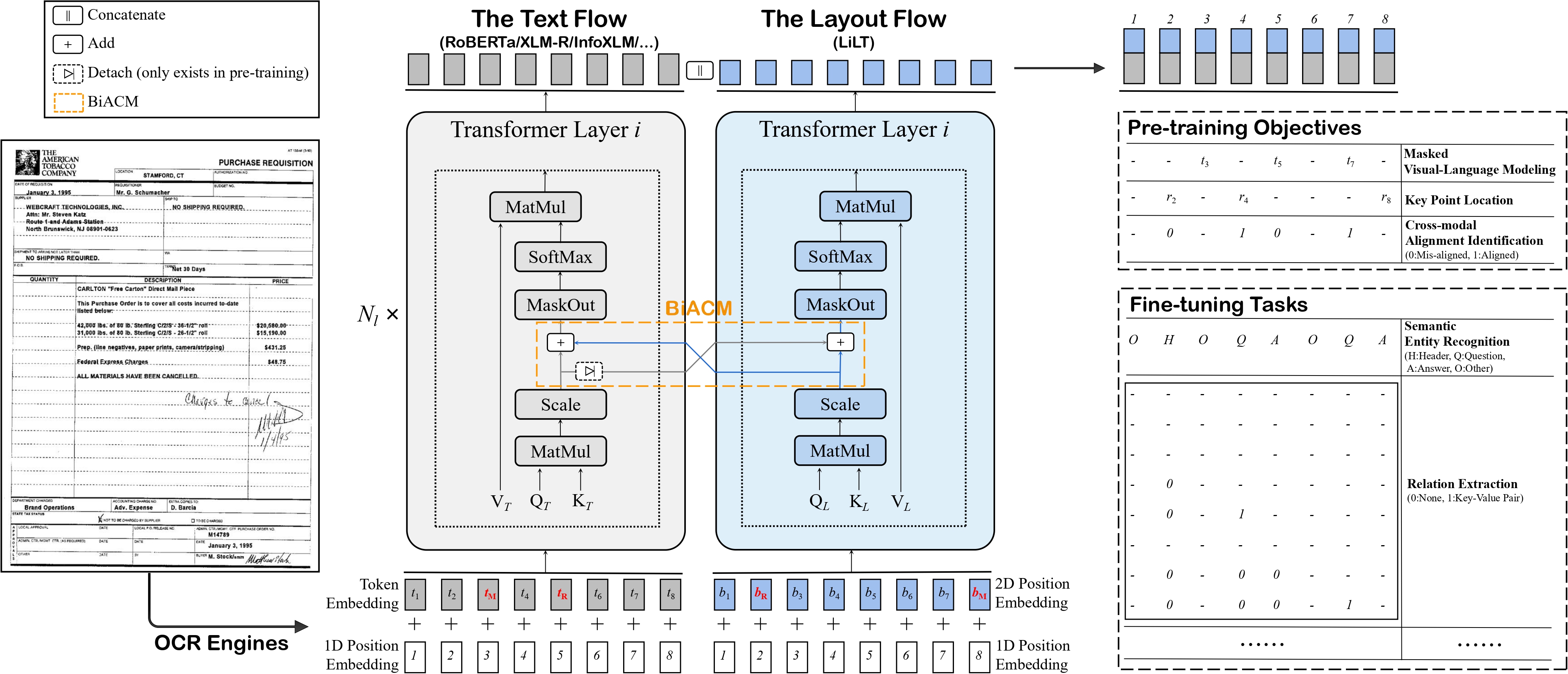

*Structured document understanding has attracted considerable attention and made significant progress recently, owing to its crucial role in intelligent document processing. However, most existing related models can only deal with the document data of specific language(s) (typically English) included in the pre-training collection, which is extremely limited. To address this issue, we propose a simple yet effective Language-independent Layout Transformer (LiLT) for structured document understanding. LiLT can be pre-trained on the structured documents of a single language and then directly fine-tuned on other languages with the corresponding off-the-shelf monolingual/multilingual pre-trained textual models. Experimental results on eight languages have shown that LiLT can achieve competitive or even superior performance on diverse widely-used downstream benchmarks, which enables language-independent benefit from the pre-training of document layout structure.*

LiLT architecture. Taken from the original paper.

This model was contributed by [nielsr](https://huggingface.co/nielsr).

The original code can be found [here](https://github.com/jpwang/lilt).

## Usage tips

- To combine the Language-Independent Layout Transformer with a new RoBERTa checkpoint from the [hub](https://huggingface.co/models?search=roberta), refer to [this guide](https://github.com/jpWang/LiLT#or-generate-your-own-checkpoint-optional).

The script stores `config.json` and `pytorch_model.bin` files locally. You can then load them and push the model to the Hub (assuming you're logged in with your Hugging Face account):

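```python
from transformers import LiltModel

# Load the locally generated checkpoint (the directory holding config.json and pytorch_model.bin)
model = LiltModel.from_pretrained("path_to_your_files")
# Upload the model to a repository on the Hub
model.push_to_hub("name_of_repo_on_the_hub")
```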

- When preparing data for the model, make sure to use the token vocabulary that corresponds to the RoBERTa checkpoint you combined with the Layout Transformer.

- As [lilt-roberta-en-base](https://huggingface.co/SCUT-DLVCLab/lilt-roberta-en-base) uses the same vocabulary as [LayoutLMv3](layoutlmv3), one can use [`LayoutLMv3TokenizerFast`] to prepare data for the model.

The same is true for [lilt-infoxlm-base](https://huggingface.co/SCUT-DLVCLab/lilt-infoxlm-base): one can use [`LayoutXLMTokenizerFast`] for that model.
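To illustrate the data preparation step described above, here is a minimal sketch. It assumes OCR output in pixel coordinates and the 0-1000 box scale that the LayoutLM-family tokenizers expect; neither is stated on this page. `normalize_box` and `encode_page` are hypothetical helper names, and the words, boxes, and page size are made up for illustration.

```python
from typing import List, Sequence


def normalize_box(box: Sequence[float], width: float, height: float) -> List[int]:
    """Scale a pixel-space (x0, y0, x1, y1) box to the 0-1000 range (assumed scale)."""
    x0, y0, x1, y1 = box
    return [
        int(1000 * x0 / width),
        int(1000 * y0 / height),
        int(1000 * x1 / width),
        int(1000 * y1 / height),
    ]


def encode_page(tokenizer, words, pixel_boxes, width, height):
    """Hypothetical helper: normalize the boxes, then tokenize words and boxes together."""
    boxes = [normalize_box(b, width, height) for b in pixel_boxes]
    # Layout-aware tokenizers such as LayoutLMv3TokenizerFast accept pre-split
    # words plus one box per word through the `boxes` argument.
    return tokenizer(words, boxes=boxes, truncation=True, return_tensors="pt")


# Made-up OCR output for an 800x600 page, for illustration only.
words = ["Invoice", "total"]
pixel_boxes = [(80, 60, 240, 90), (400, 300, 480, 330)]
normalized = [normalize_box(b, 800, 600) for b in pixel_boxes]
print(normalized)  # [[100, 100, 300, 150], [500, 500, 600, 550]]
```

With a real tokenizer, for example one loaded via `LayoutLMv3TokenizerFast.from_pretrained("SCUT-DLVCLab/lilt-roberta-en-base")`, `encode_page` should return `input_ids`, `attention_mask`, and `bbox` tensors that can be passed to the model.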

## Resources

A list of official Hugging Face and community (indicated by 🌎) resources to help you get started with LiLT.

- Demo notebooks for LiLT can be found [here](https://github.com/NielsRogge/Transformers-Tutorials/tree/master/LiLT).

**Documentation resources**

- [Text classification task guide](../tasks/sequence_classification)

- [Token classification task guide](../tasks/token_classification)

- [Question answering task guide](../tasks/question_answering)

If you're interested in submitting a resource to be included here, please feel free to open a Pull Request and we'll review it! The resource should ideally demonstrate something new instead of duplicating an existing resource.

## LiltConfig

[[autodoc]] LiltConfig

## LiltModel

[[autodoc]] LiltModel
    - forward

## LiltForSequenceClassification

[[autodoc]] LiltForSequenceClassification
    - forward

## LiltForTokenClassification

[[autodoc]] LiltForTokenClassification
    - forward

## LiltForQuestionAnswering

[[autodoc]] LiltForQuestionAnswering
    - forward
