add dtk24.04 code

Too many changes to show.

To preserve performance only 520 of 520+ files are displayed.

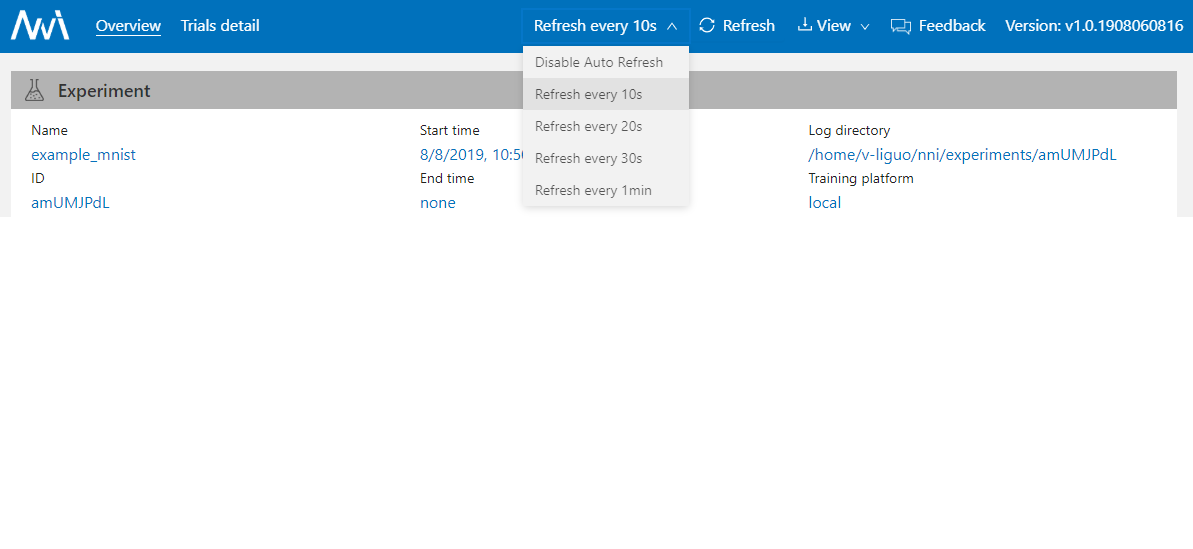

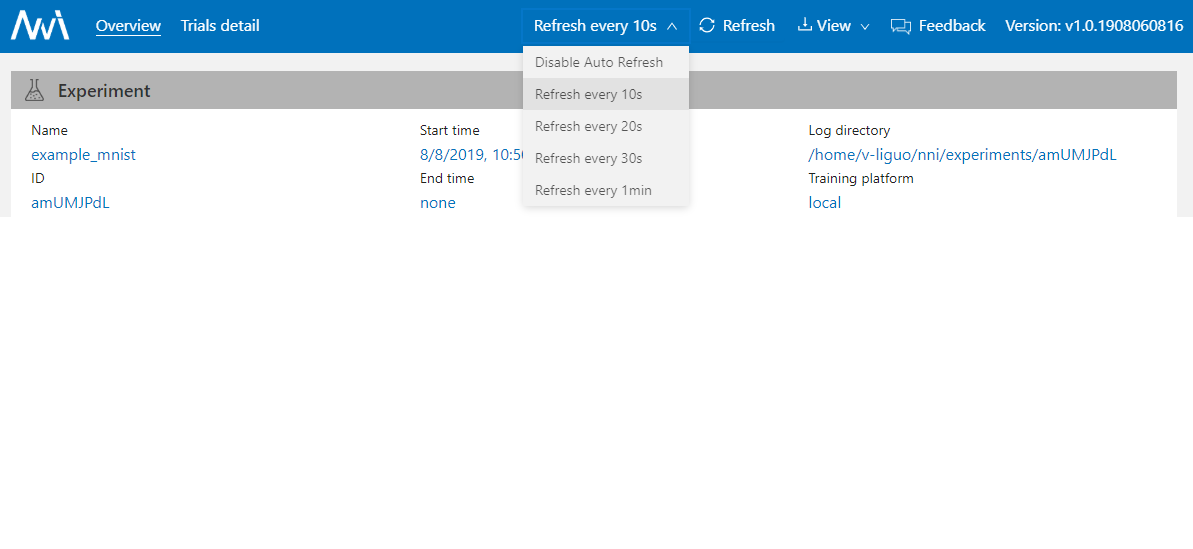

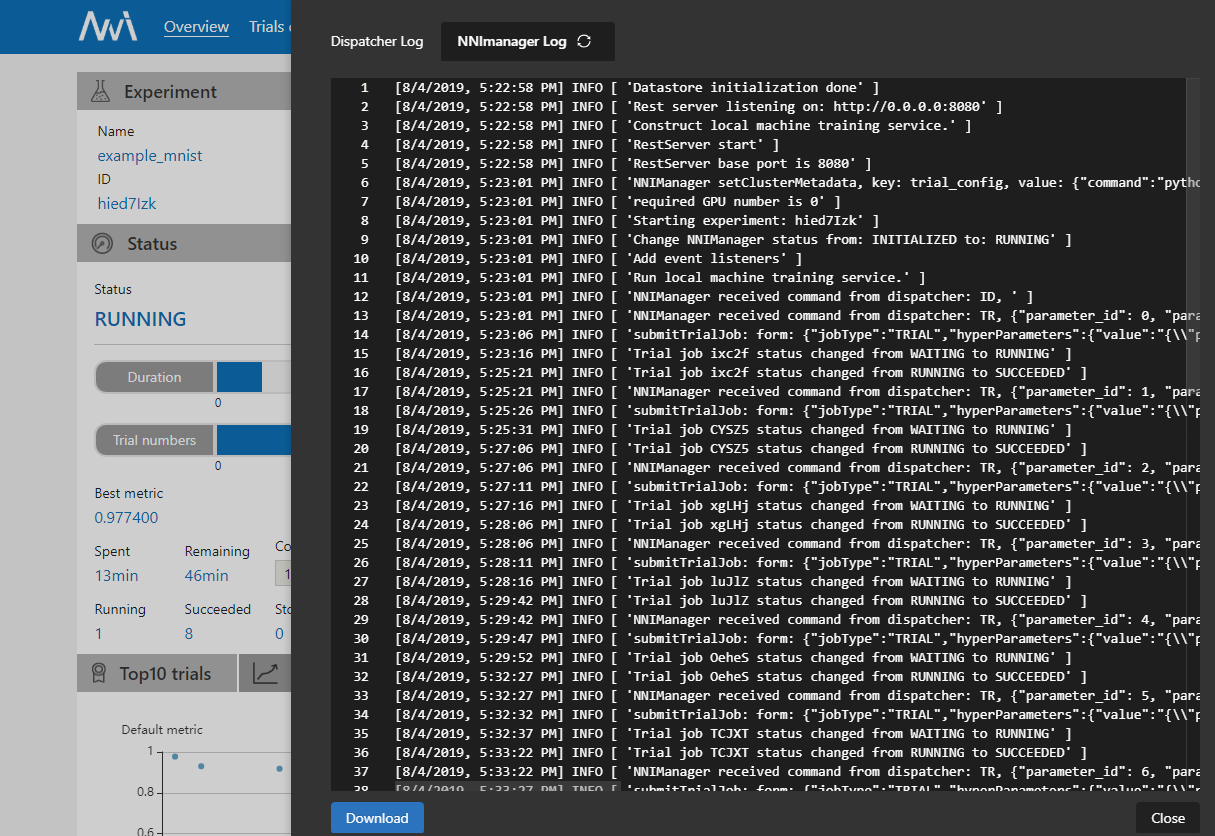

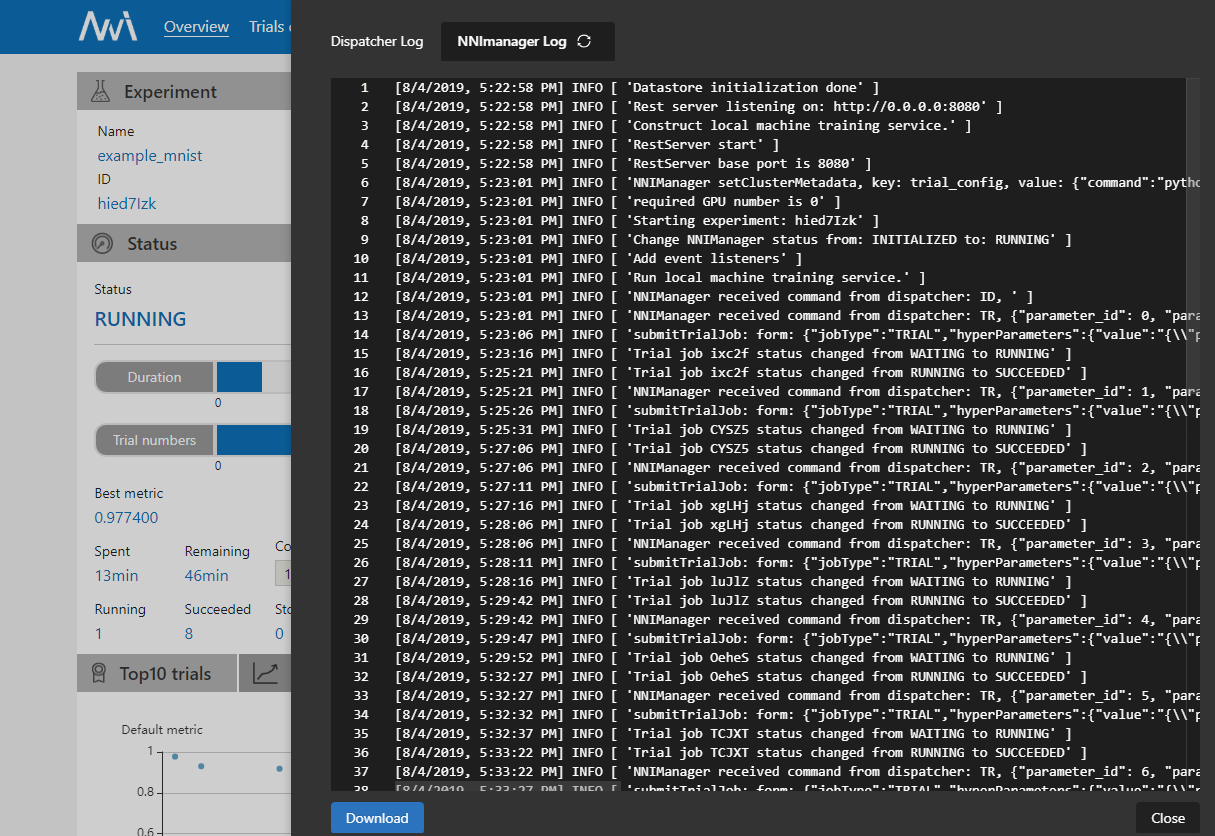
