diff --git a/CITATION.cff b/CITATION.cff

new file mode 100644

index 0000000000000000000000000000000000000000..81ea8f792645b1904e792918590eb215c62dd323

--- /dev/null

+++ b/CITATION.cff

@@ -0,0 +1,9 @@

+cff-version: 1.2.0

+message: "If you use this software, please cite it as below."

+title: "OpenMMLab's Pre-training Toolbox and Benchmark"

+authors:

+ - name: "MMPreTrain Contributors"

+version: 0.15.0

+date-released: 2023-04-06

+repository-code: "https://github.com/open-mmlab/mmpretrain"

+license: Apache-2.0

diff --git a/CONTRIBUTING.md b/CONTRIBUTING.md

new file mode 100644

index 0000000000000000000000000000000000000000..ce84c2a09f59785d3220a722b8ba1282c97a8030

--- /dev/null

+++ b/CONTRIBUTING.md

@@ -0,0 +1,73 @@

+# Contributing to MMPreTrain

+

+- [Contributing to MMPreTrain](#contributing-to-mmpretrain)

+ - [Workflow](#workflow)

+ - [Code style](#code-style)

+ - [Python](#python)

+ - [C++ and CUDA](#c-and-cuda)

+ - [Pre-commit Hook](#pre-commit-hook)

+

+Thanks for your interest in contributing to MMPreTrain! All kinds of contributions are welcome, including but not limited to the following.

+

+- Fix typo or bugs

+- Add documentation or translate the documentation into other languages

+- Add new features and components

+

+## Workflow

+

+We recommend the potential contributors follow this workflow for contribution.

+

+1. Fork and pull the latest MMPreTrain repository, follow [get started](https://mmpretrain.readthedocs.io/en/latest/get_started.html) to setup the environment.

+2. Checkout a new branch (**do not use the master or dev branch** for PRs)

+

+```bash

+git checkout -b xxxx # xxxx is the name of new branch

+```

+

+3. Edit the related files follow the code style mentioned below

+4. Use [pre-commit hook](https://pre-commit.com/) to check and format your changes.

+5. Commit your changes

+6. Create a PR with related information

+

+## Code style

+

+### Python

+

+We adopt [PEP8](https://www.python.org/dev/peps/pep-0008/) as the preferred code style.

+

+We use the following tools for linting and formatting:

+

+- [flake8](https://github.com/PyCQA/flake8): A wrapper around some linter tools.

+- [isort](https://github.com/timothycrosley/isort): A Python utility to sort imports.

+- [yapf](https://github.com/google/yapf): A formatter for Python files.

+- [codespell](https://github.com/codespell-project/codespell): A Python utility to fix common misspellings in text files.

+- [mdformat](https://github.com/executablebooks/mdformat): Mdformat is an opinionated Markdown formatter that can be used to enforce a consistent style in Markdown files.

+- [docformatter](https://github.com/myint/docformatter): A formatter to format docstring.

+

+Style configurations of yapf and isort can be found in [setup.cfg](https://github.com/open-mmlab/mmpretrain/blob/main/setup.cfg).

+

+### C++ and CUDA

+

+We follow the [Google C++ Style Guide](https://google.github.io/styleguide/cppguide.html).

+

+## Pre-commit Hook

+

+We use [pre-commit hook](https://pre-commit.com/) that checks and formats for `flake8`, `yapf`, `isort`, `trailing whitespaces`, `markdown files`,

+fixes `end-of-files`, `double-quoted-strings`, `python-encoding-pragma`, `mixed-line-ending`, sorts `requirments.txt` automatically on every commit.

+The config for a pre-commit hook is stored in [.pre-commit-config](https://github.com/open-mmlab/mmpretrain/blob/main/.pre-commit-config.yaml).

+

+After you clone the repository, you will need to install initialize pre-commit hook.

+

+```shell

+pip install -U pre-commit

+```

+

+From the repository folder

+

+```shell

+pre-commit install

+```

+

+After this on every commit check code linters and formatter will be enforced.

+

+> Before you create a PR, make sure that your code lints and is formatted by yapf.

diff --git a/LICENSE b/LICENSE

new file mode 100644

index 0000000000000000000000000000000000000000..ae87343779455c4c4b43e10a27d1657142666726

--- /dev/null

+++ b/LICENSE

@@ -0,0 +1,203 @@

+Copyright (c) OpenMMLab. All rights reserved

+

+ Apache License

+ Version 2.0, January 2004

+ http://www.apache.org/licenses/

+

+ TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

+

+ 1. Definitions.

+

+ "License" shall mean the terms and conditions for use, reproduction,

+ and distribution as defined by Sections 1 through 9 of this document.

+

+ "Licensor" shall mean the copyright owner or entity authorized by

+ the copyright owner that is granting the License.

+

+ "Legal Entity" shall mean the union of the acting entity and all

+ other entities that control, are controlled by, or are under common

+ control with that entity. For the purposes of this definition,

+ "control" means (i) the power, direct or indirect, to cause the

+ direction or management of such entity, whether by contract or

+ otherwise, or (ii) ownership of fifty percent (50%) or more of the

+ outstanding shares, or (iii) beneficial ownership of such entity.

+

+ "You" (or "Your") shall mean an individual or Legal Entity

+ exercising permissions granted by this License.

+

+ "Source" form shall mean the preferred form for making modifications,

+ including but not limited to software source code, documentation

+ source, and configuration files.

+

+ "Object" form shall mean any form resulting from mechanical

+ transformation or translation of a Source form, including but

+ not limited to compiled object code, generated documentation,

+ and conversions to other media types.

+

+ "Work" shall mean the work of authorship, whether in Source or

+ Object form, made available under the License, as indicated by a

+ copyright notice that is included in or attached to the work

+ (an example is provided in the Appendix below).

+

+ "Derivative Works" shall mean any work, whether in Source or Object

+ form, that is based on (or derived from) the Work and for which the

+ editorial revisions, annotations, elaborations, or other modifications

+ represent, as a whole, an original work of authorship. For the purposes

+ of this License, Derivative Works shall not include works that remain

+ separable from, or merely link (or bind by name) to the interfaces of,

+ the Work and Derivative Works thereof.

+

+ "Contribution" shall mean any work of authorship, including

+ the original version of the Work and any modifications or additions

+ to that Work or Derivative Works thereof, that is intentionally

+ submitted to Licensor for inclusion in the Work by the copyright owner

+ or by an individual or Legal Entity authorized to submit on behalf of

+ the copyright owner. For the purposes of this definition, "submitted"

+ means any form of electronic, verbal, or written communication sent

+ to the Licensor or its representatives, including but not limited to

+ communication on electronic mailing lists, source code control systems,

+ and issue tracking systems that are managed by, or on behalf of, the

+ Licensor for the purpose of discussing and improving the Work, but

+ excluding communication that is conspicuously marked or otherwise

+ designated in writing by the copyright owner as "Not a Contribution."

+

+ "Contributor" shall mean Licensor and any individual or Legal Entity

+ on behalf of whom a Contribution has been received by Licensor and

+ subsequently incorporated within the Work.

+

+ 2. Grant of Copyright License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ copyright license to reproduce, prepare Derivative Works of,

+ publicly display, publicly perform, sublicense, and distribute the

+ Work and such Derivative Works in Source or Object form.

+

+ 3. Grant of Patent License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ (except as stated in this section) patent license to make, have made,

+ use, offer to sell, sell, import, and otherwise transfer the Work,

+ where such license applies only to those patent claims licensable

+ by such Contributor that are necessarily infringed by their

+ Contribution(s) alone or by combination of their Contribution(s)

+ with the Work to which such Contribution(s) was submitted. If You

+ institute patent litigation against any entity (including a

+ cross-claim or counterclaim in a lawsuit) alleging that the Work

+ or a Contribution incorporated within the Work constitutes direct

+ or contributory patent infringement, then any patent licenses

+ granted to You under this License for that Work shall terminate

+ as of the date such litigation is filed.

+

+ 4. Redistribution. You may reproduce and distribute copies of the

+ Work or Derivative Works thereof in any medium, with or without

+ modifications, and in Source or Object form, provided that You

+ meet the following conditions:

+

+ (a) You must give any other recipients of the Work or

+ Derivative Works a copy of this License; and

+

+ (b) You must cause any modified files to carry prominent notices

+ stating that You changed the files; and

+

+ (c) You must retain, in the Source form of any Derivative Works

+ that You distribute, all copyright, patent, trademark, and

+ attribution notices from the Source form of the Work,

+ excluding those notices that do not pertain to any part of

+ the Derivative Works; and

+

+ (d) If the Work includes a "NOTICE" text file as part of its

+ distribution, then any Derivative Works that You distribute must

+ include a readable copy of the attribution notices contained

+ within such NOTICE file, excluding those notices that do not

+ pertain to any part of the Derivative Works, in at least one

+ of the following places: within a NOTICE text file distributed

+ as part of the Derivative Works; within the Source form or

+ documentation, if provided along with the Derivative Works; or,

+ within a display generated by the Derivative Works, if and

+ wherever such third-party notices normally appear. The contents

+ of the NOTICE file are for informational purposes only and

+ do not modify the License. You may add Your own attribution

+ notices within Derivative Works that You distribute, alongside

+ or as an addendum to the NOTICE text from the Work, provided

+ that such additional attribution notices cannot be construed

+ as modifying the License.

+

+ You may add Your own copyright statement to Your modifications and

+ may provide additional or different license terms and conditions

+ for use, reproduction, or distribution of Your modifications, or

+ for any such Derivative Works as a whole, provided Your use,

+ reproduction, and distribution of the Work otherwise complies with

+ the conditions stated in this License.

+

+ 5. Submission of Contributions. Unless You explicitly state otherwise,

+ any Contribution intentionally submitted for inclusion in the Work

+ by You to the Licensor shall be under the terms and conditions of

+ this License, without any additional terms or conditions.

+ Notwithstanding the above, nothing herein shall supersede or modify

+ the terms of any separate license agreement you may have executed

+ with Licensor regarding such Contributions.

+

+ 6. Trademarks. This License does not grant permission to use the trade

+ names, trademarks, service marks, or product names of the Licensor,

+ except as required for reasonable and customary use in describing the

+ origin of the Work and reproducing the content of the NOTICE file.

+

+ 7. Disclaimer of Warranty. Unless required by applicable law or

+ agreed to in writing, Licensor provides the Work (and each

+ Contributor provides its Contributions) on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

+ implied, including, without limitation, any warranties or conditions

+ of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

+ PARTICULAR PURPOSE. You are solely responsible for determining the

+ appropriateness of using or redistributing the Work and assume any

+ risks associated with Your exercise of permissions under this License.

+

+ 8. Limitation of Liability. In no event and under no legal theory,

+ whether in tort (including negligence), contract, or otherwise,

+ unless required by applicable law (such as deliberate and grossly

+ negligent acts) or agreed to in writing, shall any Contributor be

+ liable to You for damages, including any direct, indirect, special,

+ incidental, or consequential damages of any character arising as a

+ result of this License or out of the use or inability to use the

+ Work (including but not limited to damages for loss of goodwill,

+ work stoppage, computer failure or malfunction, or any and all

+ other commercial damages or losses), even if such Contributor

+ has been advised of the possibility of such damages.

+

+ 9. Accepting Warranty or Additional Liability. While redistributing

+ the Work or Derivative Works thereof, You may choose to offer,

+ and charge a fee for, acceptance of support, warranty, indemnity,

+ or other liability obligations and/or rights consistent with this

+ License. However, in accepting such obligations, You may act only

+ on Your own behalf and on Your sole responsibility, not on behalf

+ of any other Contributor, and only if You agree to indemnify,

+ defend, and hold each Contributor harmless for any liability

+ incurred by, or claims asserted against, such Contributor by reason

+ of your accepting any such warranty or additional liability.

+

+ END OF TERMS AND CONDITIONS

+

+ APPENDIX: How to apply the Apache License to your work.

+

+ To apply the Apache License to your work, attach the following

+ boilerplate notice, with the fields enclosed by brackets "[]"

+ replaced with your own identifying information. (Don't include

+ the brackets!) The text should be enclosed in the appropriate

+ comment syntax for the file format. We also recommend that a

+ file or class name and description of purpose be included on the

+ same "printed page" as the copyright notice for easier

+ identification within third-party archives.

+

+ Copyright 2020 MMPreTrain Authors.

+

+ Licensed under the Apache License, Version 2.0 (the "License");

+ you may not use this file except in compliance with the License.

+ You may obtain a copy of the License at

+

+ http://www.apache.org/licenses/LICENSE-2.0

+

+ Unless required by applicable law or agreed to in writing, software

+ distributed under the License is distributed on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ See the License for the specific language governing permissions and

+ limitations under the License.

diff --git a/MANIFEST.in b/MANIFEST.in

new file mode 100644

index 0000000000000000000000000000000000000000..ad4d8dafbdeb31327429c94430a8338e5f024acb

--- /dev/null

+++ b/MANIFEST.in

@@ -0,0 +1,5 @@

+include requirements/*.txt

+include mmpretrain/.mim/model-index.yml

+include mmpretrain/.mim/dataset-index.yml

+recursive-include mmpretrain/.mim/configs *.py *.yml

+recursive-include mmpretrain/.mim/tools *.py *.sh

diff --git a/README.md b/README.md

index a67d4b0aa6efa336d0b9be3f0244c77fc3f00c98..5318df5b958b8f54dcba1896776eebfb04ba9871 100644

--- a/README.md

+++ b/README.md

@@ -1,2 +1,339 @@

-# mmpretrain

+

+

+

+

+

+

+[](https://pypi.org/project/mmpretrain)

+[](https://mmpretrain.readthedocs.io/en/latest/)

+[](https://github.com/open-mmlab/mmpretrain/actions)

+[](https://codecov.io/gh/open-mmlab/mmpretrain)

+[](https://github.com/open-mmlab/mmpretrain/blob/main/LICENSE)

+[](https://github.com/open-mmlab/mmpretrain/issues)

+[](https://github.com/open-mmlab/mmpretrain/issues)

+

+[📘 Documentation](https://mmpretrain.readthedocs.io/en/latest/) |

+[🛠️ Installation](https://mmpretrain.readthedocs.io/en/latest/get_started.html#installation) |

+[👀 Model Zoo](https://mmpretrain.readthedocs.io/en/latest/modelzoo_statistics.html) |

+[🆕 Update News](https://mmpretrain.readthedocs.io/en/latest/notes/changelog.html) |

+[🤔 Reporting Issues](https://github.com/open-mmlab/mmpretrain/issues/new/choose)

+

+

+

+English | [简体中文](/README_zh-CN.md)

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+ Overview

+

+

+

+

+ |

+ Supported Backbones

+ |

+

+ Self-supervised Learning

+ |

+

+ Multi-Modality Algorithms

+ |

+

+ Others

+ |

+

+

+ |

+

+ |

+

+

+ |

+

+

+ |

+

+ Image Retrieval Task:

+

+ Training&Test Tips:

+

+ |

+

+

+

+## Contributing

+

+We appreciate all contributions to improve MMPreTrain.

+Please refer to [CONTRUBUTING](https://mmpretrain.readthedocs.io/en/latest/notes/contribution_guide.html) for the contributing guideline.

+

+## Acknowledgement

+

+MMPreTrain is an open source project that is contributed by researchers and engineers from various colleges and companies. We appreciate all the contributors who implement their methods or add new features, as well as users who give valuable feedbacks.

+We wish that the toolbox and benchmark could serve the growing research community by providing a flexible toolkit to reimplement existing methods and supporting their own academic research.

+

+## Citation

+

+If you find this project useful in your research, please consider cite:

+

+```BibTeX

+@misc{2023mmpretrain,

+ title={OpenMMLab's Pre-training Toolbox and Benchmark},

+ author={MMPreTrain Contributors},

+ howpublished = {\url{https://github.com/open-mmlab/mmpretrain}},

+ year={2023}

+}

+```

+

+## License

+

+This project is released under the [Apache 2.0 license](LICENSE).

+

+## Projects in OpenMMLab

+

+- [MMEngine](https://github.com/open-mmlab/mmengine): OpenMMLab foundational library for training deep learning models.

+- [MMCV](https://github.com/open-mmlab/mmcv): OpenMMLab foundational library for computer vision.

+- [MIM](https://github.com/open-mmlab/mim): MIM installs OpenMMLab packages.

+- [MMEval](https://github.com/open-mmlab/mmeval): A unified evaluation library for multiple machine learning libraries.

+- [MMPreTrain](https://github.com/open-mmlab/mmpretrain): OpenMMLab pre-training toolbox and benchmark.

+- [MMDetection](https://github.com/open-mmlab/mmdetection): OpenMMLab detection toolbox and benchmark.

+- [MMDetection3D](https://github.com/open-mmlab/mmdetection3d): OpenMMLab's next-generation platform for general 3D object detection.

+- [MMRotate](https://github.com/open-mmlab/mmrotate): OpenMMLab rotated object detection toolbox and benchmark.

+- [MMYOLO](https://github.com/open-mmlab/mmyolo): OpenMMLab YOLO series toolbox and benchmark.

+- [MMSegmentation](https://github.com/open-mmlab/mmsegmentation): OpenMMLab semantic segmentation toolbox and benchmark.

+- [MMOCR](https://github.com/open-mmlab/mmocr): OpenMMLab text detection, recognition, and understanding toolbox.

+- [MMPose](https://github.com/open-mmlab/mmpose): OpenMMLab pose estimation toolbox and benchmark.

+- [MMHuman3D](https://github.com/open-mmlab/mmhuman3d): OpenMMLab 3D human parametric model toolbox and benchmark.

+- [MMSelfSup](https://github.com/open-mmlab/mmselfsup): OpenMMLab self-supervised learning toolbox and benchmark.

+- [MMRazor](https://github.com/open-mmlab/mmrazor): OpenMMLab model compression toolbox and benchmark.

+- [MMFewShot](https://github.com/open-mmlab/mmfewshot): OpenMMLab fewshot learning toolbox and benchmark.

+- [MMAction2](https://github.com/open-mmlab/mmaction2): OpenMMLab's next-generation action understanding toolbox and benchmark.

+- [MMTracking](https://github.com/open-mmlab/mmtracking): OpenMMLab video perception toolbox and benchmark.

+- [MMFlow](https://github.com/open-mmlab/mmflow): OpenMMLab optical flow toolbox and benchmark.

+- [MMagic](https://github.com/open-mmlab/mmagic): Open**MM**Lab **A**dvanced, **G**enerative and **I**ntelligent **C**reation toolbox.

+- [MMGeneration](https://github.com/open-mmlab/mmgeneration): OpenMMLab image and video generative models toolbox.

+- [MMDeploy](https://github.com/open-mmlab/mmdeploy): OpenMMLab model deployment framework.

+- [Playground](https://github.com/open-mmlab/playground): A central hub for gathering and showcasing amazing projects built upon OpenMMLab.

diff --git a/README_zh-CN.md b/README_zh-CN.md

new file mode 100644

index 0000000000000000000000000000000000000000..9ee8dffc401d414c0c2b7135ba2a4887f80608a4

--- /dev/null

+++ b/README_zh-CN.md

@@ -0,0 +1,353 @@

+

+

+

+

+

+

+

+[](https://pypi.org/project/mmpretrain)

+[](https://mmpretrain.readthedocs.io/zh_CN/latest/)

+[](https://github.com/open-mmlab/mmpretrain/actions)

+[](https://codecov.io/gh/open-mmlab/mmpretrain)

+[](https://github.com/open-mmlab/mmpretrain/blob/main/LICENSE)

+[](https://github.com/open-mmlab/mmpretrain/issues)

+[](https://github.com/open-mmlab/mmpretrain/issues)

+

+[📘 中文文档](https://mmpretrain.readthedocs.io/zh_CN/latest/) |

+[🛠️ 安装教程](https://mmpretrain.readthedocs.io/zh_CN/latest/get_started.html) |

+[👀 模型库](https://mmpretrain.readthedocs.io/zh_CN/latest/modelzoo_statistics.html) |

+[🆕 更新日志](https://mmpretrain.readthedocs.io/zh_CN/latest/notes/changelog.html) |

+[🤔 报告问题](https://github.com/open-mmlab/mmpretrain/issues/new/choose)

+

+

+

+[English](/README.md) | 简体中文

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+ 概览

+

+

+

+

+ |

+ 支持的主干网络

+ |

+

+ 自监督学习

+ |

+

+ 多模态算法

+ |

+

+ 其它

+ |

+

+

+ |

+

+ |

+

+

+ |

+

+

+ |

+

+ 图像检索任务:

+

+ 训练和测试 Tips:

+

+ |

+

+

+

+## 参与贡献

+

+我们非常欢迎任何有助于提升 MMPreTrain 的贡献,请参考 [贡献指南](https://mmpretrain.readthedocs.io/zh_CN/latest/notes/contribution_guide.html) 来了解如何参与贡献。

+

+## 致谢

+

+MMPreTrain 是一款由不同学校和公司共同贡献的开源项目。我们感谢所有为项目提供算法复现和新功能支持的贡献者,以及提供宝贵反馈的用户。

+我们希望该工具箱和基准测试可以为社区提供灵活的代码工具,供用户复现现有算法并开发自己的新模型,从而不断为开源社区提供贡献。

+

+## 引用

+

+如果你在研究中使用了本项目的代码或者性能基准,请参考如下 bibtex 引用 MMPreTrain。

+

+```BibTeX

+@misc{2023mmpretrain,

+ title={OpenMMLab's Pre-training Toolbox and Benchmark},

+ author={MMPreTrain Contributors},

+ howpublished = {\url{https://github.com/open-mmlab/mmpretrain}},

+ year={2023}

+}

+```

+

+## 许可证

+

+该项目开源自 [Apache 2.0 license](LICENSE).

+

+## OpenMMLab 的其他项目

+

+- [MMEngine](https://github.com/open-mmlab/mmengine): OpenMMLab 深度学习模型训练基础库

+- [MMCV](https://github.com/open-mmlab/mmcv): OpenMMLab 计算机视觉基础库

+- [MIM](https://github.com/open-mmlab/mim): MIM 是 OpenMMlab 项目、算法、模型的统一入口

+- [MMEval](https://github.com/open-mmlab/mmeval): 统一开放的跨框架算法评测库

+- [MMPreTrain](https://github.com/open-mmlab/mmpretrain): OpenMMLab 深度学习预训练工具箱

+- [MMDetection](https://github.com/open-mmlab/mmdetection): OpenMMLab 目标检测工具箱

+- [MMDetection3D](https://github.com/open-mmlab/mmdetection3d): OpenMMLab 新一代通用 3D 目标检测平台

+- [MMRotate](https://github.com/open-mmlab/mmrotate): OpenMMLab 旋转框检测工具箱与测试基准

+- [MMYOLO](https://github.com/open-mmlab/mmyolo): OpenMMLab YOLO 系列工具箱与测试基准

+- [MMSegmentation](https://github.com/open-mmlab/mmsegmentation): OpenMMLab 语义分割工具箱

+- [MMOCR](https://github.com/open-mmlab/mmocr): OpenMMLab 全流程文字检测识别理解工具包

+- [MMPose](https://github.com/open-mmlab/mmpose): OpenMMLab 姿态估计工具箱

+- [MMHuman3D](https://github.com/open-mmlab/mmhuman3d): OpenMMLab 人体参数化模型工具箱与测试基准

+- [MMSelfSup](https://github.com/open-mmlab/mmselfsup): OpenMMLab 自监督学习工具箱与测试基准

+- [MMRazor](https://github.com/open-mmlab/mmrazor): OpenMMLab 模型压缩工具箱与测试基准

+- [MMFewShot](https://github.com/open-mmlab/mmfewshot): OpenMMLab 少样本学习工具箱与测试基准

+- [MMAction2](https://github.com/open-mmlab/mmaction2): OpenMMLab 新一代视频理解工具箱

+- [MMTracking](https://github.com/open-mmlab/mmtracking): OpenMMLab 一体化视频目标感知平台

+- [MMFlow](https://github.com/open-mmlab/mmflow): OpenMMLab 光流估计工具箱与测试基准

+- [MMagic](https://github.com/open-mmlab/mmagic): OpenMMLab 新一代人工智能内容生成(AIGC)工具箱

+- [MMGeneration](https://github.com/open-mmlab/mmgeneration): OpenMMLab 图片视频生成模型工具箱

+- [MMDeploy](https://github.com/open-mmlab/mmdeploy): OpenMMLab 模型部署框架

+- [Playground](https://github.com/open-mmlab/playground): 收集和展示 OpenMMLab 相关的前沿、有趣的社区项目

+

+## 欢迎加入 OpenMMLab 社区

+

+扫描下方的二维码可关注 OpenMMLab 团队的 [知乎官方账号](https://www.zhihu.com/people/openmmlab),扫描下方微信二维码添加喵喵好友,进入 MMPretrain 微信交流社群。【加好友申请格式:研究方向+地区+学校/公司+姓名】

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+Show the paper's abstract

+

+

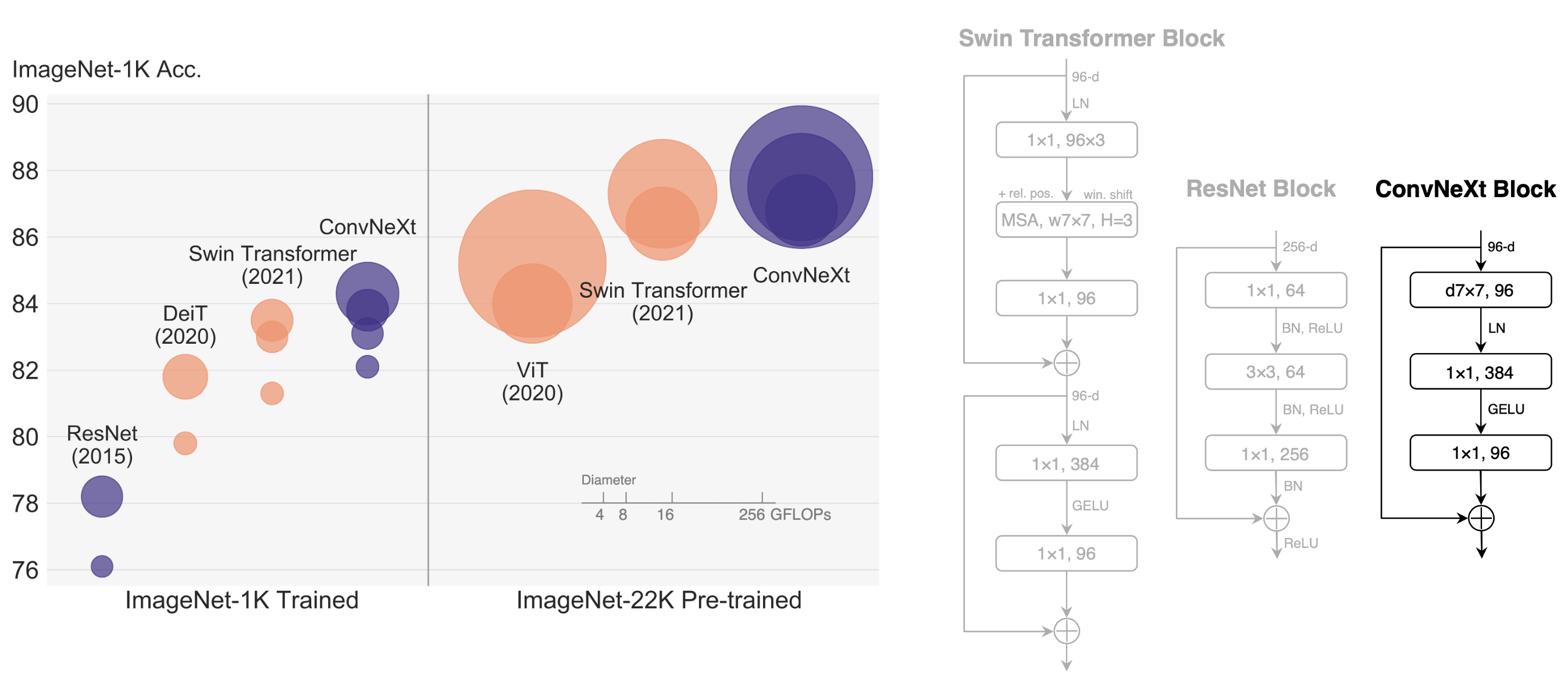

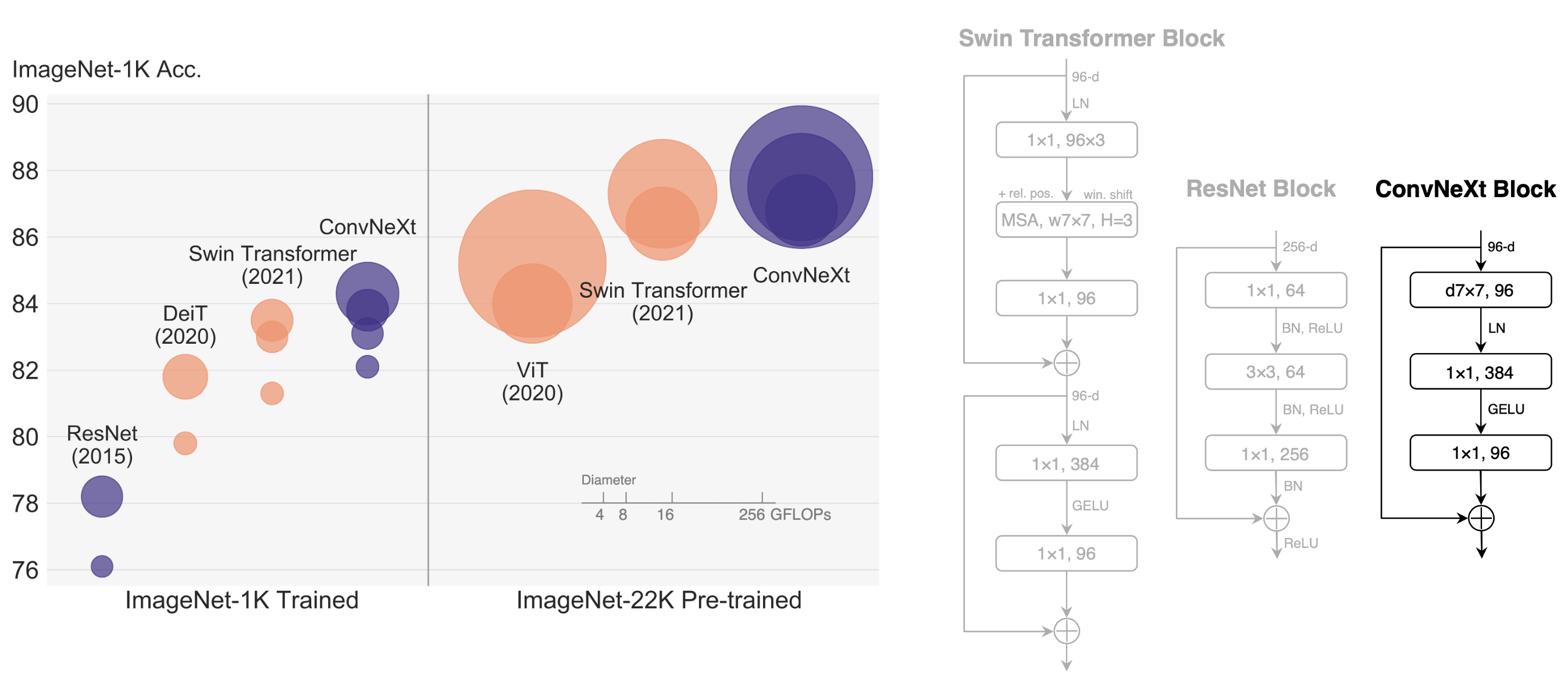

+The "Roaring 20s" of visual recognition began with the introduction of Vision Transformers (ViTs), which quickly superseded ConvNets as the state-of-the-art image classification model. A vanilla ViT, on the other hand, faces difficulties when applied to general computer vision tasks such as object detection and semantic segmentation. It is the hierarchical Transformers (e.g., Swin Transformers) that reintroduced several ConvNet priors, making Transformers practically viable as a generic vision backbone and demonstrating remarkable performance on a wide variety of vision tasks. However, the effectiveness of such hybrid approaches is still largely credited to the intrinsic superiority of Transformers, rather than the inherent inductive biases of convolutions. In this work, we reexamine the design spaces and test the limits of what a pure ConvNet can achieve. We gradually "modernize" a standard ResNet toward the design of a vision Transformer, and discover several key components that contribute to the performance difference along the way. The outcome of this exploration is a family of pure ConvNet models dubbed ConvNeXt. Constructed entirely from standard ConvNet modules, ConvNeXts compete favorably with Transformers in terms of accuracy and scalability, achieving 87.8% ImageNet top-1 accuracy and outperforming Swin Transformers on COCO detection and ADE20K segmentation, while maintaining the simplicity and efficiency of standard ConvNets.

+

+

+

+

+## How to use it?

+

+

+

+**Predict image**

+

+```python

+from mmpretrain import inference_model

+

+predict = inference_model('convnext-tiny_32xb128_in1k', 'demo/bird.JPEG')

+print(predict['pred_class'])

+print(predict['pred_score'])

+```

+

+**Use the model**

+

+```python

+import torch

+from mmpretrain import get_model

+

+model = get_model('convnext-tiny_32xb128_in1k', pretrained=True)

+inputs = torch.rand(1, 3, 224, 224)

+out = model(inputs)

+print(type(out))

+# To extract features.

+feats = model.extract_feat(inputs)

+print(type(feats))

+```

+

+**Train/Test Command**

+

+Prepare your dataset according to the [docs](https://mmpretrain.readthedocs.io/en/latest/user_guides/dataset_prepare.html#prepare-dataset).

+

+Train:

+

+```shell

+python tools/train.py configs/convnext/convnext-tiny_32xb128_in1k.py

+```

+

+Test:

+

+```shell

+python tools/test.py configs/convnext/convnext-tiny_32xb128_in1k.py https://download.openmmlab.com/mmclassification/v0/convnext/convnext-tiny_32xb128_in1k_20221207-998cf3e9.pth

+```

+

+

+

+## Models and results

+

+### Pretrained models

+

+| Model | Params (M) | Flops (G) | Config | Download |

+| :--------------------------------- | :--------: | :-------: | :---------------------------------------: | :--------------------------------------------------------------------------------------------------------: |

+| `convnext-base_3rdparty_in21k`\* | 88.59 | 15.36 | [config](convnext-base_32xb128_in21k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_3rdparty_in21k_20220124-13b83eec.pth) |

+| `convnext-large_3rdparty_in21k`\* | 197.77 | 34.37 | [config](convnext-large_64xb64_in21k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-large_3rdparty_in21k_20220124-41b5a79f.pth) |

+| `convnext-xlarge_3rdparty_in21k`\* | 350.20 | 60.93 | [config](convnext-xlarge_64xb64_in21k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-xlarge_3rdparty_in21k_20220124-f909bad7.pth) |

+

+*Models with * are converted from the [official repo](https://github.com/facebookresearch/ConvNeXt). The config files of these models are only for inference. We haven't reproduce the training results.*

+

+### Image Classification on ImageNet-1k

+

+| Model | Pretrain | Params (M) | Flops (G) | Top-1 (%) | Top-5 (%) | Config | Download |

+| :------------------------------------------------ | :----------: | :--------: | :-------: | :-------: | :-------: | :--------------------------------------------: | :------------------------------------------------------: |

+| `convnext-tiny_32xb128_in1k` | From scratch | 28.59 | 4.46 | 82.14 | 96.06 | [config](convnext-tiny_32xb128_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-tiny_32xb128_in1k_20221207-998cf3e9.pth) \| [log](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-tiny_32xb128_in1k_20221207-998cf3e9.json) |

+| `convnext-tiny_32xb128-noema_in1k` | From scratch | 28.59 | 4.46 | 81.95 | 95.89 | [config](convnext-tiny_32xb128_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-tiny_32xb128-noema_in1k_20221208-5d4509c7.pth) \| [log](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-tiny_32xb128_in1k_20221207-998cf3e9.json) |

+| `convnext-tiny_in21k-pre_3rdparty_in1k`\* | ImageNet-21k | 28.59 | 4.46 | 82.90 | 96.62 | [config](convnext-tiny_32xb128_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-tiny_in21k-pre_3rdparty_in1k_20221219-7501e534.pth) |

+| `convnext-tiny_in21k-pre_3rdparty_in1k-384px`\* | ImageNet-21k | 28.59 | 13.14 | 84.11 | 97.14 | [config](convnext-tiny_32xb128_in1k-384px.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-tiny_in21k-pre_3rdparty_in1k-384px_20221219-c1182362.pth) |

+| `convnext-small_32xb128_in1k` | From scratch | 50.22 | 8.69 | 83.16 | 96.56 | [config](convnext-small_32xb128_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-small_32xb128_in1k_20221207-4ab7052c.pth) \| [log](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-small_32xb128_in1k_20221207-4ab7052c.json) |

+| `convnext-small_32xb128-noema_in1k` | From scratch | 50.22 | 8.69 | 83.21 | 96.48 | [config](convnext-small_32xb128_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-small_32xb128-noema_in1k_20221208-4a618995.pth) \| [log](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-small_32xb128_in1k_20221207-4ab7052c.json) |

+| `convnext-small_in21k-pre_3rdparty_in1k`\* | ImageNet-21k | 50.22 | 8.69 | 84.59 | 97.41 | [config](convnext-small_32xb128_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-small_in21k-pre_3rdparty_in1k_20221219-aeca4c93.pth) |

+| `convnext-small_in21k-pre_3rdparty_in1k-384px`\* | ImageNet-21k | 50.22 | 25.58 | 85.75 | 97.88 | [config](convnext-small_32xb128_in1k-384px.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-small_in21k-pre_3rdparty_in1k-384px_20221219-96f0bb87.pth) |

+| `convnext-base_32xb128_in1k` | From scratch | 88.59 | 15.36 | 83.66 | 96.74 | [config](convnext-base_32xb128_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_32xb128_in1k_20221207-fbdb5eb9.pth) \| [log](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_32xb128_in1k_20221207-fbdb5eb9.json) |

+| `convnext-base_32xb128-noema_in1k` | From scratch | 88.59 | 15.36 | 83.64 | 96.61 | [config](convnext-base_32xb128_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_32xb128-noema_in1k_20221208-f8182678.pth) \| [log](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_32xb128_in1k_20221207-fbdb5eb9.json) |

+| `convnext-base_3rdparty_in1k`\* | From scratch | 88.59 | 15.36 | 83.85 | 96.74 | [config](convnext-base_32xb128_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_3rdparty_32xb128_in1k_20220124-d0915162.pth) |

+| `convnext-base_3rdparty-noema_in1k`\* | From scratch | 88.59 | 15.36 | 83.71 | 96.60 | [config](convnext-base_32xb128_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_3rdparty_32xb128-noema_in1k_20220222-dba4f95f.pth) |

+| `convnext-base_3rdparty_in1k-384px`\* | From scratch | 88.59 | 45.21 | 85.10 | 97.34 | [config](convnext-base_32xb128_in1k-384px.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_3rdparty_in1k-384px_20221219-c8f1dc2b.pth) |

+| `convnext-base_in21k-pre_3rdparty_in1k`\* | ImageNet-21k | 88.59 | 15.36 | 85.81 | 97.86 | [config](convnext-base_32xb128_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_in21k-pre-3rdparty_32xb128_in1k_20220124-eb2d6ada.pth) |

+| `convnext-base_in21k-pre-3rdparty_in1k-384px`\* | From scratch | 88.59 | 45.21 | 86.82 | 98.25 | [config](convnext-base_32xb128_in1k-384px.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_in21k-pre-3rdparty_in1k-384px_20221219-4570f792.pth) |

+| `convnext-large_3rdparty_in1k`\* | From scratch | 197.77 | 34.37 | 84.30 | 96.89 | [config](convnext-large_64xb64_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-large_3rdparty_64xb64_in1k_20220124-f8a0ded0.pth) |

+| `convnext-large_3rdparty_in1k-384px`\* | From scratch | 197.77 | 101.10 | 85.50 | 97.59 | [config](convnext-large_64xb64_in1k-384px.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-large_3rdparty_in1k-384px_20221219-6dd29d10.pth) |

+| `convnext-large_in21k-pre_3rdparty_in1k`\* | ImageNet-21k | 197.77 | 34.37 | 86.61 | 98.04 | [config](convnext-large_64xb64_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-large_in21k-pre-3rdparty_64xb64_in1k_20220124-2412403d.pth) |

+| `convnext-large_in21k-pre-3rdparty_in1k-384px`\* | From scratch | 197.77 | 101.10 | 87.46 | 98.37 | [config](convnext-large_64xb64_in1k-384px.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-large_in21k-pre-3rdparty_in1k-384px_20221219-6d38dd66.pth) |

+| `convnext-xlarge_in21k-pre_3rdparty_in1k`\* | ImageNet-21k | 350.20 | 60.93 | 86.97 | 98.20 | [config](convnext-xlarge_64xb64_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-xlarge_in21k-pre-3rdparty_64xb64_in1k_20220124-76b6863d.pth) |

+| `convnext-xlarge_in21k-pre-3rdparty_in1k-384px`\* | From scratch | 350.20 | 179.20 | 87.76 | 98.55 | [config](convnext-xlarge_64xb64_in1k-384px.py) | [model](https://download.openmmlab.com/mmclassification/v0/convnext/convnext-xlarge_in21k-pre-3rdparty_in1k-384px_20221219-b161bc14.pth) |

+

+*Models with * are converted from the [official repo](https://github.com/facebookresearch/ConvNeXt). The config files of these models are only for inference. We haven't reproduce the training results.*

+

+## Citation

+

+```bibtex

+@Article{liu2022convnet,

+ author = {Zhuang Liu and Hanzi Mao and Chao-Yuan Wu and Christoph Feichtenhofer and Trevor Darrell and Saining Xie},

+ title = {A ConvNet for the 2020s},

+ journal = {arXiv preprint arXiv:2201.03545},

+ year = {2022},

+}

+```

diff --git a/configs/convnext/convnext-base_32xb128_in1k-384px.py b/configs/convnext/convnext-base_32xb128_in1k-384px.py

new file mode 100644

index 0000000000000000000000000000000000000000..65546942562ac17b3d4510c78d3090aa8b87a831

--- /dev/null

+++ b/configs/convnext/convnext-base_32xb128_in1k-384px.py

@@ -0,0 +1,23 @@

+_base_ = [

+ '../_base_/models/convnext/convnext-base.py',

+ '../_base_/datasets/imagenet_bs64_swin_384.py',

+ '../_base_/schedules/imagenet_bs1024_adamw_swin.py',

+ '../_base_/default_runtime.py',

+]

+

+# dataset setting

+train_dataloader = dict(batch_size=128)

+

+# schedule setting

+optim_wrapper = dict(

+ optimizer=dict(lr=4e-3),

+ clip_grad=dict(max_norm=5.0),

+)

+

+# runtime setting

+custom_hooks = [dict(type='EMAHook', momentum=4e-5, priority='ABOVE_NORMAL')]

+

+# NOTE: `auto_scale_lr` is for automatically scaling LR

+# based on the actual training batch size.

+# base_batch_size = (32 GPUs) x (128 samples per GPU)

+auto_scale_lr = dict(base_batch_size=4096)

diff --git a/configs/convnext/convnext-base_32xb128_in1k.py b/configs/convnext/convnext-base_32xb128_in1k.py

new file mode 100644

index 0000000000000000000000000000000000000000..5ae8ec47c4c7ac3f22712c97dbad315c7a798e6f

--- /dev/null

+++ b/configs/convnext/convnext-base_32xb128_in1k.py

@@ -0,0 +1,23 @@

+_base_ = [

+ '../_base_/models/convnext/convnext-base.py',

+ '../_base_/datasets/imagenet_bs64_swin_224.py',

+ '../_base_/schedules/imagenet_bs1024_adamw_swin.py',

+ '../_base_/default_runtime.py',

+]

+

+# dataset setting

+train_dataloader = dict(batch_size=128)

+

+# schedule setting

+optim_wrapper = dict(

+ optimizer=dict(lr=4e-3),

+ clip_grad=None,

+)

+

+# runtime setting

+custom_hooks = [dict(type='EMAHook', momentum=1e-4, priority='ABOVE_NORMAL')]

+

+# NOTE: `auto_scale_lr` is for automatically scaling LR

+# based on the actual training batch size.

+# base_batch_size = (32 GPUs) x (128 samples per GPU)

+auto_scale_lr = dict(base_batch_size=4096)

diff --git a/configs/convnext/convnext-base_32xb128_in21k.py b/configs/convnext/convnext-base_32xb128_in21k.py

new file mode 100644

index 0000000000000000000000000000000000000000..c343526c7f084501fc3651c1581752209f5019a4

--- /dev/null

+++ b/configs/convnext/convnext-base_32xb128_in21k.py

@@ -0,0 +1,24 @@

+_base_ = [

+ '../_base_/models/convnext/convnext-base.py',

+ '../_base_/datasets/imagenet21k_bs128.py',

+ '../_base_/schedules/imagenet_bs1024_adamw_swin.py',

+ '../_base_/default_runtime.py',

+]

+

+# model setting

+model = dict(head=dict(num_classes=21841))

+

+# dataset setting

+data_preprocessor = dict(num_classes=21841)

+train_dataloader = dict(batch_size=128)

+

+# schedule setting

+optim_wrapper = dict(

+ optimizer=dict(lr=4e-3),

+ clip_grad=dict(max_norm=5.0),

+)

+

+# NOTE: `auto_scale_lr` is for automatically scaling LR

+# based on the actual training batch size.

+# base_batch_size = (32 GPUs) x (128 samples per GPU)

+auto_scale_lr = dict(base_batch_size=4096)

diff --git a/configs/convnext/convnext-large_64xb64_in1k-384px.py b/configs/convnext/convnext-large_64xb64_in1k-384px.py

new file mode 100644

index 0000000000000000000000000000000000000000..6698b9edcdae463d6d1cf943237efbaf236cd71c

--- /dev/null

+++ b/configs/convnext/convnext-large_64xb64_in1k-384px.py

@@ -0,0 +1,23 @@

+_base_ = [

+ '../_base_/models/convnext/convnext-large.py',

+ '../_base_/datasets/imagenet_bs64_swin_384.py',

+ '../_base_/schedules/imagenet_bs1024_adamw_swin.py',

+ '../_base_/default_runtime.py',

+]

+

+# dataset setting

+train_dataloader = dict(batch_size=64)

+

+# schedule setting

+optim_wrapper = dict(

+ optimizer=dict(lr=4e-3),

+ clip_grad=dict(max_norm=5.0),

+)

+

+# runtime setting

+custom_hooks = [dict(type='EMAHook', momentum=4e-5, priority='ABOVE_NORMAL')]

+

+# NOTE: `auto_scale_lr` is for automatically scaling LR

+# based on the actual training batch size.

+# base_batch_size = (64 GPUs) x (64 samples per GPU)

+auto_scale_lr = dict(base_batch_size=4096)

diff --git a/configs/convnext/convnext-large_64xb64_in1k.py b/configs/convnext/convnext-large_64xb64_in1k.py

new file mode 100644

index 0000000000000000000000000000000000000000..8a78c58bc3d85e0e08083d339378886f870388bc

--- /dev/null

+++ b/configs/convnext/convnext-large_64xb64_in1k.py

@@ -0,0 +1,23 @@

+_base_ = [

+ '../_base_/models/convnext/convnext-large.py',

+ '../_base_/datasets/imagenet_bs64_swin_224.py',

+ '../_base_/schedules/imagenet_bs1024_adamw_swin.py',

+ '../_base_/default_runtime.py',

+]

+

+# dataset setting

+train_dataloader = dict(batch_size=64)

+

+# schedule setting

+optim_wrapper = dict(

+ optimizer=dict(lr=4e-3),

+ clip_grad=None,

+)

+

+# runtime setting

+custom_hooks = [dict(type='EMAHook', momentum=1e-4, priority='ABOVE_NORMAL')]

+

+# NOTE: `auto_scale_lr` is for automatically scaling LR

+# based on the actual training batch size.

+# base_batch_size = (64 GPUs) x (64 samples per GPU)

+auto_scale_lr = dict(base_batch_size=4096)

diff --git a/configs/convnext/convnext-large_64xb64_in21k.py b/configs/convnext/convnext-large_64xb64_in21k.py

new file mode 100644

index 0000000000000000000000000000000000000000..420edab67b1dc094f08b4a3810af522b2a988b62

--- /dev/null

+++ b/configs/convnext/convnext-large_64xb64_in21k.py

@@ -0,0 +1,24 @@

+_base_ = [

+ '../_base_/models/convnext/convnext-base.py',

+ '../_base_/datasets/imagenet21k_bs128.py',

+ '../_base_/schedules/imagenet_bs1024_adamw_swin.py',

+ '../_base_/default_runtime.py',

+]

+

+# model setting

+model = dict(head=dict(num_classes=21841))

+

+# dataset setting

+data_preprocessor = dict(num_classes=21841)

+train_dataloader = dict(batch_size=64)

+

+# schedule setting

+optim_wrapper = dict(

+ optimizer=dict(lr=4e-3),

+ clip_grad=dict(max_norm=5.0),

+)

+

+# NOTE: `auto_scale_lr` is for automatically scaling LR

+# based on the actual training batch size.

+# base_batch_size = (32 GPUs) x (128 samples per GPU)

+auto_scale_lr = dict(base_batch_size=4096)

diff --git a/configs/convnext/convnext-small_32xb128_in1k-384px.py b/configs/convnext/convnext-small_32xb128_in1k-384px.py

new file mode 100644

index 0000000000000000000000000000000000000000..729f00ad2fdf53943ffae9de165e2e9985e733c7

--- /dev/null

+++ b/configs/convnext/convnext-small_32xb128_in1k-384px.py

@@ -0,0 +1,23 @@

+_base_ = [

+ '../_base_/models/convnext/convnext-small.py',

+ '../_base_/datasets/imagenet_bs64_swin_384.py',

+ '../_base_/schedules/imagenet_bs1024_adamw_swin.py',

+ '../_base_/default_runtime.py',

+]

+

+# dataset setting

+train_dataloader = dict(batch_size=128)

+

+# schedule setting

+optim_wrapper = dict(

+ optimizer=dict(lr=4e-3),

+ clip_grad=dict(max_norm=5.0),

+)

+

+# runtime setting

+custom_hooks = [dict(type='EMAHook', momentum=4e-5, priority='ABOVE_NORMAL')]

+

+# NOTE: `auto_scale_lr` is for automatically scaling LR

+# based on the actual training batch size.

+# base_batch_size = (32 GPUs) x (128 samples per GPU)

+auto_scale_lr = dict(base_batch_size=4096)

diff --git a/configs/convnext/convnext-small_32xb128_in1k.py b/configs/convnext/convnext-small_32xb128_in1k.py

new file mode 100644

index 0000000000000000000000000000000000000000..b623e900f830fbea7891b61c737398c0dee1076e

--- /dev/null

+++ b/configs/convnext/convnext-small_32xb128_in1k.py

@@ -0,0 +1,23 @@

+_base_ = [

+ '../_base_/models/convnext/convnext-small.py',

+ '../_base_/datasets/imagenet_bs64_swin_224.py',

+ '../_base_/schedules/imagenet_bs1024_adamw_swin.py',

+ '../_base_/default_runtime.py',

+]

+

+# dataset setting

+train_dataloader = dict(batch_size=128)

+

+# schedule setting

+optim_wrapper = dict(

+ optimizer=dict(lr=4e-3),

+ clip_grad=None,

+)

+

+# runtime setting

+custom_hooks = [dict(type='EMAHook', momentum=1e-4, priority='ABOVE_NORMAL')]

+

+# NOTE: `auto_scale_lr` is for automatically scaling LR

+# based on the actual training batch size.

+# base_batch_size = (32 GPUs) x (128 samples per GPU)

+auto_scale_lr = dict(base_batch_size=4096)

diff --git a/configs/convnext/convnext-tiny_32xb128_in1k-384px.py b/configs/convnext/convnext-tiny_32xb128_in1k-384px.py

new file mode 100644

index 0000000000000000000000000000000000000000..6513ad8dfa41714ecb5c9de5992496716337c596

--- /dev/null

+++ b/configs/convnext/convnext-tiny_32xb128_in1k-384px.py

@@ -0,0 +1,23 @@

+_base_ = [

+ '../_base_/models/convnext/convnext-tiny.py',

+ '../_base_/datasets/imagenet_bs64_swin_384.py',

+ '../_base_/schedules/imagenet_bs1024_adamw_swin.py',

+ '../_base_/default_runtime.py',

+]

+

+# dataset setting

+train_dataloader = dict(batch_size=128)

+

+# schedule setting

+optim_wrapper = dict(

+ optimizer=dict(lr=4e-3),

+ clip_grad=dict(max_norm=5.0),

+)

+

+# runtime setting

+custom_hooks = [dict(type='EMAHook', momentum=4e-5, priority='ABOVE_NORMAL')]

+

+# NOTE: `auto_scale_lr` is for automatically scaling LR

+# based on the actual training batch size.

+# base_batch_size = (32 GPUs) x (128 samples per GPU)

+auto_scale_lr = dict(base_batch_size=4096)

diff --git a/configs/convnext/convnext-tiny_32xb128_in1k.py b/configs/convnext/convnext-tiny_32xb128_in1k.py

new file mode 100644

index 0000000000000000000000000000000000000000..59d3004bde89510b5c44110c8a6513957c0cbba0

--- /dev/null

+++ b/configs/convnext/convnext-tiny_32xb128_in1k.py

@@ -0,0 +1,23 @@

+_base_ = [

+ '../_base_/models/convnext/convnext-tiny.py',

+ '../_base_/datasets/imagenet_bs64_swin_224.py',

+ '../_base_/schedules/imagenet_bs1024_adamw_swin.py',

+ '../_base_/default_runtime.py',

+]

+

+# dataset setting

+train_dataloader = dict(batch_size=128)

+

+# schedule setting

+optim_wrapper = dict(

+ optimizer=dict(lr=4e-3),

+ clip_grad=None,

+)

+

+# runtime setting

+custom_hooks = [dict(type='EMAHook', momentum=1e-4, priority='ABOVE_NORMAL')]

+

+# NOTE: `auto_scale_lr` is for automatically scaling LR

+# based on the actual training batch size.

+# base_batch_size = (32 GPUs) x (128 samples per GPU)

+auto_scale_lr = dict(base_batch_size=4096)

diff --git a/configs/convnext/convnext-xlarge_64xb64_in1k-384px.py b/configs/convnext/convnext-xlarge_64xb64_in1k-384px.py

new file mode 100644

index 0000000000000000000000000000000000000000..6edc94d2448157fc82bf38a988bf4393f192a89f

--- /dev/null

+++ b/configs/convnext/convnext-xlarge_64xb64_in1k-384px.py

@@ -0,0 +1,23 @@

+_base_ = [

+ '../_base_/models/convnext/convnext-xlarge.py',

+ '../_base_/datasets/imagenet_bs64_swin_384.py',

+ '../_base_/schedules/imagenet_bs1024_adamw_swin.py',

+ '../_base_/default_runtime.py',

+]

+

+# dataset setting

+train_dataloader = dict(batch_size=64)

+

+# schedule setting

+optim_wrapper = dict(

+ optimizer=dict(lr=4e-3),

+ clip_grad=dict(max_norm=5.0),

+)

+

+# runtime setting

+custom_hooks = [dict(type='EMAHook', momentum=4e-5, priority='ABOVE_NORMAL')]

+

+# NOTE: `auto_scale_lr` is for automatically scaling LR

+# based on the actual training batch size.

+# base_batch_size = (64 GPUs) x (64 samples per GPU)

+auto_scale_lr = dict(base_batch_size=4096)

diff --git a/configs/convnext/convnext-xlarge_64xb64_in1k.py b/configs/convnext/convnext-xlarge_64xb64_in1k.py

new file mode 100644

index 0000000000000000000000000000000000000000..528894e808b7085ee66d8be89cf84f860ddec979

--- /dev/null

+++ b/configs/convnext/convnext-xlarge_64xb64_in1k.py

@@ -0,0 +1,23 @@

+_base_ = [

+ '../_base_/models/convnext/convnext-xlarge.py',

+ '../_base_/datasets/imagenet_bs64_swin_224.py',

+ '../_base_/schedules/imagenet_bs1024_adamw_swin.py',

+ '../_base_/default_runtime.py',

+]

+

+# dataset setting

+train_dataloader = dict(batch_size=64)

+

+# schedule setting

+optim_wrapper = dict(

+ optimizer=dict(lr=4e-3),

+ clip_grad=None,

+)

+

+# runtime setting

+custom_hooks = [dict(type='EMAHook', momentum=1e-4, priority='ABOVE_NORMAL')]

+

+# NOTE: `auto_scale_lr` is for automatically scaling LR

+# based on the actual training batch size.

+# base_batch_size = (64 GPUs) x (64 samples per GPU)

+auto_scale_lr = dict(base_batch_size=4096)

diff --git a/configs/convnext/convnext-xlarge_64xb64_in21k.py b/configs/convnext/convnext-xlarge_64xb64_in21k.py

new file mode 100644

index 0000000000000000000000000000000000000000..420edab67b1dc094f08b4a3810af522b2a988b62

--- /dev/null

+++ b/configs/convnext/convnext-xlarge_64xb64_in21k.py

@@ -0,0 +1,24 @@

+_base_ = [

+ '../_base_/models/convnext/convnext-base.py',

+ '../_base_/datasets/imagenet21k_bs128.py',

+ '../_base_/schedules/imagenet_bs1024_adamw_swin.py',

+ '../_base_/default_runtime.py',

+]

+

+# model setting

+model = dict(head=dict(num_classes=21841))

+

+# dataset setting

+data_preprocessor = dict(num_classes=21841)

+train_dataloader = dict(batch_size=64)

+

+# schedule setting

+optim_wrapper = dict(

+ optimizer=dict(lr=4e-3),

+ clip_grad=dict(max_norm=5.0),

+)

+

+# NOTE: `auto_scale_lr` is for automatically scaling LR

+# based on the actual training batch size.

+# base_batch_size = (32 GPUs) x (128 samples per GPU)

+auto_scale_lr = dict(base_batch_size=4096)

diff --git a/configs/convnext/metafile.yml b/configs/convnext/metafile.yml

new file mode 100644

index 0000000000000000000000000000000000000000..16896629f07ffadd5313a6e38bc1532ddc3c08f2

--- /dev/null

+++ b/configs/convnext/metafile.yml

@@ -0,0 +1,410 @@

+Collections:

+ - Name: ConvNeXt

+ Metadata:

+ Training Data: ImageNet-1k

+ Architecture:

+ - 1x1 Convolution

+ - LayerScale

+ Paper:

+ URL: https://arxiv.org/abs/2201.03545v1

+ Title: A ConvNet for the 2020s

+ README: configs/convnext/README.md

+ Code:

+ Version: v0.20.1

+ URL: https://github.com/open-mmlab/mmpretrain/blob/v0.20.1/mmcls/models/backbones/convnext.py

+

+Models:

+ - Name: convnext-tiny_32xb128_in1k

+ Metadata:

+ FLOPs: 4457472768

+ Parameters: 28589128

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 82.14

+ Top 5 Accuracy: 96.06

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-tiny_32xb128_in1k_20221207-998cf3e9.pth

+ Config: configs/convnext/convnext-tiny_32xb128_in1k.py

+ - Name: convnext-tiny_32xb128-noema_in1k

+ Metadata:

+ Training Data: ImageNet-1k

+ FLOPs: 4457472768

+ Parameters: 28589128

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 81.95

+ Top 5 Accuracy: 95.89

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-tiny_32xb128-noema_in1k_20221208-5d4509c7.pth

+ Config: configs/convnext/convnext-tiny_32xb128_in1k.py

+ - Name: convnext-tiny_in21k-pre_3rdparty_in1k

+ Metadata:

+ Training Data:

+ - ImageNet-21k

+ - ImageNet-1k

+ FLOPs: 4457472768

+ Parameters: 28589128

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 82.90

+ Top 5 Accuracy: 96.62

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-tiny_in21k-pre_3rdparty_in1k_20221219-7501e534.pth

+ Config: configs/convnext/convnext-tiny_32xb128_in1k.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_tiny_22k_1k_224.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-tiny_in21k-pre_3rdparty_in1k-384px

+ Metadata:

+ Training Data:

+ - ImageNet-21k

+ - ImageNet-1k

+ FLOPs: 13135236864

+ Parameters: 28589128

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 84.11

+ Top 5 Accuracy: 97.14

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-tiny_in21k-pre_3rdparty_in1k-384px_20221219-c1182362.pth

+ Config: configs/convnext/convnext-tiny_32xb128_in1k-384px.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_tiny_22k_1k_384.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-small_32xb128_in1k

+ Metadata:

+ Training Data: ImageNet-1k

+ FLOPs: 8687008512

+ Parameters: 50223688

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 83.16

+ Top 5 Accuracy: 96.56

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-small_32xb128_in1k_20221207-4ab7052c.pth

+ Config: configs/convnext/convnext-small_32xb128_in1k.py

+ - Name: convnext-small_32xb128-noema_in1k

+ Metadata:

+ Training Data: ImageNet-1k

+ FLOPs: 8687008512

+ Parameters: 50223688

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 83.21

+ Top 5 Accuracy: 96.48

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-small_32xb128-noema_in1k_20221208-4a618995.pth

+ Config: configs/convnext/convnext-small_32xb128_in1k.py

+ - Name: convnext-small_in21k-pre_3rdparty_in1k

+ Metadata:

+ Training Data:

+ - ImageNet-21k

+ - ImageNet-1k

+ FLOPs: 8687008512

+ Parameters: 50223688

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 84.59

+ Top 5 Accuracy: 97.41

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-small_in21k-pre_3rdparty_in1k_20221219-aeca4c93.pth

+ Config: configs/convnext/convnext-small_32xb128_in1k.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_small_22k_1k_224.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-small_in21k-pre_3rdparty_in1k-384px

+ Metadata:

+ Training Data:

+ - ImageNet-21k

+ - ImageNet-1k

+ FLOPs: 25580818176

+ Parameters: 50223688

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 85.75

+ Top 5 Accuracy: 97.88

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-small_in21k-pre_3rdparty_in1k-384px_20221219-96f0bb87.pth

+ Config: configs/convnext/convnext-small_32xb128_in1k-384px.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_small_22k_1k_384.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-base_32xb128_in1k

+ Metadata:

+ Training Data: ImageNet-1k

+ FLOPs: 15359124480

+ Parameters: 88591464

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 83.66

+ Top 5 Accuracy: 96.74

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_32xb128_in1k_20221207-fbdb5eb9.pth

+ Config: configs/convnext/convnext-base_32xb128_in1k.py

+ - Name: convnext-base_32xb128-noema_in1k

+ Metadata:

+ Training Data: ImageNet-1k

+ FLOPs: 15359124480

+ Parameters: 88591464

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 83.64

+ Top 5 Accuracy: 96.61

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_32xb128-noema_in1k_20221208-f8182678.pth

+ Config: configs/convnext/convnext-base_32xb128_in1k.py

+ - Name: convnext-base_3rdparty_in1k

+ Metadata:

+ Training Data: ImageNet-1k

+ FLOPs: 15359124480

+ Parameters: 88591464

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 83.85

+ Top 5 Accuracy: 96.74

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_3rdparty_32xb128_in1k_20220124-d0915162.pth

+ Config: configs/convnext/convnext-base_32xb128_in1k.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_base_1k_224_ema.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-base_3rdparty-noema_in1k

+ Metadata:

+ Training Data: ImageNet-1k

+ FLOPs: 15359124480

+ Parameters: 88591464

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 83.71

+ Top 5 Accuracy: 96.60

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_3rdparty_32xb128-noema_in1k_20220222-dba4f95f.pth

+ Config: configs/convnext/convnext-base_32xb128_in1k.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_base_1k_224.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-base_3rdparty_in1k-384px

+ Metadata:

+ Training Data: ImageNet-1k

+ FLOPs: 45205885952

+ Parameters: 88591464

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 85.10

+ Top 5 Accuracy: 97.34

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_3rdparty_in1k-384px_20221219-c8f1dc2b.pth

+ Config: configs/convnext/convnext-base_32xb128_in1k-384px.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_base_1k_384.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-base_3rdparty_in21k

+ Metadata:

+ Training Data: ImageNet-21k

+ FLOPs: 15359124480

+ Parameters: 88591464

+ In Collection: ConvNeXt

+ Results: null

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_3rdparty_in21k_20220124-13b83eec.pth

+ Config: configs/convnext/convnext-base_32xb128_in21k.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_base_22k_224.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-base_in21k-pre_3rdparty_in1k

+ Metadata:

+ Training Data:

+ - ImageNet-21k

+ - ImageNet-1k

+ FLOPs: 15359124480

+ Parameters: 88591464

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 85.81

+ Top 5 Accuracy: 97.86

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_in21k-pre-3rdparty_32xb128_in1k_20220124-eb2d6ada.pth

+ Config: configs/convnext/convnext-base_32xb128_in1k.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_base_22k_1k_224.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-base_in21k-pre-3rdparty_in1k-384px

+ Metadata:

+ Training Data:

+ - ImageNet-21k

+ - ImageNet-1k

+ FLOPs: 45205885952

+ Parameters: 88591464

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 86.82

+ Top 5 Accuracy: 98.25

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-base_in21k-pre-3rdparty_in1k-384px_20221219-4570f792.pth

+ Config: configs/convnext/convnext-base_32xb128_in1k-384px.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_base_22k_1k_384.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-large_3rdparty_in1k

+ Metadata:

+ Training Data: ImageNet-1k

+ FLOPs: 34368026112

+ Parameters: 197767336

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 84.30

+ Top 5 Accuracy: 96.89

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-large_3rdparty_64xb64_in1k_20220124-f8a0ded0.pth

+ Config: configs/convnext/convnext-large_64xb64_in1k.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_large_1k_224_ema.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-large_3rdparty_in1k-384px

+ Metadata:

+ Training Data: ImageNet-1k

+ FLOPs: 101103214080

+ Parameters: 197767336

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 85.50

+ Top 5 Accuracy: 97.59

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-large_3rdparty_in1k-384px_20221219-6dd29d10.pth

+ Config: configs/convnext/convnext-large_64xb64_in1k-384px.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_large_1k_384.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-large_3rdparty_in21k

+ Metadata:

+ Training Data: ImageNet-21k

+ FLOPs: 34368026112

+ Parameters: 197767336

+ In Collection: ConvNeXt

+ Results: null

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-large_3rdparty_in21k_20220124-41b5a79f.pth

+ Config: configs/convnext/convnext-large_64xb64_in21k.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_large_22k_224.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-large_in21k-pre_3rdparty_in1k

+ Metadata:

+ Training Data:

+ - ImageNet-21k

+ - ImageNet-1k

+ FLOPs: 34368026112

+ Parameters: 197767336

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 86.61

+ Top 5 Accuracy: 98.04

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-large_in21k-pre-3rdparty_64xb64_in1k_20220124-2412403d.pth

+ Config: configs/convnext/convnext-large_64xb64_in1k.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_large_22k_1k_224.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-large_in21k-pre-3rdparty_in1k-384px

+ Metadata:

+ Training Data:

+ - ImageNet-21k

+ - ImageNet-1k

+ FLOPs: 101103214080

+ Parameters: 197767336

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 87.46

+ Top 5 Accuracy: 98.37

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-large_in21k-pre-3rdparty_in1k-384px_20221219-6d38dd66.pth

+ Config: configs/convnext/convnext-large_64xb64_in1k-384px.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_large_22k_1k_384.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-xlarge_3rdparty_in21k

+ Metadata:

+ Training Data: ImageNet-21k

+ FLOPs: 60929820672

+ Parameters: 350196968

+ In Collection: ConvNeXt

+ Results: null

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-xlarge_3rdparty_in21k_20220124-f909bad7.pth

+ Config: configs/convnext/convnext-xlarge_64xb64_in21k.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_xlarge_22k_224.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-xlarge_in21k-pre_3rdparty_in1k

+ Metadata:

+ Training Data:

+ - ImageNet-21k

+ - ImageNet-1k

+ FLOPs: 60929820672

+ Parameters: 350196968

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 86.97

+ Top 5 Accuracy: 98.20

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-xlarge_in21k-pre-3rdparty_64xb64_in1k_20220124-76b6863d.pth

+ Config: configs/convnext/convnext-xlarge_64xb64_in1k.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_xlarge_22k_1k_224_ema.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

+ - Name: convnext-xlarge_in21k-pre-3rdparty_in1k-384px

+ Metadata:

+ Training Data:

+ - ImageNet-21k

+ - ImageNet-1k

+ FLOPs: 179196798976

+ Parameters: 350196968

+ In Collection: ConvNeXt

+ Results:

+ - Dataset: ImageNet-1k

+ Metrics:

+ Top 1 Accuracy: 87.76

+ Top 5 Accuracy: 98.55

+ Task: Image Classification

+ Weights: https://download.openmmlab.com/mmclassification/v0/convnext/convnext-xlarge_in21k-pre-3rdparty_in1k-384px_20221219-b161bc14.pth

+ Config: configs/convnext/convnext-xlarge_64xb64_in1k-384px.py

+ Converted From:

+ Weights: https://dl.fbaipublicfiles.com/convnext/convnext_xlarge_22k_1k_384_ema.pth

+ Code: https://github.com/facebookresearch/ConvNeXt

diff --git a/configs/convnext_v2/README.md b/configs/convnext_v2/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..e561387412aa3a8e088cb7d015e7b98dba8e50c1

--- /dev/null

+++ b/configs/convnext_v2/README.md

@@ -0,0 +1,107 @@

+# ConvNeXt V2

+

+> [Co-designing and Scaling ConvNets with Masked Autoencoders](http://arxiv.org/abs/2301.00808)

+

+

+

+## Abstract

+

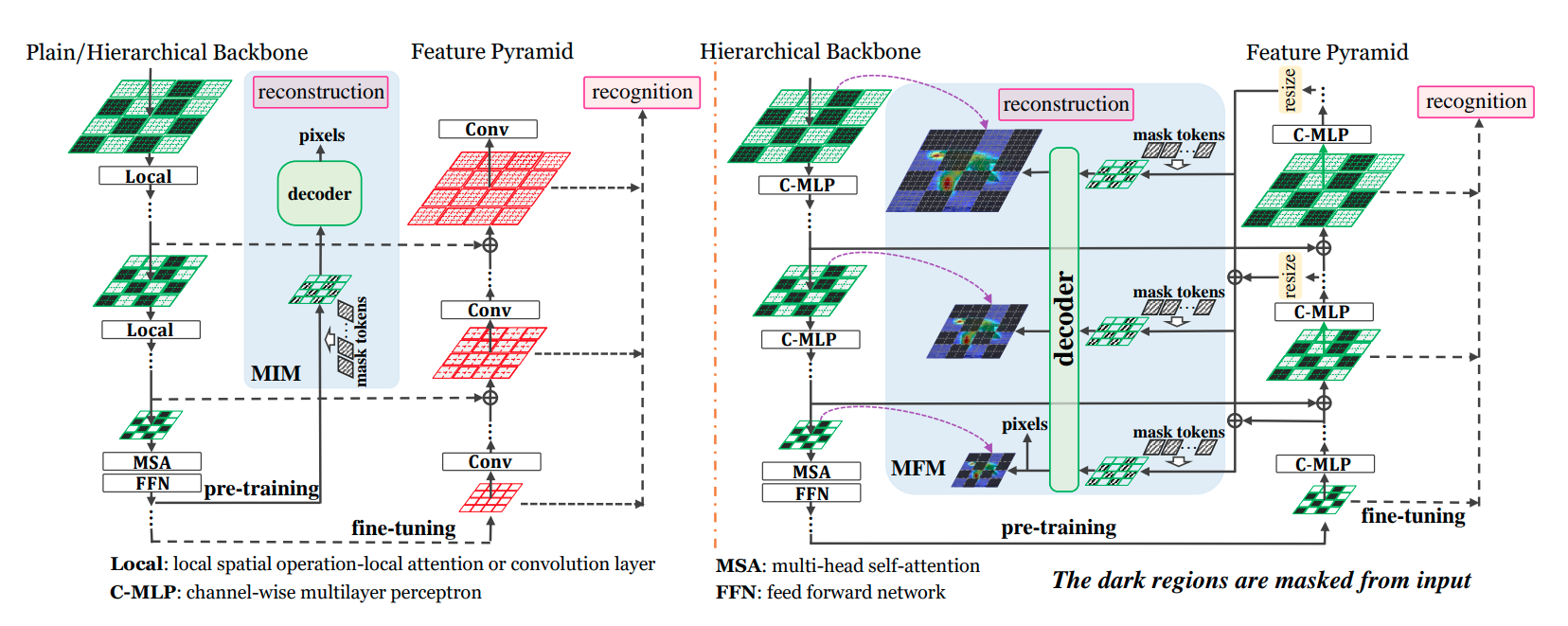

+Driven by improved architectures and better representation learning frameworks, the field of visual recognition has enjoyed rapid modernization and performance boost in the early 2020s. For example, modern ConvNets, represented by ConvNeXt, have demonstrated strong performance in various scenarios. While these models were originally designed for supervised learning with ImageNet labels, they can also potentially benefit from self-supervised learning techniques such as masked autoencoders (MAE). However, we found that simply combining these two approaches leads to subpar performance. In this paper, we propose a fully convolutional masked autoencoder framework and a new Global Response Normalization (GRN) layer that can be added to the ConvNeXt architecture to enhance inter-channel feature competition. This co-design of self-supervised learning techniques and architectural improvement results in a new model family called ConvNeXt V2, which significantly improves the performance of pure ConvNets on various recognition benchmarks, including ImageNet classification, COCO detection, and ADE20K segmentation. We also provide pre-trained ConvNeXt V2 models of various sizes, ranging from an efficient 3.7M-parameter Atto model with 76.7% top-1 accuracy on ImageNet, to a 650M Huge model that achieves a state-of-the-art 88.9% accuracy using only public training data.

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+Click to show the detailed Abstract

+

+

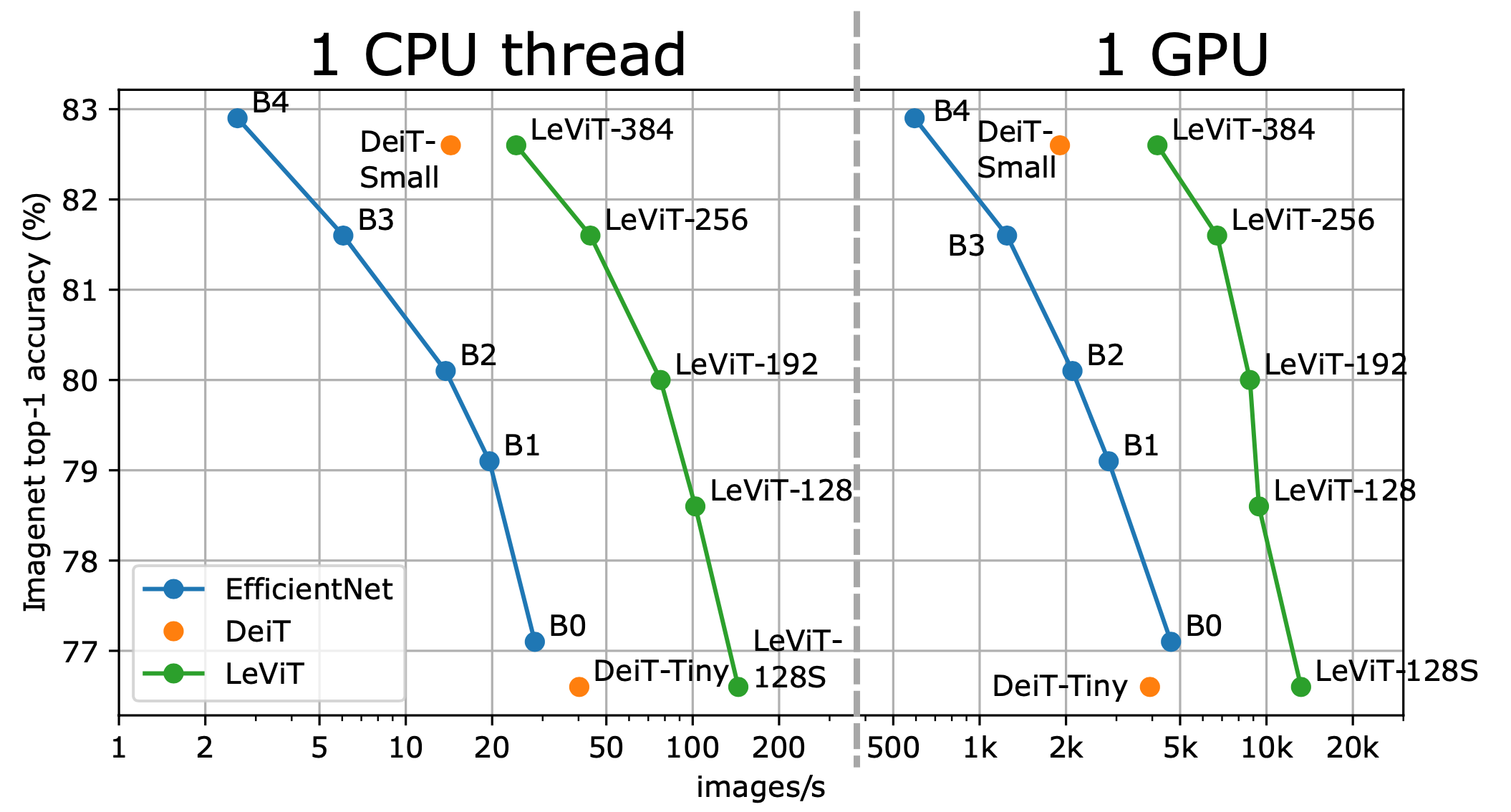

+Convolutional Neural Networks (ConvNets) are commonly developed at a fixed resource budget, and then scaled up for better accuracy if more resources are available. In this paper, we systematically study model scaling and identify that carefully balancing network depth, width, and resolution can lead to better performance. Based on this observation, we propose a new scaling method that uniformly scales all dimensions of depth/width/resolution using a simple yet highly effective compound coefficient. We demonstrate the effectiveness of this method on scaling up MobileNets and ResNet. To go even further, we use neural architecture search to design a new baseline network and scale it up to obtain a family of models, called EfficientNets, which achieve much better accuracy and efficiency than previous ConvNets. In particular, our EfficientNet-B7 achieves state-of-the-art 84.3% top-1 accuracy on ImageNet, while being 8.4x smaller and 6.1x faster on inference than the best existing ConvNet. Our EfficientNets also transfer well and achieve state-of-the-art accuracy on CIFAR-100 (91.7%), Flowers (98.8%), and 3 other transfer learning datasets, with an order of magnitude fewer parameters.

+

+

+

+## How to use it?

+

+

+

+**Predict image**

+

+```python

+from mmpretrain import inference_model

+

+predict = inference_model('efficientnet-b0_3rdparty_8xb32_in1k', 'demo/bird.JPEG')

+print(predict['pred_class'])

+print(predict['pred_score'])

+```

+

+**Use the model**

+

+```python

+import torch

+from mmpretrain import get_model

+

+model = get_model('efficientnet-b0_3rdparty_8xb32_in1k', pretrained=True)

+inputs = torch.rand(1, 3, 224, 224)

+out = model(inputs)

+print(type(out))

+# To extract features.

+feats = model.extract_feat(inputs)

+print(type(feats))

+```

+

+**Test Command**

+

+Prepare your dataset according to the [docs](https://mmpretrain.readthedocs.io/en/latest/user_guides/dataset_prepare.html#prepare-dataset).

+

+Test:

+

+```shell

+python tools/test.py configs/efficientnet/efficientnet-b0_8xb32_in1k.py https://download.openmmlab.com/mmclassification/v0/efficientnet/efficientnet-b0_3rdparty_8xb32_in1k_20220119-a7e2a0b1.pth

+```

+

+

+

+## Models and results

+

+### Image Classification on ImageNet-1k

+

+| Model | Pretrain | Params (M) | Flops (G) | Top-1 (%) | Top-5 (%) | Config | Download |

+| :-------------------------------------------------- | :----------: | :--------: | :-------: | :-------: | :-------: | :--------------------------------------------: | :----------------------------------------------------: |

+| `efficientnet-b0_3rdparty_8xb32_in1k`\* | From scratch | 5.29 | 0.42 | 76.74 | 93.17 | [config](efficientnet-b0_8xb32_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/efficientnet/efficientnet-b0_3rdparty_8xb32_in1k_20220119-a7e2a0b1.pth) |

+| `efficientnet-b0_3rdparty_8xb32-aa_in1k`\* | From scratch | 5.29 | 0.42 | 77.26 | 93.41 | [config](efficientnet-b0_8xb32_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/efficientnet/efficientnet-b0_3rdparty_8xb32-aa_in1k_20220119-8d939117.pth) |

+| `efficientnet-b0_3rdparty_8xb32-aa-advprop_in1k`\* | From scratch | 5.29 | 0.42 | 77.53 | 93.61 | [config](efficientnet-b0_8xb32-01norm_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/efficientnet/efficientnet-b0_3rdparty_8xb32-aa-advprop_in1k_20220119-26434485.pth) |

+| `efficientnet-b0_3rdparty-ra-noisystudent_in1k`\* | From scratch | 5.29 | 0.42 | 77.63 | 94.00 | [config](efficientnet-b0_8xb32_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/efficientnet/efficientnet-b0_3rdparty-ra-noisystudent_in1k_20221103-75cd08d3.pth) |

+| `efficientnet-b1_3rdparty_8xb32_in1k`\* | From scratch | 7.79 | 0.74 | 78.68 | 94.28 | [config](efficientnet-b1_8xb32_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/efficientnet/efficientnet-b1_3rdparty_8xb32_in1k_20220119-002556d9.pth) |

+| `efficientnet-b1_3rdparty_8xb32-aa_in1k`\* | From scratch | 7.79 | 0.74 | 79.20 | 94.42 | [config](efficientnet-b1_8xb32_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/efficientnet/efficientnet-b1_3rdparty_8xb32-aa_in1k_20220119-619d8ae3.pth) |

+| `efficientnet-b1_3rdparty_8xb32-aa-advprop_in1k`\* | From scratch | 7.79 | 0.74 | 79.52 | 94.43 | [config](efficientnet-b1_8xb32-01norm_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/efficientnet/efficientnet-b1_3rdparty_8xb32-aa-advprop_in1k_20220119-5715267d.pth) |

+| `efficientnet-b1_3rdparty-ra-noisystudent_in1k`\* | From scratch | 7.79 | 0.74 | 81.44 | 95.83 | [config](efficientnet-b1_8xb32_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/efficientnet/efficientnet-b1_3rdparty-ra-noisystudent_in1k_20221103-756bcbc0.pth) |

+| `efficientnet-b2_3rdparty_8xb32_in1k`\* | From scratch | 9.11 | 1.07 | 79.64 | 94.80 | [config](efficientnet-b2_8xb32_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/efficientnet/efficientnet-b2_3rdparty_8xb32_in1k_20220119-ea374a30.pth) |

+| `efficientnet-b2_3rdparty_8xb32-aa_in1k`\* | From scratch | 9.11 | 1.07 | 80.21 | 94.96 | [config](efficientnet-b2_8xb32_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/efficientnet/efficientnet-b2_3rdparty_8xb32-aa_in1k_20220119-dd61e80b.pth) |

+| `efficientnet-b2_3rdparty_8xb32-aa-advprop_in1k`\* | From scratch | 9.11 | 1.07 | 80.45 | 95.07 | [config](efficientnet-b2_8xb32-01norm_in1k.py) | [model](https://download.openmmlab.com/mmclassification/v0/efficientnet/efficientnet-b2_3rdparty_8xb32-aa-advprop_in1k_20220119-1655338a.pth) |