Merge pull request #104 from laekov/faster-doc

Documents for FasterMoE

doc/fastermoe/README.md

0 → 100644

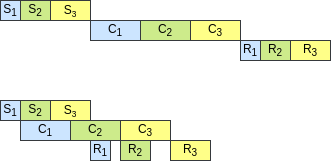

doc/fastermoe/smartsch.png

0 → 100644

9.63 KB

tests/README.md

0 → 100644
