"tests/pytorch/test_quantized_tensor.py" did not exist on "1e7809460157f5d641fbd7ac1543d68648a57558"

Update documentation for 2.0 release (#1479)

* Updated docs for TE 2.0

Signed-off-by: Przemek Tredak <ptredak@nvidia.com>

* Do not expose comm_gemm_overlap and cast_transpose_noop

Signed-off-by: Przemek Tredak <ptredak@nvidia.com>

* Made the figures larger

Signed-off-by: Przemek Tredak <ptredak@nvidia.com>

* Apply suggestions from code review

Co-authored-by: Tim Moon <4406448+timmoon10@users.noreply.github.com>
Signed-off-by: Przemyslaw Tredak <ptrendx@gmail.com>

* Update quickstart_utils.py

Signed-off-by: Przemek Tredak <ptredak@nvidia.com>

* [pre-commit.ci] auto fixes from pre-commit.com hooks

for more information, see https://pre-commit.ci

* Change from review

Co-authored-by: Kirthi Shankar Sivamani <ksivamani@nvidia.com>
Signed-off-by: Przemyslaw Tredak <ptrendx@gmail.com>

---------

Signed-off-by: Przemek Tredak <ptredak@nvidia.com>
Signed-off-by: Przemyslaw Tredak <ptrendx@gmail.com>
Co-authored-by: Tim Moon <4406448+timmoon10@users.noreply.github.com>
Co-authored-by: pre-commit-ci[bot] <66853113+pre-commit-ci[bot]@users.noreply.github.com>
Co-authored-by: Kirthi Shankar Sivamani <ksivamani@nvidia.com>

docs/api/c/normalization.rst

0 → 100644

docs/api/c/permutation.rst

0 → 100644

docs/api/c/recipe.rst

0 → 100644

docs/api/c/swizzle.rst

0 → 100644

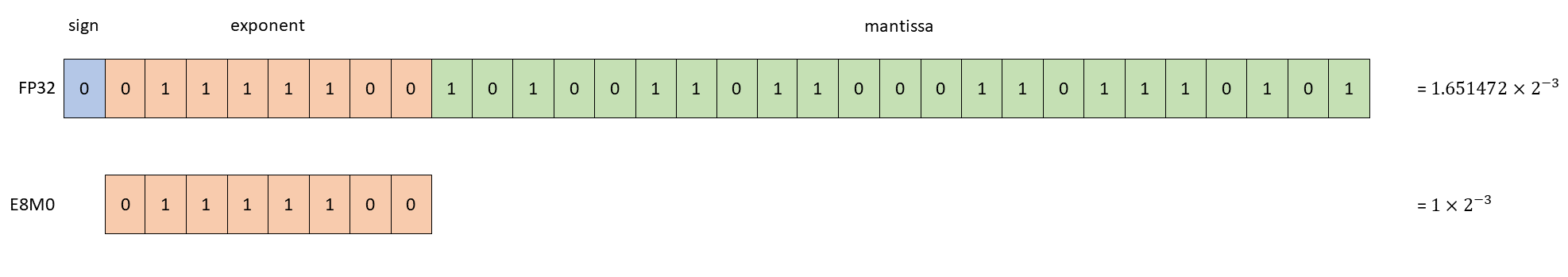

docs/examples/E8M0.png

0 → 100644

30.2 KB

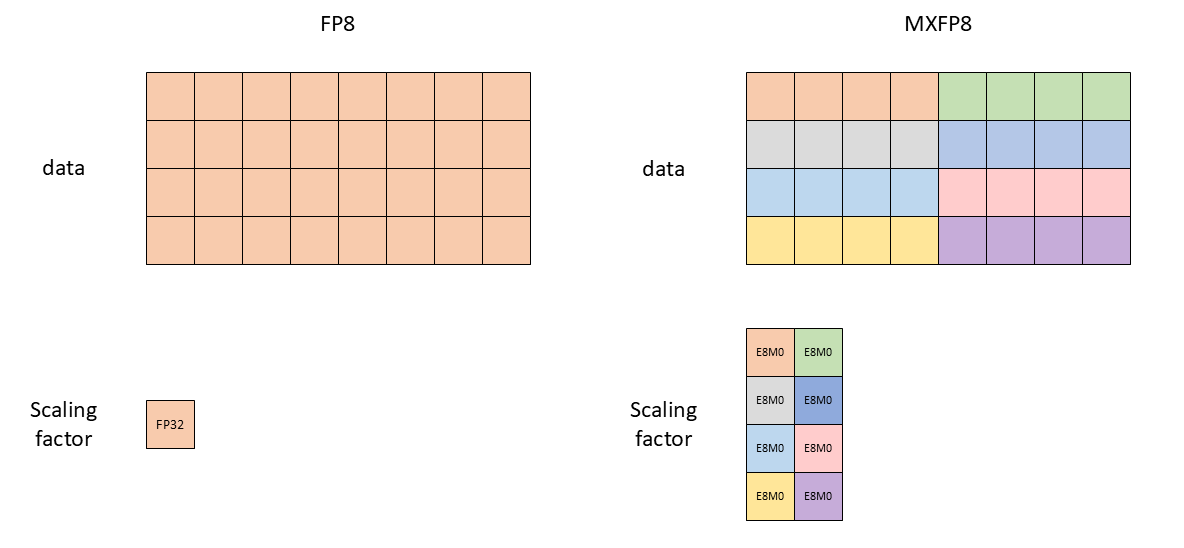

30.5 KB

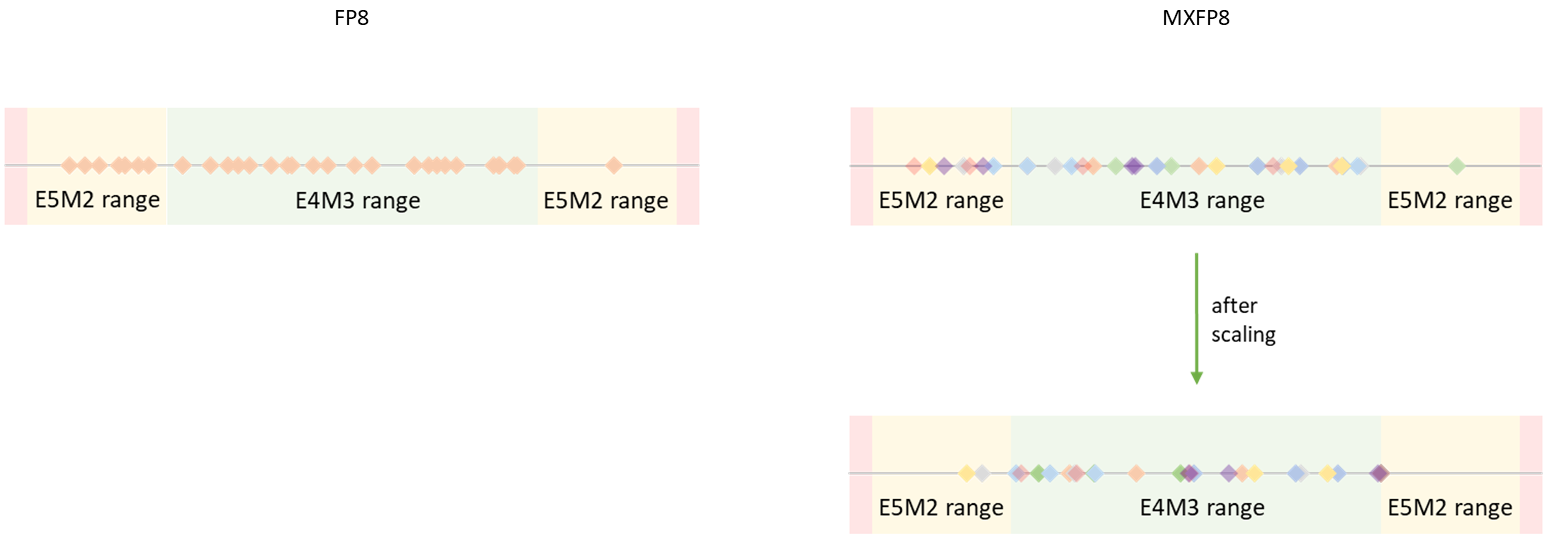

113 KB

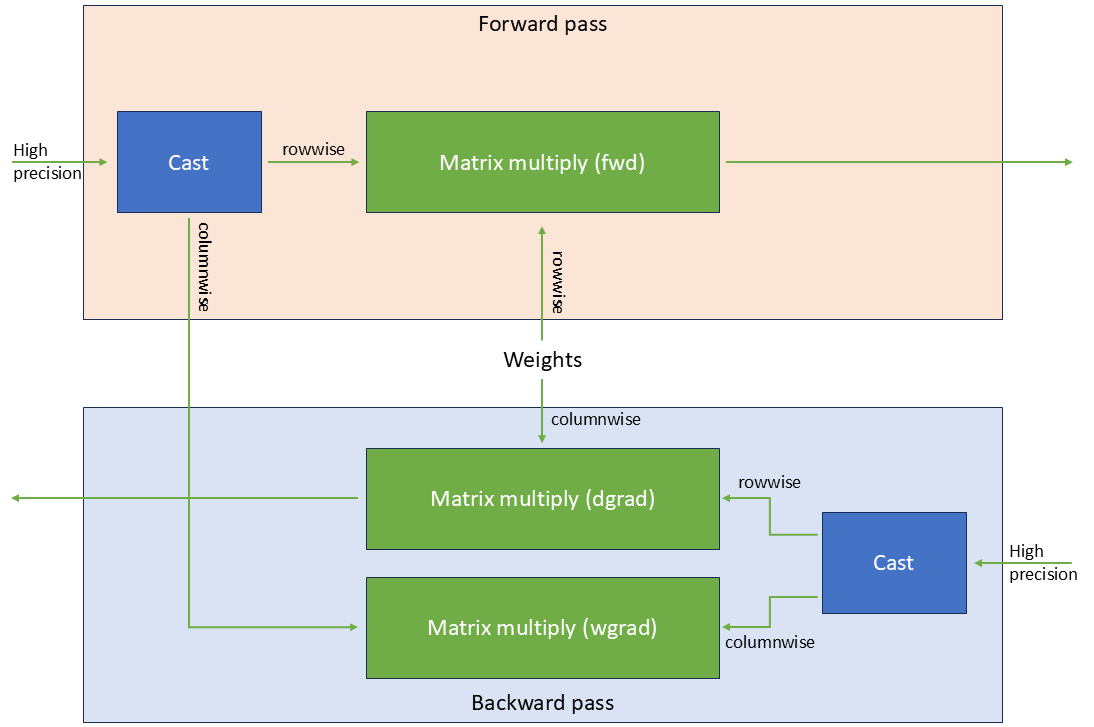

48.1 KB